Beyond the Buzz: SBOM, AI, DataOps for Org Resilience post Log4j

um so first before I get started I I'm sure everybody in this room has heard of these two things uh but I did want to ask how many of you have heard of s-bombs okay how many of you actually use them that's what I thought um now when I I gave it this talk or a very similar talk in Australia when I asked the first question one person raised their hand and absolutely nobody used desk bombs uh which was actually a little bit surprising to me but I learned a lot on that trip actually related to how Australia handles their cyber security and differences between the US and Australia which I won't get into but I just thought it was an

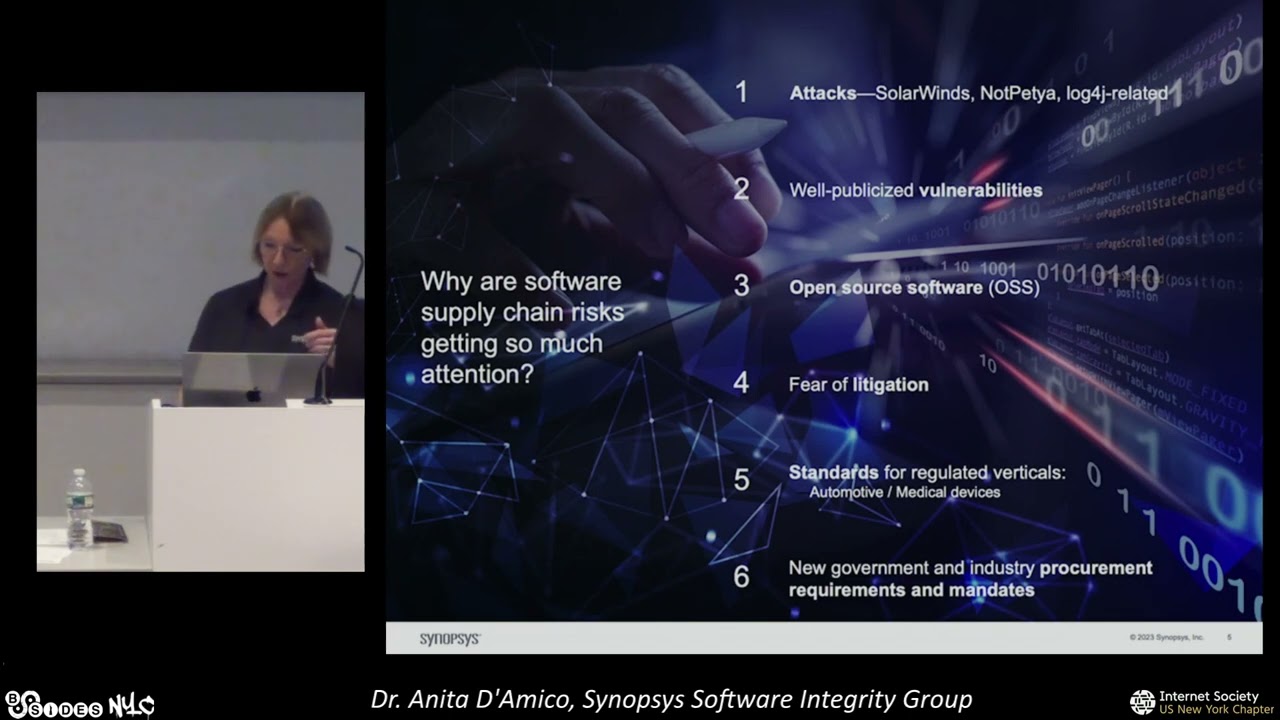

interesting difference there okay so why we're here vulnerabilities nowadays and cyber security are being introduced far left of effect and at scale so with supply chain compromises we see a compromise or vulnerability being introduced far deep into the supply chain and the ultimate effect comes way later when companies organizations teams take software or code implement it into their workflows and then all of a sudden this vulnerability happens we've seen these a few times I'm sure all of us are in the room are familiar with some of these here's the definition from cisov what a supply chain attack is some notable ones not pentia kinda log for Shell solarwinds we're all kind of familiar with these but you know with AI and a

lot of the automated tools and techniques we're also seeing vulnerabilities being introduced not just far left of effect but at scale we've seen talks there's been lots of discussions about Automation and how that's going to increase the rate at which certain strains of malware developed and deployed I highlight ransomware as an example where you know we can spit out encrypted there was a talk this morning the plenary talked about ransomware uh ranswers on the rise it has been for a while and here are some examples of those I'm sure we all are familiar with ransomware but just to go over it again um actually another interesting fact the Australian Healthcare sector throughout 2021 and 2022 was hit with huge

ransomware attacks across the country affecting their health care sector which was you may have already heard of those that was news to me because I wasn't monitoring that um an interesting uh it was all the buzz when I went down and and gave this talk there but really the thesis of my talk is what I said before which is that s-bombs supply chain attacks ransomware the Advent of automation are just new waves of technology and capabilities that are coming about due to or in response to these types of vulnerabilities and the actions that these actors are taking but there's nothing really in particular special about them my thesis is that for these two examples and all other

examples of technology that we will use and Implement and develop in order to tackle the newest Trend in cyber security we are never going to do as well as we could if our underlying data operations and what we do with our data and how we think about data isn't as good as it could be so we really have to focus on these really core fundamentals of data science in order to get the most out of these tools and techniques that's basically what I'm going to be talking about today so first we're going to hit supply chain vulnerabilities all right so we're all familiar with s-bombs that's good um but in case some of the folks who walked in

aren't this is the actual definition so it's formal machine readable inventory of software components or dependencies has information about these components and their hierarchical relationships now since none of us with maybe one exception are actually using them it's logical to ask what are they used for well since nobody is using them I'm actually going to say and I knew that would happen what should they be used for there was actually an article published by a really good friend of mine Amelie Quran out of the Atlantic Council where she does a really big and deep treatment of s-bombs and she outlines just as a example article in her paper she outlines four really important use cases for these for these

documents uh the procurement you can use s-bombs to make informed decisions about software that you're going to buy or that you're going to integrate into your your workflows vulnerability management threat intelligence the two that I'm kind of going to touch on today are incident response and ecosystem mapping and she outlines a few more in her in her talk but there on the right side is one example from spdx of basically the general structure that one of these things is to take and if you can tell there's actually not a whole lot of information in that document if you've worked with them if you have seen them if you've looked at them they're pretty Bare Bones they're meant to be machine

readable not necessarily human readable so there's that but for these documents because they are machine readable not human readable they're really Bare Bones like I said the hard truth the unfortunate truth is that no set of s-bombs no matter how big of a set we get for every piece of software we have in our Organization no set of those is going to tell us anything about our organizational Risk by themselves we have to actually put in a lot of work to get meaningful information and intelligence out of these things and this is where the data operations actually comes in so in some other talks I think there was a talk earlier talking about the important context of data and

your cyber operations the context here is very very very important I think context is important in everything that we do but a reminder is that Services products things that are organization that your organization provides to others those are all dependent on software components that's one of the reason we have as bombs we study supply chain vulnerabilities now and it's one reason why Rands or why uh Bad actors want to take advantage of supply chain is because they are so critical to our operations so some of the questions that you might be able to eventually answer with these bills of materials are where is your organization most vulnerable what impacts would a vulnerability have on the services and products that I provide
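Answering those questions starts with getting an SBOM's inventory and relationships into a form you can query. Below is a minimal Python sketch that reads an SPDX-style JSON document, like the SPDX structure shown on the slide, and pulls out the packages and their DEPENDS_ON relationships. It assumes the SPDX 2.x JSON field names `packages`, `SPDXID`, `versionInfo`, and `relationships`; the document itself, including its package names and versions, is hypothetical and far smaller than a real SBOM.

```python
import json

# Hypothetical, heavily trimmed SPDX 2.3-style JSON SBOM. Real documents also carry
# licenses, checksums, suppliers, and more; only the fields used below are kept.
SBOM_JSON = """
{
  "spdxVersion": "SPDX-2.3",
  "SPDXID": "SPDXRef-DOCUMENT",
  "name": "service-monitor-app",
  "packages": [
    {"SPDXID": "SPDXRef-app", "name": "service-monitor-app", "versionInfo": "1.4.0"},
    {"SPDXID": "SPDXRef-requests", "name": "requests", "versionInfo": "2.31.0"},
    {"SPDXID": "SPDXRef-urllib3", "name": "urllib3", "versionInfo": "2.0.7"}
  ],
  "relationships": [
    {"spdxElementId": "SPDXRef-DOCUMENT", "relationshipType": "DESCRIBES",
     "relatedSpdxElement": "SPDXRef-app"},
    {"spdxElementId": "SPDXRef-app", "relationshipType": "DEPENDS_ON",
     "relatedSpdxElement": "SPDXRef-requests"},
    {"spdxElementId": "SPDXRef-requests", "relationshipType": "DEPENDS_ON",
     "relatedSpdxElement": "SPDXRef-urllib3"}
  ]
}
"""


def load_dependency_edges(doc):
    """Return ({SPDXID: "name@version"}, [(parent, child), ...]) for DEPENDS_ON relationships."""
    packages = {
        p["SPDXID"]: f'{p["name"]}@{p.get("versionInfo", "?")}' for p in doc.get("packages", [])
    }
    # DESCRIBES and other relationship types are skipped; only dependencies are kept.
    edges = [
        (packages[r["spdxElementId"]], packages[r["relatedSpdxElement"]])
        for r in doc.get("relationships", [])
        if r["relationshipType"] == "DEPENDS_ON"
    ]
    return packages, edges


packages, edges = load_dependency_edges(json.loads(SBOM_JSON))
for parent, child in edges:
    print(f"{parent} -> {child}")
# service-monitor-app@1.4.0 -> requests@2.31.0
# requests@2.31.0 -> urllib3@2.0.7
```

Edges like these are raw material only; on their own they still say nothing about which services or capabilities are at risk.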

And can these risks be quantified?

One way you might do this: say, for example, your organization provides a service monitoring capability (Datadog comes to mind), where a process is running and you want information about it. Say you provide that capability: you monitor a service and give your end users information about it. That capability, which is so important to you, is built on applications you have developed to serve your product. This is the simplest example in the world, but your service monitoring capability might be built on a Python application that your devs have worked really, really hard on. It may also depend on a logging service that logs all of that information and later serves it to end users. And those applications, all the way down, are built on software and software components. Your Python application uses packages; you're going to pip install those and run with them. Your logging service has some logging software that might be developed in a completely different language.

What you can start to see here is a dependency tree. At the very top are the services and really important capabilities our organization provides. As an analogy, think of a water treatment facility that provides critical clean drinking water to an entire organization, company, country, or region. Its ability to provide that service depends on the people at the plant, the equipment at the plant, and everything all the way down to the very fine ICS/SCADA devices, the firmware, and all of that. Is anyone in the room familiar with the JARM ten-layer model? Okay, you should Google that. This is the simplest picture in the world, with three layers, but you can go even further down to hardware components, and you can intermix other layers in between; you don't necessarily go straight from applications to capabilities. You can make this as complicated as you want. The point is that down here at the very bottom is where the SBOMs live: the elements of the software you're working with, the individual components, all sit at this bottom layer and percolate up to those very important capabilities.

Mathematically, as I already said, this is a dependency structure, and as a mathematician I have to point out that these are network structures that can be measured, studied, and modeled. If you start building these for your organization, that gives you a source of data and insight for your organization.
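To make the point about measurable network structures concrete, here is a minimal sketch of the three-layer example (capability, applications, software components) as a plain Python dependency map, with a breadth-first walk that answers "if this component is compromised, what above it is affected?". All node names are hypothetical and hard-coded; in practice the bottom layer would come from SBOMs and the upper layers from interviews, as described in the talk.

```python
from collections import defaultdict, deque

# Hypothetical three-layer model: capability -> applications -> software components.
# Each key lists what it directly depends on.
DEPENDS_ON = {
    "service-monitoring": ["python-app", "logging-service"],
    "python-app": ["requests", "numpy"],   # packages pip-installed by the devs
    "logging-service": ["log4j-core"],     # logging software in another language
}


def reverse_edges(depends_on):
    """Invert 'X depends on Y' into 'Y is relied on by X' so we can walk upward."""
    relied_on_by = defaultdict(set)
    for node, deps in depends_on.items():
        for dep in deps:
            relied_on_by[dep].add(node)
    return relied_on_by


def impacted_by(component, depends_on):
    """Breadth-first search upward: everything that directly or transitively depends on `component`."""
    relied_on_by = reverse_edges(depends_on)
    impacted, queue = set(), deque([component])
    while queue:
        current = queue.popleft()
        for parent in relied_on_by.get(current, ()):
            if parent not in impacted:
                impacted.add(parent)
                queue.append(parent)
    return impacted


print(sorted(impacted_by("log4j-core", DEPENDS_ON)))
# ['logging-service', 'service-monitoring']
```

Inverting the edges once and walking upward keeps the question "who relies on this component?" cheap to ask for every component an SBOM lists.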

As you run through incident response plans, and through analysis of your processes and the decisions you make, that gives you a source of data you can point to and say, "This data, from which I built this dependency tree, is real data from your organization. I have the SBOMs to prove it, we did all these interviews, and we built this dependency tree." Then at the end, when you start asking questions about what happened (we walked through this exercise, we took out this water treatment plant or this logging service, and you lost that capability), you can ask what changes we should make to ensure we don't lose a capability in the event of a supply chain attack or something similar.

And I'm realizing I'm going really fast, so I'm going to try to slow down. I get excited. Okay, great. They said this slot was 40 minutes, and I'm thinking it's going to be 20 minutes for me. [Audience asks for the spelling] Yes, it's J-A-R-M. Yeah. It's a really interesting model for network and cyber dependencies that could give you some insight into how to build these.

Now, again, trying to talk a little more slowly: I say all of this to say that what I just described is easy to say and harder to do in practice. Organizations are not simple three-layer models. They don't provide one single service, and they're not built on single Python applications (maybe some companies are, but most are not) or on single software packages. Building these out for an entire organization takes time, it takes money, and it takes buy-in from people who are really interested in building the mathematical models you can use to explore these dependencies. That's what I mean when I say work is required, and it's why a lot of people haven't taken these steps yet.

There are baby steps we can take to get to a point where large organizations actually start thinking this way and implementing these things. The first is that you don't have to model your entire organization. We already do this for our people: we have org charts. We don't necessarily tie the org chart to every individual action or capability a person serves in the organization, but we could. People are an important element of cybersecurity. You can start small: model an individual team, or one component you'd like to study more. You don't have to do everything all at once.

Before you start doing that, or as you take steps toward it, there are things you can do to build toward those models and processes. Invest in red teaming your processes. I'm a big people-and-process person; I'm a systems thinker. I work at Tenable, but I'm not down here writing NASL code. I'm up here thinking about how we're gathering data, how we're using it, and which organizations are talking to each other. I think we need to spend more time red teaming people and processes than actual cybersecurity components, and even if you are going to red team pieces of software, you should take the time to red team how those pieces of software affect the people and processes at your organization. If you take one takeaway from this section of the talk, please invest in red teaming your processes, because those processes are the steps your people and your organization take to make important decisions about how to respond to these things.

We also have to be diligent and deliberate about the data we collect and log about our internal processes. I mentioned logging services on the previous slide, but understanding how individual applications and pieces of software are critical to everything the organization does isn't something you can just get out of GitHub. You're going to have to sit down and talk to people, understand who they talk to, and find out whether they're critical to a procedure or to something your organization does. Going back to the process piece, we have to take the time to log what is almost metadata about our organization, and I just don't think we're doing that.

And, as I've said with red teaming, use SBOMs and dependency modeling to inform your exercises, playbooks, and run-throughs. SBOMs could be a great source of truth and data you can point to in order to get everybody on the same page: "Here's the SBOM I was given for the piece of software you're using. Do you agree that you're using it, and that you're using it for A, B, and C? Yes? Okay." Now we've agreed on our baseline assumptions. So when we implement a plan, test something out, or red team and see the impacts, those impacts are real and can be measured, because we agreed on the baseline.

[Audience question] You said that previous slide is a little high level. Do you have a practical example of what this would look like for an organization, like an example process?

In this example I have the capability, the applications, and the software, but you can also have a people layer and information layers. Say you have people or approval processes. Here's me, and I may be critical to an approval process for purchasing at my organization: the person making the decision to purchase has to go through me to get that approval. So in my people layer I have the manager, my manager, the decision-maker layer, and my subordinate layer. The information passed between the layers is not only information about the purchasing agreement but also information about the approval. One way to model those processes is to, honest to goodness, put in the people and the edges between them. Here I'm getting into graph theory land: the edges between the nodes in your network are that information, the approvals, the stamps, the requirements that have to be satisfied to proceed from one step of the process to the next. When you build these out for an organization (it doesn't have to be a large one), it almost becomes a social network, because information is passed from one person or organization to the next. You can make it as big or as fine-grained as you want. You may have a node that represents a team, and within that team are other nodes.
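A minimal sketch of the people layer described above, assuming a hypothetical purchasing workflow: nodes are people carrying a `team` attribute, and a directed edge means a request or approval must pass from one person to the next. The helper names (`can_reach`, `single_points_of_failure`, `cross_team_handoffs`) are illustrative and not taken from any tool mentioned in the talk. The first helper checks whether an approval can still get through; the second finds people whose absence alone blocks every path; the third lists handoffs that cross team boundaries.

```python
# Hypothetical purchasing-approval process. Nodes are people with a team attribute;
# an edge A -> B means the request or approval must pass from A to B.
PEOPLE = {
    "requester": {"team": "engineering"},
    "me": {"team": "engineering"},
    "backup_approver": {"team": "engineering"},
    "manager": {"team": "finance"},
    "decision_maker": {"team": "finance"},
}
APPROVALS = {
    "requester": ["me", "backup_approver"],
    "me": ["manager"],
    "backup_approver": ["manager"],
    "manager": ["decision_maker"],
    "decision_maker": [],
}


def can_reach(graph, start, goal, removed=frozenset()):
    """Depth-first search from start to goal that skips any removed people."""
    if start in removed or goal in removed:
        return False
    stack, seen = [start], {start}
    while stack:
        node = stack.pop()
        if node == goal:
            return True
        for nxt in graph.get(node, []):
            if nxt not in seen and nxt not in removed:
                seen.add(nxt)
                stack.append(nxt)
    return False


def single_points_of_failure(graph, start, goal):
    """People whose absence, on its own, breaks every approval path from start to goal."""
    return sorted(
        person
        for person in graph
        if person not in (start, goal)
        and not can_reach(graph, start, goal, frozenset({person}))
    )


def cross_team_handoffs(graph, people):
    """Edges whose two ends sit on different teams: the seams of the organization."""
    return sorted(
        (a, b) for a, nexts in graph.items() for b in nexts
        if people[a]["team"] != people[b]["team"]
    )


print(single_points_of_failure(APPROVALS, "requester", "decision_maker"))  # ['manager']
print(cross_team_handoffs(APPROVALS, PEOPLE))
# [('backup_approver', 'manager'), ('me', 'manager')]
```

Because "me" has a backup here, only the manager shows up as a single point of failure; removing `backup_approver` from the model would make "me" one as well.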

You may also have nodes with attributes for which teams they belong to and what types of approvals they pass. I'm always in graph theory land, and that's what it would look like to me, and what it has looked like for me in practice. Does that answer your question?

[Audience] Okay, that's okay, because right now it's this big abstract concept, and I just want to know: if I take this into a company and say, "Hey everyone, let's talk about understanding our whole flow of everything," how do I make that practical? Do you have an example, like for service monitoring, of what that really trickles down to across all these different layers?

Yeah. What I just described is basically what that looks like. The process of implementing it is very resource intensive. It involves talking to a lot of people, because you have to understand who person A talks to and what pieces of software they use to get all of that done. In instances where I've done this in the past, it has taken months and months at the scales we were working at, but for small teams it's a whole lot easier. When I've done this in the past, it's been for huge organizations, like military organizations. Yeah. Okay, one second, hold on. He beat you. Yeah.

[Audience question] How do you do this dynamically? In organizations the people change and the processes change, and obviously the software itself changes, but even the people part of it changes. How do you keep it dynamic as that changes? Is there software you use to do this?

I don't work at the Applied Physics Laboratory anymore, but when I was at APL, we did develop a proprietary system for doing this. There is a publicly available paper you can read about it; the software is called DAGGER. We used it to do these very things.

And actually, all DAGGER is... the DAG in DAGGER stands for directed acyclic graph. There's nothing preventing you from using Python, or your favorite graph theory code of choice, to implement a dynamic, time-dependent directed acyclic graph in which the nodes and edges change depending on the actions you take on that graph model. Mathematically, and computer-scientifically, that's how you would implement this. If you want, you can even set it up as a Monte Carlo simulation, randomly seeded. So let's say I have a model of all the people in my organization, here they are talking to each other, and I want a change to be made: say someone unexpectedly, and really sadly, passes away, just as an example. I could implement that change in my graph model in the algorithm and then have people respond to it. How would the organization handle it? How would the organization change? Would we restructure? Is that person important enough that we would have to go through an entire restructuring? Implementing data-driven models like that, using things like these SBOMs, is really important for driving those conversations about the people and the processes at the seams of your organization. So that would be how I would do that.
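The approach in that answer can be sketched with Python's standard library. This is an illustration under stated assumptions, not the DAGGER implementation, which the talk does not show. Each node maps to the nodes it depends on; `graphlib.TopologicalSorter` both rejects cycles (it raises `CycleError`) and gives an evaluation order, so a node counts as available only if it is present and everything it depends on is available. A seeded Monte Carlo loop then removes one random node per trial, standing in for a person leaving or a package being pulled, and tallies how many capabilities are lost. The model, the node names, and the `RESPONDED` reassignment are all hypothetical.

```python
import random
from graphlib import TopologicalSorter  # Python 3.9+

# Hypothetical organization model: each node maps to the set of nodes it depends on.
MODEL = {
    "cap:service-monitoring": {"app:python-app", "app:logging-service"},
    "cap:alerting": {"app:logging-service"},
    "app:python-app": {"person:alice", "pkg:requests"},
    "app:logging-service": {"person:bob", "pkg:log4j-core"},
    "person:alice": set(),
    "person:bob": set(),
    "pkg:requests": set(),
    "pkg:log4j-core": set(),
}
REMOVABLE = [n for n in MODEL if not n.startswith("cap:")]


def capabilities_lost(model, removed):
    """Capabilities that stop working when the nodes in `removed` are gone."""
    up = {}
    # static_order() yields dependencies before dependents and raises
    # graphlib.CycleError if the model is not actually acyclic.
    for node in TopologicalSorter(model).static_order():
        up[node] = node not in removed and all(up[d] for d in model.get(node, ()))
    return [n for n in model if n.startswith("cap:") and not up[n]]


def monte_carlo(model, removable, trials=10_000, seed=42):
    """Seeded trials, each removing one random node; tally how many capabilities are lost."""
    rng = random.Random(seed)
    tally = {}
    for _ in range(trials):
        lost = len(capabilities_lost(model, {rng.choice(removable)}))
        tally[lost] = tally.get(lost, 0) + 1
    return dict(sorted(tally.items()))


# A simple "response" over time: reassign the logging service from bob to carol.
RESPONDED = {
    **MODEL,
    "app:logging-service": {"person:carol", "pkg:log4j-core"},
    "person:carol": set(),
}

print(capabilities_lost(MODEL, {"person:bob"}))      # ['cap:service-monitoring', 'cap:alerting']
print(capabilities_lost(RESPONDED, {"person:bob"}))  # []
print(monte_carlo(MODEL, REMOVABLE))
```

Editing the model between runs, as with `RESPONDED`, is one simple way to represent how the organization responds over time; the tally shows how often a single random loss takes out one capability versus both.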

Does that answer your question? All right, one for two.

[Audience comment, partly inaudible, about how difficult this whole process is]

Absolutely. Yeah, no, absolutely, totally agree. You're pointing out the obvious, but the obvious needs to be said. Yeah, right. And now SBOMs exist and no one's using them. Yay. Slowly, we'll get there. It's because this is what it takes to do useful intelligence and actually do things with them, and no one wants to invest in this. I get it. Everything I just talked about, spending months developing graph models and actionable pieces of intelligence from these, is a huge investment. That's part of what I'm trying to say: we're not there yet. So don't walk away from this talk thinking, "Supply chain solved, guys, we did it, good job, SBOMs." No, not at all. We're not there yet.

[Audience question, inaudible] Five, maybe? I don't know. I don't think we're close; we've got a long way to go. So, yeah. Yes?

[Audience question, partly inaudible, about how this relates to the service catalogs some organizations maintain and generate automatically, and the dynamic issue of services that come and go]

All right, that's not an area of research I've dug really deep into. You raise a really good question; I just don't know. Oh, okay. Yes?

[Audience question] How does this play into the National Cybersecurity Strategy, where they're actually recommending SBOMs? And second, business continuity and resiliency planning: we've done this in BCP/DR from a people perspective, at the people and process level, but not at the software level.

Two really good questions. The first is about the National Cybersecurity Strategy. I was very disappointed in the National Cybersecurity Strategy because there weren't any actionable steps. Yeah, right. I don't think it gave us any more guidance than we already have about how to actually use these things, which is why I'm out here talking about this. These are steps we need to take. I talk about policy a little later, but there are concrete steps we need to start taking to get people to that level of readiness, preparedness, and protection. Although SBOMs technically are a piece of the National Cybersecurity Strategy, I don't think the strategy goes far enough in telling people what to actually do with them.

And I'm sorry, your second question was...

[Audience] Business continuity planning already handles something similar on the people and process side, but not on the technology side.

Yes, right. In cases where I've done this type of modeling in the past, business continuity strategies have played an important part, because a lot of teams and organizations already have business continuity strategies and plans. When we do this modeling, it's important that we push those hard, which is why I'm saying red team your processes. Those resiliency plans need to be revisited, and they need to be tested across all kinds of cases: edge cases, events, what have you. In my personal experience, I haven't come across an organization yet that is actually doing that as much as it needs to, which is why I'm up here preaching people and process. Yes?

[Audience question] Is this mostly a military and government thing right now that hasn't really reached the private sector? Do you think that's accurate?

Because of the work I did at APL, my experience with these has been government and DoD. I've only worked at Tenable since May, but my exposure to the private sector, both before Tenable and now, is that these don't exist; this modeling is not there.

[Audience question] I think I understand why my question was confusing. You said you've worked on a couple of these. Can you show us the core end output or artifact, where you say, "Now, based on this graph, we have a better understanding of this capability"? Do you have that?

I do not, but I can tell you what it looks like: a pretty boring list of recommendations. That's basically it. But they're recommendations that are data driven, informed by a model that is based on the organization's actual data.

So these people, because they've agreed on the assumptions of the model, get to a point where they say, "I get this. You're right. I believe you." So it's usually a list of concrete recommendations. And I'll tell you this: while I was at APL, the other thing I worked on was a project called IARPA FOCUS, run by IARPA to study how good people are at counterfactual analysis. If you don't know what counterfactual analysis is, it's asking what-if questions and trying to measure the outcome. Think The Man in the High Castle: what if World War II had turned out differently, or such and such?

How would history have changed? It turns out people are extremely bad at counterfactual analysis. We're just not good at it, especially when it comes to policy and a lot of abstract concepts. I say this because it's easy in an after-action report to say, "Well, if that had happened, I would have done this." Would you, though? It's like that "You sure about that?" meme that's going around right now. But when you have a model of your people and processes, you can actually put them in that scenario and say, "Now let's assume you made that decision, or didn't make that decision. What would the outcomes have been?" You put them in those positions, force them to actually make that decision and defend it, and that's where people start to learn and start to reflect on the reasoning they were engaged in, if that makes sense.

That was a long, rambling answer to come back to this: sometimes the recommendations are counterfactual, where we go back, make changes, and say, "Here's what you should have done," or "Here's what you could have done," or "Here are the lessons learned from this entire procedure." Sometimes they're actual concrete recommendations for change.

Yes?

[Audience question] I'm trying to wrap my head around this. It looks like you need a combination of things to get to a place where you can even implement a model like this. You need observability across your entire environment, whether that's infrastructure, cloud services, cloud applications, or on-premises applications. Then you need your business impact analysis to identify your critical services, processes, and people. And you also have to capture all of the shadow IT in your organization that you have no observability into, because that's the definition of shadow IT.

Yep, it's a lot. But I will say that sometimes, in going through this process, you discover you don't have observability where you think you might need it. That's when you realize, "Man, I don't have observability into this. If I had it, look at all the cool stuff I could do, or all the information I could have." So just the discovery process of using these SBOMs and thinking about the supply chain can lead to improvements in observability.

Shadow IT is a good example of a risk, or a potential source of risk, that is unknowable, as you highlighted. A lot of times in models like these, these graph models and dependency diagrams, the best we can do is Donald Rumsfeld it and say this is a known unknown: we have unknown unknowns, known unknowns, and all of that. You might not be able to quantify exactly what the effect of those risks is, but you may be able to get close. For example, you might have one of these models for critical infrastructure. You don't know what the weather is going to be on any given day, but you know the weather is a source of risk. So you can take a guess at the types of risk different kinds of weather might impose on the model, put a piece of randomness in your model that introduces that risk, and end up with probability distributions you can use to upper- and lower-bound the risk to your organization. For something as unknowable as shadow IT, depending on the scale of the model, you may be able to do something similar and get ranges or probability distributions. It's definitely hard when you don't have all that insight.

[Audience] Okay, that's actually a great segue into my other thought. I didn't even know about observability before I started working for an observability company. Before that, I was working with Fortune 500 companies and many different IT and security teams, so I was engaged with a lot of different teams, and this never came up. Even the observability piece never really came up with the security teams, because they'd have to address it. [Laughter] What I'm saying is that you have a whole bunch of departments working in silos, with no insight into anything happening on the other side of the business, and you have to have that to get here.

Yes and no. I would say it depends. In some organizations they're siloed for a reason. I tend to fall on the side of "siloing is bad," but that's because of the teams, organizations, and groups I've worked with before. In some cases siloing can be good, for data governance or data protection, something simple like that. Maybe those teams really don't need to be talking to each other; it could be a source of conflict, or what have you. That said, you raise a good point, and I think it's another example of what I was talking about with modeling at the seams of your organization. I would want to ask questions about the processes that would force those two teams to work together, or cause the siloing to be a problem, make them work through it, and then highlight to them that this is a problem and we need to come up with a solution. Again, easier said than done.

Okay, one more question. I still have half a talk. This is way more popular than I thought it was going to be; I actually thought this was the weakest half of my talk. Yes, okay.

no no no no no

[Inaudible audience question]

I would say yes. I don't label it that way, but I would say yes: it is, in some ways, a risk management framework, or an enhancement on those. Okay. I'm not going to tell you no. Okay, yeah.
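On the risk-management point, and the earlier answer about known unknowns such as weather or shadow IT, here is a minimal Monte Carlo sketch of turning an unknowable risk into upper and lower bounds. The daily failure probabilities and the range assumed for the unknown risk are made-up illustrative numbers, not measured data. Each simulated year draws the unknown risk's rate from that assumed range, since only its range is known, and the result is the 5th percentile, median, and 95th percentile of downtime days per year.

```python
import random
import statistics

# Hypothetical inputs: daily failure probabilities for components we can observe,
# plus a "known unknown" (e.g. weather, shadow IT) whose rate we can only bracket.
KNOWN_DAILY_FAILURE = {"logging-service": 0.002, "python-app": 0.001}
UNKNOWN_RATE_RANGE = (0.0, 0.005)  # assumed bounds, not measured data


def simulate_year(rng):
    """Count the days in one simulated year on which the capability is down."""
    # Draw the unknown risk's rate once per simulated year: we only know its range.
    unknown_rate = rng.uniform(*UNKNOWN_RATE_RANGE)
    down_days = 0
    for _ in range(365):
        failed = any(rng.random() < p for p in KNOWN_DAILY_FAILURE.values())
        failed = failed or rng.random() < unknown_rate
        down_days += failed  # True counts as 1
    return down_days


def risk_bounds(trials=1000, seed=7):
    """Return (5th percentile, median, 95th percentile) of downtime days per year."""
    rng = random.Random(seed)
    outcomes = [simulate_year(rng) for _ in range(trials)]
    # n=20 gives 19 cut points; the first and last are the 5th and 95th percentiles.
    cuts = statistics.quantiles(outcomes, n=20)
    return cuts[0], statistics.median(outcomes), cuts[-1]


low, mid, high = risk_bounds()
print(f"downtime days/year: 5th pct {low}, median {mid}, 95th pct {high}")
```

The spread between the bounds reflects both ordinary randomness and how little is known about the unknown risk; narrowing the assumed range, for example after improving observability, should narrow the bounds.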

[Music] absolutely not that is what this is all right so let's give it back this is this is you got it nailed it okay now I'm gonna go okay this was all I was gonna say else which is just a summary that all of this takes effort we've talked about that okay so how many of you were in the talk that just that just happened in here okay for the three or four of you this is going to be a little repetitive but for all the rest of you who maybe were standing in a line outside and couldn't get in this will be great um okay so this actually is a topic that is very near and dear to my heart uh I'm

not an expert in AI machine learning I am a mathematician who understands kind of how they work under the hood I have uh worked some machine learning for us for a sock before to help them uh better prioritize the tickets that they uh that they should go through but that was a long time ago um but I the systems thinking and the critical thinking about data is really what drives my investigation and thoughts into this okay so this actually is we the last talked about downsides the bad of chat GPT now when I say AI I don't want you to think just chat GPT AI is like the previous speaker highlighted an entire huge field

that's just so much more than Chad GPT this is an article from MIT tech review when when I was at APL I worked on a thing called the covid-19 decision support center we were deep into coven 19. uh this article came out in July 30th of 2021 and uh it's from MIT tech review and it actually was a survey or uh was a study of a survey basically a summarization of a survey conducted by the British medical journal on AI and machine learning tools that were built or constructed to help diagnose coveted patients and predict their outcomes and unfortunately the findings were not very good so in if you think about the covid-19 situation we had

tons of data about millions of people. A lot of researchers said, "Oh my gosh, this is great, I'm going to jump in, get the data, and do my best to develop an AI algorithm for good," and in that haste a lot of bad things happened. In the part of the article highlighted in yellow, you can see that the British Medical Journal analyzed 232 algorithms for diagnosing patients or predicting how sick those with the disease might get, and they found that none of them were fit for clinical use. The article gives a specific definition of clinical use, but you can just think of it as something a doctor would use. Not a single one of them.

Only two were singled out as promising enough for future testing. The biggest reason they highlighted for why so many of these AI and machine learning tools failed was the poor quality of the data, and here is my thesis in bright yellow. One example was an AI algorithm, a machine learning classifier, built to classify X-ray or CT scan images of COVID patients. What they found was that the AI classified patients whose images were taken while they were sitting upright as healthy, or as having better outcomes, and images of patients who were lying down as more likely to be severely ill. Oh, no duh, because if the person can't

even sit up in the CT scanner or the X-ray machine, they're more likely to be severely ill. Because a lot of those tags hadn't been cleaned out of the data, that was what the algorithm actually learned to associate. In another example, the larger hospitals were found to have better COVID-19 outcomes because all of the data coming out of those hospitals' systems were standardized. They used things like Epic, which takes in large amounts of data and can export nice CSVs, whereas smaller hospitals were entering their data manually. There were more errors, a lot of data had to be excluded, and those data products were not cleaned and preprocessed before they were

fed to the algorithm. So there was less data from these small hospitals, not just because they were smaller, but because they had to throw out so much data due to bad quality. This is scary; I don't like this. Another example: if you've seen the John Oliver show, John Oliver used this one. I gave this talk before John Oliver did, so I think he stole it from me, I'm just saying. The self-driving Uber that hit and killed a woman did not recognize that pedestrians jaywalk. I brought this example up to an AI researcher at Johns Hopkins, and he said, well, the sensors are lidar or whatever, and they're not going to detect, you know, she wasn't

wearing bright clothing, so they're not going to see her. And I'm like, that's beside the point. That's very tragic and sad, and they should be using sensors that can see people wearing dark clothing, but that's beside the point. What's in the subtext there is what I want to highlight: the automated car lacked the ability to classify an object as a pedestrian unless that object was near a crosswalk. They never used data that showed people crossing streets outside of crosswalks. Y'all live in New York City.
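The COVID imaging and self-driving examples are both training-data problems that a simple audit might have flagged: a metadata attribute (patient position) that almost determines the label, and a context (pedestrians away from crosswalks) with no examples at all. Below is a minimal Python sketch of both checks, assuming records are plain dicts. The field names, the toy records, and the functions `shortcut_report` and `coverage_gaps` are hypothetical illustrations, not anything from the studies or systems discussed in the talk.

```python
from collections import Counter, defaultdict

# Hypothetical toy records: every upright scan is labeled "mild",
# mimicking the patient-position shortcut described in the talk.
XRAY_RECORDS = [
    {"position": "upright", "label": "mild"},
    {"position": "upright", "label": "mild"},
    {"position": "upright", "label": "mild"},
    {"position": "supine", "label": "severe"},
    {"position": "supine", "label": "severe"},
    {"position": "supine", "label": "mild"},
]

# Hypothetical toy driving data: pedestrians only ever appear at crosswalks.
DRIVING_RECORDS = [
    {"context": "crosswalk", "label": "pedestrian"},
    {"context": "crosswalk", "label": "pedestrian"},
    {"context": "mid_block", "label": "vehicle"},
]


def shortcut_report(records, attr, label_key="label"):
    """Map each value of `attr` to the share of its records that carry the
    single most common label. A share near 1.0 means the attribute alone
    nearly determines the label: a candidate shortcut for a human to review."""
    by_value = defaultdict(Counter)
    for record in records:
        by_value[record[attr]][record[label_key]] += 1
    return {
        value: max(counts.values()) / sum(counts.values())
        for value, counts in by_value.items()
    }


def coverage_gaps(records, label, attr, expected_values, min_count=1, label_key="label"):
    """Return the expected values of `attr` that have fewer than `min_count`
    examples carrying `label` (e.g. pedestrians away from crosswalks)."""
    counts = Counter(r[attr] for r in records if r[label_key] == label)
    return [value for value in expected_values if counts[value] < min_count]


if __name__ == "__main__":
    print(shortcut_report(XRAY_RECORDS, "position"))
    print(coverage_gaps(DRIVING_RECORDS, "pedestrian", "context", ["crosswalk", "mid_block"]))
```

On these toy records, upright scans score 1.0 (all "mild") and the pedestrian check returns `["mid_block"]`. A high share can also reflect plain class imbalance, so it is worth comparing against the overall label distribution; these checks only surface candidates for review, they do not prove a shortcut exists.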

Okay, yeah, probably. Actually, well, I know the accident. Yeah, yeah, right. So this is just another example of poor data practices leading to a really tragic outcome. Okay, this next example was highlighted in the previous talk, and it is very near and dear to my heart because my husband works on the data pipeline for the James Webb Space Telescope. So yeah, fantastic, I'm so proud of him, he's way smarter than I am. But when this happened, everybody in my household was offended, because Google put out this very easy demonstration of what their Bard capability could do. They asked it a very simple question: hey, can you summarize a few cool findings from the

James Webb Space Telescope? The third finding was incorrect. All Google had to do was Google it, and they published it anyway. It cost them a hundred billion dollars: their stock tanked because their AI model, which was supposed to come out in response to ChatGPT, couldn't even get a basic fact-finding mission right. The scarier part to me, though, is that no one at Google Googled it. The marketing team put it out. I'm sorry if you work at Google, I'm really sorry, but come on, y'all. Okay, so this one is also one of my favorite examples. This is a very early image. You know, you might say, oh, but

Jesse, the data from self-driving cars and COVID, that's really complicated data. One of the first things machine learning models were invented for was image classification, and image classification is easy. Everybody knows the digits dataset, where people draw digits and you classify them. Okay, well, this is from a math paper. It's really hard for you to see, but I'll read it to you. On the left is an image that the machine learning algorithm correctly classified as a Bernese mountain dog, with 73% confidence. It looks like it could be a couple of other dog breeds, but it got that part right. On the right, you can't tell

because of the quality of the image on the projector, but that image has been perturbed by noise: a very strategically chosen noise pattern overlaid onto the image. I can't see it because I'm a human, but the machine was tricked into classifying that dog as a golf cart. It's actually more confident that it's a golf cart than it ever was that it was a Bernese mountain dog: it's 98.9% confident that's a golf cart. We're not out of a job yet. Yes. So this is another example of something I want to highlight. This is an article on practical attacks on machine learning systems. We're going to be implementing

machine learning and AI tools into our cybersecurity stack, or using them for whatever else, like diagnosing people. If someone can figure out what we're using a tool for and what our training data looks like, can they not reverse engineer something to trick it into doing something we didn't intend? There are already papers out there about reverse engineering the training datasets of GPT-style models and figuring out what data was used to train them. Okay, I have about five minutes left, so I'm going to go really, really fast through this part. I don't want to scare you away from machine learning and AI. We've heard talks this morning; we talked about ChatGPT being

really cool and effective. This paper, published by a guy I worked with at Oak Ridge National Lab, showed that when they integrated a machine-learning-based malware detector into their cybersecurity operations, they could study how well it did, and it actually did well. You can read the paper if you want; there's a citation, and I'm happy to share my slides. So to me this creates a really scary scenario: we're implementing tools that work, that are good for us, that are helpful, but that we don't understand. That's a perfect storm, and that's what I don't want to happen to us. There are no policies. The previous speaker talked about this, and I'm

glad that he did. There are no policies mandating minimum effectiveness or transparency. We have no idea how they work; OpenAI won't tell us what data they used to train it. Did anybody read the Washington Post article that came out literally two days ago, where they hired a firm to look into the training data for Google's C4 and Facebook's LLaMA? Has anybody even looked at any of that training data? Are you talking about how, like, every third answer was racist? No, no, this is a little different. That's also scary, very scary. So how many of you have used a GPT model or ChatGPT, looked into

it, and not a single one of you has looked at the time

Right, you know this well. So did you know that in the Google C4 training data that they use for Bard, and in the Facebook dataset, one of the top 100 sources used to generate tokens is the entire voter registration of the United States? Now, what can that be used for? That came out two days ago in the Washington Post, and that terrifies me. That is really scary. Okay, I have two minutes. Then I talk about lawmakers. We talked about this in the previous talk, but there are no policies out there; policy is evolving and catching up. I think there's going to be a lot of

changes. I think the EU is probably going to lead the way: they're mandating privacy laws for data, and I think they're going to do that for AI algorithms too. I think that's coming, and I think that's good. This is a paper I published on ways we can actually use clinical trials to evaluate these tools. There's no excuse for this: we can be transparent, we can test these things. We have clinical trial structures to help us evaluate them, and no one uses them. The bottom line is that in order to answer and solve these sexy problems, the data problems are really critical. We've got to get our data stuff right. We've got

to solve these unsexy problems first and invest in the methodologies that get us where we need to be. Don't let your doggos become golf carts. Verification and traceability: the previous speaker talked big time about traceability. We have no... like, with OpenAI, the joke was made about it being "ClosedAI," and I like that joke because we have no idea what's going on under the hood. Even AI experts can't explain the black box; if you watched the John Oliver special, he talked about this too. Before you even start using ChatGPT or a GPT model, have you assessed the risk of using that tool for your organization? Not just ChatGPT, but any piece of technology. Do you have a

process for evaluating this, and is it repeatable? Again, I'm a big people-and-process person. Has a team ever just decided they were going to start using it without telling anyone in security? Yeah, that never happens. Yeah, that never happened. So when I gave this talk in Australia, there weren't nearly as many questions, because no one even knew what an SBOM was, and I had tons of time to go over this. I know I'm basically out of time, but I'm just going to throw this up here as something you can take a picture of. These are my rules, how I think about this, and actually I think Harvard Business School teaches this too, but whatever.

I follow this; I subscribe to this. Not all data science is created equal. There's a difference between using your data to tell you what you know and using it to help you understand what you know: situational awareness versus situational understanding. They're two very different things, so take a picture of this. There are different types of questions, and there are many steps you can take to move between these levels. The blue box is an example of the different types of questions you can ask related to SBOMs. The purple is a cyber example of the different types of questions you can ask at each of these levels, and how that

relates to the maturity of your organization, but I don't have time to go over it. That's it. [Applause]
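The closing slide's blue box of SBOM questions isn't reproduced in this transcript, so what follows is only an illustration of the idea of asking progressively deeper questions of the same data. It is a minimal Python sketch assuming a CycloneDX-style JSON SBOM: `inventory` answers a descriptive question (what do we ship?) and `affected` a diagnostic one (which shipped components match a flagged version?). The component list, the function names, and the `KNOWN_BAD` table are hypothetical. Versions 2.14.0 and 2.14.1 of log4j-core fall within the range affected by Log4Shell (CVE-2021-44228), but the table is deliberately incomplete and is no substitute for a real vulnerability feed.

```python
import json
from collections import Counter

# A tiny CycloneDX-style SBOM, inlined for illustration (hypothetical components).
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.4",
  "components": [
    {"type": "library", "group": "org.apache.logging.log4j", "name": "log4j-core", "version": "2.14.1"},
    {"type": "library", "group": "org.apache.logging.log4j", "name": "log4j-api", "version": "2.14.1"},
    {"type": "library", "group": "com.fasterxml.jackson.core", "name": "jackson-databind", "version": "2.13.0"}
  ]
}
"""

# Hypothetical, deliberately incomplete advisory table: (group, name) -> versions to flag.
KNOWN_BAD = {
    ("org.apache.logging.log4j", "log4j-core"): {"2.14.0", "2.14.1"},
}


def inventory(sbom):
    """Descriptive question: how many shipped components come from each group?"""
    return Counter(c.get("group", "") for c in sbom.get("components", []))


def affected(sbom, known_bad):
    """Diagnostic question: which shipped components match a flagged version?"""
    hits = []
    for c in sbom.get("components", []):
        key = (c.get("group", ""), c.get("name", ""))
        if c.get("version") in known_bad.get(key, set()):
            hits.append(f"{key[0]}:{key[1]}@{c['version']}")
    return hits


if __name__ == "__main__":
    sbom = json.loads(SBOM_JSON)
    print(inventory(sbom))
    print(affected(sbom, KNOWN_BAD))
```

Note that `log4j-api` is not flagged here because only `log4j-core` is in the table; whether a real advisory covers a given artifact is exactly the kind of question the higher maturity levels would need to answer from authoritative data.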