A not so quick Primer on iOS Encryption

Hello everybody, I'm David Schuetz. I'm a senior consultant with NCC Group, where I mostly do web testing and iOS app testing and some basic research and fun things like that. I've also got allergies, and the allergies might be turning into a cold, and I notice there's no microphone up here, so I'm going to be trying to speak loud. If by the end of the talk I sound like I'm coughing up a lung, it's because I probably am, but hopefully we won't get to that. So we're here to talk about iOS crypto and why it's important. Why are we even thinking about this? Well, a couple years ago, which now almost seems like ancient history, Apple made a very public

stance where they said, we like your privacy, we value your privacy. They made some changes in iOS, and they had a series of pages where they talked in great detail about what they're doing, why they're doing it, and why they think it's important. It had some lines in there that raised a lot of questions, things like: we can't bypass your passcode, it's not technically feasible anymore to respond to warrants. This got a lot of people kind of upset, asking lots of questions, things like what does this really mean, and what about forensic attacks? And it's interesting, because this wasn't really the first time this was starting to hit the popular press. In May of 2013,

so almost 18 months before Apple had even said that, CNET had an article about how Apple was deluged by police demands, and they were even coming forth with stories and ideas and anecdotes and things that weren't really supported because, of course, Apple wasn't talking about it. But they talked about there being a big backlog of cases, up to even seven weeks; one case took four months to work on. And then this even went into, there's a case in Kentucky, there's all the cases that have been happening in New York, one of which just got resolved yesterday in fact, and there's the San Bernardino case recently. So all kinds of cases and issues where people are

talking about Apple's assistance, but they were never really clear about what they could or couldn't do. The fact that all these articles talk about a backlog makes you sort of think, okay, well, obviously there's no magic key; they can't just simply plug your phone into something and boom, they've got all the data. They could brute-force the passwords? Okay, well, Apple, they're rich, they can afford an awful lot of GPU crackers, surely they can make it go quickly. It doesn't work that way, and that's what I'm here to talk about: how does it actually work, why can't you crack a passcode offline, and lots of other details. iOS encryption is very complex, but it's

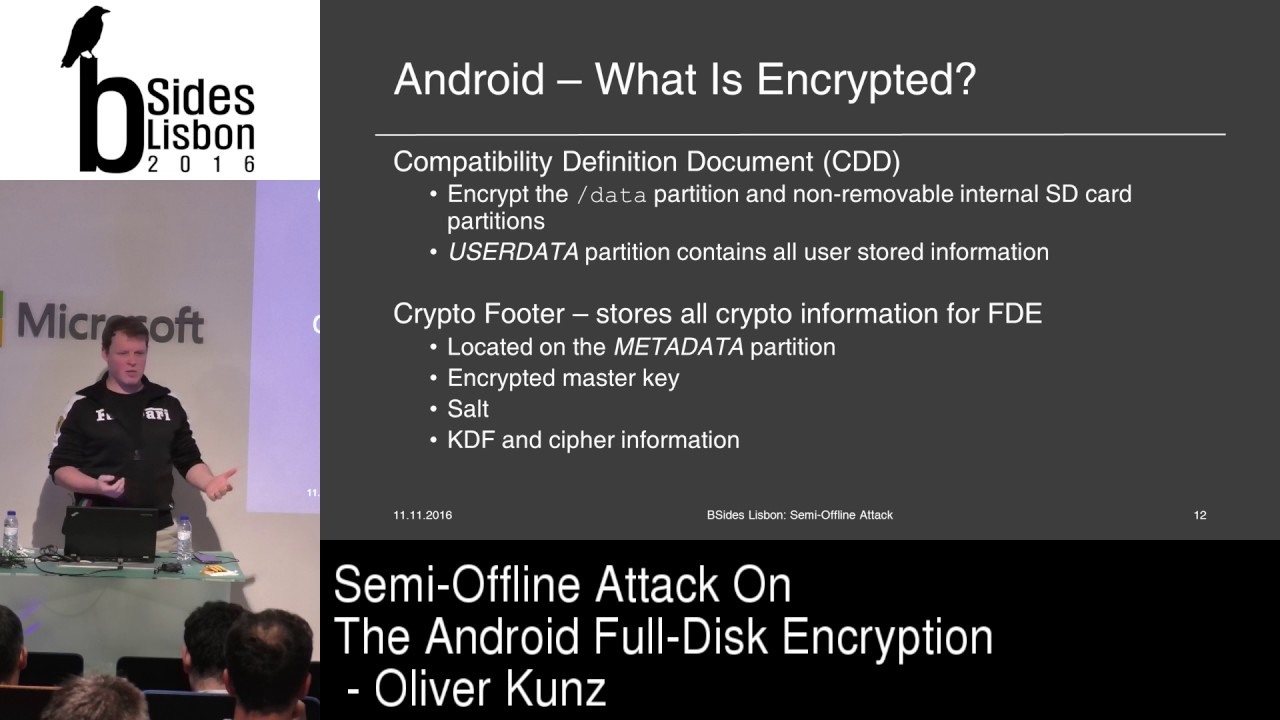

also fairly straightforward, and it's very comprehensive. Some early details were figured out some time ago by researchers. There's a really good talk that I'll reference at the end from Sogeti; I think it was at Hack in the Box Amsterdam, perhaps, I don't remember, anyway it's in the notes at the very end. They published an awful lot of very good details way back for iOS 4. They did this by reverse engineering, breaking some stuff, and looking at published APIs. And then in May 2012 Apple kind of put their cards on the table and started publishing an iOS security white paper, which is a fantastic document. It doesn't go into

code-level detail, but it gives very good information about how encryption works, Apple Pay, Touch ID, all kinds of information all about the deep guts of security on iOS devices. So definitely check those out if you like this stuff. So let's talk about encryption, that is, iOS encryption. Okay, next slide. Originally, when the iPhones came out, the first couple of iPhones didn't have much data protection on them. It was very simple to get data off of them; people were making fun of it. You could hook it up to USB and suck all kinds of information off of it. With the iPhone 3GS they added a dedicated AES co-processor located directly

between the CPU and disk; I think it's actually on the system on a chip. So basically you can't get data in or out of the CPU without it going through this encryption step. They generate a random key, they call it the EMF key, and that key is then encrypted using another key, and I'll explain all this as we go through, and then stored in a special area, and this key is used to encrypt the disk. I've seen conflicting documentation on this one. Sometimes I've seen it say it encrypts the whole disk as a disk; sometimes I've seen where it says it just encrypts the file system metadata, so it might be

possible to read out the file system structure but you can't actually read what's in the inodes. I'm not sure which is which; I haven't actually looked at the encrypted data to see. But the basic approach is the same: you're basically encrypting the disk. So again, there's this UID, which is burned into the hardware, burned into the system on a chip. We don't know whether it's burned in when the device is manufactured, or perhaps when it first powers up it might create a random ID and fuse itself into a ROM or a PROM, essentially, on the chip. But it's a long code that's unique to that device; no other device in the world

should have a matching code; if yours does, go play the lottery. That UID is then used to encrypt a consistent string. I don't remember what the strings are, but there's different strings, like all ones, or just a fixed string, that is encrypted to create this key 0x89B. Then you create a random key, the EMF key, encrypt it with 0x89B, and store it in what's called effaceable storage, and then that key is what's used to encrypt the partition. The big advantage of this is a fast wipe, and that, I think, is what Apple has said is the main reason they even created it in the first place: so that you could wipe a device instantly.
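As a rough illustration of that chain (UID, a UID-derived wrapping key, a random disk key kept in erasable storage), here is a minimal Python sketch. Everything in it is an assumption made for illustration: `ToyDevice`, `toy_cipher`, and the fixed string are hypothetical names and values, and the SHA-256 keystream only stands in for the hardware AES engine. It is not Apple's implementation and it is not secure.

```python
import hashlib
import os


def toy_cipher(key: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256 counter keystream.

    Stand-in for the hardware AES engine (assumption, NOT secure).
    Applying it twice with the same key returns the original data.
    """
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


class ToyDevice:
    """Hypothetical model of the disk-key hierarchy described in the talk."""

    def __init__(self) -> None:
        self._uid = os.urandom(32)   # stands in for the UID fused into the chip
        self.effaceable = {}         # small key store that can be erased at once
        self.disk = b""              # stands in for the encrypted partition

    def _wrapping_key(self) -> bytes:
        # "Encrypt a fixed string with the UID" to get the wrapping key
        # (the talk calls the real one key 0x89B; this string is made up).
        return toy_cipher(self._uid, b"\x01" * 32)

    def format(self) -> None:
        emf_key = os.urandom(32)     # random disk key
        self.effaceable["EMF"] = toy_cipher(self._wrapping_key(), emf_key)

    def _emf_key(self) -> bytes:
        wrapped = self.effaceable["EMF"]   # raises KeyError after a wipe
        return toy_cipher(self._wrapping_key(), wrapped)

    def write(self, plaintext: bytes) -> None:
        self.disk = toy_cipher(self._emf_key(), plaintext)

    def read(self) -> bytes:
        return toy_cipher(self._emf_key(), self.disk)

    def wipe(self) -> None:
        # Fast wipe: erase only the wrapped key; the ciphertext stays on disk.
        self.effaceable.clear()
```

In this sketch, calling `wipe()` leaves `disk` untouched, but `read()` can no longer recover the disk key, which is the whole point of the fast wipe.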

If you want to turn over a computer or something like that and give it to somebody else, and you don't feel like taking the hard drive out and smashing it with a sledgehammer, you would do a disk wipe, but that can take a long time to go through the whole disk, all the files, and scrub everything. With this, it's literally just erase the key and the disk is basically wiped. I mean, the data's still there, but it's encrypted with 256-bit AES, so it's, as far as we know today, inaccessible. It also means that you can't directly modify the data on the device, on the chip, so you can't just simply pull the chip out, put something

on it, and write to the memory, and you can't pull the chips out and move them into a different device and read the data. There are some limitations, though. As soon as you get into the system you've got access to anything, because it's basically transparent full disk encryption. There's no password attached to it or anything like that; it just kind of ties the device to the memory and gives you that fast wipe capability. So in iOS 4, the next year, they introduced the data protection API, and what this does is, for every single file, they create a random file key and then encrypt the file with that key, and then that file key is

itself encrypted with a class key. So all the files are encrypted with their own keys, but all those individual keys are encrypted with sort of a global key that you can use to then get to the files. Again, a lot easier with a diagram. You have your data file, and inside the data file there's metadata and then there's the actual data of the file. The file data is encrypted by the file key, and the file key is encrypted by a class key. And there are multiple classes. We'll start with the table at the bottom: file protection, the protection class none. There's no additional encryption; the only encryption that's on the file is what's provided as a

byproduct of the full disk encryption. Complete unless open is a special mode where it's always locked except when you're... it's complicated. There's actually an asymmetric key that's used so you can write to the file when the device is locked. So if you're downloading something large off the net and you want to have that secure when it's finished downloading, you can use this and be confident that when the device locks it can still write to the file but somebody couldn't read it. There's complete until first user authentication, and basically what that means is all those files are encrypted, well, they're all encrypted, but all those

files are unavailable after reboot until the first time you unlock the phone. So the class key to decrypt those is not available in memory once you reboot the phone. Once you've unlocked it once, that class key is available and you can keep reading that file even with the device locked. And then there's file protection complete, which means that as soon as you lock the device that file is no longer accessible. The class key that decrypts file protection complete files is dropped from memory as soon as you lock the device. Now, the default encryption in iOS 4 through 6 was no protection, so if you have an application and you write a file

to disk, it's just written out plain, no additional protection. Starting with iOS 7 through the present day, it's complete until first authentication. So now if you write an application and you save data to the disk, as soon as that device is rebooted your data won't be readable until the user unlocks the phone at least once. And applications can add further encryption to that; they can always go to file protection complete. I think the reason Apple chose this mode as the default is because there are some applications that might receive updates in the background, things like that, and they might need to write to stuff, and if they'd chosen file protection complete by default that's not going to

work; it's just going to add some issues for the developers to try and sort out problems with that. So they chose sort of a middle-ground compromise, just for ease of implementation for the time being. And then most system apps up to and including iOS 7 used none, so even the Apple applications did the same thing: they still wrote to the disk and they were still using the default protection of none. So I mentioned the different class keys. Again, you've got files with file keys that are encrypted by class keys, and there's multiple classes. You saw the four file protection

complete, or file protection classes, on the previous slide. There's a similar number of protections for keychain items: protect until first authentication, protect always, things like that. And, for example, that's why if you restart the phone it can't connect to your Wi-Fi, because the keychain entry that contains the password for the Wi-Fi is encrypted with complete until first unlock. Once you've unlocked it, then you can read it and you can connect to the Wi-Fi. When you lock the device again and you leave the house and you come back and it's still locked, it can still read that key, because you haven't rebooted the phone; that key is still in memory. So for data protection none, there's also the class 4 or class D key, and

basically what happens is it generates a random key, encrypts that with another one of these magical keys derived from the UID, and then stores that in the effaceable storage as well. So now the diagram's looking a lot more complicated. You've got your hardware-based UID stored in the system on a chip; that's used to derive two sort of intermediate magic keys that are then used to encrypt the disk encryption key and the default protection key, which gets stored there as the class 4 key. And then that thing at the bottom is the keybag. Each class key is wrapped in the keybag. So remember, before, we had: the file is encrypted by the file key,

the file key is wrapped by the class key. Now the class keys are encrypted in the keybag using the user's passcode key, and then the entire keybag is itself also encrypted using another key that's stored in effaceable storage. When you change a passcode it deletes all the old keys; we'll go into some of that a little bit on another slide. So now we've got the whole diagram. You have all the data being stored on the right, encrypted with the various class keys that are stored in the keybag. The class keys are encrypted by the passcode key. The passcode key is derived from the UID and the passcode, and also that key 0x835. So the only way that you can create the

passcode key that unwraps all the other keys is by using that UID that's stored on the device, which you can't extract. This is why you can't take the password offline and try and crack it on a GPU cracker. The key derivation function that they use is PBKDF2, using the passcode, the UID as salt, and some variable number of iterations. The number of iterations depends on the device: slower devices use fewer iterations, faster devices a lot more. Their target is about 80 milliseconds per attempt, so the idea is that a four-digit passcode would take about 20 minutes to brute force, and that's been constant pretty much since they've done this.
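To make the offline-cracking point concrete, here is a small Python sketch of a passcode key that depends on a device-bound secret, plus the worst-case brute-force arithmetic. It rests on assumptions: `DEVICE_UID` and `ITERATIONS` are hypothetical values, and `hashlib.pbkdf2_hmac` with HMAC-SHA256 stands in for the device's hardware-backed derivation, which this is not.

```python
import hashlib
import os

# Hypothetical stand-ins: on a real device the UID never leaves the chip and
# the iteration count is calibrated per device model.
DEVICE_UID = os.urandom(32)
ITERATIONS = 10_000


def passcode_key(passcode: str, uid: bytes = DEVICE_UID,
                 iterations: int = ITERATIONS) -> bytes:
    """Derive a 32-byte key from the passcode, using the UID as the salt.

    Without the UID an attacker cannot reproduce this derivation, so guesses
    cannot be tested on an external cracking rig.
    """
    return hashlib.pbkdf2_hmac("sha256", passcode.encode("utf-8"),
                               uid, iterations, dklen=32)


def worst_case_seconds(digits: int, seconds_per_attempt: float) -> float:
    """Time to try every numeric passcode of the given length, one at a time."""
    return (10 ** digits) * seconds_per_attempt
```

With the roughly 80 ms per attempt quoted above, `worst_case_seconds(4, 0.08)` works out to 10,000 × 0.08 s = 800 s, a bit over 13 minutes for the full four-digit keyspace, which is the same order of magnitude as the 20 minutes mentioned in the talk. Six digits at the same rate is 80,000 s, a little under a day.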

On the A7 chips and forward they added a five-second delay, which we believe is implemented in the Secure Enclave, which again we'll discuss later. We're not 100% positive how exactly that works. As I said, the iOS security document Apple provides is really good, but sometimes it just gives you enough to ask more questions. But again, the key derived from the passcode depends on the UID, so you can't pull it from your phone and you can't crack it on your cluster. I just mentioned a little bit of this: brute forcing a passcode, if you want to do that, you have to do it on the device. So you have to have some way to get to a

shell on the device that has access to certain parts of the system, and then you can run a script or a program that will brute force it. Even just people like us used to be able to do this, because there used to be a boot ROM bug that would let us boot an untrusted image, and then there were utilities out there that we could crack it with. That's how we know it takes about 20 minutes for a four-digit passcode. We haven't been able to do that for some years now. Again, it's about 80 milliseconds per attempt, but the five-second delay that they've added now means you multiply by about 62, which is great until you think

about the fact that it takes a six-digit passcode from a day to two months. Two months seems like a long time, and it is a long time to have a little robot sitting there punching buttons, but it's still certainly something that the law enforcement community or a hostile government would be happy to spend that time on, or even a hostile corporate competitor. There's also attempt escalation and auto-wipe, but as far as I understand those are part of the UI. So really what's happening is the UI kind of says, okay, here's a passcode, is it right? It's not right; okay, then the next time you gotta wait 10 seconds to enter it again,

you gotta wait a minute, you gotta wait 15 minutes, you have to wait an hour, and then after 10 incorrect passcodes it'll wipe the device. But the choice of whether or not you do that is a user choice; it's a preference that can be set in the UI. So the UI still has a little bit of control over how this works. When you boot from an external image there's no limits, so you can just keep trying and trying as much as you want. So when you lock the device, I mentioned a little bit about this before, the file protection complete key is removed from RAM, which means any file that's encrypted with that file

protection complete key is now no longer readable. So as soon as you lock the device you can't read those files, and you can see this if you're on a jailbroken device. You can be shelled in, you can cat a file and read it, hit the lock button, cat the file again, and you actually get a disk error. So you can't read it as soon as you lock it; it takes a second or two, but it pretty much happens right away. The other keys remain present in memory, which gives you access to Wi-Fi and certain other features, and lets you see the contact information when your phone rings. There was an odd edge case that I found

where this didn't happen, some time ago, where if you unlock the phone using an MDM command to unlock it, and then enter your new passcode, because you're a good corporate doobie and you say, okay, I'm going to enter my new passcode, and then lock it, it didn't actually delete the keybag from memory. So you could still access the files if you could get into the device. As soon as you unlocked it and locked it again, it was done. So that was just this very strange, very rare bug, but it shows that they may still be relying on certain amounts of the OS for some of these protections. If you change your passcode, and this is

another reason for the way they've got this hierarchy, if you change the passcode it only needs to change the keys that are stored in the keybag, and it's actually not even changing the keys, it's changing the encrypted form of the keys, rewrapping them. So it duplicates the keybag; because it's unlocked, it has access to all the contents, all the class keys in decrypted form. It wraps them with your new passcode key, then re-encrypts the keybag with a new bag key and throws everything else away. If they weren't using this sort of structure, then you might actually have to go through and either re-encrypt all the individual file keys or re-encrypt all the individual files. Obviously that

wouldn't work. This way it's a very fast operation to change your passcode; it just changes the data in the keybag, and everything else on the system remains intact. When you reboot, it's an awful lot like when you lock the device. You obviously lose the file protection complete key; you also lose the complete until first authentication key from memory, which means that even things like, again, the Wi-Fi passwords aren't going to be available. So the only files that would be available after a reboot are anything that's listed as file protection none, and even then they're still protected by full disk encryption. And then finally, if you wipe the device, the effaceable, efface-able, effaceable

storage, I'm never sure how to pronounce that, the storage is wiped, and that destroys your D key, which means all the file protection none files are no longer readable; destroys the bag key, which means you can't decrypt the keybag; and it also destroys the EMF key, which means you can't decrypt the file system anyway. So when you hit wipe, it really wipes; you're just not going to get the data. So, a quick recap: you have the file; the file is encrypted with the file key; the file key is encrypted with the class key; the class key is then stored in the keybag, and that's encrypted using a passcode key that's derived from your UID, your passcode, and the key

0x835 on the system. The entire keybag is then also encrypted with a BAG1 key that's stored in effaceable storage, and then the EMF key and all these other keys eventually tie back to the UID, which is burned into the chip and inaccessible outside the device. It's not even accessible to software inside the device; basically you give your data to the system and it says, here it is, here's the result of the operation using the UID, but you never get to see the UID. All right, so is this perfect? It's not perfect; there's still ways around things. Jailbreaking devices gets you access to certain things that you wouldn't be able to do otherwise. There's obviously the

potential for bugs in the software, some simple, some complex. Forensics tools can get certain amounts of data. There are special things you can do at the boot level. And then of course you can just skip the phone and go straight to the cloud. So we'll talk about all of these here. Jailbreaking, of course, is exploiting bugs in the operating system. You're hacking your phone, and nowadays you're hacking your phone using something that came from hackers overseas, which may or may not be a good thing. That's obviously a very US-centric view, but yes, you're hacking your phone with help from people in China. That's the way it is these days, I guess. It used to

be Canadians or Germans, but now it's Chinese. At any rate, you're trusting some person you don't know to run an exploit on your phone and hack it so you can do things that degrade its security, and you're also degrading your security by letting a hacker hack your phone in the first place. So, good idea, bad idea? I don't know. I used to run a jailbroken phone for a long time because I was on T-Mobile and they didn't support T-Mobile yet, but now I only use jailbreaking for research. What it does when you jailbreak: it bypasses code signing, bypasses the sandboxes that protect data from being read by different

applications, and it obviously needs to modify the file system in order to be persistent, so the jailbreak will still be there when you reboot the phone. But the jailbreaking process, the actual act of jailbreaking, can't bypass the crypto on a locked device. We always hear a lot of people saying, hey, well, of course you can just break into the phone, teenagers have been doing jailbreaks for years. But that's not the same thing. Once the phone's locked you've got all these very strong crypto protections that you need to get past in order to do anything. Usually when you're jailbreaking you have to unlock the device, install stuff, reboot the device, sometimes reboot two

or three different times. All those things involve the crypto that the jailbreak needs to be able to get past before it's done. And remember that when you're jailbreaking there's a much larger attack surface. Any app or service that's running on the phone is a potential endpoint for a vulnerability, for a code execution attack or anything like that, that will let you get into it. When the phone's locked there's much, much less of a surface for you to attack. What about bugs? This is the other thing I always hear about: people say, well, look at that lock screen bypass that just showed up, you can do this crazy thing and call Siri and now you

can read their contacts. Well, really what your lock screen bypasses are doing is they're moving from one app to another. You're not actually unlocking the phone; the crypto is still in place, it's still locked per se, but instead of displaying the lock screen app, now you're displaying the contacts app or you're displaying the messages app. So there are certain things you can bounce back and forth between, and that's by design. On iOS now you can swipe up from the bottom and launch the camera, so you can run different apps while the lock screen is in place. These bypasses just sometimes let you go a little bit deeper into those apps,

but you're still limited in what data you can see. The data you see still has to be available when the device is locked. So even if you get out to SpringBoard and are able to launch another app, which I don't think there's been a lock screen bypass that lets you do yet, even if you could, if that app used file protection complete you wouldn't be able to read any of that data either. And then of course these are usually fixed pretty quickly by Apple. There's also the potential for malicious apps, either coming from the App Store, which sometimes sneak by, or, nowadays, you're seeing a lot more people sideloading apps. They find ways to get

cracked apps and wrap them with an enterprise cert to install on your phone, so you don't need the App Store. Sometimes those can have bugs in them, or exploits in them that attack bugs. And there's always the potential for other OS-level problems that we're not really thinking about or haven't seen much of yet. There's also forensic capabilities, but there's no real magic channel for forensics. You can't just simply plug something in and it automatically finds everything. A lot of times they're using the same bugs that we already know about in the community. The tricks that they use, the methods that they use, aren't really well discussed, and a lot of times even the

capabilities are sometimes kind of vaguely defined on the web page. So it says, oh, we can do this, and you scroll down through all these features and these things only apply to this phone. So there are certain features there that are good, and some where it's hard to really understand whether they're there or not. But ultimately, if you have a locked device, these tools still face the same obstacles that you and I will. If the phone is locked, the crypto is still in place and these things still can't get at the data. If it's an unlocked device, then yeah, there are some hidden or little-understood features that allow you to get certain access from the

device, and once they can get certain databases they can pull off all kinds of things: photo information, things that you think are deleted but are still kind of hidden in system databases, stuff like that. So there is a treasure trove of information when the device is unlocked; when it's locked, there's very little that they can get off. And then booting a new OS. For a regular computer, you hook up a USB drive to the outside and reboot off of that, or a CD-ROM or something like that, and boot a different OS; now you can attack the file system. The way that boot works on iOS devices is there are multiple stages. There's the

low-level boot, which starts off; that's stored in the boot ROM. That starts up another process called iBoot, and it checks the signature before it starts it, so if the signature of the iBoot image doesn't match an appropriate signature with a CA chain that goes back to Apple, then it won't boot. And then once iBoot runs, it loads the kernel and actually boots the OS, and does similar signature checks. And the OS image, when you install it from Apple, so if you download an update, the operating system image is also encrypted using a key that's unique to each kind of device. It's called the GID key. Just like the UID, it's burned

into the silicon when they create the device. So if you can figure out what the GID is for an iPhone 6, then you can decrypt iPhone 6 firmware, but if you can't get that code then you can't decrypt that firmware. And then there were some bugs on early devices. They fixed it in the iPhone 4S, but up to the iPhone 4 and iPad 1 there was a bug in the boot ROM that allowed you to boot an untrusted, unsigned image. Basically there was a way to screw with the signature validation process, and it skipped right over it and you could boot the image. And that's how we were able, in the past, to boot off an external image, get to a

shell, and actually run passcode cracks. But that hasn't worked in, I can never remember what the years are, but four or five years now. And then of course there's the cloud. Many apps now have server-side data components. Of the apps that I check for my job, most of the data is online. Most frequently they're just very thin veneers of apps; a lot of times it's just a web app sort of in a window in an application, and all the data is stored up on a server. Recently Apple started providing some basic app data storage for free, so if you're a developer and you have an app, you have a certain amount of data that

you can actually just store on iCloud for no cost or very little cost. Of course users can have their own iCloud accounts where they can throw lots of information, all their iWork apps and stuff like that. There's third-party storage, and then of course the applications themselves might have their own back-end service that they connect to. So if you can't unlock the phone, just go to the net. And then finally, MDM and sync. When you sync a device to iTunes, all the data is obviously synced to iTunes, especially if you do an iPhone backup. So your calendar, your contacts, application data, all that stuff gets stored on the desktop, and you can read that unless you've

encrypted the data. If you've encrypted the backup, then there's another encryption step that's difficult to get past that you now need to go through in order to read that backup data. Also, the most sensitive information on the device, the keychain, isn't backed up to the desktop unless you're using encrypted backups. It also used to be that you could take the pairing record off of the desktop, move it to another desktop, and then read that person's phone. So if you had an office mate who always left their computer unlocked, or if you were in an environment where you share computers and you can log into their computer anyway as

yourself, you could get to where the pairing records were stored, take that off, copy it to your machine, and then the next time he leaves his phone, just hook it up and pull up free software that you can pull down off the internet and read almost all the data that's in all of his apps, because now that phone trusts your computer and you can get at the data. In iOS 8, I believe it was, they actually closed a lot of these holes, so now you can't simply use this free or low-cost software, things like iExplorer and stuff like that. You can't use those to navigate the whole file system; you can only navigate to certain

folders on certain applications, which is great for users, lousy for me, but that's where it is. And then mobile device management, MDM. If a phone is enrolled and configured appropriately, you can unlock a device remotely. You can send a signal from the MDM server to the device, it sees it, says okay, and it unlocks. It actually wipes the passcode out, so now the keybag is wide open and it's no longer locked with the passcode. But to do that you obviously need to be able to talk to the device; you need Wi-Fi or cellular access. If the phone's been rebooted, there's no Wi-Fi. If the phone doesn't have a

data plan activated, then there's no cellular data and you can't get to it. So if it's an iPod touch or an iPad without cellular data, again, you can't access it over MDM. So it's a great way to do it if you've got access to it, but you have to have an active network connection in order to send that command. So that's how we got to where we are now. It's been getting a lot more attention lately. This used to be pretty arcane, pretty much of interest to geeks like us, people in the security community. Why has this been a big deal now, since it's been so stable since iOS 4? And really there's

three things that have been changing in the last couple of years. There's been some changes to software, some changes to hardware, and a very public change to their policy on privacy, and a lot of this was probably driven by some of what happened after the Snowden revelations. So in iOS 7, I mentioned before that the default for applications was none, and then starting with iOS 7 the default for third-party apps was complete until first unlock. Starting with iOS 8 and moving forward, by default all apps are now defaulting to first unlock, for the system as well as for third-party apps, which means that most data on the system is not going to be

readable immediately after you reboot the phone, and that becomes important in a little while. They also limited the sandbox access over USB; that's what I was just saying about how, even from a trusted computer over USB, you can't access all the files for each app. So these were the software changes that came out between 2014 and 2015. And there's a good way to demonstrate this for yourself. If you had an iOS 7 phone, and you can't really do it now because very few people are probably still on that, you could reboot, call yourself from a landline, and you'd see your contact information show up with your name, maybe your

picture stuff like that with an iOS 8 or 9 phone after a fresh reboot when you call it you just see the phone number no information at all as soon as you unlock it and re-lock it now the contacts database is open call again and you'll see the whole thing the name the picture and so on so that's why if you've ever wondered why sometimes you see a phone number and sometimes you see details it's because you probably just rebooted the phone and then they added some hardware changes in 2013 with iPhone 5S they added the Secure Enclave which is a special subprocessor running a hardened OS it's an L4 micro OS and it also uses a specially encrypted

area on the disk using again the UID to encrypt the data and the documentation from Apple says it's also entangled with an anti-replay counter so they've got some additional crypto protections on the data from the Secure Enclave and the Enclave handles many of the passcode features we don't know for certain whether the failure count is stored there or if it's stored on the disk and they also have a five second delay on passcode attempts and they've also added some additional features over time to the Enclave for example you can now ask the Secure Enclave to encrypt something for you using a key that you don't get to see so you can actually say give me a public

private key pair it gives you the public it keeps the private hidden you never even see the private key even the app that asks for it never gets to see the private key not many people are using that there's an app that just came out recently that demonstrates it it's kind of a nice little feature that they're working on but in general the Secure Enclave we only know a little bit about it people haven't done a whole lot of digging though there is a talk I believe at Black Hat this summer that's going to be on that so I'm definitely going to be watching that see what they say and then finally there's Apple

drawing the line in the sand they came right out and said very clearly we sell products not your data they want customers to be in control of their own information and there's actually pages up there which give some pretty good technical advice for security choices talking about using long passcodes two-factor authentication Touch ID all kinds of really good advice aimed at just normal everyday people for making your phone even more secure and then finally they also promised additional transparency about government access so all these things lead us to where we are today we've had gradual improvements in security over a number of years and then we had Snowden saying oh look the government's

reading all your stuff so it may or may not be true I'm not going into that but Snowden happened everybody got upset and it really started a lot of focus on this which is good focus is good Apple drew their line in the sand saying they are committed to your privacy we started to see some public pushback public and I presume private pushback from law enforcement then there was the attack in San Bernardino and the FBI requesting the court to make Apple unlock the phone and that led us to where I was in New York the other day I was sitting at a table and somebody who was chatting with me found out I worked in security one of

the first things they said is what do you think about the FBI Apple thing so a complete stranger asked me about this and I thought that was fantastic even better they were squarely on the side of Apple so I was very happy so what the FBI asked for and everybody here probably knows a lot about this but we'll just recap the FBI asked for a way to bypass the passcode guessing limits so they wanted to be able to take the phone and just enter passcodes over and over and over again to be able to brute force it in order to do that the order from the court was specifically asking for a custom version of the operating system

tailored to just this phone was it possible to do this probably I mean like I said we were doing this as hackers for years on the older phones with the boot ROM bug there's no reason to believe that Apple didn't have the capability technically to do this but Apple also spent nearly 100 pages of court responses detailing why it was a bad idea and they made a pretty good argument I think and then eventually the FBI said yeah we don't need you we hired somebody to do it for us and we're good and so how did they finally get in don't know we've talked about a lot of possibilities and certainly everybody on the blogosphere and the

Twittersphere and everywhere else and CNN they've thrown out ideas and oh this company's doing it oh no it's this company it cost a million dollars nobody really knows there's some good ideas there's some crazy ideas but what we do know is that there are definitely some attack surfaces that are still present in the phone obviously the whole system depends on cryptography it's extensive it's comprehensive it's through every bit of the phone all the security basically assumes that that's safe and then there's hardware attacks ultimately if you can hold it you can own it there's nothing that you can do to prevent that fact the question is how much do you want to spend because the deep hardware attacks

are possible but they can be expensive and risky and then finally of course there are software bugs they happen to everybody they happen a lot with every iOS update there's some weird bug that shows up lock screen bypasses or there's been a couple that actually let you interrupt the passcode process and I'll actually describe this but I'm getting ahead of myself so cryptographic attacks if you want to boot a hacked image so again boot off an external disk so you can actually run brute force passcode cracking on the device you can break into Apple and steal their secret key so now you can sign your own image that's the best way to do it
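To put the on-device brute forcing mentioned above in rough numbers, here is a minimal sketch of the worst-case arithmetic. The per-attempt costs are assumptions rather than measurements: about 80 ms per try for the passcode key derivation (the figure Apple's security documentation gives, as far as I know) and the five second Secure Enclave delay cited in this talk. The function and variable names are made up for illustration.

```python
# Rough worst-case brute-force time for a passcode under assumed per-attempt costs.

SECONDS_PER_DAY = 86_400

# Assumed per-attempt costs (illustrative, not measured here):
KDF_COST = 0.08       # ~80 ms per try from the key-derivation work alone
ENCLAVE_DELAY = 5.0   # 5 s per try, the Secure Enclave delay cited in the talk

# Hypothetical passcode policies and the number of possible passcodes for each.
KEYSPACES = {
    "4-digit numeric": 10 ** 4,
    "6-digit numeric": 10 ** 6,
    "6-char lowercase alphanumeric": 36 ** 6,
}


def worst_case_days(keyspace: int, seconds_per_attempt: float) -> float:
    """Days needed to try every passcode in the keyspace exactly once."""
    return keyspace * seconds_per_attempt / SECONDS_PER_DAY


def estimate_table():
    """Return (policy name, days at 80 ms per try, days at 5 s per try) rows."""
    return [
        (name, worst_case_days(size, KDF_COST), worst_case_days(size, ENCLAVE_DELAY))
        for name, size in KEYSPACES.items()
    ]


if __name__ == "__main__":
    for name, fast, slow in estimate_table():
        print(f"{name:32s} {fast:14.2f} days at 80 ms {slow:14.2f} days at 5 s")
```

Under these assumptions a six-digit numeric passcode at five seconds per try works out to a little under 58 days in the worst case, which lines up with the roughly two months quoted in the talk, while a four-digit passcode at 80 ms per try falls in minutes.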

we don't know how they store those secret keys the iOS Security document mentions that they use tamper resistant HSMs for the private keys for certain other facilities certain other capabilities it's pretty reasonable to assume that the signing key for their OS and their most popular products is probably also protected by an HSM so you're probably not going to be able to do that I would hope you could break the signature process you could break RSA you could break SHA-1 you could find a boot ROM bug okay the first two probably aren't going to happen again I hope not the boot ROM bug it could be there but also we know that that's kind of the

Holy Grail for jailbreakers so if there's one there we're not seeing it and obviously the jailbreak community isn't perfect but they're probably looking pretty hard with every new device to see if there's any kind of way around that and then of course there's the possibility of a complete cryptographic break of AES which would allow you to either derive the UID and thus you know start an offline attack or just allow you to directly read the files anyway that would obviously be very very bad there are some hardware attacks you could take the system on a chip take off the top scan it take off a little bit more scan it you'd be using chemicals and lasers and I don't know

what else x-rays eventually find where the UID is stored and directly extract it so now you need this code let's just get the code now you've got it you make a copy of the NAND onto your normal computer and then you brute force the password on a GPU cluster it's possible it's also risky and expensive if you break it you're screwed if your laser is tuned a little bit too high or a little bit off to the side and it wipes out the UID you're never going to get the data back out because it's done it's gone there's also potential for memory chip attacks if you can find a way to prevent the

failure from being written to disk then you know the OS will never know that you just entered a bad passcode or you can find a way maybe to roll the flash back so you enter five passcodes and you turn the machine off roll a copy of the flash back into memory turn it back on again it's back to zero how about that it hasn't been proven that that's possible it's certainly theoretically possible I think it'd be a great Black Hat talk but I'm not going to do that one but I'd love to see somebody do that that'd be very interesting and then there's a possibility for some kind of race condition if at a hardware level there's some kind of signal made you

know deliberate or inadvertent you know a side effect but if there's some kind of signal that lets you know the passcode was good or the passcode was bad then you might be able to shut the device down as soon as that passcode was bad signal was found before it writes it out to disk and now you've just bypassed the failure count of course now you've turned an 80 millisecond per try thing into a two minute per try thing but still it's possible you might be able to find the same race condition in software there was actually a bug a year or two ago where there was some kind of a visual indication on the

screen that the passcode was bad and they actually had a little robot that you know would tap away at the thing and a little camera aimed at one spot on the screen and as soon as the bad password was entered the camera would discover this little indication and immediately power the phone down then you power it back up again so again it really slowed the attack process down but it avoided ever writing the failures out to disk so you could just do it as often as you wanted if you have the time we mentioned lock screen bypasses they don't get you much data they might show the springboard they might be able to show that the phone didn't have anything on

it anyway if you were able to find a lock screen bypass against the main springboard and look and see these are all just the stock apps there's not a single third-party app on here that I care about and I look in the contacts folder and it's got Apple then you know the guy never used the phone at all and there's probably nothing on it there's also the possibility for code injection attacks in the device firmware update or iTunes restore or wired or wireless attack surface there's a lot of things that you might be able to go and work against the most likely suspects in my opinion are either a boot ROM bug so you can then

boot a hacked image and crack the password a lock screen bypass that might get you some intel and maybe enough to say yeah it's not worth it that probably wasn't worth a million dollars though so that's probably not what they did there might be other bugs in the lock screen that allow you to intercept the passcode failure count and then of course there's the possibility for hardware level attacks on memory I think any of these are reasonable and achievable whether any of these are the thing or if there's something we can't even conceive of we won't know probably for a while so how much could you get if you want everything off the phone right away you

need a major crypto bug if you want everything on the phone eventually you need some way to interrupt the passcode failure tracking you know using some kind of hardware or software attack but you've still got to brute force it so that could take a while if you want just simple general intel a lock screen bypass so what does this mean for you and me well again there's really good advice on Apple's privacy pages terrific information about what to do how to secure it good best practices two-factor authentication buy newer devices with a Secure Enclave because that definitely has additional protections and I'm fairly sure that Apple's going to improve that protection over time

especially with what's been happening lately right now the best advice is select a long passcode even with a five second delay in the Secure Enclave you saw a numeric only passcode at six digits could still be cracked in two months so an alphanumeric passcode is best my kids even have alphanumeric passcodes because they're strange but you know that's probably the best way to do this for now which gets around issues of the only really easy attack vector which is Apple booting off of a trusted boot image if you use a long passcode you can always use Touch ID for your daily use but don't use it so often that you

forget your passcode on the other hand Touch ID always seems to screw up for me every couple days anyway so I still remember the password and of course if you're arrested turn off your phone right away and if you have a chance try to unlock it three or four times with your pinky so that it will screw up and no longer accept the fingerprint I'm sure you'll hear more about that you know from somebody who can actually talk about legal things because I can't so from the first version of this talk we gave in 2014 I had a few questions that weren't answered yet can Apple brute force the passcodes we

always kind of figured they could we still don't really know but this suggests very strongly that they could would they seems like they don't want to and could they be ordered to we don't know they tried to order them it didn't finish and has this happened already who knows it might have happened already but there are still some legitimate questions we just don't have answers for the docs didn't clearly describe where the crypto processor was is it in the system on chip or not I'm pretty sure it is because that's kind of a silly question can the Secure Enclave software be updated that's really the biggest question about this is aside from

booting off of a secure trusted image could you update the image in the Secure Enclave and override the passcode protections and if you can do that can you do it from a locked device I would hope you can't but we don't really know for sure are any of the Secure Enclave functions currently in ROM can they even be you know updated at all where's the failure count located is it on the flash or is it somewhere inside the system on a chip and then finally will the Secure Enclave enforce a 10 try limit if Apple can get to the point where the passcode failure count all the passcode failure tracking and everything like that are all stored in SE and

they're all in ROM so they can't be changed by Apple and the 10 try limit is enforced by that ROM code so there's no way to get around that if all those are true it might even be possible to be happy with a four-digit passcode I don't know but we're not there yet so the conclusion as we all know iOS security is very dependent upon the encryption it's pervasive everything is built upon the presumption that that's strong and safe it's complicated it's comprehensive as far as I know there's no real well-known design flaws in this certainly I haven't seen anybody say oh well if they'd done this it would be better but they chose this

algorithm and so there's a problem I haven't seen anything like that bypassing the protection therefore depends on breaking the passcode which you can do with very expensive hardware attacks or with software bugs that are you know kind of short-lived and it's still a slow process especially if you have a long passcode or you can just break crypto in general which breaks everything in the world and we're completely hosed and so the best thing is just to fight back with a strong passcode so I mentioned a couple references the iOS Security paper they tend to update that in the fall usually sometimes in the spring as well a very good paper unfortunately it's very generically named so you'll

eventually find it if you Google for it and it's available at Apple there's the Sogeti talk I mentioned from Hack in the Box Amsterdam 2011 so that's five years ago now and that goes into an awful lot of detail about how all this works they were some of the first people to publicly describe a lot of this and then there was also a talk from Elcomsoft at Black Hat Abu Dhabi also 2011 that kind of talks a little bit about that as applied to iOS 5 I think the Hack in the Box talk was on iOS 4 so those are all good papers really the security paper is the canonical document

the other two kind of give you some background a little bit more technical understanding because the paper can be confusing so that's it thank you for your time I've got a couple minutes for questions yes so is the kernel encrypted on the disk or at what point the question was is the iOS kernel is the OS in general encrypted as well and at what point does that get decrypted there's a lot of additional nuances and capabilities that I didn't really go into here the disk is split into two partitions the system partition and a data partition the EMF key I described is about encrypting the data partition the system partition which has changed over time I think currently it's a two gig

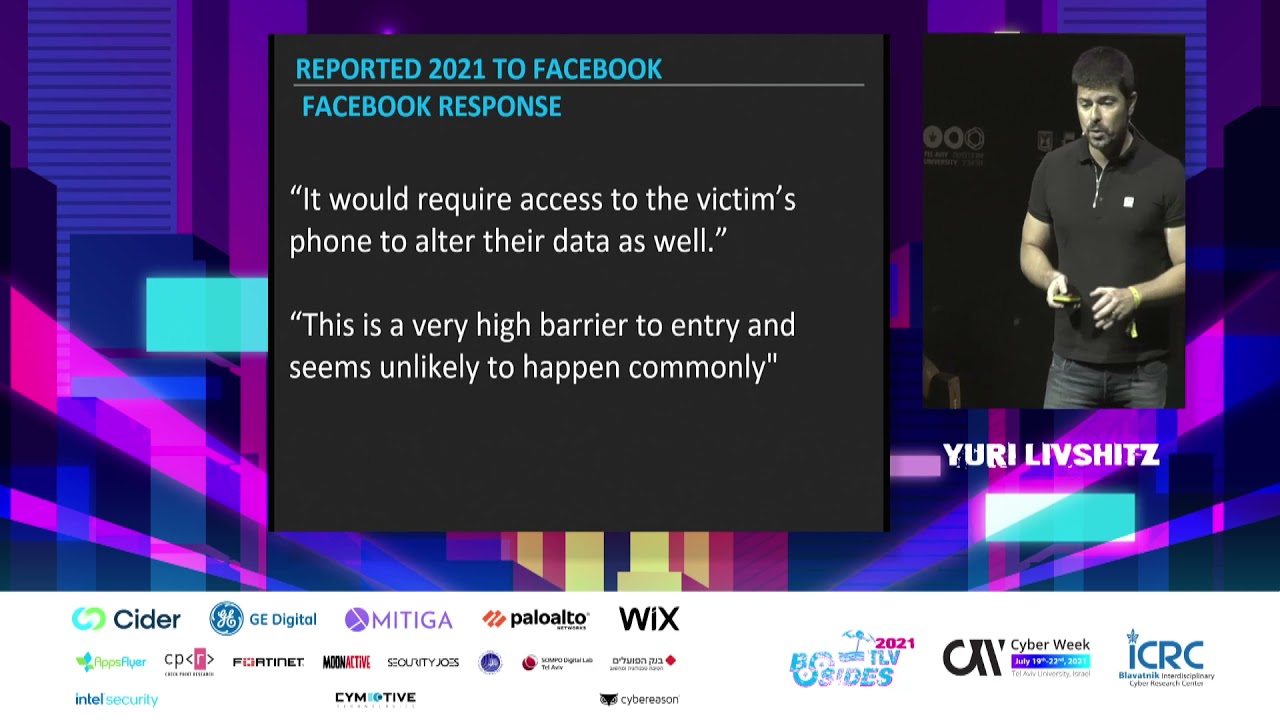

partition is itself encrypted using a different key and that's also derived from UID and so on and you had one no anything else yes so after seeing a lot of like the talks between the FBI and Apple it just seems like both sides whether it's government or the Justice Department and Apple they're in their own like bubbles right so no one really wants to budge and a lot of the arguments I've seen and heard about let's say the Justice Department side is that oh what if it was your kid in Sanford you know would you want this to happen or oh what if it's a child you know molester would you want them to get

away with it and then Apple obviously takes the side of like they want to sell devices and technology to everybody is there a middle ground and what I mean by that is like definitely not key escrow because the crypto wars I mean both of them did not work out in the government's favor but is there like a middle ground that like you can think of like a strategy or anything like that to say like okay maybe we can work together because maybe I'm just like very yeah optimistic in humanity but there's got to be a way or there's got to be a solution out there to kind of find the middle ground between the two yeah so so

the question for the recording is the Department of Justice and FBI and Apple are each in their own bubble each side is intransigent is there a middle ground that allows you to have this capability to protect against kidnapped kids or child porn and yet not violate everybody else's rights and if there is a middle ground and I knew it I wouldn't be speaking at cons like this I'd be making a lot more money I don't think there is you mentioned key escrow key escrow was pretty well destroyed with the Clipper chip years ago and they talk about it again and really key escrow depends on storing keys securely and we've proven again and again and again we can't do that people

will eventually break into things maybe things like HSMs and stuff there might be paths but I'm not confident in that just based on our track record so do you think it'll depend on policy rather than technology because eventually policies will all default to governments having control so unfortunately this may be one of the situations where the tech just has to win because it's the safest approach but that's a political policy legal question that I'm really not even remotely qualified to talk about other than my opinion which you can probably guess is more on the Apple side yes so I know it will be inconvenient for Apple but let's say that

phone was running the latest iteration of iOS and Apple could say okay we can hack this and the government wants Apple to hack this but then they can immediately release a software patch for that specific method that they used would that be possible so the question sounds like if Apple were forced to use some kind of not backdoor some kind of weakness to break the passcode on a device could they acquiesce to the court order do it and then immediately issue a patch to protect everybody else it depends on where that weakness was you know what they were doing if the weakness they

exploited was excuse me if it was because of the boot image question then they can't really patch that because they have to be able to boot if it was because of some other things then yeah they might be able to change things and again with the possibilities I've raised about the Secure Enclave if the backoff code and the timeouts and stuff like that are stored in the Secure Enclave but that code is modifiable then yes I could see them maybe being told fix the Secure Enclave you know or abuse the Secure Enclave so you can crack the passcode and they say okay that's great new phones now it's in ROM we can't change it ever that might be

a path that will happen I don't know if they will or not that's kind of where I would like to see it go I only think about this part time so all the way over there I believe there was testimony recently that talked about this issue maybe not explicit but the general consensus kind of seemed to shift towards well let's just let each group play their role Apple tries to secure their devices as much as they can and we give the FBI you know more resources so they can hire hackers and they just try to hack it as much as they can what do you feel about that situation what do I feel about the idea

of a cat and mouse détente as it were between government and tech and that's really kind of exactly the way I feel you know there are stories maybe apocryphal from years back of the government getting access to things like Western Union and stuff like that and they'd get extra copies of telegrams certainly there's documented cases of the British doing that and pulling stuff off the cables you know and there's always been that question of you know does the company want to feel patriotic and help their country and then kind of give these things on the side on an official or unofficial basis if they want to do that that's their choice I have no problem

with that if it's for a noble cause great on the other hand in these days if they do it and it's found out they're going to be crucified in the media so they probably don't ever want to do that again even privately under the table but I think it should be a back and forth I think the government has their needs they do what they want to do private industry has their needs and their desires they do what they want to do if they want to help fine but they shouldn't be compelled to help they shouldn't be compelled to do the government's job in court but these are just my opinions anything else all right thank you very much

thank you