Paravirtualized Honeypot Deployment for the Analysis of Malicious Activity


Hello BSides, thank you for being here and thank you for having me. I'm Andronikos Kyriakou. Today I'm going to present to you part of my diploma thesis, which is about how we deployed multiple honeypot sensors and how we analyzed the captured data. So, a quick agenda for the talk. In the beginning we're going to talk about what honeypots are about and how they can help an organization get better insights and take action proactively. Then we are going to talk about how we implemented our system. And then we are going to talk about how we analyzed the data, what results we have obtained to date, and some future work. Also, I would like to make a disclaimer that this is currently a work in progress.

So, about me: I am an undergraduate student at the Computer Engineering and Informatics Department at the University of Patras. Since January I've been a member of the SCYTALE Research Group, where I'm working on my diploma thesis under the supervision of Professor Dr. Nicolas Sklavos. You can find me on Twitter at Andronkir, reach me via email, and visit my website for some projects I have done so far.

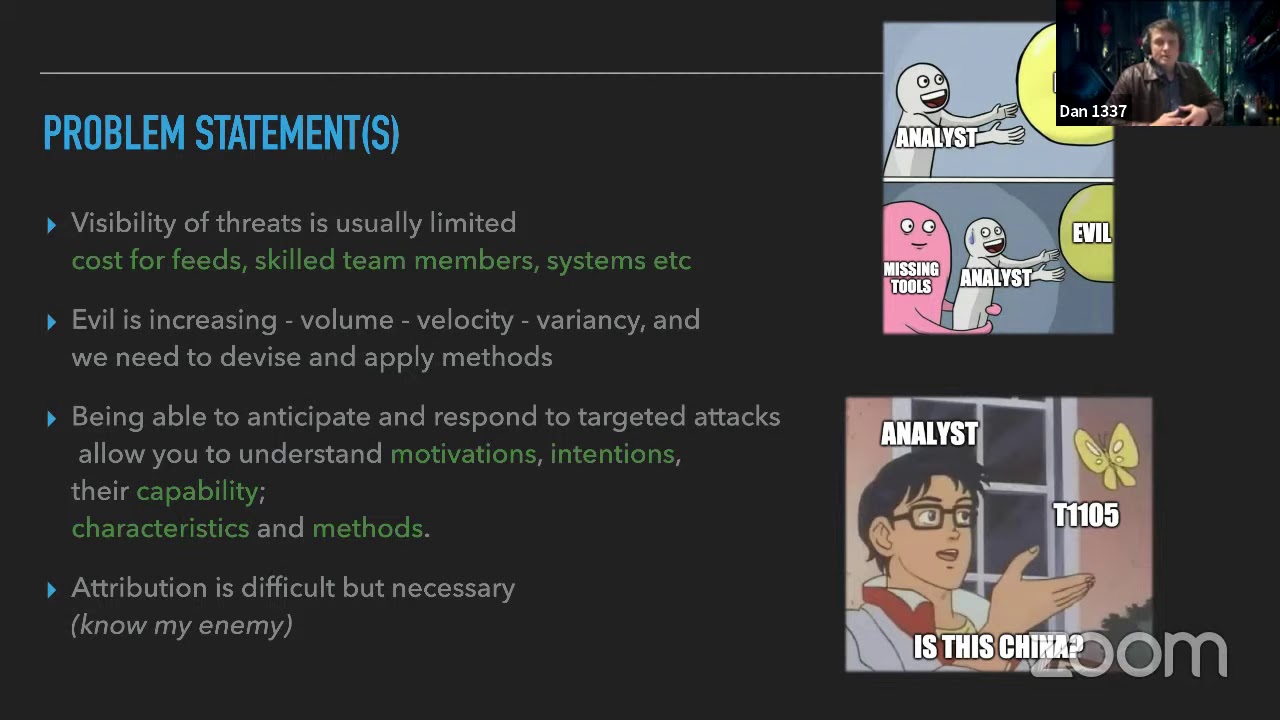

So, an introduction to honeypots. Actually, nobody has mentioned Sun Tzu before, which was unexpected. So I will do that, and I will quote him: "If you know the enemy and know yourself, you need not fear the result of a hundred battles." That is actually what honeypots are about: knowing the enemy and yourself. Lance Spitzner, the founder of the Honeynet Project, says that a honeypot is a security resource whose value lies in being probed, attacked, or compromised. In essence, that means that we are placing an intentionally vulnerable fake system in our network that is designed to trap attackers. In this way, we are defending ourselves with deception, and we can get insight about the attackers and learn their techniques and our vulnerabilities.

Some history of honeypots. The first published work was Clifford Stoll's The Cuckoo's Egg, where Clifford Stoll, an astronomer at Berkeley Lab, found an intruder in his system named Hunter, and instead of shutting him out he decided to monitor him and see what he was doing there. Then the first available commercial solution was Fred Cohen's Deception Toolkit, and the real breakthrough came in 1999 with the formation of the Honeynet Project and their series of papers, Know Your Enemy. But why use a honeypot? Reports suggest that the mean time to detect a breach is 206 days and the mean time to respond to it is 55 days. By using a honeypot we can cut this time short and also prevent, mitigate, or respond to an attack more effectively.

Another role is to distract attackers and waste their time, so in this way we're also defending ourselves. We could say that there are two types of honeypots, production and research. Production honeypots are placed in organizations in order to mimic a real system and in this way lure attackers and distract them from the real target. Most commercial solutions are of this type. Research honeypots are placed in the wild in order to gather information about the attackers and find new techniques. Of course, honeypots should not be used alone; they should be used as a complement to intrusion detection systems or IPSs, and firewalls, of course.

Another categorization of honeypots is based on the level of interaction. There are low-interaction honeypots, which are really easy to install and configure. They emulate services by using scripts, Python scripts most of the time, and they respond to the attackers in a predetermined manner. The downside of this is that they gather a limited amount of information. Then there are medium-interaction honeypots, which emulate applications like web servers. They are more complex, and they are able to capture malware and learn more about their attackers. Lastly, there are high-interaction honeypots, which are real machines. They are running a real operating system. Nothing is restricted, so there is an immense amount of information available, and hopefully they have no production value while they're deployed.

So in our work we used three honeypots. The first one is Cowrie. Cowrie is a medium-interaction SSH and Telnet honeypot.

It's written in Python by Michel Oosterhof and it's the successor of the Kippo honeypot. It provides the attacker with a fake filesystem and a shell, and in this way we can log the attacker's commands. Also, it saves the files that are downloaded using wget, and it has support for SFTP. The output is logged in JSON format, and it also keeps the session logs. Here I have an example of the gathered logs.

We can see the attack play out as if it were happening in real time. From the speed of the attack we can tell that there is an automated actor running it. Also, down here among the commands, we can see that there is an unknown keyword, the G, W, W, etc., which is a dummy input used to see whether the last command has been executed. This attack is trying to make our box part of the Mirai botnet, but in our case it failed to do so, unfortunately.

Then there's Dionaea, which is named after a carnivorous plant. It's as carnivorous as the name suggests. It is a low-interaction honeypot according to its developers. It emulates protocols such as SMB, FTP, HTTP, HTTPS (it does that by generating a self-signed certificate on startup), MySQL, SIP, and many more. It also uses Python as its scripting language. And it uses libemu to detect shellcode by running the gathered payload in its own VM and recording the API calls and arguments. It can also provide a shell when needed.

Then lastly, there's Glastopf, which is a low-interaction web application honeypot. It's also written in Python. This honeypot does vulnerability type emulation; that is, it has no preconfigured vulnerabilities, but it responds to the attackers in a manner that makes them think the machine is vulnerable. It has some built-in emulations like remote file inclusion, local file inclusion, and HTML injection via POST requests, and it continuously expands the attack surface by extracting keywords from the attacks and feeding them to crawlers, to lure hackers who use search engines.

Okay, now, honeypots are very popular, but they are sometimes difficult to implement and manage. So our working solution is to use Docker. Docker, for those of you who don't know, uses containers, which are virtual environments. They are more lightweight than virtual machines, as they don't need a copy of the guest operating system; they use the kernel of the host operating system. Containers can also share libraries between applications. Also, our containers, that is, the honeypots, are running as processes in user space, and as mentioned earlier, this may be insecure most of the time. So to tackle that, we use Docker Compose. Docker Compose is an orchestration tool that uses configuration files with key-value pairs. It's very easy to deploy a honeypot this way. We can see that there's some port forwarding being done here from the host to the container, and some volume mounting for data persistence.

And Docker Compose has a very simple interface; that is, it has basic commands like up to start containers, ps to see their state, and down to destroy them. Having said that, we wrote a script, run as a cron job, to recreate the containers every hour, and in this way be more secure and more resilient to attacks.
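The hourly recreation described above could look roughly like the following. This is only a minimal sketch, not the script from the talk: the compose file path and the function names are assumptions made for illustration, and it relies only on the `down` and `up -d` commands of the `docker-compose` CLI.

```python
import subprocess

# Hypothetical location of the compose file that defines the honeypot containers.
COMPOSE_FILE = "/opt/honeypots/docker-compose.yml"


def recreate_commands(compose_file):
    """Return the two Compose invocations that tear down and restart the stack."""
    base = ["docker-compose", "-f", compose_file]
    return [
        base + ["down"],      # stop and remove the running containers
        base + ["up", "-d"],  # create fresh containers in detached mode
    ]


def recreate(compose_file=COMPOSE_FILE, run=subprocess.run):
    """Run each command in order; check=True raises on the first failure."""
    for cmd in recreate_commands(compose_file):
        run(cmd, check=True)
```

A small script that calls `recreate()` could then be scheduled with a crontab entry such as `0 * * * * python3 /opt/honeypots/recreate.py` (the script path is, again, hypothetical). Data written to mounted volumes survives the recreation, while anything an attacker changed inside the container is discarded.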

Using Docker, we have achieved a very low load. We can see that the load average is 1.12, and we can also see the processes that I mentioned earlier.

As far as detection is concerned, we can see that running Nmap against our honeypot system with the default values doesn't return any result, but if we ask for the service version, we can see that Dionaea is found.

On the other hand, Shodan doesn't see our system as a honeypot. Those are inherent limitations of honeypots. Actually, they have been reported in talks like Breaking Honeypots for Fun and Profit. And there are some mitigations in order to avoid detection.

So, how did we analyze our data? We used the Elastic Stack. The Elastic Stack consists of three parts. Firstly, there's Elasticsearch, which is the core of the stack. It's a full-text search engine. It is distributed and highly scalable. Also, it exposes a REST API to interact with it. Then there is Logstash, which does the ETL: extract, transform, and load. It can read from multiple inputs, that is, all our honeypots. It does that by streaming files into its input, like tail -f, and it can also use filters like grok in order to convert unstructured text to structured data. It can also do GeoIP lookups, and we did that using MaxMind's database. Lastly, Kibana is our visualization platform. It can deliver reports and make visualizations as well as dashboards, and it can get data from Elasticsearch in real time.

Now, our system looks a bit like this. We deployed a virtual private server on the Greek Research and Technology Network, so we were assigned a Greek IP. We captured the data and shipped it to Logstash. We did the processing offline, but we could choose to do it online, of course. Logstash transformed our data and fed it to Elasticsearch, where it was indexed, and it was visualized in Kibana.
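To illustrate the unstructured-to-structured step that a grok filter performs, here is a rough Python analogue using a regular expression with named groups. It is a sketch under stated assumptions, not the Logstash configuration from the talk: the log line format, the field names, and the sample line are all made up for the example.

```python
import re

# Assumed raw format: "<timestamp> <source IPv4> <HTTP method> <path>".
LINE_PATTERN = re.compile(
    r"(?P<timestamp>\S+)\s+"
    r"(?P<src_ip>\d{1,3}(?:\.\d{1,3}){3})\s+"
    r"(?P<method>[A-Z]+)\s+"
    r"(?P<path>\S+)"
)


def parse_line(line):
    """Turn one raw log line into a dict of named fields, or None if it does not match."""
    match = LINE_PATTERN.match(line)
    return match.groupdict() if match else None


def structure(lines):
    """Keep only the lines that match, as a list of structured events."""
    events = []
    for line in lines:
        event = parse_line(line)
        if event is not None:
            events.append(event)
    return events
```

For example, `parse_line("2018-04-15T10:00:00Z 203.0.113.7 GET /phpmyadmin/")` yields a dictionary with the keys `timestamp`, `src_ip`, `method`, and `path`, which is the kind of document that can then be enriched (for instance with GeoIP data) and indexed.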

Now, for the analysis of the malware captured by Cowrie and Dionaea, we used VirusTotal. They gave us access to their academic API, and we thank them for that. Practically, they gave us an unlimited number of requests; we never got over the limit. And we wrote a script to check whether the samples had already been seen by VirusTotal, or else to upload them.
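The check-or-upload logic of such a script can be sketched as follows. This is an illustration only: `lookup` and `upload` are hypothetical callables standing in for the actual VirusTotal API requests, which are deliberately left out here, and the choice of SHA-256 as the lookup key is an assumption.

```python
import hashlib


def sha256_of(data):
    """Hex digest used as the lookup key for a sample."""
    return hashlib.sha256(data).hexdigest()


def check_or_upload(sample, lookup, upload):
    """Look a sample up by hash and upload it only if it is unknown.

    lookup(digest) is assumed to return a report, or None if the hash is unknown.
    upload(sample) is assumed to submit the raw bytes for analysis.
    """
    digest = sha256_of(sample)
    report = lookup(digest)
    if report is not None:
        return ("known", digest, report)
    upload(sample)
    return ("uploaded", digest, None)
```

Looking up by hash first avoids re-submitting binaries that the service has already analyzed, which matters most when the same worm payload is captured thousands of times.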

So, on the results side, we let our system run for 60 days, from the 15th of April to the 15th of June, and we have seen these results. We captured a large number of events: Dionaea got almost one and a half million, then Cowrie had 1,002,000, and then Glastopf had 8,000. It was not very popular.

Okay, here's a histogram for our Cowrie honeypot. It's not shown very well here, but there are the unique IPs. We can see that they are only weakly correlated with the number of events. That is, each attacker tried more than one username and password combination in order to SSH in. We can also see a spike here: up to this date, we were forwarding port 2222 to Cowrie, and then we changed it to 22, so more actors were able to scan it. Then there's Dionaea. We can see that the correlation between the unique IPs and the number of events is lower. And we can see here a spike that is from the initial scanning that's being done on the internet every day.
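The relationship between daily unique IPs and daily events that these histograms show could be quantified along the following lines. This is a minimal sketch, assuming each event has already been reduced to a `(day, source_ip)` pair; the function names are illustrative and the Pearson coefficient is one possible measure, not necessarily the one used in the thesis.

```python
from collections import defaultdict
from math import sqrt


def daily_counts(events):
    """events: iterable of (day, src_ip). Returns [(day, n_events, n_unique_ips)] sorted by day."""
    per_day_events = defaultdict(int)
    per_day_ips = defaultdict(set)
    for day, ip in events:
        per_day_events[day] += 1
        per_day_ips[day].add(ip)
    return [(d, per_day_events[d], len(per_day_ips[d])) for d in sorted(per_day_events)]


def pearson(xs, ys):
    """Pearson correlation coefficient; undefined (division by zero) if either series is constant."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)
```

Feeding the per-day event counts and unique-IP counts from `daily_counts` into `pearson` gives a value near 1 when the two series move together, as described for Glastopf, and a lower value when a few sources generate most of the traffic.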

On Glastopf, on the other hand, we can see that the number of attacks is almost one-to-one with the unique IPs. We should of course consider that there are few samples here. Okay, here's a map of the last hop of the attacks. We say last hop because we can't possibly know whether the system targeting us has already been compromised and is being used as a relay. And of course we're relying on MaxMind's database to do the GeoIP lookups. So, we can see that the majority of attacks on Cowrie came from Ireland, almost half. And then there was Russia and others. Then on Dionaea, the countries are more evenly distributed. We can see that Vietnam accounted for almost one fifth of the attacks, and then Russia, India, and Indonesia.

And lastly, in Glastopf, China has an overwhelming 56%, followed by Hong Kong with 10%. But again, the number of events is not very big.

Some usernames used on our Cowrie sensor: we can see that root has the majority of the events. We can also see some interesting ones like elasticsearch, admin, hadoop, et cetera. And on the password side, we can see that admin is the most successful one. The combination root and admin gave access to our SSH honeypot, so we expected that. We can also see some interesting ones here, raspberry and raspberryraspberry993311. That's a malware attacking Raspberry Pis all over the world and trying to add them to a botnet, as reports suggest.

So, some commands found by Cowrie. We can see that the majority are trying to find out what our system is about, like uname, cpuinfo, lscpu. And because, as we said, the honeypot responds in a predetermined manner, they then try to clear their tracks by clearing the history, etc. On Glastopf, the most requested URL was the index one, obviously, and then there were some attempts to access phpMyAdmin or MySQL admin and some other admin pages.
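Tallies like the username, password, and command counts for the SSH honeypot can be derived from its JSON log with a few lines of Python. The sketch below assumes one JSON object per line and assumes the event and field names (`eventid`, `username`, `password`, `input`, and the `cowrie.login.*` and `cowrie.command.input` event ids); check them against the log of the deployed version before relying on them.

```python
import json
from collections import Counter


def tally(lines):
    """Count usernames, passwords, and commands in JSON-lines honeypot output."""
    usernames, passwords, commands = Counter(), Counter(), Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        event = json.loads(line)
        event_id = event.get("eventid", "")
        if event_id.startswith("cowrie.login."):
            # Both successful and failed login attempts are counted.
            usernames[event.get("username")] += 1
            passwords[event.get("password")] += 1
        elif event_id == "cowrie.command.input":
            commands[event.get("input")] += 1
    return usernames, passwords, commands
```

Calling `usernames.most_common(10)` on the result gives the kind of top-ten list that was shown for usernames, and the same applies to the password and command counters.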

The overall majority of connections came to Dionaea and to the SMB protocol, with a count of almost one and a half million, and then there was the EMP protocol with 50,000 events.

Now, on the malware analysis: as mentioned earlier, we used VirusTotal. We captured 9,248 unique samples; we hashed them in order to make sure they are unique. And as Dionaea presents itself as a Windows XP system, the majority were Win32 DLLs. That was also expected.

Some information from VirusTotal: we can see that 9,100 are portable executables, captured by Dionaea, but we have some Linux ones captured by Cowrie.

Also, again, the majority exploited CVE-2017-0147, and we'll see a little about it. And one third of the malware had already been seen and tagged as found by a honeypot in VirusTotal's database.

CVE-2017-0147 tries to obtain information from process memory, and as they succeed, since obviously it is a honeypot, they send us, in the majority of the cases, ransomware, with WannaCry being the most widespread.

Now, some future work we are trying to do. We are thinking of deploying a sensor in a different country, in order to correlate attacks between the one here and the one there. And we're also going to analyze the malware in more depth, especially the samples that are not recognized in VirusTotal's database.

So in closing, we have discussed what honeypots are about and why we should implement them in an organization, we have presented our working approach using Docker in order to easily manage them and have a low footprint on the system as well as being portable, and we have talked about the insights generated to date. I would also like to reference some work: one is by Lance Spitzner, the book Honeypots: Tracking Hackers, which we used to familiarize ourselves with honeypots, and also the work done by DTAG, which gave us the initial idea and laid the ground for our work. I would also like to thank Dr. Nicolas Sklavos for his time and advice, the developers of the honeypots, as well as VirusTotal, and of course the team of BSides, the volunteers, and everyone that made this event happen today. Thank you.