Securing Fast and Furious DevOps Pipelines



Good evening, everybody, and welcome to BSides Las Vegas Proving Ground. This talk is Securing Fast and Furious DevOps Pipelines by [name unclear]. A few announcements before we begin. We would like to thank our sponsors, especially our Inner Circle sponsors Critical Stack and Valimail, and our Stellar sponsors Cylance, Microsoft and [unclear]. It is their support, along with our other sponsors, donors and volunteers, that makes this event possible. These talks are being streamed live, and as a courtesy to our speakers and audience we ask that you check to make sure your cell phones are set to silent. If you have a question, use the audience microphone so that YouTube can hear you. Please raise your hand and I'll

bring it over. Okay, let's get started. So first I'm going to ask you to fasten your seatbelts, because this talk is about securing fast and furious DevOps pipelines. I thought it would be interesting to start by sharing a personal story that I hope will resonate with many of you. The names will stay unnamed, but it goes something like this. We are about to release our new application, but we decide to run a penetration test first, to see if we have any serious issues that we need to fix. Unfortunately, the penetration tester was able to breach our application by exploiting an outdated version of a specific library. So, to the dev team:

could you please update the library [version unclear] to the latest version? And the answer is: no, sorry, that would take weeks, we don't have the resources to do that. So we have to ask how this happened. In this particular case, it was a dependency that is closely tied to other dependencies and used by hundreds of services, some of which have not been updated in years. Updating the library therefore means touching a huge part of the application, which is frankly a time-consuming task. So the main problem is that we wish to fix this issue, but it is too late and too complicated to fix at this level and at this stage. And this is mainly one of

the many situations where security automation can really help us, because what we want is early feedback about this kind of security issue. We will see in the coming slides how we can do that. We don't want to rely any more on manual security activities, because they are not designed to operate at scale and they are just bottlenecks for bug fixes and new features on their way to production. With the push for faster and more furious DevOps deployments, the bottleneck is getting even worse. Moreover, you don't want to get into the kind of situation where we have to accept the risk because, as you may know, the business goals always

win versus security needs. We are also maybe tired of having the same basic issues again and again after each penetration test, especially the low-hanging fruit that can sometimes be identified by script kiddies and exploited using automated tools. We don't want this to happen again and again. So this talk is mainly about how we can avoid this kind of situation, and we will see how to reuse the same concepts and tools that are already used by DevOps teams in order to keep up, or catch up, with the security of the DevOps CI/CD pipelines. So let's see if we can catch them. OK, so briefly, my

background: I'm from [company name unclear], a consulting firm in Paris, but I work as an application security lead at Amundi, which is an asset management company. I'm also an Offensive Security Certified Professional (OSCP). I have also contributed a lot to the OWASP community, mainly to the Mobile Security project, and I also shared the tool that we are going to see in this presentation. You can reach me on Twitter or LinkedIn. As you may have noticed, my main background is in offensive security, but moving to my current role as an application security lead, I figured out that as a penetration tester, or as an offensive guy, the service that I wanted to provide, or did provide,

to my clients was all about breaking stuff and offensive security. At some point I figured out that this doesn't really help my customers improve the security posture of their applications. So I considered moving to this more defensive role as an opportunity to work closely with developers, in order to figure out how we can embed security at scale in a developer-friendly manner. OK, so let's first take a look at the most important security challenges that we may face when we want to automate security testing. The first one is implementation speed. As you may know, development these days is moving completely to rapid agile approaches and shipping

things more frequently, and as we discussed before, manual security checks just cannot remain tenable as a way of working with modern teams. They are not even designed to work at scale. Then we have integration, which is very important. As the security team, we need to admit that the dev teams already have their own processes and tools, for example to manage the workload and automate the building and unit testing of their applications. So the security team needs to take on these tools and processes and figure out how to integrate into all of them. We also have ease of use: the solution that I want to provide must be something simple to use and

inject into any kind of software development supply chain. Then we have accuracy. One of the big no-nos for security tooling, as you may know, is false positives. So we need to figure out how to manage these false positives and how to enhance the capabilities of our scanning engines in order to reduce them. Then communication: we have to provide key metrics, first about the ongoing scanning activities, but also about the security level of our application through the whole development lifecycle. And finally we have portability: I want the solution that I provide to work on Jenkins, on Travis, on GitLab or on any CI/CD engine. So what kind of security

testing tools can we use inside our organization? There are mainly four categories. The first one is software composition analysis. It helps us track the dependencies that we are using inside our application. For example, it's really helpful to know which of the dependencies you are using already have known vulnerabilities and, if that's the case, how severe they are. This basic control can be really helpful in the situation we discussed at the beginning of this presentation. Then we have dynamic scans, which are a kind of gray-box testing methodology. They try to simulate live attacks by sending malicious HTTP requests and analyzing

the response of the application. Then we have static analysis, which takes as input the source code of the application, or the binaries, and tries to build what we call an intermediate code representation, like the data flow or the control flow. It then walks all possible execution paths in order to identify, for example, common weaknesses related to input validation, broken cryptography and so on. And finally we have the interactive testing technology or tools, which are considered the next big thing for automated security testing. The idea is to combine the first three technologies in order to provide more

accurate results, and they have some kind of agents deployed on the QA servers in order to monitor the execution, or the runtime, of the application and correlate results from these scanners in order to improve the coverage of the security tests. Of course, there are pros and cons to each technology. For example, in terms of actionability, developers tend to prefer SAST tools because they point right to where the issue needs to be fixed, so it's easy for them, but in terms of relevance they may generate a lot of false positives. Then we have dynamic application security testing, which in terms of relevance provides more

accurate results because it focuses on the front end of the application, so the false positive rate is very low compared to static analysis. But the problem with this kind of tool is actionability: the only information it provides about each issue is the URL where the vulnerability was identified, so the developer needs to figure out by himself which code is implicated in each vulnerability, which can be an overwhelming task. And finally we have interactive application security testing, which tries to combine the advantages of each of these solutions in order to provide more relevant and more actionable results to the developers. And

in case you are hesitating over which one to deploy inside your organization, you can use this awesome open source project. It's called the OWASP Benchmark. Basically, it benchmarks different security testing tools based on their scanning results, and it can tell you which tool is the most convenient for your company and the context of your organization. You can check the online documentation on how to use this tool in order to figure out, as I said, which tool fits your organization. So let's move on to the most interesting part. We will see how to

integrate all these security testing tools inside an existing CI/CD pipeline. This was my first implementation. It's a very basic one: for example, if you want to run a dynamic security scan using ZAP, you just have to install a plugin inside Jenkins, it will communicate with your ZAP instance, and you can add the scanning activity as a build step. So, the takeaways of this first solution. The first one is that it works, which is a very important takeaway, I would say, and it's something very easy to begin with and to operate. But the main problem is this: suppose that you have some build agents for your Jenkins or GitLab CI/CD engine. You

don't want to occupy a build agent with scanning, for example with an OWASP scanning process, because it may break the velocity of your CI/CD engine and developers may end up being unable to build their own applications. So that's the first problem. The second is portability. Suppose that your dev team decides one day to move to a completely different CI/CD engine, or maybe they just want to do some upgrades. As the security team, you have to reconfigure everything from scratch, which can be a very overwhelming task. So I solved the first problem by wrapping my security scanners into Docker images. This solution was even easier than the first one: it is just a

docker run command that you have to add to your Jenkinsfile, for example in order to run a new ZAP scan. The good thing, first, is that we solved the portability issue: we just have to build, keep and configure our security image, and we can use it basically from everywhere. It was also easier than the first solution: we don't have to install anything inside our CI/CD engine, it is just a docker run command. When we have two or three containers, it is not something difficult to manage, but when you have multiple dev teams with multiple scanners, the management of your Docker

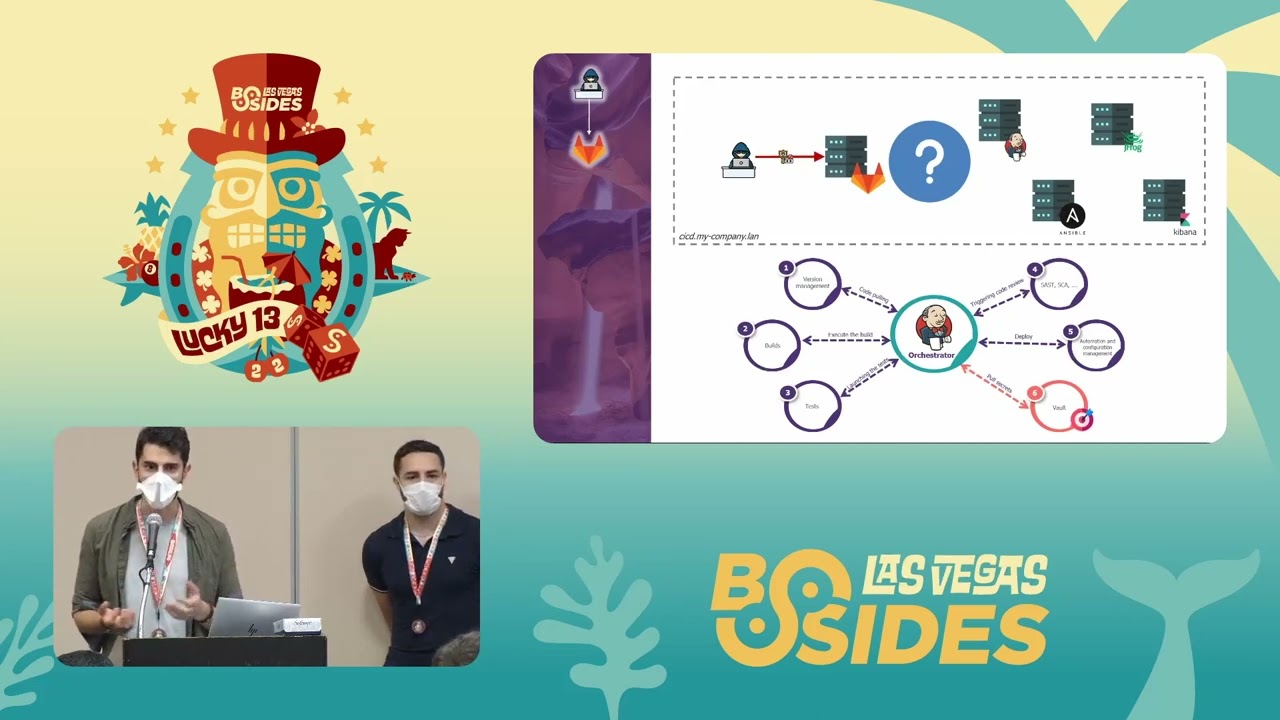

images becomes something very difficult, and in general, when you are operating at scale, the orchestration of your scanners and your images becomes essential. So we started to think about a more convenient solution in order to solve these two problems, and this is where we moved to a Docker container, or scanner, orchestration solution. The main goal was to solve the performance issue by moving to a container orchestration solution. So this is the final architecture that we came up with, and basically... OK, perfect. So this was the final architecture that we came up with, which can be simplified as follows. I will try to explain each component of this solution.
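The containerised scan step described in the talk, a single docker run command added to the pipeline, could be sketched as follows. This is a minimal illustration and not the speaker's actual configuration: the function name, the image name `registry.example.internal/security/zap:stable` and the report path are hypothetical, and it assumes the image ships ZAP's `zap-baseline.py` script with its `-t` (target) and `-J` (JSON report) options. The sketch only builds the command line; a pipeline step would then execute it.

```python
import shlex


def build_zap_scan_command(image, target_url, report_dir):
    """Build the argv for a containerised ZAP baseline scan.

    Assumptions (not from the talk): the image name is a placeholder for an
    internally built scanner image, and /zap/wrk is the working directory
    where the scan script writes its report.
    """
    return [
        "docker", "run", "--rm",            # throwaway container per scan
        "-v", f"{report_dir}:/zap/wrk:rw",  # mount a host dir for the report
        image,
        "zap-baseline.py",
        "-t", target_url,                   # application under test
        "-J", "report.json",                # JSON report inside /zap/wrk
    ]


command = build_zap_scan_command(
    image="registry.example.internal/security/zap:stable",  # hypothetical
    target_url="https://staging.example.internal",          # hypothetical
    report_dir="/tmp/zap-reports",
)
# A pipeline step could run this with subprocess.run(command, check=False)
# and archive the report; here we only print the resulting command line.
print(shlex.join(command))
```

Keeping the command in one place is what makes the approach portable: any CI/CD engine that can start a container can run the same scan.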

So first we have the scanner orchestration layer, which can be used to distribute the scanning workload across the Kubernetes cluster. The only thing that your CI/CD engine effectively does is schedule a pod with a microservice in it, which will handle the scanning activities. Then we have the scanner registry: we use our internal registry to store our security images. The purpose was to unify how we use and run our own security tools, and this was very helpful for the security team because it allows us to keep making changes to the Docker images, which are instantly applied organization-wide. And in order to make it

easier for the dev teams to use these scanners, we defined some kind of shared libraries for security tooling. The purpose is to provide a high-level definition of what I want to provide as a scanning service, and this is actually the small piece of code that a dev team needs to add to its Jenkinsfile, for example to run a static analysis using Checkmarx. This was something super easy because they don't have to worry about the configuration and so on. I think this is the foundation of SecDevOps: we use DevOps tools in order to secure a DevOps process. Then we have the vulnerability management tools. This layer will take care of the

management of our issues, because simply, when everything is critical, nothing is critical. The vulnerability management tools allow us to import results from different scanners, and they also have some nice functionality to handle false positives, for example false positive history. Then, at some point, the scanning activity may generate a lot of logs, and you may want to dig into these logs to see, for example, why a scan was aborted or why a control failed, or you may just want to build your own metrics and visualizations. This is where the Elasticsearch stack comes onto the scene. We use this infrastructure to build some kind of SOC

but for application security. The idea is to ship logs directly to Logstash, where we can do any kind of normalization: we can normalize, we can filter, we can manipulate the risk value and use our custom risk metrics. Then we push the results to Elasticsearch as JSON data, and finally we have some pre-built dashboards that visualize our scanning results. This is an example of a visualization; it's basically used to track the risk over time. Let me just quickly show you the interface. This is the supervision interface. On the left we have the SAST results, and then we have the DAST results. The first graph is for monitoring or

tracking the issues over time. Then, for example, we can see which files were identified as the most vulnerable during the scanning activities, and we can also see the top 5 vulnerabilities that we have identified, et cetera. You also have the repository, with a guideline on how to set up this framework, so I suggest you fork this project, try it yourself and submit issues if you have them. OK, so moving to the Docker ecosystem and an orchestration solution really helped us resolve all the problems that we faced before, and as a result we had a scalable and high-performance architecture that will help

us distribute the scanning workload across the whole cluster, and we have less to configure on the deployment server: it is just the mutual TLS authentication that you have to configure between your CI/CD engine and the Kubernetes cluster in order to use this framework. We still have a few problems and some room for enhancement. The Docker images are still heavy in terms of size, so we are planning to move everything to Alpine in order to optimize memory resources. We also want to explore or build more visualizations in the Elasticsearch stack in order to keep track of the scanning activity. So the next step, as I said, would be to explore more

functionalities of Elasticsearch, enhance the fuzzing capabilities of our security testing tools and get more detailed logs. We also plan to customize our training program based on the results of the scanners, and to establish a solid knowledge base of secure code snippets. So that's it, and thank you for your time.
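The log pipeline described in the talk (normalize, filter, adjust the risk value, push the results as JSON) can be illustrated with a small sketch. In the talk this work is done in Logstash; the Python below is only a stand-in for the idea. The field names, the severity-to-risk mapping and the function names are all hypothetical.

```python
import json

# Hypothetical custom risk metric: map each scanner's severity label
# onto one numeric scale shared by all tools.
SEVERITY_TO_RISK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


def normalize_finding(scanner, raw):
    """Convert one raw scanner finding into a common document shape."""
    severity = str(raw.get("severity", "info")).strip().lower()
    return {
        "scanner": scanner,
        "title": raw.get("title") or raw.get("name", "unknown"),
        "location": raw.get("file") or raw.get("url", ""),
        "severity": severity,
        "risk": SEVERITY_TO_RISK.get(severity, 0),  # unknown labels score 0
    }


def to_json_documents(scanner, findings, min_risk=1):
    """Normalize findings, drop those below min_risk, and serialize to JSON."""
    docs = (normalize_finding(scanner, f) for f in findings)
    return [json.dumps(d, sort_keys=True) for d in docs if d["risk"] >= min_risk]


raw_dast = [
    {"name": "Reflected XSS", "url": "https://app.example/search", "severity": "High"},
    {"name": "Server banner disclosed", "url": "https://app.example/", "severity": "info"},
]
documents = to_json_documents("dast", raw_dast)
for line in documents:
    print(line)
```

Each resulting document has the same shape whichever scanner produced it, which is what makes a shared dashboard across SAST and DAST results possible.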

We only have time for a couple of questions. OK, this is pretty cool. I noticed that your process starts with Jenkins, so my question is how you start the process with Jenkins. Do you have a hook in GitHub, so that when an engineer pushes code it gets triggered and then evaluated? How does that work, does it require human interaction? I missed that part. So yeah, as we saw at the beginning, we have defined Groovy functions, for example to run any kind of security scan, so you just have to put these three lines of code into your Jenkinsfile, and it can be run in

parallel or even after a hook in GitLab. You have the choice to put this anywhere you like. The idea is portability: we don't have a specific configuration to run after a webhook or after a specific step. OK, but how do you avoid an engineer not putting that library in their code? Because in our case, for example, every time something gets pushed into [unclear], it will not even get evaluated by another engineer, so it will not get to master unless it passes the Jenkins test. Does that make sense? Because an engineer will just avoid putting in this code because he knows

that his code sucks. So how do you avoid that? It depends on the solution you are using. For example, if you are using SonarQube, you can define what we call security profiles, which are related to security gates, and in the security gates you can define, for example, that if the risk score is high we will stop the build. So it really depends on which environment you are using. OK, a round of applause for the speaker, please.
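The security gate idea from the answer above, stopping the build when the risk is high, can be sketched independently of any particular product. This is not SonarQube's API; the severity scale, the `evaluate_gate` function and the finding format are hypothetical and only illustrate the decision a gate makes.

```python
# Hypothetical severity scale, ordered from least to most severe.
SEVERITY_RANK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


def evaluate_gate(findings, fail_on="high"):
    """Return (passed, blocking): the build passes only when no finding
    is at or above the fail_on severity."""
    threshold = SEVERITY_RANK[fail_on]
    blocking = [
        f for f in findings
        if SEVERITY_RANK.get(f.get("severity", "info"), 0) >= threshold
    ]
    return len(blocking) == 0, blocking


findings = [
    {"title": "Outdated library with known vulnerability", "severity": "high"},
    {"title": "Missing security header", "severity": "low"},
]
passed, blocking = evaluate_gate(findings, fail_on="high")
# A pipeline step would exit non-zero when the gate fails, which stops the build.
exit_code = 0 if passed else 1
print(f"gate passed: {passed}, blocking findings: {len(blocking)}")
```

Making the threshold a parameter lets each team pick how strict the gate is, for example `fail_on="critical"` while a backlog of older findings is being worked through.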

[Applause]