Lessons Learned While Building a Privacy Operations Center at Headspace Health

My name is Shobhit, and today I am going to talk about lessons learned while building a privacy operations center at Headspace Health. Before I jump into the talk, a bit about me: I am currently the security and compliance director at Headspace Health. Before that, I had security and GRC roles at HSBC, Deutsche Bank, and [inaudible] Investments. I did my master's in information assurance and cybersecurity at Northeastern University in Boston, and I'm highly interested in healthcare compliance, privacy, running, and writing. If you have similar interests, please feel free to connect after the talk and we can have a very good conversation.

So what are the goals for the talk today, and why are we here to speak about a privacy operations center in the first place? The first goal is to talk about why you would build a dedicated POC at all. Second, I want to talk about some of the considerations for building a privacy operations center. Lastly, I want to leave you with some guidance on initiating successful privacy programs across multiple orgs, be it engineering, privacy, product, or legal. Along with the goals, I have a few assumptions: that you have some familiarity with privacy laws such as GDPR, CCPA, and CPRA, and are aware of the requirements of HIPAA; that your role is at the intersection of privacy, GRC, product, and engineering; and lastly, that you have at least one security or privacy executive to garner support from other management teams.

Now, why do we need to build a privacy operations center in the first place? First and foremost, new legal and regulatory requirements are coming up all the time. If you're working in the industry, you are well aware of the different state laws from New York, Virginia, and of course California, plus GDPR and the requirements of HIPAA, all of which mandate that you work across the organization with different teams to fulfill them. Second, there are mandatory requirements from the app stores. On the screen I have two screenshots, one from Apple and the other from Google. Apple requires you to have an account deletion option in the app if you allow your users to create an account from the app itself. Google requires you to declare all the data you collect, including through your third parties, and how you share that data with third parties. Third, in the last quarter alone there were three FTC enforcement actions against companies in similar spaces to ours. The second screenshot is from the HHS website: as of April 10, 2023, 145 breaches had been reported there this year. Ending up on that list is not how organizations avoid financial and reputational losses, and once you have a POC it becomes a lot easier to manage these different compliance requirements. Lastly, there is always ongoing media scrutiny. The screenshot on the screen is from Mozilla, which publishes a report called *Privacy Not Included every year, scoring how applications perform on their privacy and security practices. This screenshot for Headspace is from last year, and the results were not very favorable for us. That is not how you want to run an application that millions of users use every single day: reports like this either help you earn the trust of your users or lose it.

With all that being said, and bringing all of this together, we truly believe that privacy is an existential risk for Headspace Health. If we don't have privacy at the forefront of everything we do, it's going to be extremely hard for us to compete and survive in this space. For that reason, in everything we do, from releasing a new feature to building a new product, privacy has to be at the forefront.

Before I jump into the privacy operations center, I always speak about what privacy is and how I look at privacy in the first place. First, privacy is a fundamental human right. Privacy in your product and application is not an add-on; it's a fundamental human right, and you have to take care of it. Second, it's a competitive advantage for organizations. I work with prospective customers all the time, and when we have conversations comparing us with our competitors, it becomes evident that privacy is a competitive advantage for us. If we show leadership and thought leadership in building these privacy practices, it's good for the organization. Lastly, it's a foundation of trust. We have millions and millions of users across the world, and if we don't have this foundation of trust with them, it's extremely hard for us to survive and grow.

How do we build this foundation of trust? It's an extremely simple process. First, we make promises, via our privacy policies, our terms of service, and our notice of privacy practices. Second, we keep those promises: we don't want to do anything that isn't aligned with the benefit of our customers or that doesn't enrich the experience of our members. That's how we build the foundation of trust: by making promises and then keeping them.

We should also talk about what privacy is not. First, privacy is not only security. Here's an example: say your data is encrypted with the best encryption methods in the world, but the encryption key is distributed to all the engineers. Is that data secure? Yes. But is it really private when all the engineers can access it? No. Second, privacy is not a zero-sum game. Privacy can, and needs to, coexist with innovation, efficiency, transparency, usability, and accountability. Many times we have contention with the product and engineering teams: "We want to build this feature, but we have to account for privacy." We always come to the conclusion that the two have to coexist; it's not either/or between privacy and usability. Can anybody give me an example of a company that does this really, really well? Apple, yes. Apple does this really well, and they are the hallmark for us in making sure we don't treat privacy as a zero-sum game. Lastly, privacy is not solely an individual's or an organization's responsibility. We need legal and regulatory frameworks to make sure boundaries are defined. We need industry best practices: there are a lot of best practices around security, but not around privacy. For example, if I want to share a piece of data with one of our research partners, how should I go about it? We still don't have an industry best practice for that. And we have to foster a culture of privacy awareness. We speak a lot about security awareness in organizations, because we want to prevent malicious insiders and make sure everyone is aware of the company's security practices, but we don't give enough importance to privacy awareness, which is equally important. In practice this means asking: do your members know which communication tools they can use when they need to share data with third parties? Are your employees aware of the research projects going on, and of how to anonymize data for research purposes?

Lastly, I want to speak a bit about the guiding principles of privacy before jumping into the POC. For everything we do at Headspace Health, we define principles first and then do the actual work. We have principles for our technology org and principles for our security org, and while we were thinking about building this privacy operations center, we realized we needed guiding principles too, so that whenever there is contention about which decision is right for us, we can come back to these principles for guidance.

The first one is consent. We want to be extremely specific in the consent we ask for, but at the same time give users the right to withdraw that consent. Second is accuracy: we want member information to be accurate at all times, and members should have the right to correct any incorrect information in our systems or databases. Third, we want to secure all our databases and make sure there is no unauthorized access, disclosure, or use, via physical, technical, and administrative safeguards. Fourth, we are accountable for complying with all legal and privacy requirements; there is no way around it. GDPR and CCPA require us to honor six or seven rights, and we want to provide all of those rights to our members. Next, we want to be extremely transparent about our data collection, use, and sharing practices: what data we collect from our members, what data we share with others, and so forth. Next, we want to make sure the information is kept confidential. Next, we want to embed privacy by design at the forefront of every system we develop. We truly believe privacy should not be an afterthought once the applications or systems are built; it has to be integrated with the SDLC, and I'll speak a bit more about how we can integrate the SDLC and privacy. If and when things go wrong, we want to make sure we have the resources and are prepared to inform members in a timely and transparent manner. We want to minimize the data we collect: we don't want any data that we won't use for the benefit of our members, which essentially means either giving them better recommendations in the Headspace app or improving their experience when they use the application. And lastly, if at some point we determine that data we've collected is no longer required, we need to delete it, or at least anonymize it, so that it doesn't hang around as a risk.
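The last two principles, minimizing collection and deleting or anonymizing data once it is no longer required, lend themselves to a periodic retention sweep. The sketch below is illustrative only and assumes a hypothetical `Record` shape, hypothetical purpose tags, and made-up retention windows in `RETENTION`; dropping direct identifiers is also a simplification of anonymization, which in practice has to consider re-identification risk as well.

```python
from dataclasses import dataclass, replace
from datetime import date, timedelta
from typing import Optional

# Hypothetical retention windows per collection purpose; real values would come from legal review.
RETENTION = {
    "recommendations": timedelta(days=365),
    "marketing": timedelta(days=180),
}

# Hypothetical fields treated as direct identifiers.
IDENTIFIERS = frozenset({"email", "name", "phone"})


@dataclass(frozen=True)
class Record:
    member_id: Optional[str]
    purpose: str          # why the data was collected, e.g. "recommendations"
    collected_on: date
    payload: dict


def sweep(records, today, anonymize_purposes=frozenset({"recommendations"})):
    """Keep records still inside their retention window; past it, either strip
    direct identifiers (where de-identified data is still useful) or delete.

    Returns (kept_records, deleted_count, anonymized_count).
    """
    kept, deleted, anonymized = [], 0, 0
    for record in records:
        window = RETENTION.get(record.purpose)
        if window is None or today - record.collected_on <= window:
            # Inside its window, or no rule defined yet; unruled purposes should
            # be flagged to the inventory owner rather than kept forever.
            kept.append(record)
        elif record.purpose in anonymize_purposes:
            stripped = {k: v for k, v in record.payload.items() if k not in IDENTIFIERS}
            kept.append(replace(record, member_id=None, payload=stripped))
            anonymized += 1
        else:
            deleted += 1  # leaving it out of `kept` stands in for the actual delete
    return kept, deleted, anonymized
```

Running such a sweep on a schedule, rather than once, is what keeps "delete when no longer required" from quietly decaying.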

now what constitutes a POC at the first place so while we were thinking about building this POC and there were like so many things that we could do we realized there were four things that we really need to do and we could just bucket all these things in this four pillars the first one is governance in management the second one is Partnerships the third one is tools and lastly security and awareness so every single thing that we were doing at headspace Health we wanted to have all of those bucketed in either of these four buckets over the next few slides what I want to do is maybe take some guidance from what we developed in our policies and

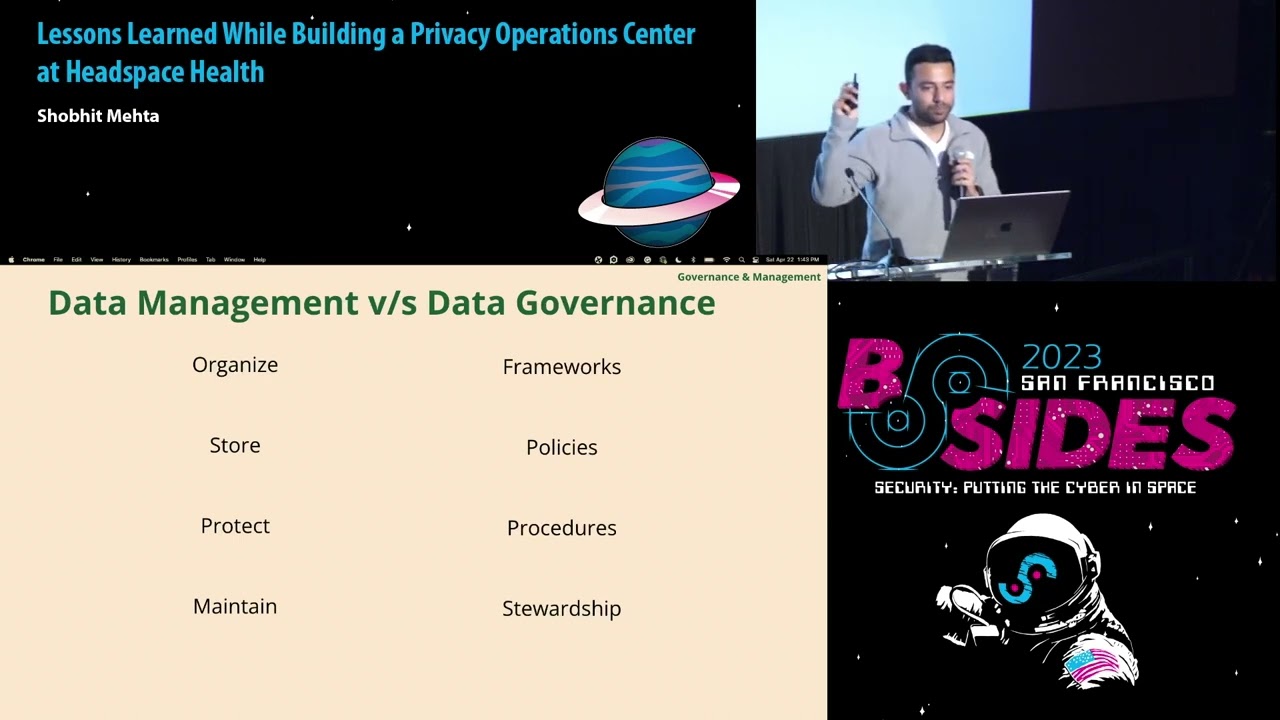

procedures and then go through each of these different buckets and how we can use that guidance for your data classification and your your price Operation Center the first one is data management versus data governance this is the base of everything that we do at headspace health by data management I mean that you have to have organized organization of your data you should have the storage of your data adequately protected you have to protect the data and then you have to maintain the data for adequate reasons and if I talk about data governance it essentially deals with Frameworks policies procedures and distribution now you can if I can sum up all the data governance points in one word

that would be strategic all these things are driven by strategy and if I can sum up all the things on the data management side that would be classification which is the base of every single thing that we do so what I'm going to do what the next few slides is to start from classification and then slowly transition into Data governance piece so for data classification this is the traditional data classification I'm sure you all must have seen this in some form or the other where you have public confidential restricted or highly restricted but this data classification did not fit our requirements because headspace health is extremely unique we are a Telehealth organization with two products headspace which is on the

consumer side ginger on the care side and this data classification just did not fit our requirements so in this case what we did was We Invented our own so this is the headspace Health Data classification on the First Column we have the parent classification which is sensitive private customer confidential company confidential research and public the second classification speaks to the child classification which is Phi SBI pii customer confidential and so forth and there are a few examples for each of these so right now here is on the screen you are seeing only two or three of these examples but when you are working with our engineering teams we gave them at least 15 examples of each so when they are

doing their data tagging for disa requests or when they are doing their data tagging for a deletion request it doesn't come as a surprise and they know exactly how to categorize and tag the data but data classification is incomplete without inventory we all know that you can have the best classification in the world but if you are not inventoring your data and you're not tagging your data it just doesn't make any sense it will just line a spreadsheet and nobody will use it but at the same time complete data inventory is extremely hard when we started doing this for the first time across the company we realized that this is such a critical problem for

organizations which are unique which are in healthcare or which are complying with different requirements uh at the same time such as gdpr and HIPAA which information you should delete which information you should hold it's just an extremely hard problem so what we did we had this initial milestone for the data inventory we wanted to have this nine columns just the name purpose internal external storage and the other columns fill this columns to the best of our abilities for all the data that we had and we thought that once we'll do this exercise to the best of our abilities it will make the other processes a lot smoother we did that and then we realized that

this only covered the information we have it did not cover the information that we were planning to uh collect by uh provide new features or via our new practices that we're releasing so we'll come back to this slide to tackle this problem that they we did the initial data classification but how should we keep this data inventory alive now relatedly I just have this one slide for these are requests for those of you who are not aware these SARS are data subject access rise request where users can come to you and then asked you to delete your information or delete their information ask further information and there are six additional rights that they can ask for
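A DSAR pipeline has to route each request against the data inventory and its classification tags, and for a telehealth company a deletion request cannot simply delete everything, because some records must be retained. The sketch below is a hypothetical illustration, not Headspace Health's tool: `DataItem`, `process_dsar`, the store names, and the rule that anything tagged PHI is retained rather than deleted are all assumptions standing in for the HIPAA retention obligations the talk refers to. Which records must be kept, and for how long, is a legal determination.

```python
from dataclasses import dataclass
from enum import Enum


class RequestType(Enum):
    ACCESS = "access"
    DELETE = "delete"


@dataclass(frozen=True)
class DataItem:
    store: str            # data store name as recorded in the inventory (hypothetical)
    classification: str   # child classification tag, e.g. "PHI" or "PII"
    field_name: str


# Assumption: PHI is under a retention obligation and is held back from deletion.
RETAINED_CLASSIFICATIONS = frozenset({"PHI"})


def process_dsar(request_type: RequestType, items: list[DataItem]) -> dict[str, list[DataItem]]:
    """Split a member's inventoried data into what to export, delete, or retain.

    Only items present in the inventory are considered, which is why a new
    third party has to be added to the inventory before it stores member data.
    """
    plan: dict[str, list[DataItem]] = {"export": [], "delete": [], "retain": []}
    if request_type is RequestType.ACCESS:
        plan["export"] = list(items)
        return plan
    for item in items:
        # Deletion removes everything except classes under a retention hold.
        bucket = "retain" if item.classification in RETAINED_CLASSIFICATIONS else "delete"
        plan[bucket].append(item)
    return plan
```

Returning a plan instead of deleting directly leaves room for a human review step and for telling the member which data was retained and why.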

While we were looking for a DSAR tool in the market, we realized there is no one-size-fits-all. If you are a telehealth company, you have to comply with GDPR and CCPA, but at the same time you also have to comply with HIPAA, which is quite different: HIPAA requires you to retain data for a period of time, and you can't delete that data no matter what. After the entire year we spent on demos and proofs of concept, we realized these tools were not the right solution for us, so we built our own solution for processing DSAR requests. This is the one point I want to highlight: if you are thinking about onboarding a tool such as OneTrust or Securiti, be extremely mindful that your use case could be very different from what they already serve, and it might make more sense to build your own tool rather than onboard one of those. And whatever you choose to do, keep it alive. If you are inventorying your databases, make sure the inventory stays alive. If you built your DSAR tool yourself, make sure your scripts stay up to date and collect or delete data whenever you start storing data in a new third party.

Coming back to the earlier slide, where we only covered and inventoried the data we already had: how do we go about inventorying the data we will collect in the future? For this phase, we realized that privacy impact assessments are extremely helpful when you're collecting new pieces of information from your members. But if I'm on the security team and I go to the product team and say, "Hey, I need to do a privacy impact assessment, can you help me out?", they'll say, "No, we have roadmaps for our quarter and we have to ship this as soon as possible." From my experience, any time the legal team is on your email chain, the entire conversation becomes a lot easier, so I would implore you to make the legal team your best friend for everything you're doing.

This is one of the procedures we developed across Headspace Health. We wanted to do a privacy impact assessment for each new feature, but we realized that unless we had a dedicated workflow for doing it with the product and engineering teams, it wasn't going to serve its purpose. On the screen right now we have three different buckets: product; engineering; and legal, privacy, and security. We recently rolled this out: every time we want to build a new feature at Headspace Health, it goes through these reviews. The product team builds their PRD and gives it to the engineering team, which creates a tech spec for that PRD. Both are submitted to the security, privacy, and legal teams for review. The legal team performs its own review, which essentially means checking all the regulatory requirements we need to fulfill. For example, if you want to roll out a new feature in France, the French authorities want the privacy policy and terms of service in French.
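The review workflow above (PRD and tech spec in, legal, privacy, and security reviews out, findings split into blockers and good-to-haves) can be modeled as a simple launch gate. This is a hypothetical sketch: `FeatureReview`, `Finding`, and `Severity` are invented names, and in the process described in the talk the same state lives in a Jira ticket rather than in code.

```python
from dataclasses import dataclass, field
from enum import Enum


class Severity(Enum):
    BLOCKER = "blocker"
    GOOD_TO_HAVE = "good_to_have"


@dataclass
class Finding:
    reviewer: str          # "legal", "privacy", or "security"
    summary: str
    severity: Severity
    resolved: bool = False


REQUIRED_REVIEWS = ("legal", "privacy", "security")


@dataclass
class FeatureReview:
    prd: str               # ID or link of the product requirements document
    tech_spec: str         # ID or link of the engineering tech spec
    completed: set[str] = field(default_factory=set)
    findings: list[Finding] = field(default_factory=list)

    def record_review(self, reviewer: str, findings: tuple[Finding, ...] = ()) -> None:
        if reviewer not in REQUIRED_REVIEWS:
            raise ValueError(f"unknown reviewer: {reviewer}")
        self.completed.add(reviewer)
        self.findings.extend(findings)

    def launch_blockers(self) -> list[str]:
        """Everything still standing between this feature and release."""
        pending = [f"{r} review pending" for r in REQUIRED_REVIEWS if r not in self.completed]
        open_blockers = [
            f.summary
            for f in self.findings
            if f.severity is Severity.BLOCKER and not f.resolved
        ]
        # Good-to-have findings go back to product but never block launch.
        return pending + open_blockers
```

Making "all three reviews completed" part of the gate, not just "no open blockers", is what stops a feature from shipping simply because nobody got around to reviewing it.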

Those are things we want to surface as early in the process as possible, rather than going to the product team at the end and saying, "We did all the development, but we still don't comply with all the legal requirements." The second is the privacy review. Here the privacy team makes sure that every single piece of PII or PHI we are collecting is cataloged, with a note on why we are collecting it, and if we need to minimize that data collection, we propose that to the product team. Lastly, the security team performs threat modeling on the PRD. When I receive a new feature request, I or someone on my team performs the threat modeling, and once the feature is developed we conduct an internal pen test. If you are releasing a feature that is going to affect millions of users, you don't want to roll it out until you've done an internal pen test. We created this entire workflow to make sure all of these pieces are covered. Once we're done, we propose our recommendations to the product team; some of them are blockers and some are good-to-haves. This has served us really well so far: we rolled out the process in April of this year, and since then we have pen tested three features and reviewed four PRDs and tech specs. It streamlines the process a lot when you have the entire chain in your Jira ticket.

While we want all these processes in place, we don't want our engineers left hanging and wondering what they are doing to embed privacy by design in the SDLC. Right now we use three tools for shifting our privacy focus from left to right. The first one is Privado. Every time a developer asks for a new piece of PII or PHI in the code, it asks for a reason: "I see you are collecting this piece of PII; can you give a rationale for why we need this information in the first place?" Second, now that we are more mature in our data classification and labeling, we moved to AWS Glue to automate all of this. Lastly, data governance, which is equally important. While we are planning to shift our privacy-by-design focus to the left, we realized it's extremely important to cover the right as well. Let me give an example. Say Headspace engineers want to access a database that belongs to Ginger. It makes no sense for somebody working on a different product to have access to another product's database. With the help of this tool, we created guardrails and policies so that every time this happens, we receive a notification, or their manager receives a notification saying, "This is happening; do you approve, or what's going on?" This is our effort at shifting privacy from left to right; it's still in the operationalizing phase, but we are really hopeful about it.

Another thing we are still trying to operationalize at Headspace Health is privacy threat modeling. We have a very good team doing security threat modeling, but we realized there is a growing need for privacy threat modeling as well. While I was researching privacy threat modeling papers, I came across this paper by Professor Hoepman, which divides privacy threat modeling into just two buckets. The first is data-oriented: minimize the data you are collecting, abstract the data you collect, make sure you are limiting the processing of the data, separate the processing of the data, and lastly, when you don't need the data any further, delete it or make it unlinkable. The process-oriented bucket is extremely simple as well: inform your users about the processing, provide control to your members so they can refrain from having their data processed, enforce processing in a privacy-friendly manner, and lastly, demonstrate that you are doing all of this in a privacy-friendly manner. This is still being operationalized at Headspace Health, and hopefully we'll dedicate time to learning about this model and operationalizing it much more in the upcoming quarters.

The fourth bucket is security and awareness. We talked about governance and management, the tools, and the partnerships with the legal, product, and privacy teams, but it's equally important to focus on privacy awareness, because it is one of the most important reasons for developing this entire privacy operations center. At Headspace Health, we developed our own HIPAA security trainings, tailored specifically to Headspace Health. I have seen a lot of organizations use HIPAA trainings from other organizations or from LMS platforms that are extremely generic and don't serve the purpose. For example, we don't allow PHI to be shared via Slack, but it's very hard to make sure every employee knows that this is not allowed, so we created our own Headspace Health-specific HIPAA training.

We have monthly office hours with our CISO and DPO, open to the entire company, so anyone can come in and ask the CISO and DPO any questions they have. All of the questions asked are then cataloged in our master privacy FAQ: we have a 50-page master privacy FAQ covering the privacy and security practices for Headspace, and similarly for Ginger. We also have dedicated channels for our research and engineering teams, so if they have questions they don't have to go back and forth; they can ask right there, which keeps the knowledge cohesive across multiple teams. Lastly, we have a privacy intranet open to the entire company. When new hires join, we encourage them to go through the intranet, read the master privacy FAQ, and ask as many questions as they have through a form. We respond to each question, and all that knowledge goes into the master privacy FAQ, which is open to the entire company.

All right, let me summarize this entire presentation in three bullets. First, recognize that privacy is hard and industry guidance is weak. We have a lot of industry guidance on security via NIST, SOC, and ISO, but industry guidance on, say, data sharing practices or how to comply with GDPR is still in its infancy, and it needs to become more robust. Second, there is always an opportunity to improve. We learned this while doing our data inventory: when we had the initial Excel sheet filled out, we thought, "We have all the data inventoried; we are doing a wonderful job." Then we realized we hadn't accounted for any of the information we would collect in the future, and that we needed to update the scripts we use for our DSAR requests. There is always an opportunity to improve. Lastly, when in doubt, ask yourself how this would look on the front page of the WSJ. If you are not sure whether to make a decision for the users or for the product, ask yourself that question and let it guide your decisions and how you influence decisions with other teams.

These are the three takeaways I have. First, security enables privacy; there is no way around it. Security has to be married to privacy, and as much as we might want to separate the two, they have to work cohesively to enable privacy practices. Second, put members first. This is one of Headspace Health's values: in everything we do, we want to put our members first and give them the benefit of the doubt. If we are in contention over whether we should do something, we should fall back on this value of putting our members first and keep it in mind at all times. Lastly, we want to build the world's most trusted mental health organization, and we recognize that's not possible without the support of the industry and of external members. So if I presented something you don't agree with, or you have additional thoughts, please let me know, and we can work together on building practices that can be shared across the industry. Telehealth is an extremely new industry for the entire world, and we are all still finding good practices for security and compliance. If you have such practices, or if you agree or disagree with anything I spoke about, please let me know and we can have a very thoughtful discussion.

Lastly, a few resources. I talked a lot about marrying security and privacy and about these guiding principles of privacy. I wrote this book, the [inaudible] handbook, and in chapter 17 I jotted down all these thoughts in detail: what it should look like when security and privacy go hand in hand, what each of these principles means, how companies should look at privacy threat modeling, and so forth. That's one resource I would highly recommend. The second is conferences: USENIX PEPR and the Rise of Privacy Tech are extremely informative. The third resource was the starting point for my work in privacy. I come from the security and compliance world and had no idea how to start in the privacy world, and when I read the paper "A Taxonomy of Privacy" for the first time, it opened up the entire world for me. There are so many wonderful nuggets in this paper that if you are even remotely involved in privacy and security, you should absolutely read it. And lastly, the IAPP, which has its own set of resources and trainings you can use. But I would highly recommend at least number three, "A Taxonomy of Privacy"; it's super helpful. This is my contact information: my email, my website, which I haven't written on in a long time, and my LinkedIn. The slides will be available at the last URL, so if you missed any of the slides, you can download the entire deck from there. All right, that's all I have. I'll open the floor for questions now.

...encryption or other data masking techniques in use? Yeah. So the question is: with the inventory we are doing, are we also tracking or tagging the encryption methods as well? All of that is taken care of by the data governance tool we're using. It shows whether data is encrypted. For example, if there's an S3 bucket containing PHI that isn't encrypted, it shows you that you have this bucket with all this PHI in it, unencrypted. That data governance tool becomes extremely handy when we want to do a risk assessment of our inventory or all our data sources.
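The check described in this answer, combining classification tags with encryption status to flag risky stores, can be sketched over an inventory. This is a hypothetical illustration: in practice the data governance tool discovers the stores and their contents, whereas here `DataStore` rows, the store names, and the choice of PHI and SPI as high-risk tags are all assumptions written by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataStore:
    name: str
    classifications: frozenset[str]  # child classification tags found in the store
    encrypted_at_rest: bool


# Assumption: which tags count as high risk is a policy decision; PHI and SPI are used here.
HIGH_RISK = frozenset({"PHI", "SPI"})


def unencrypted_high_risk(inventory: list[DataStore]) -> list[str]:
    """Names of stores that hold high-risk data without encryption at rest, sorted."""
    return sorted(
        store.name
        for store in inventory
        if not store.encrypted_at_rest and store.classifications & HIGH_RISK
    )
```

A public-assets bucket without encryption is not flagged, which keeps the risk assessment focused on where sensitive data actually lives.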

Okay. How do you handle that as far as default options go, and ensuring you don't overwhelm users with all the privacy choices? Yeah, the question is how we remain transparent about all our privacy practices without overwhelming users. For this one, we always take the approach that is best for the users. Let me answer with an example. Under GDPR, cookie consent is required to be disabled by default, but under CCPA, or in the US generally, it's not required: it's opt-out, not opt-in. So when we have to make the opt-out versus opt-in decision, we always keep users' privacy at the forefront.

Right now, if you go to the headspace.com or ginger.com website, all of those choices are opt-in: you have to opt into each of them. We don't make them opt-out for the US, even though the law permits that, because we think we should always be extremely mindful of collecting data that may not be required.
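The policy in this answer, staying opt-in everywhere even where the law would allow opt-out, can be written as a small default-consent function. This is a minimal sketch: the cookie categories, the jurisdiction codes, and the `LEGALLY_REQUIRES_OPT_IN` table are hypothetical, and the legal mapping simply restates the talk's summary (GDPR opt-in, CCPA opt-out) rather than legal advice.

```python
# Hypothetical optional (non-essential) cookie categories.
OPTIONAL_CATEGORIES = ("analytics", "advertising", "personalization")

# Per the talk: GDPR requires opt-in for these, while CCPA allows opt-out.
LEGALLY_REQUIRES_OPT_IN = {"EU": True, "US-CA": False}


def default_consent(jurisdiction: str, always_opt_in: bool = True) -> dict[str, bool]:
    """Initial consent state shown to a new visitor; True means enabled."""
    # Unknown jurisdictions are treated conservatively as opt-in.
    requires_opt_in = LEGALLY_REQUIRES_OPT_IN.get(jurisdiction, True)
    # With always_opt_in (the policy described in the talk), nothing is
    # pre-enabled even where the law would permit an opt-out default.
    enabled = not (requires_opt_in or always_opt_in)
    return {category: enabled for category in OPTIONAL_CATEGORIES}
```

Keeping the legal minimum and the company policy as separate inputs makes the choice to exceed the law explicit and easy to audit.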

Sorry, which resources did you speak of? Could you repeat your question? Yeah, it just struck me that a lot of the problems you're solving here with data labeling and handling are reminiscent of national security data in some ways. I'm wondering if you've looked at that for parallels, or for ways you can use existing approaches. Yeah. NIST recently released its framework around privacy as well: they have a bunch of things on security, and they came up with this framework on privacy. When we looked at this framework, we realized it's not really applicable to telehealth, so we had to build our own practices. It's good in a general sense, for general privacy practices,

but when we try to apply these industry standards to telehealth it becomes extremely hard, because we have to comply with HIPAA and a bunch of other regulations as well. So we were not able to implement any of those industry standards for our own company. Thanks. Yeah.

All right, any additional questions, comments, thoughts?

Okay, I'll take that as a no and maybe give you 12 minutes back. Oh, you have a question. I guess, out of curiosity, did your company ever look at things like zero-knowledge proofs and some of those up-and-coming technologies for your implementation? And do you have any thoughts on those technologies? Yeah, I had one slide here that I intentionally did not present, about the architecture we developed. Our CISO holds a patent on it together with many of our engineers: we developed an architecture on our own where the encryption key for our crown jewels is stored in the browser itself.

So while all that information in our databases is encrypted, we also have another layer of encryption whose key stays in the browser. The public key stays in the browser, in one of the password managers, and it always maps to the private key of that particular system; the data is then decrypted and encrypted in the browser itself, at the endpoint, not at the database level. So we have two layers of encryption: one at the database level and one at the endpoint level, in the browser. That kind of serves our purpose for zero trust, zero knowledge, or whatever you'd call it.

If you are asking about homomorphic encryption or something like that, we haven't looked into that at this moment. All right, cool. That's interesting, thank you. Yeah.

Thank you. All right, thank you. [Applause]