BSides DC 2018 - Network Traffic is an Open Book

Show transcript [en]

besides DC would like to thank all of our sponsors and a special thank you to all of our speakers volunteers and organizers for making 2018 a success good afternoon everybody I am Daniel Orlando and this is a delight Mecca and we're presenting a talk on encrypted network traffic and detecting malicious activity in encrypted traffic so we we are from Fidelis cybersecurity so first of all network traffic is sequential it's it's a sequence of sessions one after the other some of these sessions may be encrypted and so for example first you may be as an example of malicious activity first you may be referred by a hidden iframe you may visit a landing page malware may be

downloaded over TLS paying for connectivity and then steal data over TLS so notice that a lot of these sessions are encrypted and in fact a lot of the critical sessions where important malicious stuff is happening is encrypted in this example so but but also there there are some of these things that are in the clear so when it thinks for connectivity that's that's in the clear the hidden iframe may be a HTTP page and the idea is to use the structure of how the sessions flow to understand whether this is actually malicious traffic or not [Music] so first we're going to talk about some of the data we use to to train this model some of the features we extracted

which features are a numeric way of describing data that the algorithm uses and then some models so once we actually have these features how can we use these features to train the models and then we're going to look at some results we're actually going to peer inside from feature extraction all the way to inference model inference exactly what is going on at each step of the process and then finally we'll give an illustrative example for how this is working so first the data the malicious data we have our suspicious files that are submitted to the Fidelis sandbox appliance and these files are run through a sensor and generate pcaps and that our internal start research also

researchers also finds files and submit them as well they have feeds that they can see latest files and and submit them to the sandbox that we have pcaps from from customer submission and threat and we another issue is labels so with supervised learning there's a fundamental question which is what are the labels this is this data an example of a cat or a dog or or a horse for example so we need to know which which these samples are are are these malicious are these benign right because we're taking submissions and going through well in addition to the feeds where everything's obviously labeled as malicious we take submission so we have to label them first and then we can use

them for our algorithmic trading yes so this is um this is primarily from a and our own rules developed by the threat researchers and we use samples which are highly confident that these are indeed malware so for example on something like VT there are a lot of different high scores from from the vendors to confirm that they are indeed malware and that that comes to around 36,000 samples that we used in the training for this particular exercise here yes and as for the benign data we could use submissions labeled as benign but the issue is that these submissions to the sandbox are not in general representative of general network traffic so what does this mean if if you

are a user who is using a computer browsing some stuff on the web that may look different than the network activity of say a word document or or an executable that is being submitted to this even if the executable is indeed benign so the idea is if we want to use this in production with live traffic then the benign data actually has to look like live traffic so what we do is we supplement the benign samples and sandbox with a DMZ network traffic and that comes to about 550 1,000 samples from benign submissions and another 270,000 samples from this general traffic so you know adding adding it up that comes to about 320,000 benign samples

another question is if you look at the samples that we had for the malicious yes or the malicious data what we had is a sample being submitted and then all the sessions that came from from that sample detonating in a sandbox so how does this correspond to live traffic then the idea is to put a hard limit on a two-minute continuous sequence of sessions so there's there's a certain threshold one session after another and a requirement that that that's within a two-minute window because the whole the whole idea of machine learning is you have to have if you're doing a classification problem you have to have an apples-to-apples comparison so for example if you were trying to classify

between a picture of a cat and a picture of a dog it would be it would be problematic if on one hand you had photographs of cats and on the other hand you had drawings of dogs for example so the idea is you want both of the classes to be similar and finally we're going to talk about the features so again as I was saying you you have the raw data which are the proxy logs and we want to extract features we want to come to vectors which describe the data so this is this is a really large problem and n is face image recognition there are many ways to get vectors from images text there are many ways to get

vectors from text and in this case we actually want to use one of these ways to get vectors from text so what we're going to use some natural language processing techniques and talk a little bit about what that means in this content but also in cases of encrypted traffic obviously you can't see the text so you can't do your standard text analysis so there we use the certificate classifier that we presented at last year's besides as a supplement for the cases where we don't have direct national and natural language processing type features precisely and so the idea is what we can see what we do have detailed visibility into we will use and then what's a little bit more opaque

will we'll met with the certificate classifier and we're gonna talk about this is text how are we going to use NLP to understand this and convert this to vectors so a simple way is to use some technique called bag of words and one one hunting coming so for example here this is a document it's a very short document just one sentence the tuxedo cat that's afraid is not really a sentence and it's the document has a very small vocabulary it only has three words three unique words in it the tuxedo and cat so the vectors that we're going to use to represent the words in the document are going to be the size of the vocabulary and each word is going to

be represented by a one hot encoded vector in this space which means it's one in one place and zeros and all the others so me we could arbitrarily represent with this this vector here one zero zero tuxedo zero one zero and cats zero zero and now now that we have this phrase we want to represent this document as a vector so each of these words has a vector associated with it each of the words in the vocabulary so we can aggregate them together simply by taking the sum and get what's called a document vector so this is a document vector representing this document the tuxedo cap and the idea of using this in the context of sessions is that if each

session we can represent by a vector using using a technique like this there are many tokens in a session so we can aggregate them to get a session document vector but we actually want to talk about sequence of sessions not just one session so the ideas our input data would be a sequence of document vectors that's great and in fact that's the setup we're going to use but the problem is the to many dimensions so we can't use this this bag of words approach where the dimensionality of the vectors is that is the size of the vocabulary because of the vocabulary is huge the English language already has a huge vocabulary French Spanish huge vocabulary but if

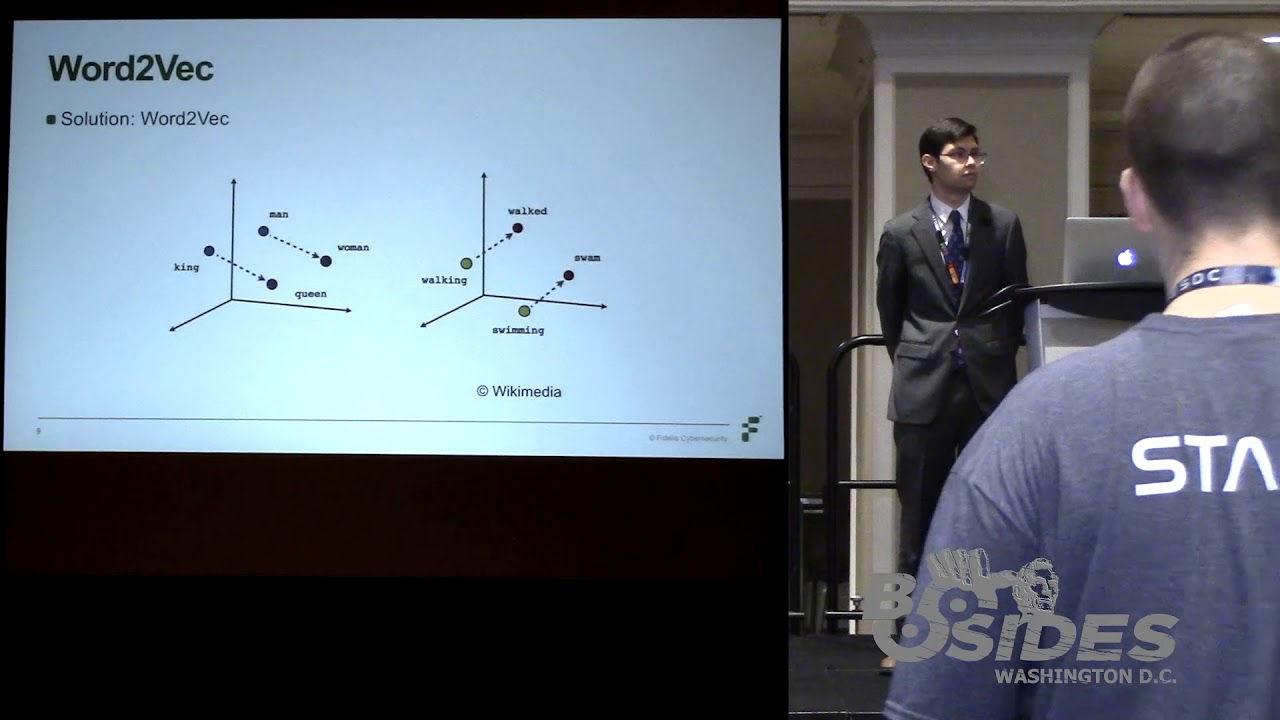

you take something like cybersecurity there's just a whole world out there and a very large vocabulary so a solution is word to back and work to back is a very popular approach these days for doing representation of text so for example even something like Google Translate uses a sequence the sequence neural network with with word embeddings so with word tobacker and possibly some other kind of impelling they invented word to vet for google yes lee yes so yeah for Google Translate yeah I'm pretty sure well but yes they did they did invent work to back and idea is if we go back here notice that the vectors in the space are very sparse it's it's a

high dimensional but sparse space and by sparse I mean it has it has very few values that it can take in this space so you know there are very few vectors in in this space there they only have the values in the component 0 and 1 and actually why not have a lower dimensional space where the vectors are more dense in the space which is to say that the components of the vectors can take all real values so instead of going 0 to 1 integers between 0 & 1 inclusive those are those are only two integers you could have the uncountable set of all real numbers in each of the components which allows a massive

dimensionality reduction exactly and and more get more capability of expressing all these different words in lower dimension so the idea of your Tyvek is you train it on a corpus of data and it's trained to recognize possible context from a word and it actually uses a shallow neural network to do this but the interesting thing is once you do this it recovers some very interesting semantic information so the dimensions in the basically you're using the word to back model to transform your very sparse representation into a dense representation and the dimensions in your dense representation are these semantically meaningful dimensions yes it turns out to be the case yes and so like as we see in these examples the

dimension that the in the the dimension of like grammatical gender for example the word King is in one place and where Queen is in another the word man is in one place the word woman is another or in the semantic dimension of present tense - past tense the present tense are all on this side the past tense are all on that side and so you end up with you end up that the model basic the neural network in the worked effect model actually learns the semantics in furs the semantic variation within your training set so back back to the title of the presentation actually this is a this is a good point to mention it

that's the whole idea of network traffic is an open book because we are using natural language processing techniques so the ideas visibility into encrypted traffic but also using using these natural language processing technologies so now that we've talked about the features we can talk about building models using these features so one possibility is random forests this is very this is a very popular algorithm to use but a limitation of random forests is as we talked about we have these each stamp one way to aggregate these these vectors together to in order feed it to random forests is to just take the mean and and so so yes so now we can use random force but for

those of you who don't know what random forest is I have a small example here a random forest is an it's what's called an ensemble classifier of decision trees and a decision tree is this float it's sort of like this flow chart over here with with this with this root so it's a flow chart with a root and as it branches off it it makes different decisions so this this flow chart over here is representing if somebody died in the Titanic or not this is the canonical textbook example of a decision tree yes it's slightly morbid but but and so you know one of the first questions you might ask is are they male or not and

right off the bat if they're not male there's a high probability that they survive women and children first on the exact so that's so that's what this whole thing is representing actually women and children first and a decision tree you go by sex is the the way they essentially the way a decision tree gets trained is it looks through all the features you have and it figures out which one has the most predictive validity for the set of data that still exists at this stage of the flowchart and so you start off you have everything at which point the women mostly survived is a pretty easy prediction to make but then within the men you have to look I

mean the old ones mostly died and then the children it actually turns out interestingly to be depending on how many adults were accompanying the children whether they survived so and this this actually is a great decision tree but if we have on the order of several hundred features which is what we're looking at if we have hundred dimensional vectors then what happens is if you train a decision tree on these features it has a tendency to over fit which means that it'll have difficulty generalizing new data so one one way to offset that overfitting is to use what a most ensemble yes this ensemble classifier random forest so it's like having multiple experts in a room and they each

have a random subset of the features they each know they each have different strengths but together they can come to a very well-informed decision so the wisdom of crowds as applied to these machine learning algorithms yes and and so that actually did pretty well very well ninety ninety eight point nine percent accuracy with a high AUC of point nine nine seven six but we could actually do better than this so the next step is to use a recurrent neural network which takes into account this information of of this sequence of vectors so it uses the information about ordering of the sessions and the idea the idea of how a girl recurrent neural networks is through each example you

have multiple vectors and the feeding in vector after vector and these these horizontal lines are the weights that keep getting updated as you feed in each next vector so it turns out that the most important feature when we use a recurrent neural network ends up being the certificate classifier score when we train the random forests we use the certificate classifier score and it had an AUC of 0.99 seven six and then when we train the recurrent neural network without the cert classifier score the accuracy went up but the AUC went down and the AUC is the measure of general quality of the model as you vary the predictive threshold so maybe you decide you really want

really want to make your false positives as low meaning that you only predict a positive if you have a higher threshold so AUC is a way of measuring aside from of the standard cutoff of 0.5 how it does over many different carry it's a it's about it does it refers to the ROC curve which area under curve yeah and so the the curve itself is it's a characteristic it's a characterization of the model and it's a standard signal processing metric that we use but in any rate I think the main point is so using the better model drives up the accuracy quite a bit well or rather cuts down the inaccuracy quite a bit I mean once once

you're approaching the plateau is a little incremental mentioning returns but as far as it goes you can see that actually the search score makes a difference but at any rate it's really the switching from basic sequence lists traffic to characterization to the sequence aware characterization of the features that really gives you the in actually classifying traffic so now that we've talked about data the features the modeling we want to show some concrete results of actually what this looks like from beginning to start and actually peer inside the model so first off what you're going to see are are several 2d visualizations but keep in mind that we're actually visualizing 150 dimensional data and more so what we're

going to be using to reduce the dimensions to show you because at least I can't view 150 dimensional spaces but is tease me so TC is is an award-winning dimensional dimensionality reduction technique that essentially builds a probability distribution between the similarity of every two points in this high dimensional space and and has some some it projects down to two dimensions or three dimensions or whatever dimension you you choose to reduce to and make sure that that that probability distribution is preserved so here here are the five stages that we're going to visualize this on one one one stage is the transaction level word embeddings because within each session are the transactions so these are the various

network operations that the HTTP operations for example in an HTTP session yes and and then you assemble these transactions and you you get sessions and there are certain things that are constant now across sessions but are not constant across transaction so these these are the tokens that are at the session level so we have to word embeddings going on and we assemble them together to get the document vector which represents those sessions as a whole so remember that each sample is actually a sequence of sessions so once once you have a sequence of these document vectors then you actually hand it off to the RN n so the RN n is seeing the recurrent neural net so the Arnon is

seeing a sequence of vectors and we're going to look at the activations in the first layer and then we're going to look at the activations in the second layer and okay so these are the transaction level token embeddings and the this is the same plot if you look at the shapes it's really the same plot but we're actually looking at looking at it across these two different dimensions of malicious and benign so malicious is the nine is blue on the Left chart there and on the right we are actually looking at these different kinds of kinds of tokens so there's a - suya tokens from url's tokens from hosts different different things and these are

the different fields in the proxy log that give us token quantity in the different so each of these points is a session and the color corresponds to what sorts of tokens so what's interesting here we got so much the picture on the left because since we're in a very low level we're not seeing a lot of separation between malicious and benign samples they sort of have similar tokens they're their clusters of blue tokens they're little pieces that are predominantly red but overall it's it's really muddled what is interesting though is that the word embedding does learn very distinct things about types of tokens on its own so keep in mind that I'm not telling it some additional

information about what kinds of tokens these are just like if I was training a word embedding I wouldn't be telling it that this is a verb that this is a noun it just automatically figures that out on its own from contact right so the basically the the different colorations of these different regions correspond to the word embedding having learned different semantic properties of the different classes of tokens in the proxy logs so a word on axis if you're confused about that of pi-1 and pi2 recall that we are visualizing a two-dimensional projection so pi is just a mathematical lowercased pi is just mathematical notation for the first projection and the second product projection remember that this is active

these are sort of contrived dimensions here for the visualization purposes and then it actually gets a lot more interesting with TC with sorry with session level tokens so here again we have predominantly a component with predominantly D port on the left on the right and other stuff on the left which others use other things it's primarily s and I actually and there's a lot more variety on the tail of that over there but if we look at the figure on the left again the the deport the D ports are very muddled there's some some benign some malicious looks like yeah the main point here is that by the second in the session level tokens you can see we got

quite a quite a bit more structure in terms of exactly the semantics of whatever between the semantics that was perched on the edge of the table whatever anyway so basically you can see that we got we got substantially more clean structural understanding of the semantic meaning of different types of tokens at the session because because it is higher than transit rights but again so these these semantic tokens don't really court the presence or absence of these tokens doesn't really correspond to a label because I mean you're going to have destination ports in malicious traffic and benign traffic right so yes so moving on to now we're actually yes so this is this is by the time you you

aggregate all be total all the vectors all the word vectors from the session tokens all the word vectors in the transaction level tokens and you assemble them into the document vector one for each session and this is this is this was very exciting when I first saw it because it it goes along with your intuition that some sessions will definitely be malicious and you will only see malicious traffic some sessions are benign and you probably all be seen benign traffic and but there's a lot where you see it's mixed so I don't know if it's the beer model here you have a bit of a muddle here you little model here even in places where it's

relatively clean you still it's still a little bit muddled yes but there are some reasons where it's pretty relatively clear and some regions where it's sort of isolated so but but the idea is that since we've gone up a level we've gone up from transactions to sessions and now our transaction our session tokens to now a whole representation an aggregated representation of that whole session we really start to see some significant separating out between what really looks like malicious and what really looks like benign and so this is now we can look into the sort of knowing on the Left what the labels are for the different regions of this contrived projection space we can look and see

what are some different features that correspond to different locations of that space yes so that that orange down there for example that's from the UK yeah that green up there is from Russia well there's some China there's some traffic that goes to China from here there's some traffic that goes to China over here but in general you can see that actually the traffic going to different countries is relatively I mean there's some muddled in the middle but there are some regions where it's quite clearly defined that the traffic in this area is bound for one destination country versus another destination country yes and then the greyed out blobs there which as you see it's not really in the

color-coding our unknown country so that's like DNS requests that we may not well not just done mostly unknown but also I mean we're not we're only coloring five of the countries yes but I mean there are other countries in the world obviously and so but yeah so moving on this is a different coloration of the so as you see the region there's a lot of DNS requests you know this this piece of a fear are DNS requests that we're only seeing malicious data mainly there's a little bit of blue specks in there right but a lot of that is is malicious DNS requests and these ovals are you know some makes seven to nine DNS and for for our purposes the engine

the most interesting piece over here is this right here you have this big malicious blob in here particularly which corresponds to this which is I mean there's DNS but then this is where you see HTTP and TLS trial yes so so TLS tell us and and focus on that remember that TLS on these this rightness you have this center blob the bottom is not so interesting but the top you have the right side that's the TLS so watch that so now that we get the file type we actually see that a lot of the file types in that TLS section are actually certificates and in and the rest is text because I mean once it's encrypted it

just looks like binary ASCII text yes and and then as for HTTP there's there's really a lot of JavaScript binaries JPEGs things like this which is exactly what we'd expect and then how much so what what's the session size and we see this is this is kind of interesting on the outside remember there was a lot of DNS and we see that a variety of DNS sizes in the malicious but the interesting thing is that Green appears to be more prevalent so there there seems to be made too high in DNS and then HTTP and these are all these are all mostly zero because the size didn't get it's not a well-defined property in those sorts of cases based

on the definition of the feature we're using here so if you remember what I was what all I was going to show you there was the document the transaction level word embeddings the session level word embeddings the document vector level aggregation and now we're actually going to start to look inside the model so before before we actually go into the model I want to talk a little bit about machine learning theory so you understand these visualizations first there's this concept of a decision boundary if you have two sets of points for simplicity that you're trying to differentiate between and you have the feature space or two two or more numerical ways of measuring the differences between that space then what

a machine learning algorithm is actually doing is it's it's doing some kind of regression problem so it's it's learning this boundary between these two classes these salmon and sea bass along these two different dimensions width and lightness so for example right off the bat you see that lighter fishes tend to be the sea bass and the idea is that when you have really good features the decision boundary is going to be more clear and another another thing is that why is there so much hype about all networks so the hyper about neural networks is actually the neural networks generate their own features in the sense that the layer before the last layer is creating features for the layer after it

right so the input to each layer is the features that that layer is using and then the output becomes the the input to the next layer and so it's the features that the next layer is using and then the output of that layer becomes the input to the next layer which are essentially the features that it's using so after each layer you're transforming your feature space which gives you new features that are hopefully more clear or that hopefully provide a more clear illustration and separation of the different classes yes so this this is what the first layer looks like and then by the second layer we we see that it's it's very well separated out yes and

this is the process of right so you go from here to here this is the transformation from one layer to the next yes and just to illustrate how useful the Search score wasn't this this is the same recurrent neural network train without the cert score so you see that by the time you get to the second layer there there still is quite a bit of separation but was not the same type of improve like this this looks this is you can you could by hand just write out the function that goes here as like a piecewise linear function and then just by looking at this you could get almost great results yes so so great now that

we've looked inside the the neural network understand how all the features works what what is a concrete example of how this classifier is actually run with with an actual piece of data so I went into some of the data that I was using and picked out one one example here so here here's an example where the probability coming from the classifier was that it was malicious is very high and this is actually a known malicious sample so it's fitting that it has a very high probability just because it is in fact malicious and the search score is is also on the high side for one of these sessions yeah for it yeah looks like so yes so here for

the sequence we're taking the highest the highest score for the certificates for all the different certificates that comprise the session and so here's here you can see the this is the sequence of sessions it starts off if you want to well yeah it starts off with a couple HTTP web web pages one with Google api's then it goes to vid seven comm and it it does a lot of stuff in the US but it has one session HTTP with Russia fast picked out are you and there were some there were some pornographic web pages in this data it's sketchy stuff you know but at any rate once it redirects to this XO click site you end up with a very suspicious

certificate yes oh and actually I plug and don't don't go to that website it's

you know Google search easy list cloud then the first first result was how do I get rid of easy list Club virus on my PC or something like this so say yeah and so each of these sessions gets represented by a document vector and these are actually where those document vectors landed so you see there's one that's smack dab in the middle of that red zone right there some on the periphery means I guess our DNS requests and so here's here's where it landed in the recurrent neural net in the first layer it was in this red piece over here and by the by the time it got to the second layer it's it's well towards the

it's deep into the malicious tandoori right now my problem this is a clear example of malicious traffic so it's fitting that it ends up very very far from our sort of visual decision bank a rule of thumb decision boundary here yes so so let's sum up we've we've analyzed sequences of network traffic's network traffic and use word embeddings to extract our features so to turn the raw proxy logs into numeric quantities then we've trained an ml model to identify these malicious sequences of sessions and we've leveraged the cert classifier the certificate classifier this knowledge of this this may be a suspicious cert or not and and finally the results are very encouraging so some next steps would be

to investigate possibly mislabeled training data so if you look back here you can see that we have some of these labeled benign samples that are sometimes very far into the malicious territory and so he we've started investigating them some of them look a little suspicious but we haven't concluded that investigation yes but certainly there are some suggestive results that actually maybe are labeled so right off the bat is that I don't know you guys can see but there's a little blue dot next to my star over here so there's actually one sample that I can start by investigating that should actually be very structurally similar to this malicious sample that I just showed you guys so that would be very

interesting to look at and and yeah so this is all with I mean we we got a small ish training data with only a few hundred thousand examples so we'd like to going forward maybe redo this exercise with about 10 times or 20 times 50 times 100 times as much data see how that affects the results I mean already with the hundreds of thousands you're probably in your statistical limit there but you know it's good to check and then you know once once we've done all that then we want to put this in our product so you know production-wise a ssin so testing validation integration with the production code base etcetera etc you know all the regular stuff and but an

important an important thing here is to monitor performance set up infrastructure if they're automatically monitoring this yeah classifier and doing periodic training it may be right because I mean this is all based on the the thing to know about these if you're using machine learning and you have a model that model is trained based on that model is inferring is making inferences based on the training data it had and so you know as threats change as there are new types of malicious behavior new patterns of malicious behavior that are invented the training set we use has become is going to grow increasingly obsolete over time and so the goal is to eventually be in a place

where automatically every month or week or several months or however long it turns out to make sense to do part of the performance monitor right based on our performance monitoring we would then want to just have it automatically retraining or building a new model based on that newer newer example data great questions yes monitoring in this context well I mean sanity check is is one thing but here we're talking more in terms of just in general looking at so this would be in the context of you know classify malicious traffic so you know periodic you know looking at a sample of the predictions it's making and checking to see what fraction of them are correct

based on other sources of labeling and you know comparing the results of our model to other sources of identification of malicious traffic and you know doing that doing that in an automated way so you know we automatically run all this traffic through various like you know antivirus engines and our threat rules and we have lots of other ways of figuring out what's malicious and so you know comparing the results of this to that over time to make sure that as we are sort of updating all these things in tandem that they're sort of continue to agree with each other going forward [Music] so the horsey ho their classification this this month my fail that chef in the tense

yeah yeah I mean that yeah right yeah right yeah and it definitely that's one of those things like even as we were saying even in our smallish relatively small training set we found some things that look like they're suggestive suggestive of mislabeling and so definitely all of whatever infrastructure we end up building out to do that investigation more thoroughly is certainly worth applying to just these monitoring activities going forward yeah yeah because I mean definitely the the goal is that you have multiple different sources of knowledge and you're enriching each based on the other and you're bootstrapping your way up to a better understanding yeah any other yes sir starting this work like this our area

[Music] yes so potentially explore different more nuanced way of extracting features so one one thing for example that's been done before is waiting using tf-idf the different vectors so what this would do is this would emphasize more rare --is-- more significant kind of tokens and the the weighted sum it would be a weighted sum instead of an average to get these document vectors and you would be emphasizing the more unique yes yeah [Music] thank you any other questions yes mr. Craig you showed an example

yes yes yeah so I don't I don't know that we have those numbers no no I don't I don't have those numbers off the top of my head but I definitely did look at directly how the certificate score correlated with malicious sessions and I think the issue with that is that sometimes not the the critical stuff wasn't happening in the encrypted sessions it could have the payload could have been delivered in the clear and you know just have gone to some refer refer page over TLS so there there could be many instances where the certificate certificate score wouldn't come in handy but it definitely is useful in the case that there was is actually critical

stuff that is happening in in TLS yeah so I like I said I don't remember off the top of my head but my record is that it wasn't enough to be convincing on its own yeah and then especially like I mean if you look at our our example here you see that like I mean some of these like this easy let's not club which is I mean that that is that itself is like the that he googled and don't go there is the score is much lower than this ad certificate and this these EXO click certificates are much more suspicious than the easiest Club certificate even and so it I mean it's it's a great feature to have but it's

not we still gained a lot from knowing that you had like a sequence of different different events

right yeah yeah right exactly it's it's it's the context when you have everything in context you can see that together it's very suggestive in a way that by itself it might not be any other questions it would it would absolutely be possible so that that's actually a important note that I want to are you familiar with recurrent neural Nets so so the idea is you have each recurrent neural that is like I was saying it's a sequence of feature vectors so one option is to put the search score in each of these feature vectors as an additional component but what I've decided to do instead is have at the last layer of the neural network a

linear combination of the max search score and whatever whatever the vector coming out of the RN is and so then there there becomes a weighting between the maximum search score and whatever is coming we essentially we have a staggered input layer yes so so basically if there's any other features that we want to tack on like these float base flow based statistics we could absolutely do that easily and that would be an interesting thing to you right yeah is that it definitely having the architecture as it is I mean the recurrent piece picks up we have a couple of recurrent layers but that's not the only input so certainly we could add other things any any other questions okay well thank

you very much