A Code Pirate's Cutlass: Recovering Software Architecture from Embedded Binaries

Show original YouTube description

Show transcript [en]

all right uh hello everybody um i'm evm from apl and uh thanks for coming out to the talk here i'm gonna talk about um some research we're doing at apl on uh reverse engineering mainly static analysis static binary analysis and trying to speed up the process help out analysts so we can understand binaries do do vulnerable vulnerability analysis faster so what i'm talking about today i'm going to talk talk to you a little bit about kind of some high-level stuff that we're doing kind of the the long-term research we want to do it i'm also going to talk about a technique that i developed for slicing up a binary into pieces and so that's that's kind of

the the cutlass thing see it's it's a joke ha ha thanks so the motivation behind this is um i'm guessing the folks in the room guessing you guys have done reverse engineering or you you've got some interest or you're at least familiar with it with the subject but um if if you're not you a lot of what we do in infosec is built on top of reverse engineering right when we have um you know we we have new malware samples you know they get detected in some way um a lot of that is built on humans tearing apart malware binaries or if it's on the other side if it's you know the red team side

there's people doing vulnerability analysis are pulling apart binaries reverse engineering binaries in order to figure out how devices work and then find vulnerabilities attack them right the problem for me is that ari is very manually intensive there's a lot of domain expertise that's involved and particularly where i work is in embedded systems so what happens in my day job is the government comes to apl and they say um you know they a lot of times it's the you know it's a military organization like the navy and they'll say hey we want to put this um you know we're going to retrofit these this system on a submarine or plane or whatever and we want to put this box that we

bought from best buy and we want to stick it here in the you know in the ship and and so our job is to say you know what you you want to do what okay hold on okay here's why you know here's why that's a bad idea or here's the vulnerabilities that exists and here's ways to you know mitigate them here's a way to design the system so that you know those vulnerabilities can't be exploited so i spent a lot of time in embedded systems you know reversing them pulling them apart if you're not familiar with embedded systems they you know when when you're doing when you're reversing or looking at um you know linux or

windows code you get a lot of things that you don't have to look at you don't have to dig into you don't have to dig into the kernel you don't have to dig into the system libraries those are all kind of well understood you get those whenever you're calling into a system library um you know that's that's well labeled right in embedded systems especially um so this is like in the in the rtos world where you have like a single you might have a single large binary that is um it's got the application binary uh the operating system and libraries all smashed into one program space so what happens is all of that gets compiled and linked

together and if if there's no symbols then you don't get any any symbol information so what you get is something that looks like that on the bottom left this is nasty um you know or or if you know you drop it into ida and you get okay i've got you know 12 000 functions and ida doesn't recognize any of them i get this big nasty you know call graph just just super complicated um call graph and i've gotta um you know make make some sense of it so we spend a lot of time just kind of churning through um you know binaries that look like this and so my whole motivation is to make that process faster

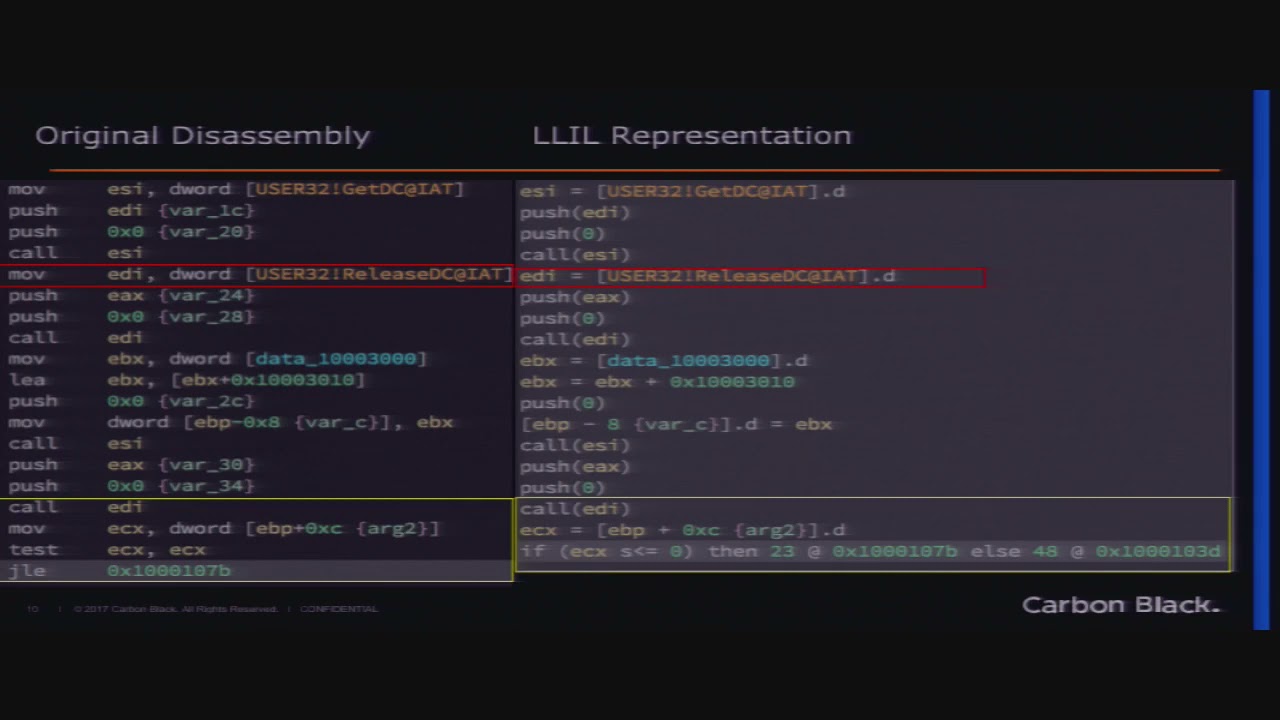

both for you know for me and people like me but also for newer folks we want to make this make binary reverse engineering just more accessible you know more tools available so people can kind of pick it up quicker and get up to speed quicker so i'm going to talk about a little bit more but the previous research in re has mainly focused so the main academic problems that are being researched and solved are um code to code translation so like decompilation right you know people are pretty familiar with disassembly uh guidra king just just came out so guidra has a built-in uh decompiler that's based off of p code hex-rays of course they've they've

had their their decompiler that's out for a while generally the way these works is you take the binary and you translate it to some intermediate language sometimes like even a higher level language and um you know sorry you take the binary converts to some intermediate language and then that gets converted to a high level language like c right that's your that's your basic kind of d dump compilation steps and then the other part is function level matching so things like bin diff diaphora that are out there um gidra of course has the version tracker tool that lets you take two two binaries and match functions between them that's mainly where the the research in speeding up static analysis has

has focused and those problems are pretty well solved so let me talk a little bit about just thoughts about automated reverse engineering and how we can make that better and other areas that that kind of need to be explored so i mentioned the um oh so i'm going to i'm going to talk a little bit more about function matching and decompilation but seems to me that when we reverse engineer code reverse engineers are operating on at least four levels and most of the research isn't isn't really taking that into account um the people that are trying to work on machine learning and deep learning approaches for um for software reverse engineering aren't really taking the

sort of hierarchical abstraction um of of reverse engineering code or the the fact that there are um there's hierarchical extraction in the code um into account so in general we're we're working we kind of work if you want to call this a stack you know we're working up the stack to sort of try to understand at a higher level what the code is doing right so we're we're we're staring at you know uh disassembly down here right maybe um staring at disassembly down here maybe we've got a decompiler that brings us up here we're trying to understand you know we spend a lot of time just trying to label functions understand you know what what the subroutines what

the functions are doing um and then usually we take sets of functions and then we group those into you know into say like a library or just um a grouping of functions so if you know if you're doing malware you you look at okay here's the the protocol section of the malware here's the um you know here's the part that launches a payload and um you know here's the part that parses the configuration or something like that and those are all with within the the binary um so we tend to as we're reversing things we tend to move up and down that sac right so we're trying we're trying to kind of answer that higher level question so

we're tending to kind of answer that higher level question at the top but we kind of you know we kind of bounce around to sort of work our way upwards right we we try to kind of zoom in on the maybe the call stack that we that we care about um and we'll so we'll go all the way down to staring at you know opcodes to figure those things to figure out a particular function but we're generally trying to sort of work our way up to the the um to the top level where we can explain to somebody else what this code does um so the so as i mentioned the current state of practice in

re is is kind of like looks kind of like this we've got decompilation that kind of helps you go up to take takes your disassembly and translates it into a high level language like c the problem with that is i mean there's no problem with that but what you don't get out of that is you don't get you know you don't get c source that's labeled with function names and variable names right you get you know sort of this blank c source with these abstract var1 var2 variable names um so it's good to have the compilation and and maybe it's easier to read c code but it doesn't necessarily tell you anything about the functionality of

that c code you have to sort of interpret that for yourself function matching is also kind of going up here if you have functions that you've seen before you know those those tools will help you match those you know up at that level which is helpful but that actually doesn't do anything for you know our current function function matching approaches don't do anything to interpret or tell you about the functionality of new code they just help you kind of match against code that you've seen before um so in in in general what we are kind of this the summary of what we're what we want to work on at apl is techniques to um to to build on decompilation and

function matching but to treat this as a as a problem where we're where we're translating code back to natural language so for most of us that's english right we want to be able to have techniques that that translate you know whether it's binary or functions libraries we want to be able to tell an analyst we want to have tools that we can run that will tell tell an analyst in an in your natural language you know what do i think this function is what do i think this set of functions are we want to treat that treat this like a natural language translation problem building off of the um kind of the existing research that

that that's out there and um i'm gonna so i'm gonna talk a little bit the the rest of this talk is about something i developed that is really sort of up here um it's the idea of trying to uh oops group functions and uh group sets of related functions and determine what the what the object files or the the libraries are the static libraries within your binary um because i think that that was sort of a a piece of the puzzle that i i saw kind of a a a quick answer to um and i'm also going to talk about later on i'm going to talk about kind of a data set that we're building to try to

ant to try to fill in more pieces of this sort of more pieces of the the puzzle build more parts of the the system the thing that i like to i like to relate it to um jarvis if you guys have seen uh you know the iron man movies right like jarvis is this this like sort of ai assistant for tony stark and mechanical engineering right and he he says hey you know jarvis what's the he can ask it some question about chemistry or physics or something and jarvis sort of spits it out right imagine if we had sort of like a jarvis for binaries right where we had a ai that could say you know this looks like uh you know

this looks like networking code this looks like crypto code um that's kind of the long-term uh thing you know thing that we'd like to build okay so let me talk a little bit about um code cut and um feel free to you know this is a pretty small room uh feel free to shout out questions if you have them as we're going is anybody have questions on that section of it

okay cool um yeah feel free to raise your hand if yet um have a question um so the the um the problem i just decided to tackle i called the code cut problem and so um if you're familiar with you know languages like c and c plus plus that get compiled down to um down to a binary that that runs on the processor um you're probably familiar with this um at least in theory with this um these steps right you say you write your code and you write it in a bunch of um you know different c files you know this is just sort of generally the way that developers you know they kind of segment their c

files into um or their code functionality into different c files and they um they compile it into object files and then your linker builds that all into a binary program now sometimes if you're dealing with malware or something like that you get other things you get packers and you get obfuscation i i generally don't deal with that kind of stuff i think this stuff still applies but it would be sort of like after you kind of get rid of your you know any obfuscation that is built on top of um you know on top of the um the binary um the um in in in the embedded world you know most of the most of the products that

i'm trying to reverse engineer our commercial products and the developers aren't really thinking about you know they're not trying to obfuscate their code for reverse engineering they're just trying to sort of put a product out there right so there isn't like um you know i'm not accounting for any kind of weird stuff you you might um tricks you might do to to prevent reverse engineering this is kind of just straightforward um straightforward stuff um so the idea of the code cut problem is we want to recover those original object files in the binary program so what the what the what the linker does is the linker just takes those object files more or less and just and just squishes them you know

one after the other you know it it links the links all the function calls together and what you're in what you end up with in your binary program is more or less the object files that you passed into the passed into the linker you know in order and they just all kind of get stuck one after the other and squished together into that binary so the idea of the code cut problem it's real simple it's just can we find those original object file boundaries the cool part about that is that it gives you a lot of information about when you when you look at object files usually that's kind of the the the hierarchical design the sort of the

abstract design that the programmers put in there if you look at the relationships between those object files you can actually sort of immediately you know sort of recover design information about the software um so yeah that's that's what we're um trying to do here um so the concept i came up with is actually really dirt simple um so if you think about about c um you've got your you know you got your pound includes and your your functions and so the idea is so let's just say for a second we eliminate external calls and we just look at the directionality of calls so we look at how the at the direction of the relationships between

functions in this file um so and and it's sort of sort of obvious when you think about it is that at first any any any function calls within this file are going to be going in the positive direction right and then toward towards the end function calls are going to be going in the the negative direction and if we also look at um just so we that's that's you know for a particular function that's that's functions that it's calling but we also look at the the reverse thing any any functions that call call a particular function that that same relationship is going to hold it's going to be it's going to be called by functions in the

positive direction and then that's going to switch over to the negative direction um sounds sounds kind of trivial actually but um the the cool part is that you can you know you can to some extent detect these edges in code um so what we're looking for really is you know we're looking for a negative trend and a switch back to the positive direction because that will start our new um our new c file our new object file um so that's sort of the whole thing and so you can imagine if the next c file is gonna look we're gonna have have it's going to start again in the positive direction and then go to the negative direction

[Music] this is the uh sort of the mathematical um formulation for uh what i call local function affinity so it's a way to think of that is it's just the the direction that a a function is sort of being pulled towards other functions around it right so is it called is it called by uh is it called by functions or is it call functions more in the positive direction or the negative direction you know which which way is it sort of being pulled um and what i use here i use this term i use a um a log term um if you're if you're a physics person you might think you know if if these if

this was like a force vector in physics a lot of times you use like an inverse square thing as it as you know so as you as you go further away from the the the object you know the the force is is less um i went for a logarithm instead because the we we don't i i don't think it matters too much the the um i basically i think that that inverse square is a little bit too um aggressive and so logarithm um seems to work for right now i'm going to talk a little bit later about this big data set that we're building to test some of the stuff out so we should actually be able to get

some good results on whether you know whether logarithm or inverse squares or or some other thing is is a better way to to factor the the pull on that function but basically what we're saying is we're just taking all of the all of the functions that call this function or that it that that function calls those are the references and we're taking the logarithm of that distance multiplying by the sign and then taking the average of that so um i mentioned in the previous slide so i i kind of if if you were um following closely there i mentioned here we've got to eliminate external calls and if if you're somebody if you spend a lot of time in

um in a disassembler you probably are thinking okay like how do we eliminate external calls because we don't have any sense of that in the binary there you can't really see whether this was an external call or not you don't get to see like what's the pound includes and that information is there so what i do here is i do a i do a um a fixed threshold of 4k so which is is kind of a hacky way to get around that but it's basically saying anything so i know for a particular function you know if i assume that the maximum object size so that's basically saying what the maximum object size is if it's over that

i'm gonna i'm excuse me i'm just gonna just gonna throw it out and say it's it's external so there's there's room for improvement there so i'm basically just i'm taking everything that's you know further away than a certain size from that function and just just looking at the functions you know within that boundary i'm also looking for uh so i have to detect edges then and the way i do that is i just look the the edge detection metric looks for a negative trend and then a switch back to the positive um turns out to be at about um a change of two with this score and we call that an edge um we just kind of

made that up seems to work [Music] um so this is this is for a particular binary that i was working on this is this is a on the bottom this is kind of the full thing and then there's a zoomed in view and um you can see that this cut this kind of holds kind of works well here um so here here's here's a very nice example and this partic this was cool this was a this was a real binary that was working on evaluating and it had um it had some assert statements in there so with with file net you know so with um file names left in uh in the calls to to the assert function

and so um we were able to kind of from from those file names we're kind of able to validate somewhat what you know whether whether this was working correctly so so the names at the top are from those assert statements and the uh um so that that's that's where we got those those name names from and we could see a pretty nice so here's here's an example where we have a very we have a positive score goes down to negative switches back um this ltc is another one where you have a positive score negative switch back pretty nice some of these other ones oops so you know here's one that's kind of messy um some of these this this is kind of

interesting here this p1 so this this is like the main thread of the whole thing um i had a lot of different functions so it's like about this is on the on the x-axis just function number here so it's it's uh 40 functions or something like that um and at first it was like oh is this is this really you know is this really working but what's what's cool about this is what it actually shows is that there even though this was originally one file they put a bunch of different kinds of functionality within that file so it's actually showing you some boundaries between um functionality within the within that original c file um so played around with it it's kind of

you know this this this was kind of like the the first thing that i got to run it on that seemed to really um first thing i got to run it on in it and it seemed to really validate the approach um okay so so far this has been all kind of you know theoretical and mathy right but so if you're an analyst okay so what why do i care about this um and that this is the real cool part right here um so once you once you find your object file boundaries even if they're you know they're not they're not perfect they're close right um usually we work it with call graphs right we you

know if you're in ida or ghidra you do one of these things where i take a function and i want all of the cross cross references all the functions that eventually call this function or um you know i i take um you can use plugins and things to find a you know just pass through you know from one function to another function or something like that um sometimes you can find like a you know you find a main entry function and i want all the all the functions that are called by this function so i get this giant nasty call graph of the whole the whole binary so instead if i can find those object file graphs

instead of that big nasty call graph binary i can i can now just take i can simplify it and graph whenever one of these objects calls another object i make that that an edge so i sort of bring it up a level so that's that's this guy um so each of these things that we detected and um at first we came up with we we you know these names came from just sort of looking at the at the binary but i actually have in the in the code later some stuff that that auto tries to to come up with the name of the um the binary based on on strings but um so the the cool part so you got this you get

this graph this gives you essentially this is essentially a automated um software architecture graph um and you can immediately you know you can immediately pick out um you know some some uh interesting relationships here that you wouldn't see in a call graph uh you you know the call graph on this thing is so complicated it's really nasty um just some some things to point out here so like here's a configuration this was a c plus you know configuration code you know look read stuff out of a config file and and set variables so you can see okay the reason everybody's calling that is is uh you know they they all need to read some configuration do something with it

down here here's a an events thing so this was just functionality to like register for a timer or a callback and so you can see them all calling that um and another interesting bit here oops here's a whole bunch of code so this ends up let me back up here this is um from here to about here uh you know so so almost a quarter maybe of the of the binary and we can see it right here these these modules that didn't have any strings and you can you can see that actually the there's there's one call here to one of these functions but otherwise nobody calls these functions this whole sub graph except for this configuration

file so you can immediately say well if i understand kind of what the config this is doing i don't have to look at any of this code if i understand this interface i can just sort of ignore all this stuff you know and then i can ignore basically a quarter of the program that way so just that's just something cool that you can you know you can do with this immediately um this this runs with lfa it runs automatically um right away on your on your um binary and you get you know you get this call graph to look at so [Music] pretty cool um here are the results uh so so far which i'm going to talk talk a

little bit about this later the there isn't a lot of we don't have a lot of ground truth data sets to run tests like this on in order to really have a data set you need source code and binary and ideally for me you'd have source code binary and then your intermediate compiler artifacts like your actual.o files and your map files and you know your symbol files and you keep that all together we don't really have any data sets out there that have all those things in them and so this was my first shot at doing something for that which was coming up with basically compiling on my own a bunch of different embedded firmwares i started out with gnu chess

for some reason i'm not sure why as an x86 target the rest are sort of embedded firmwares and just to make a data set to test this out on and this worked this kind of you know validates the approach i had a longer explanation of how these scores are calculated the the idea is that gaps are gaps are sort of anywhere in the code that scores aren't calculated for under lap is just a term i made up for disjoint areas so so places where the the the um where we exp uh or where we describe the object file in the actual object file you know don't line up so obviously a higher match score is better

and a lower underlap score um is better uh so we we actually have pretty good um you know so um some of these you know 90 85 it's like okay that's pretty decent um some of the things so like the reason that the the px4 firmware score low is that this this gap number is high so there's um there's a lot of like there's actually in that firmware there are functions that where the function itself this is how it's written is larger than 4k so we threw so we threw it out here in the some of these other ones are low um [Music] these two are low with a higher underlap score because whenever you have um so in c plus if you

have getter and setter functions um it or you know they're basically you know functions that access their they they live within a file but they're they they access global data and they're used by people outside that file they tend to this tends to link those functions up with the basically the previous file especially if they're you know they're usually at the top of the file and they're called by they're not directly called by things in the in the file so that's kind of what the um that that's kind of why um so so good fat has those and gnu chest has those gnu chests at c plus plus and that's what makes a low score so

probably that the takeaway from that was maybe we want to factor in global data references as kind of like a follow-on score [Music] any questions on that i know it's kind of kind of dry material um [Music] um well what do you mean if um yeah okay so for the for the um camera so the question is yeah how did basically i guess how did i compare or how did i come up with a before and before and after or did i base it on binary and um symbols or how did i do that yeah so so for um uh so for the good fat yeah so you you can download the source actually so all of these are open source

and so the the way we did that is we we took the source built the binary in the process of building the binary got the map file um so that's the ground truth map map file right and then we run our code on the on the binary without the benefit of the map file so basically our code tries to guess a map file is is essentially and so then we just compare two two map files so um that that's sort of um how it's done okay um so this is a um this is a new um algorithm that i that i came up with that i haven't added to the lfa code so i'm going to talk about later but all of the

lfa code is on on github you can download it it works as an ida plugin i'm hopefully going to be porting it over to geidra um now that gedra is out there hopefully maybe this summer and hopefully we'll get this in there too so we had played around with graph algorithms for a while um graph algorithms are cool but they tend to be computationally intensive um and we we tried a bunch of different things and they were generally very slow and didn't work really well so i tried to come up with a an algorithm to possibly be lfa and so this is what i came up with the idea this is this is really simple

and the the idea is we still want to keep we we want to we want to do a you know we want to do a graph segmentation algorithm but a lot of times when you do that you don't you don't keep track of how they are how those mod or how those functions the the the nodes in the graph how they are um organized in the binary so a lot of times when you generate this graph it in instead of saying okay i know these things are all organized in this linear direction it turns into a sort of two-dimensional graph and the algorithms try to segment that thing without really taking into account that that original

organization so what i try to do is develop a um you know normally in in in graphs you're doing like a minimum you're trying to minimize a a score this and you make a cut based on a minimum score this is a maximum cut approach the idea is so if i if i look at all of the calls between functions here and i take their average so for for a particular cut location um so so um take this this this here um i'm looking at all of the all of the calls that cross this this edge okay and i'm taking their distance and then averaging that distance it's actually really really really simple and what that's giving you is it's

giving you a it's giving you there we go um it's it's um giving you an idea of where um where where you have where you might have a gap between between relationships so you can see i don't really have you know everything that's transiting here you know i and you can kind of kind of visually see that in this this picture this is just sort of a made up example but like i have related stuff here i have a related stuff here but anywhere where i have right don't have anything here and most of the things most of the calls transiting this edge are big then you know okay maybe there's a a gap in functionality here

and so so we basically just calculate that score take the maximum score make a cut here and then we remove these edges and run the algorithm again on the on the sub graph in this sub graph and um [Music] and we and we recurse until we've cut it up you know just to threshold we basically just have to pick a threshold of size and then say we're not going to cut anymore that's all i had on that um so this actually i think this might actually work better than lfa it's going to it's gonna be a little bit um more computationally intensive uh so lfa runs in if you're a if you're a big cs person

you know runs an order in um with n being your number of functions um this is this runs in n log n so it's better than n squared but um it's going to be um you know it's still going to be more uh computationally intensive but might end up giving you better results um so okay so you know what's next what are we gonna do um i mentioned i gotta i need to implement max cut um and i need to i'm hoping to so a couple of people working on um ghidra and ida interoperability layers which would be great so i'm kind of hoping to wait for one of those to to win out and then port my code to that

so you'll be able to run it on ghidra and ida i'd love to build a nice um respectable gui uh for it um one of the things so i mentioned the the guessing the name of the um the object file one of the things we've got in there now is we've got the ability to guess the object file name based on common string references so we've got some simple code that just goes through all your all your strings within that object file and says what are the common um what are the common words what are the common um doublets triplets and then says here's a guess at what this at what this um what this object file is

and so if you have a lot of co or a lot of um like debug prints it actually works pretty well so um right now that though you kind of you you get some script output and you can then run those scripts on your thing but you don't get to really interact with it so it'd be nice to build a gui to do that and so i might i'd also like to get it tested more on this project i'm working on called all-star um so as and so as i mentioned we don't have a great data set out there in fact there's no there's no real data set out there and you talk to folks in the community

everybody kind of acknowledges that this is a problem um it it's it's you know people kind of have made some some small data sets for their own research but you know if you can imagine um you know pretty much any other field where machine learning or deep learning has been applied you know there's there's usually some shared large data sets that everybody can kind of do do their research on compare notes and all that kind of stuff so the next thing i'm working on is what we're calling all star which is basically just like in in one phrase it's it's build all the things so we're taking debian um debbie and linux which has uh about 30 000

packages in it um and we're gonna build it for um so so theoretically with debian this is definitely theoretically in quotes you can compile all those packages for all of the architectures that debian supports so you can compile it for x86 arm mips ppc and ibm s390 and so the idea is build all those packages for all of those architectures save all your compiler artifacts save header files shared libraries and get as much as you can through the compile process um so get your rtl from from gcc um get get gimple which is gcc's like sort of high level representation and so we actually have a working right now we have a working um prototype it so it extends the

dot cross project which is which is a basically a set of docker containers that um that have cross compilers and we just extended that to be able to build um debian packages and uh it kind of works that's that's like that should be my like that should be my tagline is like it kind of works um and so we've got in in in about three days so this is this is running it on a single core obviously this is going to need to get run kind of massively in parallel but i i needed to kind of walk before before i ran with it in one week i was able to build 550 packages and um out of that we got about 80

binaries that built for all five architectures so that's that's where the theoretically debian builds for those architectures is that the the packages um a lot of time like every package will build for it for 64-bit x86 every time um but a lot of times they don't really they're supposed to um you know they're supposed to look at your compiler variables and other config stuff and you know in order to figure out what architecture to build for and a lot of times they don't so if that holds up we could expect to get about about 4 000 um binaries which which would be pretty decent if um i'm hoping i'm hoping to be able to figure out

some bugs and maybe we'll get um you know maybe we'll get a little bit more if we could get say eight or ten thousand that out of the out of the thirty thousand that would be um pretty decent um so here's the here's the link to the um to the code and um yeah please um uh download it check it out um i'm on i'm on twitter a lot of people bug me on on twitter when they they um you know feel free to to hit me up and and uh you know give me a give me a review uh you know let me know if you if you tried it out if it works for you if it doesn't

work um all of the above um and i think yep that's all i got so all right thanks a lot guys any uh i'll take take questions for a little bit here and we can also take them offline later [Music] um so the question was yeah i have some some errors or some false positives and error detection and the question was uh what's what's my current approach is it is it making the error detection better or other methods to make it better um i i actually don't have any um i don't have any current um funding uh for for right now so really my my um my hope is to get this data set built

and then to kind of loot back to lfa and then try to run it you know on on run it on that data set try out more more things but so hopefully once i have that data set it'll be easy to run you know right now i can only sort of run it on five things and you know it's it's not very good representative sample but if i can run it on you know on 4000 things um then i can pretty easily tweak different different things and and and if it if that makes it better across four thousand things and that's that's probably a good indication that you know that that tweak is actually better so that that's sort of my

um that's my thought process right now um anybody else i know i'm standing between you and lunch hey question from the little guy in the in the back [Music] yeah we can we can go buddy i'm hungry too yeah we need to get some uh some lunch here

all right thank you guys