GT - The New Cat and Mouse Game: Attacking and Defending Machine Learning Based Software - Joshua Sa



Hey, I'm Josh Saxe. My day job is as a data scientist at Sophos, and the talk I'm giving today is entitled "The New Cat and Mouse Game: Attacking and Defending Machine Learning Based Software." I'll start by introducing where I come from: my team. That's me on the upper left, and these are all the people I work with. Our group is a mix of machine learning scientists and engineers who help build prototypes, and our role within Sophos is to build machine learning models that go into our firewall products, our sandbox product, our endpoint security products, that kind of thing. We are hiring, so if you're interested, let me know. [Laughter]

Okay, I'll start with an outline of the talk. First, I'll discuss why I think machine learning security matters a lot, talking about the increasing role of machine learning in the technology landscape. Then, to set up my discussion of machine learning security, I'll give a brief overview of how I see machine learning, just in case there are machine learning neophytes in the room, to make sure everybody's on the same page. Then I'll talk about machine learning attack and defense, going into a little bit of the academic literature on attacking and defending machine learning models. Finally, I'll give a case study where I show how I can attack a machine learning model that we actually built and are using at Sophos, and talk a little bit about defense too.

Okay, so let's get into it. This quote is a play on the common tech aphorism "software is eating the world," which maybe some of you have heard. The idea is that since the '80s and '90s, software has been popping up in unexpected places, and it's now ubiquitous throughout our society, whether in the fast food industry or in hospitals: places where software wasn't a key factor and now it is. As security experts, we care a lot about software wherever it pops up, and about software security. I think we're going to see machine learning pop up everywhere in a similar way over the next couple of decades. One way of framing this is that we've had a series of industrial revolutions over the last couple of centuries, each driven by a new technology: steam power in the 18th century, mass production driven by electricity in the late 19th and early 20th centuries, and then the IT revolution that our generation has lived through.

A lot of economists and technologists are saying, and I tend to agree with them, that over the next few decades we're going to see a big shift in the way we live our lives and run the economy based on machine learning and artificial intelligence, whether it's the transportation industry with self-driving trucks and cars, or education with new platforms that rely on machine learning. As machine learning becomes more important, machine learning security becomes more important too. Our community tends to see cybersecurity risks everywhere software exists.

increasing we need we're gonna need to sort of learn more about machine learning and think about the site of the cyber security risks that pertain to machine the machine learning as well okay so I'm gonna bet so before talking about machine learning security is when I back up and give some machine learning basics how many of you guys would say you're pretty fluent and the way say like neural networks or support vector machines work ok that's what that's what I was hoping you said so a lot of people aren't says that hopefully this will be helpful to you guys okay so I'm gonna today to try to illustrate some of the basic ideas behind gnashing there I'm

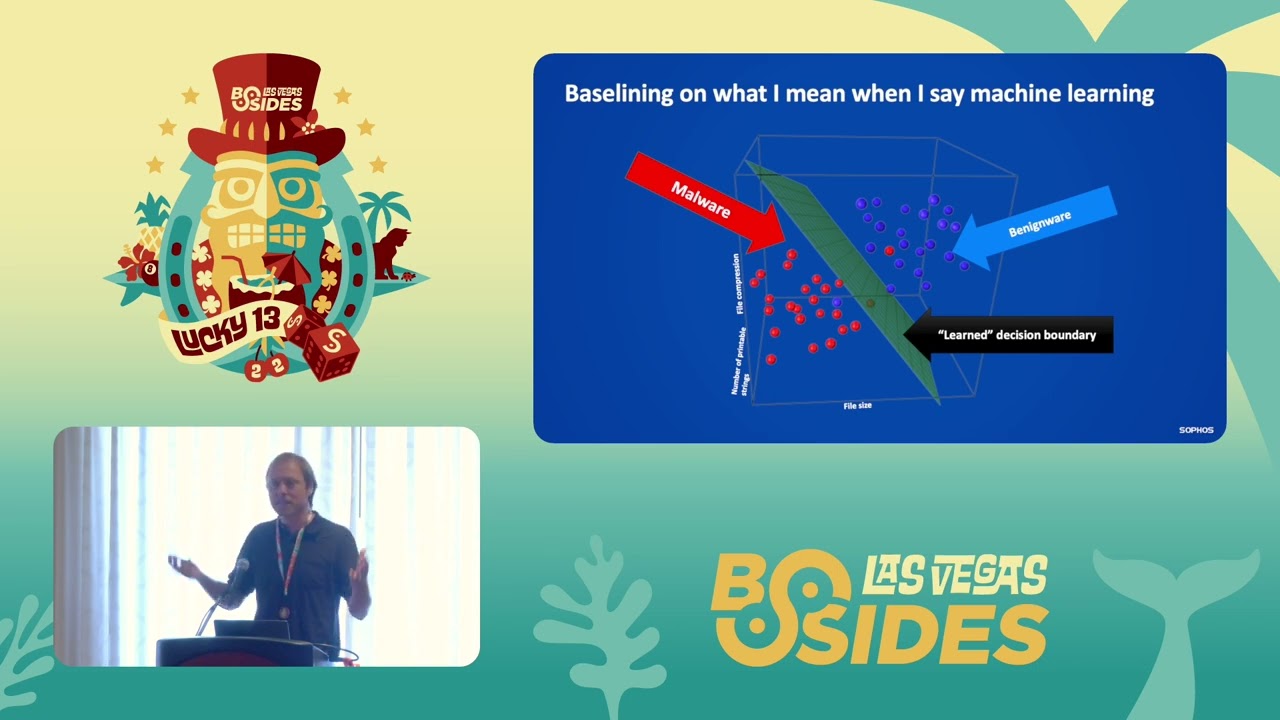

gonna walk through a toy example since this is a security audience and we're all security people in them to walk through a use case where we build a machine learning system that detects malware so to get into this example so he says imagine I want to build a malware detector that just uses three attributes of software binaries to detect whether or not the min the malware is a binary as malicious or not so so let's say to see these three attributes or how how compress the file is how big the file is and the number of printable strings in the file so so if I sort of if it let's say have a data set

of files and I extract these attributes from the files I can plot them in this three dimensional space right and suppose my malware and is all sort of in this area of the three dimensional space and my benign where is all on this area the three dimensional space right so the red dots here are malware and the blue dots here are benign where then I can pose the machine learning problem is it sort of as a mathematical problem right of identifying a plane right that separates that the blue dots from the that's right so we can pose as a mathematical problem then the problem is to solve it so in the image I just showed you there's there's a plane that

separates the examples in the real world usually we need to find a much more complete and complicated function right so like these lines in this image separate the the green dots the code let's call those benign where and the red dots let's call those malware right and the mathematical problem is is to find a function that sort of that defines all of these lines here let's call those lines decision boundary is right because we're making a decision if you're on this side of the boundary right your benign where if you're on that side of the boundary your your malware so everybody with me so far okay so there's this sort of geometric intuition to have how much in learning
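To make the toy example concrete, here is a minimal Python sketch of the three attributes and a planar decision boundary. The specific choices are assumptions for illustration, not the features of any Sophos product: compression is measured with `zlib`, "printable strings" means runs of four or more printable ASCII bytes, and `WEIGHTS` and `BIAS` are hypothetical hand-picked values rather than learned ones.

```python
import re
import zlib
from typing import List, Sequence

# Runs of 4+ printable ASCII bytes: roughly what the Unix `strings` tool reports.
PRINTABLE_RUN = re.compile(rb"[\x20-\x7e]{4,}")

# Hypothetical hand-picked plane parameters for the toy example (not learned).
WEIGHTS = (5.0, -0.05, -0.01)  # (compression ratio, size in KB, printable strings)
BIAS = -3.0


def extract_features(data: bytes) -> List[float]:
    """Map a binary to the talk's three toy attributes."""
    size = len(data)
    # Compressed size / original size: close to 1.0 means the bytes barely
    # compress, as with packed or encrypted payloads.
    ratio = len(zlib.compress(data)) / size if size else 0.0
    n_strings = len(PRINTABLE_RUN.findall(data))
    return [ratio, size / 1024, float(n_strings)]


def is_malware(features: Sequence[float], weights=WEIGHTS, bias=BIAS) -> bool:
    """Report which side of the plane w . x + b = 0 the point falls on."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return score > 0
```

Each file becomes one point in the three-dimensional space, and `is_malware` only asks which side of the plane it lands on. A training procedure's job is to find `WEIGHTS` and `BIAS` from labeled data instead of picking them by hand.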

Obviously, this is a two-dimensional case with two features, and this is the three-dimensional case. In real-world machine learning systems you've got thousands of dimensions, so you can't visualize them, but the same geometric ideas drive them.

So how do we create a decision boundary? I'm going to show a video, if the technology cooperates. Let's see if this works. There we go. Here's a video in which we watch a neural network, shown on the right, learn a good decision boundary for different data sets as part of a training process. In each of these animations, the neural network starts out without a good decision boundary separating the green dots from the others, and as the training process goes on, it learns how to separate them.

How does this learning process work? I think a good intuitive way of understanding it is through this knob metaphor. Imagine these knobs on the left: if you tweak them, they change the decision boundary in the image, sort of like an Etch A Sketch but with a million knobs. As you move the knobs, the decision boundary changes position, and the problem is to turn the knobs to just the right position so that the benignware is separated from the malware.

So how do we know how to turn the knobs? In neural networks, which are currently an important subarea of machine learning, we do it through some fairly simple calculus, in an iterative process called stochastic gradient descent. The basic idea is that we can use calculus to determine, for every knob, which direction we should turn it and by how much. But the calculus only tells us which way is downhill right where we are, so we can't solve the problem in one shot. Instead we take a little step, look to see how good the decision boundary is, and then take another little step by running the calculus again. Figuring out which direction to turn the knobs, and by how much, is done with an algorithm called backpropagation, and the iterative process by which we turn and check, turn and check, is called stochastic gradient descent ("stochastic" because each step uses a randomly chosen sample of the training data). There's a lot more depth than what I'm giving here, but this is an intuitive description of how it works.
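The knob-turning loop can be sketched for the simplest possible "network": a single linear layer with a sigmoid output, i.e. logistic regression, in plain Python. Here the knobs are `w` and `b`. For one layer, backpropagation collapses to the one-line gradient `(p - y) * x`; deeper networks apply the chain rule layer by layer to get the same per-knob directions. The learning rate, epoch count, and log-loss objective are illustrative assumptions.

```python
import math
import random
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_sgd(points: Sequence[Sequence[float]], labels: Sequence[int],
              lr: float = 0.1, epochs: int = 50,
              seed: int = 0) -> Tuple[List[float], float]:
    """Fit a linear decision boundary (logistic regression) by SGD."""
    rng = random.Random(seed)
    w = [0.0] * len(points[0])  # the "knobs"
    b = 0.0
    order = list(range(len(points)))
    for _ in range(epochs):
        rng.shuffle(order)  # "stochastic": visit examples in a random order
        for i in order:
            x, y = points[i], labels[i]
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
            # Gradient of the log loss: d/dw = (p - y) * x, d/db = (p - y).
            err = p - y
            # Take a small step in the downhill direction for every knob.
            w = [wj - lr * err * xj for wj, xj in zip(w, x)]
            b -= lr * err
    return w, b


def predict(w: Sequence[float], b: float, x: Sequence[float]) -> int:
    return int(sum(wj * xj for wj, xj in zip(w, x)) + b > 0)
```

Each inner iteration is one "turn and check": compute the gradient for a single example, nudge every knob a little, and move on.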

These two simple ideas, the decision boundary in this geometric space and the process by which we learn it through this knob tweaking, are really the foundations of the complex, modern neural networks used by the Facebooks and Googles of the world. You get really complex neural network architectures like the one shown in this image: this is a famous computer vision neural net called AlexNet, which involves many layers and hundreds of thousands of neurons. But what the whole system really amounts to is identifying decision boundaries between different types of images, and it's tuned through this knob-tuning process.

Okay, that's my brief spiel about how machine learning works. I'm going to move on to talking about machine learning security now, and specifically subverting machine learning classifiers. In the current state of the art, subverting machine learning systems is quite possible, and it's relatively easy. To dramatize that, I want to walk through an example of the way an attacker can subvert a computer vision system. Look at these images. We're going to talk about a computer vision system and the way in which we can trick it into thinking this school bus is something else. Imagine I'm an attacker, and my goal is to get a computer vision system to confuse the school bus for an ostrich, a bird. At a high level, the way I do that is I compute a delta for all these pixels: for each pixel, a small change to its value. The delta is pictured here; this is what I'm going to add to all the pixel values in this image to get a new image that will confuse the computer vision system. What I have on the right is the new image that results from taking this image and adding this specially crafted delta. It turns out there's really no visual difference to the human eye between these two images, but the one on the left is classified with high confidence by the computer vision system as a school bus, and the one on the right is classified with high confidence as an ostrich.

This is interesting. This is a toy example, but in a real-world case you could smuggle pornography onto Facebook, or wherever you're violating someone's terms of service, by using a delta like this, and computer vision systems would miss it. You can make your way through spam filters that look at images by subverting them in this way. So there are real-world consequences to being able to subvert computer vision systems like this. Let's go back to the decision boundary idea and think about how this attack works in a more mathematical sense.

Suppose we have this decision boundary, and it separates the world of images into school bus territory and ostrich territory. All of these red dots are images of school buses that our machine learning system has correctly placed in the red zone, so it gets those images right, and all of these green dots are examples of ostriches. The machine learning system has learned to discriminate between the two based on this decision boundary. The goal of the attack is to take an image of the school bus and somehow shove it over into this green zone without changing the way it's perceived by the human eye. Everybody following? So how do we do that? It turns out we can use the same kind of mathematical ideas that we use in the training process to craft a delta like the one I showed, in order to shove the example over the decision boundary. To do the basic attack shown in the school bus example, you need access to the model code and all of the model parameters; basically, you need access to the model. In the real world, you can't always do that.
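One well-known gradient-based way to compute such a delta is the fast gradient sign method (FGSM) from Goodfellow et al. The sketch below applies a single FGSM step to a hypothetical logistic-regression "image" model with weights `w` and bias `b`, not to a real vision network, so that it stays self-contained. The white-box requirement shows up directly: the attacker needs `w` to compute the gradient.

```python
import math
from typing import List, Sequence


def logit(w: Sequence[float], b: float, x: Sequence[float]) -> float:
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def fgsm(w: Sequence[float], b: float, x: Sequence[float], y: int,
         eps: float) -> List[float]:
    """One fast-gradient-sign step against p = sigmoid(w . x + b).

    The log-loss gradient with respect to the *input* is (p - y) * w, so the
    attacker needs the model's weights: this is the white-box requirement.
    """
    z = logit(w, b, x)
    p = 1.0 / (1.0 + math.exp(-z)) if z >= 0 else math.exp(z) / (1.0 + math.exp(z))
    adv = []
    for xi, wi in zip(x, w):
        g = (p - y) * wi
        step = eps * ((g > 0) - (g < 0))  # move each "pixel" by +/- eps (or 0)
        adv.append(min(1.0, max(0.0, xi + step)))  # keep pixels in [0, 1]
    return adv
```

For example, with 1,000 "pixels", weights alternating between +0.05 and -0.05, and an input whose score is 1.0, an `eps` of 0.03 changes no pixel by more than 0.03, yet it moves the score by `eps * sum(|w|)` = 0.03 × 50 = 1.5, to -0.5. Tiny per-pixel changes add up across many dimensions, which is why the two images look identical to us but land on opposite sides of the boundary.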

If I'm attacking Facebook, or Gmail's spam filter, then unless I hack into Google's servers, I don't have access to the model. So that's an issue for an attacker in the real world. There's a way around the problem of having to have access to the model, though, which is what we can call a proxy attack. Let's say I don't have access to Google's spam filter, but I can send millions of emails through it and see what gets classified as spam and what doesn't. Then I can build my own machine learning model that attempts to replicate the results of Google's spam filter, and then I can do the school bus attack against my own model. It turns out that attacks against my own model, which has been trained to mimic Google's model, will reliably get through; at least in experiments, it's been shown that they can reliably get through the Google models. That's what's called a proxy attack.

So far I've been talking about images and the way we can subvert computer vision systems, and I haven't said much about programs, like malware versus benignware. That's because it's harder to subvert systems that classify malware versus benignware. The issue is that images degrade gracefully: I can change every pixel value in an image and shove it over the decision boundary, and it'll still look visually similar to the original. But with a program, I can't go and change every byte value; it just won't run. So a malware author who wants to subvert a machine-learning-based malware detector has two problems: they need to get through the machine learning system, and they also need their program to keep working. It's much harder to accomplish that task.
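The proxy attack described above can be sketched end to end in a few dozen lines. Everything here is a hypothetical stand-in: `black_box_label` plays the remote filter (its secret weights exist only so the example runs, and the attacker code never reads them), the surrogate is a logistic regression trained only on the black box's verdicts, and the final step reuses the gradient-sign idea against the surrogate.

```python
import math
import random
from typing import List, Sequence, Tuple

# Stand-in for a remote classifier (e.g. a spam filter). The attacker can call
# black_box_label() but never sees these hypothetical secret parameters.
_SECRET_W = [1.5, -2.0, 0.5, 1.0]
_SECRET_B = -0.25


def black_box_label(x: Sequence[float]) -> int:
    return int(sum(w * xi for w, xi in zip(_SECRET_W, x)) + _SECRET_B > 0)


def _sigmoid(z: float) -> float:
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_surrogate(queries, labels, lr=0.05, epochs=20,
                    seed=0) -> Tuple[List[float], float]:
    """Logistic regression fit by SGD to mimic the black box's answers."""
    rng = random.Random(seed)
    w, b = [0.0] * len(queries[0]), 0.0
    order = list(range(len(queries)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            x, y = queries[i], labels[i]
            err = _sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) - y
            w = [wj - lr * err * xj for wj, xj in zip(w, x)]
            b -= lr * err
    return w, b


def proxy_attack(x: Sequence[float], n_queries: int = 1000, eps: float = 0.5,
                 seed: int = 1) -> List[float]:
    """Perturb an input the black box flags (label 1) using only a surrogate."""
    rng = random.Random(seed)
    # 1. Query the black box many times and record only its verdicts.
    queries = [[rng.uniform(-3.0, 3.0) for _ in x] for _ in range(n_queries)]
    labels = [black_box_label(q) for q in queries]
    # 2. Train our own model to replicate those verdicts.
    w, _ = train_surrogate(queries, labels)
    # 3. Gradient-sign step on the *surrogate*, pushing toward class 0,
    #    in the hope that the perturbation transfers to the black box.
    return [xi - eps * ((wi > 0) - (wi < 0)) for xi, wi in zip(x, w)]
```

The 1,000 calls to `black_box_label` are the attacker's cost, and that query budget is exactly what limiting black-box access tries to deny.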

It makes it trickier, though it's still very possible, to get programs through machine-learning-based detectors.

I'm not going to talk too much about the academic literature on attacking and defending machine learning models, but I want to give you a sense of the back-and-forth going on in that literature. For example, the school bus attack is based on ideas from Ian Goodfellow, who's a superstar in the machine learning security space. He came up with a method that uses model gradients to subvert machine learning classifiers, so it requires access to the model. Somebody else came up with a defense called distillation that people were excited about and that appeared to work, and then somebody else came out with a paper, "Defensive Distillation is Not Robust to Adversarial Examples," which shows that it doesn't work: you can tweak the gradient-based method in a small way and get past it. There's a lot of interest in this area right now among computer scientists and a lot of back-and-forth, but defending against these kinds of attacks is basically an unsolved problem, and like many things in the security field, I think attackers have the advantage right now.

Okay, so the last thing I'm going to go through is a case study where I attack and defend a security machine learning model that we have in our group at Sophos. The background is that we've started a project within our data science group where we're going through and attacking our machine learning models, both the models we actually have out in deployment and models we plan to deploy, as a kind of red team exercise. Then we're working on figuring out defenses, because we want to be prepared for potential attacks in the wild.

The target is a model that we've presented before at conferences: the eXpose neural network. This is a neural network that we use to detect malicious file paths, registry keys, and URLs, as part of a larger defensive system at Sophos. Here are some examples of the kinds of URLs the system detects. The first one is a URL that points to a trojan named svchost.exe; the second is a phishing URL that attempts to steal people's Apple login credentials; and the third is a PayPal phishing link. So these look suspicious, yes? Okay, they obviously look suspicious to the human eye. Our idea was that a machine learning system should also be able to detect that they're suspicious, and it turns out (I'm not going to show accuracy results) that it works well enough that it's a model we use internally.

So I want to demonstrate how, if I'm an attacker, I can get past this model, starting with some naive attacks against it. My goal as a hacker in this case is to craft a phishing URL that will trick my mom or my grandpa into clicking on it but which also gets past the classifier. So I can't just randomize too much about the URL: it needs to look like a convincing phishing URL, but it also needs to get past my neural network. I'm going to start this demonstration by making up a phishing URL. This isn't a real phishing URL, I just made it up (a Wells Fargo customer support page on a web-hosting domain), and it looks suspicious to the neural network: it gives it a 97% probability of being malicious.

Here's a naive attack against this neural network that actually doesn't work: I take my URL and append some random suffixes, in the hope that it'll make the score lower. But it doesn't; the score stays about the same or even gets a little higher. So a random suffix doesn't work. Now let's try, instead of random suffixes, some benign words like "Disneyland" and "Jacaranda": I take the same URL and just tack these words onto the end. Again, it doesn't work. Fortunately for me, as the researcher who created the neural network, it's resilient to this kind of attack as well.
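The two naive attacks can be written as a small harness around a scoring function. Here `score_fn` is a hypothetical stand-in for the URL model (any callable returning a probability of maliciousness), and the alphabet, suffix length, and trial count are assumptions, since the talk does not give them.

```python
import random
import string
from typing import Callable, List, Tuple

# Hypothetical suffix alphabet; the talk does not say which characters were used.
ALPHABET = string.ascii_lowercase + string.digits + "-._/"

ScoreFn = Callable[[str], float]  # returns P(malicious) for a URL


def random_suffix_attack(url: str, score_fn: ScoreFn, n_trials: int = 1000,
                         suffix_len: int = 12,
                         seed: int = 0) -> Tuple[str, float]:
    """Naive baseline: append random suffixes and keep the lowest-scoring URL."""
    rng = random.Random(seed)
    best_url, best_score = url, score_fn(url)
    for _ in range(n_trials):
        candidate = url + "".join(rng.choice(ALPHABET) for _ in range(suffix_len))
        s = score_fn(candidate)
        if s < best_score:
            best_url, best_score = candidate, s
    return best_url, best_score


def word_suffix_attack(url: str, score_fn: ScoreFn,
                       words: List[str]) -> List[Tuple[str, float]]:
    """Second baseline: tack benign-looking words onto the end of the URL."""
    return [(url + w, score_fn(url + w)) for w in words]
```

Against the production model, the talk reports that neither baseline helped: the score stayed about the same or rose slightly.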

The more sophisticated approach I tried was a genetic algorithm that systematically searches the space of possible suffixes, iterating over thousands of examples and evolving suffixes that do well at lowering the score. After about 50 generations of evolution, that is, after having tried 50,000 examples, I can get the score down to 43% and 57% with these suffixes. They don't look that different from the random suffixes, but they were evolved through this genetic process. If I take the evolution even further and double the number, so I've tried about 100,000 examples, I can get the score down to 8%. At this point, the neural network basically thinks the URL is benign, even though it still looks like a phishing URL, so I'd say the attack was successful.

Just to give some insight into the genetic algorithm (obviously I can't cover it fully in a 25-minute talk): the basic idea is that I model my suffixes as individuals in a population and go through a series of generations. I start out by creating a thousand random suffixes, look at the ones that minimize the score as much as possible, and mate them, combining their character sequences. Then I do another generation where I try them again, and through this evolutionary process I get good suffixes. In the animation, you're seeing individuals in the population; to carry the example further, imagine that in every generation, the ones that survive are the ones that get higher up on this hill, and think of the hill as my ability to minimize the score my URL classifier spits out.
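The genetic search described above can be sketched as follows. This is a generic genetic algorithm written for illustration, not the speaker's code: the alphabet, elite fraction, one-point crossover, and mutation rate are assumptions, and `score_fn` is again a hypothetical stand-in for the URL model. With the defaults, the query budget is roughly `pop_size * generations` = 50,000, the scale mentioned in the talk.

```python
import random
import string
from typing import Callable, Tuple

ALPHABET = string.ascii_lowercase + string.digits + "-._/"  # hypothetical


def evolve_suffix(url: str, score_fn: Callable[[str], float],
                  pop_size: int = 1000, suffix_len: int = 12,
                  generations: int = 50, elite_frac: float = 0.1,
                  mutation_rate: float = 0.05,
                  seed: int = 0) -> Tuple[str, float]:
    """Genetic search for a suffix that lowers score_fn(url + suffix).

    Requires pop_size >= 2 and suffix_len >= 2.
    """
    rng = random.Random(seed)

    def random_suffix() -> str:
        return "".join(rng.choice(ALPHABET) for _ in range(suffix_len))

    population = [random_suffix() for _ in range(pop_size)]
    n_elite = max(2, int(pop_size * elite_frac))
    for _ in range(generations):
        # Fitter = lower maliciousness score ("higher up the hill").
        population.sort(key=lambda s: score_fn(url + s))
        parents = population[:n_elite]
        children = []
        while len(children) < pop_size - n_elite:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, suffix_len)  # one-point crossover: mate two suffixes
            child = list(a[:cut] + b[cut:])
            for i in range(suffix_len):  # occasional random mutation
                if rng.random() < mutation_rate:
                    child[i] = rng.choice(ALPHABET)
            children.append("".join(child))
        population = parents + children  # elites survive unchanged
    best = min(population, key=lambda s: score_fn(url + s))
    return url + best, score_fn(url + best)
```

Keeping the elite parents in each new generation means the best score never gets worse from one generation to the next, while crossover lets useful character subsequences spread through the population.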

I also explored some defenses to my attack. The best defense I've come up with so far is pretty unsophisticated: basically, if I retrain my model on a different subset of my training data, the attack no longer works. We have a lot of URL training data: we currently train our URL model on about 100 million URL examples, about half malicious and half benign. So it's not hard for me to take a subset of 50 million of those, or, if I wanted, to train on 10 million each and have ten different models, and those are somewhat resilient to this genetic algorithm attack. Basically, my score goes all the way back up if I train on a different subset of the data.
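A minimal sketch of the subset idea, assuming a generic `train_fn` (hypothetical: any function that turns a list of labeled examples into a model). The rotation policy in `model_for_period` is an illustrative assumption; the talk only says that a model trained on a different subset restores the score.

```python
import random
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")
M = TypeVar("M")


def disjoint_shards(examples: Sequence[T], k: int, seed: int = 0) -> List[List[T]]:
    """Shuffle once, then deal examples round-robin into k non-overlapping subsets."""
    order = list(range(len(examples)))
    random.Random(seed).shuffle(order)
    return [[examples[i] for i in order[j::k]] for j in range(k)]


def train_per_shard(examples: Sequence[T], k: int,
                    train_fn: Callable[[List[T]], M], seed: int = 0) -> List[M]:
    """Train one independent model per shard (e.g. 100M URLs -> 10 x 10M)."""
    return [train_fn(shard) for shard in disjoint_shards(examples, k, seed)]


def model_for_period(models: Sequence[M], period: int) -> M:
    """Hypothetical rotation policy: deploy a different shard model each period,
    so a suffix evolved against one model is scored by another."""
    return models[period % len(models)]
```

Disjoint shards maximize how different the models are from one another, at the cost of each model seeing only a fraction of the data.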

The problem with this approach is that I'm not training all my models on all of the data, so model performance is going to suffer as a result. I don't think my solution is perfect, but it's the solution I've come up with so far.

I have two minutes left, so I want to end with some common-sense wisdom on defending against adversarial attacks. I think it's important to attack your own models, see where their weak spots are, and fix them. Don't give attackers access to your model if possible; that makes it easier for them. Don't even give attackers the ability to query your model with thousands of examples; that is, don't give them black-box access either. That also makes it easy for them, as with the proxy attack I showed earlier and the genetic algorithm attack I just showed you. Take a layered defense approach: defense in depth helps, because no system is perfect. And for those of you who are machine learning researchers and computer scientists out there, this is a really interesting area to work in right now. It's a hot area and a high-impact area, so do research in this area. That's it; thanks a lot.

I don't know if it's time for questions and comments. Okay.

Yeah, so I'm assuming the attacker doesn't have the model code or the model software but can query the model. Say, for example, we released the model as a web service from Sophos, maybe a paid web service for our customers, and the attacker could get access to that. They could upload 100,000 URLs and get the scores back, but they would need to be able to really brute-force the system and get scores back for all of their URLs before they'd get through. You'd have to be able to query the model a lot, so that's one limitation the attacker has in this case.

[Audience] Is there a difference between being able to query the model for legitimate reasons, like I buy a license and I can query the model, versus I buy a license and I query the model maliciously?

Sorry, can you...?

[Audience] If I bought the software, I could be buying it to defend my network, or I could be buying it because I want access to the model so that I can attack the model itself. How do you provide the model for the positive use without allowing the negative use?

That's a good question. I'd have to think about it.

[Audience] You said that training on different subsets of your data helped defend it. Did you look at a bagging-type approach using multiple models, and did that work?

In this case we're not doing bagging. The hand-wavy wisdom in the neural network context is that you can get some of the same effects as bagging using a technique called dropout, which adds noise to your neural network, so it's not something we've explored here. We do baseline all of our models against a bagging approach called random forests, and this model outperforms random forests.

[Audience] For your genetic algorithm, at each iteration are the URLs fairly similar, just a few characters different, or are they drastically different overall?

You mean when they mate? Are they very different? I don't know; it was a quick project, I probably spent two days on it, and I never introspected into the algorithm to see how similar they look. I will say that if you watched the leaderboards as the solutions evolved, you'd see a lot of regularity: it would wind up finding character subsequences that stuck around generation after generation. So there was probably a lot of similarity between the parents and the children.

[Audience] So that might be a good way to go for the defense question asked earlier: flag it if you get a lot of very similar queries.

Yeah, maybe. So you're saying that if there's some global minimum the optimizer is finding, we could basically blocklist it, or train on it, and that would help defend against this attack. Yeah, that makes sense; that's a really good idea.
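The audience suggestion above, noticing many very similar queries from one source, could be prototyped roughly like this. The prefix-similarity rule, thresholds, and per-client window are all hypothetical choices for illustration, not something the speaker described building.

```python
import os
from collections import defaultdict, deque
from typing import Deque, Dict


class SimilarQueryMonitor:
    """Flag clients that submit many near-identical queries (hypothetical policy).

    Evolved or brute-forced URLs tend to share a long common prefix (the base
    phishing URL) and differ only in the suffix under search.
    """

    def __init__(self, window: int = 1000, min_shared_prefix: int = 20,
                 max_similar: int = 100) -> None:
        self.min_shared_prefix = min_shared_prefix
        self.max_similar = max_similar
        # Most recent `window` URLs per client; older ones fall off automatically.
        self.history: Dict[str, Deque[str]] = defaultdict(lambda: deque(maxlen=window))

    def observe(self, client_id: str, url: str) -> bool:
        """Record a query; return True once the client looks like it is searching."""
        recent = self.history[client_id]
        similar = sum(
            1 for prev in recent
            if len(os.path.commonprefix([prev, url])) >= self.min_shared_prefix
        )
        recent.append(url)
        return similar >= self.max_similar
```

A shared-prefix rule is cheap and matches the suffix-search pattern in the talk, but an attacker who also varies the front of the URL would slip past it, so it is one layer among several rather than a fix.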

[Audience] Do you see this as complementing a web reputation service, or are you using it as a replacement for one?

I don't want to speak to Sophos's product plans. I will say that Sophos has a reputation service, and the role of this neural network is a separation of concerns: the reputation service is for stuff we already know about, and this URL model is for stuff we don't know about yet, stuff that isn't on a blacklist, because it's not detecting based on prior knowledge of the URL; it's detecting based on the semantics of the URL. Does that make sense?

So I think it's complementary to a reputation service. You definitely want to block stuff you know is bad, and you also want to block stuff you don't know about yet, and that's what the neural network is for.

[Audience] When you showed the slides where some of the URLs got caught and others did not, what was happening in the neural network that caused that difference? It seemed like they would have been scored the same.

That's the million-dollar question. Neural networks are famously black boxes; it's hard to know why they do what they do.

We try our best. Rich here is doing some work on explaining why they make the decisions they do, but right now I would say I don't know; it's a mystery. Thanks.

In this case, you could say the neural network derives the features. We basically feed in the characters as integers, ASCII-style (well, we're using Unicode), and it discovers what's useful. It's a convolutional neural network. We also have a paper about it; I can give you the link, and we can talk more about it afterwards. Thank you.

All right, we are out of time. If there are any more questions, please follow up afterwards. Thank you, folks. [Applause]
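The last answer describes the URL model consuming raw characters and learning its own features. A minimal sketch of that kind of input encoding follows; the maximum length, vocabulary size, padding id, and out-of-vocabulary handling are assumptions, and the convolutional layers that would consume these ids are not shown.

```python
from typing import List

PAD = 0  # hypothetical padding id


def encode_url(url: str, max_len: int = 200, vocab_size: int = 128) -> List[int]:
    """Turn a URL into fixed-length integer ids for a character-level model.

    Code points 1..vocab_size-1 are used directly; anything else (NUL or
    non-ASCII, for example) maps to a single out-of-vocabulary id, vocab_size.
    The sequence is truncated to max_len and right-padded with PAD.
    """
    ids = [ord(c) if 0 < ord(c) < vocab_size else vocab_size for c in url[:max_len]]
    return ids + [PAD] * (max_len - len(ids))
```

A fixed length lets URLs be batched for a convolutional network; an embedding table of size `vocab_size + 1` would then cover every id this function can emit.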