Cryptocoin Miners vs ML - John Callahan


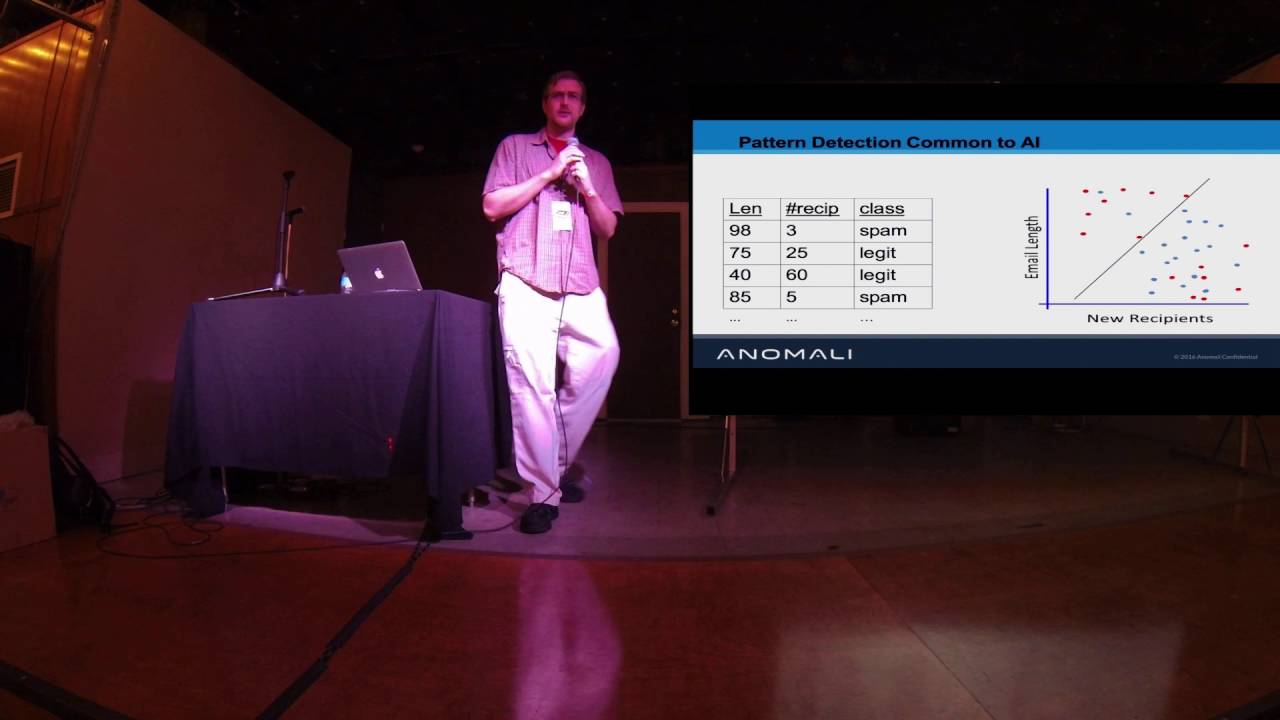

Cool. Guys, welcome. Most of you look kind of, sort of awake right now at the end of the day, so welcome. My talk is ML versus cryptocoin miners. I'm going to go on a little bit of a soapbox here in a minute about why machine learning is a dumb term and what I'm actually doing is just stats, statistical analysis, nothing special. But a quick about me: my name is John Callahan. I'm a principal AppSec consultant at a boutique consulting firm based out of DC. We do a lot of work with a lot of different people: we work with a bunch of different [unclear], banks, the insurance industry, we're all over the map. Personally, my bread and butter is AppSec, but I also

lead our cloud practice. I do a lot of container security with Kubernetes and OpenShift and Nomad and all that good stuff. I'm also a huge Python fan; that's really my bread and butter, but I've recently transitioned to Golang too, as kind of my alternative. If I need something to run fast and it needs to run everywhere, I write it in Go; if I need to write something quick, I write it in Python, and if I'm doing any data science or any math or anything like that, I'm doing Python. I do really like math. Math is really cool. I am terrible at it. I like to call myself a mathematical skid, because most of what I do is I just plug

and chug through NumPy and let that handle all the heavy lifting for me. I don't really know what I'm doing half the time. I'm also a big metalhead, so if anyone out there likes metal and wants to come talk to me afterwards, yeah. Okay, so machine learning is cool, I think. There's going to be a lot of graphs and stuff, so to lighten things up beforehand we're going to do a lot of memes. So artificial intelligence is dumb, and I mean, this is tech companies in a nutshell: an if statement is machine learning, and pull the hood off of a big pile of if statements and that's artificial intelligence. This is me on the left, a real-life picture from a couple years

ago, of a data scientist, that bastardized something or other. I'm not a very good software engineer and I'm not very good at math, so I'm kind of a half-breed muggle of sorts, or something like that. This one was my personal favorite because, again, I am terrified of math and not very good at it. So yeah, let's get into it a little bit. As a quick intro, the whole idea of this talk is to build a very niche IDS kind of solution for finding cryptocoin miners within an AWS architecture. Within the last couple of years, I'm sure everyone here has become well familiar with Bitcoin and all the other alternative coins that have come out.

Well, it provides a lot of different things. What it's really done for the black hat community is it's given you a very direct path from server compromise to cash payout. I don't have to worry about trying to sell your server to a botnet farm or something like that, or doing ad click farming or anything like that. I can compromise a server, turn on a miner, and then immediately start reaping profits off of it. So it's become a huge attack vector, or not an attack vector, but a huge issue for servers that have become compromised. That's what happens to them: all of a sudden their CPU gets throttled, your RAM

goes through the roof, and your server starts underperforming. So I went and started looking specifically at Monero and Verium, which is very, very much dead now; it's a dead coin. I started this research a while ago. I picked them specifically because they're CPU-mineable coins, and again, I'm working specifically within an AWS architecture. The reason I focus on CPU-specific coins is because preventing GPU mining is really easy: just don't let GPU instances run in your architecture or in your environment. I can almost guarantee that, like, 99% of you out there have no legitimate reason to have a GPU instance running. Just block it. I mean, just use the IAM policies and don't let it run, and you're

done. It's not a threat anymore. So I really wanted to focus on CPU stuff: what happens if your m5d.large gets compromised and starts running a Verium miner or a Monero miner? So that's one part of why I wanted to look into this. The other piece is that AWS has what's called VPC flow logs. I'll get into a little bit more of what flow logs actually are; suffice to say they are packet captures, to a degree, of the network traffic that's going on within a particular VPC. If you're not familiar with AWS, a VPC is just your VLAN. It's your virtual private... virtual private something, I can't remember what the C

stands for. It's your VLAN, and you segregate it into your subnets and whatnot. Mining traffic is also very pattern heavy, pattern rich, which makes it an excellent candidate for using different techniques to try and flag it, to pick it out from what would be considered normal traffic, or non-mining traffic. So I wanted to look in and see if it's possible to build an IDS. It's not really an IDS; I'm just looking for one particular thing. It's also worth noting that, if you are familiar with AWS, there's this thing called GuardDuty that will supposedly do this for you. It will check to see if you have any miners running on your network. The only

thing it's doing is checking domain lookups and checking against a known-bad IP list. That's it. So all you have to do to get around that is set up a mining pool on your own domain: set up your own private mining pool that hasn't been flagged as a bad IP and that's not using a publicly known mining domain name, and you're done. You just bypassed GuardDuty; it's as easy as that. So I wanted to build a more robust solution that was built off of the traffic patterns themselves, instead of something that's just a key-value lookup. So I set about and started collecting data,

because, I mean, when you're doing any kind of pattern recognition or machine learning type stuff, you have to build your data set to begin with. So I went and took 12 different cryptocoin miners and stood them up on a variety of different c4 instances, from large through, like, 4xlarge or whatever the largest one is, one of each. I set up a Monero miner on each and just let it rip for 24 hours, captured the VPC flow logs, and made a note of the private IP of each miner, I'm sorry, the public IP of each miner within the VPC. I also got lucky enough. I spent a couple of weeks going into

every Slack channel that I'm in, begging everyone I knew who was on AWS: can you please just send me your VPC flow logs? I promise I'm not going to do anything with them. I know it's kind of sensitive, but just give me your data. Finally someone was willing to give me a good chunk of their VPC flow logs. They just dumped the whole bucket to me, which was really generous of them and pretty much enabled all this research. It was about three weeks' worth of traffic, which turned out to be about a thousand different machines with 38,000 different unique connectivity streams across those three weeks. So those three metrics at the bottom

don't make any sense yet; we'll get into that in a second. So, VPC flow logs: what they are is aggregated 5-tuple pcaps. Your typical 5-tuple pcap is your source IP address, your destination IP address, your source port, your destination port, and the amount of data sent, or well, rather, the packet itself. In this case, what AWS does is it aggregates all the traffic that occurs over that unique connection between each source IP and port and destination IP and port, and then collects the number of packets that have been sent and the size of the packets that have been sent, and over those 10-minute windows it dumps that aggregate into

either CloudWatch or an S3 bucket, which again provides an interesting challenge, because I don't have raw pcap data to work against. This is a good thing, because I know I can build my models using far less data: instead of having to work off of hundreds of gigabytes' worth of pcaps, I can work off one or two gigabytes' worth of VPC flow logs. But it also provides a unique challenge in that I have to go back and rebuild the TCP streams, since you end up with two log entries for each TCP stream: because TCP is bidirectional, you have one for each direction. But the VPC flow log looks like this: it's a

simple, space-delimited, single-line entry, and the second, bottom line gives you what each field's definition is. Most of that is pretty irrelevant to us, but the things that are important, again, are the IPs, the ports, the packets, and the amount of data. So the first thing we can do is just filter out anything that has REJECT on it. That second-to-last field there, the action, is either REJECT or ACCEPT, and the REJECT entries happen when a network connection tries to establish but is blocked by either a NACL or a security group. So if I try to SSH into a box that doesn't have a

public security group, it'll generate a REJECT VPC flow log entry, or if I try to get out and there's a NACL that's blocking it, it'll get logged as a REJECT. Since I'm looking for active mining traffic, it's very easy to just filter all of that out right away. I don't care about blocked mining traffic right now; I'm specifically looking at actively mining traffic. The other thing I can filter on right off the bat is the proto field, the protocol field. That 6 right there is the protocol, and 6 corresponds to TCP. So I want to look

for all the TCP entries. So again, I just dump and ignore every entry that doesn't have a 6 for the protocol, and right off the bat that filters out a bunch of my traffic, which makes it very easy to cut out a lot of the noise. Originally I was going to organize by IPs and ports, as opposed to just IP addresses, when rebuilding this. The problem is that the ephemeral ports on the client side of the connection can cause issues. Over the course of a ten-minute period, a mining client might end up using three different ephemeral ports, which can break the pattern recognition unless I'm able to

rebuild and say, okay, it's using this ephemeral port for three minutes, and this ephemeral port for four minutes, and this third one for another three minutes, which is a pain in the ass to aggregate and requires a lot of processing power. So instead I just aggregated things by unique IP addresses and analyzed things that way. Then, in order to make sure I was treating the same thing as the source, I went and checked each IP address: was it an AWS-owned IP address? If it was, I treated that as a source, or if it was a private IP address

within the AWS IP space, or a private IP address in the private IP space, I treated that as a source, and from there I went and rebuilt everything and reallocated each VPC flow log entry. The last piece you have to do, which I should have included on here, is this: you also see there's a start and end field, the third- and fourth-to-last fields. You can use those to correlate which two entries belong to the same TCP stream at a given 10-minute interval. So you tie those two back together, and now you have the total data sent across one TCP stream over ten minutes. And then after all of that you
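
A minimal sketch of the preprocessing described in this part of the talk, not the speaker's own code. It assumes the default 14-field (version 2) flow log record format, keeps only ACCEPT records with protocol 6, ignores ports, and merges the two directions of a stream by matching on the IP pair plus the start and end fields. As a simplification it treats only RFC 1918 addresses as the local side, whereas the talk also counts AWS-owned addresses. The names `parse_record`, `is_internal`, and `pair_streams` are hypothetical.

```python
import ipaddress
from collections import defaultdict

# Default (version 2) VPC flow log fields, in order.
FIELDS = ("version account_id interface_id srcaddr dstaddr srcport dstport "
          "protocol packets bytes start end action log_status").split()

# Simplification: only RFC 1918 ranges count as "our side" of a stream.
INTERNAL_NETS = [ipaddress.ip_network(n)
                 for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]


def parse_record(line):
    """Return a dict for an accepted TCP record, or None to skip the line."""
    parts = line.split()
    if len(parts) != len(FIELDS):
        return None
    rec = dict(zip(FIELDS, parts))
    if rec["action"] != "ACCEPT" or rec["protocol"] != "6":  # 6 = TCP
        return None
    for name in ("packets", "bytes", "start", "end"):
        rec[name] = int(rec[name])
    return rec


def is_internal(addr):
    """True when the address falls inside one of the INTERNAL_NETS ranges."""
    ip = ipaddress.ip_address(addr)
    return any(ip in net for net in INTERNAL_NETS)


def pair_streams(lines):
    """Merge both directions of a stream into one row per capture window."""
    rows = defaultdict(lambda: {"src_packets": 0, "src_bytes": 0,
                                "dst_packets": 0, "dst_bytes": 0})
    for line in lines:
        rec = parse_record(line)
        if rec is None:
            continue
        outbound = is_internal(rec["srcaddr"])
        local, remote = ((rec["srcaddr"], rec["dstaddr"]) if outbound
                         else (rec["dstaddr"], rec["srcaddr"]))
        side = "src" if outbound else "dst"
        # Keyed by IP pair and window, so ephemeral ports are summed together.
        row = rows[(local, remote, rec["start"], rec["end"])]
        row[side + "_packets"] += rec["packets"]
        row[side + "_bytes"] += rec["bytes"]
    return dict(rows)
```

Each resulting row holds the four byte and packet counts for one local/remote pair in one capture window. Traffic between two internal addresses ends up as two rows, one per direction, which a fuller version would need to handle.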

actually have some working data to work with. I can go back and say, all right, for the first 10 minutes this box was online, it sent a hundred packets to this IP and received 30 packets from this IP; over the next 10 minutes it sent 40 packets and received 20. From there I started building out my data set and started building out my models. So from all that information we have eight different features that we can extract: the number of bytes that are sent, the number of packets that are sent, the number of unique source and destination ports, which I didn't end up using for anything, but it's there if I

need it, and then the actual length of the communication, which, again, I didn't really use for anything either. I focused primarily on the number of bytes and packets that were sent. My general strategy was to just graph the data and eyeball patterns, and see what stood out to me: what can I quantify, turn into an algorithm or function, and filter against everything else? As I would build these models, I'd go back and run all my test data through them and see what got passed. If all my test data was coming back and saying, oh, this matches this model, it's not a good

model, so I'd go back and look at something else. Attempt zero was just to look at the number of unique destination ports, the idea being that if a compromised client was interacting with a mining client, it was always going to interact with the same remote port, such as 5555, 6666, whatever the usual mining ports are, if you're familiar with that at all, as well as the fact that there'd be a lot of ephemeral source ports. This didn't really work too well. Surprisingly, it turned out that in my test data the destination IP addresses still ended up with a lot of

single-digit port numbers, or not single-digit port numbers, but the number of unique ports used was one or two. So this didn't actually end up being a very good strategy, and I just went back to the drawing board and tried again. I actually had a lot of failures. I don't include all of them here or we'd be here all day. So moving on, I went and tried to, again, graph my data and actually see what was going on with it. Is there any kind of clustering? Are there any discernible patterns that I can make out from the data itself? So graphing them out, this is a couple of

them, I think it's only like six of the actual mining clients that I set up, looped through as a GIF. On the x-axis you have the number of source bytes, and on the y-axis you have the number of source packets. Just looking at this, we can already tell there are some strong patterns going on. For one, there's a strong linear correlation across all the data; it follows a pretty straight line right up the board. On top of that, there are also some striations being built in there, these clustered lines of points. I think I can pause this. Yeah, so you can see there are these striations that are coming up through

the pattern, through the data, which is probably a pretty unique pattern. You're not going to see that in just other randomly generated network traffic, so it's probably going to be a really good thing to work against. However, trying to quantify that is a little bit tricky, and trying to quantify that and then build a model off of it that runs quick is even harder, so I set that aside for the time being. If we look at the destination metrics, again we see some correlation. It's a lot looser; they're not hugging a straight line nearly as tightly as the other ones are. But again, you do get what looks to be not a striation, but like a

honeycomb pattern. So again, we're seeing some sort of structure to this data, which is a very good thing. Structure is what we want; structure is what lets us filter out noise. This is for the destination bytes and destination packets. Then if you go through and map out the opposite of that, so the number of source bytes versus the number of destination packets, you don't really get much of anything. Whoops. You get the same kind of honeycomb pattern, but not nearly as tight. Oh no, this one is where you get it. Yeah, so you get the same kind of striation/honeycomb pattern going on

here as well, but the correlation is not really there at all. You're getting much closer to an oval than anything else. The same goes for when you swap in source packets versus destination packets. So suffice to say, we do have something to work off of. We've got some strong clustering and some very clear linear regression going on in all this data, the strongest being between the source packets and the source bytes, but clearly not between all of them. Again, we also have the striation pattern occurring. Weirdly, and I didn't point this out before, there are also points at (0, 0). You might not be able to see this, but

down here in the corner there are points at (0, 0), which is a little bizarre. What that's telling me is that over a 10-minute period, for whatever reason, my client wasn't sending any packets or any data for 10 minutes straight. It's likely that the pool went down for a period of time, and it's not actually zero but very, very close to it. I don't actually know. I didn't monitor these miners at all: I set them up and set an alarm on my phone for 24 hours later to come back and turn them off. I didn't watch them at all. You can also set up backup

pools in your miner, so if your pool does go down you switch to another one, but I explicitly didn't want to do that. I wanted to mine against the same target for a long period of time to get a very clean data set to work against. Suffice to say, I don't know why that happened, and I haven't gone back to really investigate it at all. It does come back to bite me in the ass a little bit. So, building the model: the first thing I did was focus on the clustering. We have some very, again, very strong clustering going on in the source metrics, so what I decided to do was build a convex hull. Is anyone here actually

involved with machine learning or data science at all? Two people, cool. So this is kind of similar to a one-class SVM, except I built this before I knew what a one-class SVM was, so I built a convex hull. I should probably go back and fix that. If you don't know what a convex hull is: if you take a point cloud and you draw the hull around it, you have a convex hull, as opposed to a concave hull. And again, I didn't implement the math for this. I called SciPy's ConvexHull, passed my points in, and got a hull back, because I'm

really good at math like that. So I took those points, built a convex hull around them, and used that as my first model. Then I started taking my test data and feeding it through this defined hull to see, all right, what kind of test data do I have that exists within this hull that I've defined, this polygon? I did the same thing for the destination metrics. I didn't require 100% membership; I graded against a membership rate. So when I took a set of test data and passed it through here, I checked to see how many of the

points were within the convex hull itself, and defined that as the membership rate. I didn't want to require a 100% membership rate and rip things out if they weren't at a hundred percent, because I didn't want it to cause any false positives, so I picked 98%, completely arbitrarily, just to see what would happen. So with a 98% membership rate... so yes, I have a slide out of order here. Awesome. Well, what I'm actually doing isn't two-dimensional convex hulls like these graphs indicate; they're actually four-dimensional. I built a four-dimensional convex hull, which I can't graph because we live in a three-dimensional world. But because defining a convex hull depends on Euclidean distances, you can

define a four-dimensional convex hull, which is exactly what I did with both the source and the destination metrics, and then fed all the test data through that. As opposed to building two separate models and running things through twice, I have one model and run it all through once. This works in really low dimensions. I'm only using four different dimensions, so that makes things really easy to do. If you ever try this technique against, like, real data sets that data scientists work with, that have thousands of dimensions, this quickly falls apart due to what's called the curse of dimensionality. So if you're ever getting into this at all, I would highly

recommend looking that up and being aware of it anytime you're trying to build any of these models. The curse of dimensionality basically states that as your dimensions increase linearly, the amount of data you need to really represent that space increases exponentially. So if you have a hundred dimensions, you need a lot of data to properly represent the space within all hundred dimensions. Because I'm working in four, I don't really need that much, so it works out fine for me. So running it through, I end up with some false positives. I'm sorry, this is a true negative. This is an example of a data set that got filtered out. So this

is a set of my test data that came back with under a 98% membership rate. And just looking at this, you can tell the points are nothing like what we saw for the mining data itself. This is clearly something very different. And it came back and I'd filtered 38,000 streams down to 19,000, not nearly as good as I was hoping it'd be. That's a 50% success rate. I mean, that's terrible. It's not bad for a first try, but overall 50% is not good; you can't do anything with that. So I went through and started to look at what actually got through, what kind of false positives I

got. This is a false positive. As you can see, this also looks nothing like the other data we had. It's a single point sitting down by (0, 0), but because it lies within the convex hull, it passed and got flagged as mining traffic, which is clearly a problem. So my quick-and-dirty hack fix for this was just to strip out all the zeros, all the points at (0, 0), or technically near (0, 0). I just ripped them out and then rebuilt my convex hull. So that same false positive that got through last time was trimmed out just by me redefining a convex hull that wasn't including all those points
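
A minimal sketch of the hull-membership model described here, assuming NumPy and SciPy's `scipy.spatial.ConvexHull`. It is an illustration rather than the speaker's implementation: the function names, the `near_zero` cutoff, and the tolerance are all hypothetical. Training points at or near the origin are dropped before the hull is fitted, and a stream matches when at least 98% of its points fall inside.

```python
import numpy as np
from scipy.spatial import ConvexHull


def build_hull(training_points, near_zero=1.0):
    """Fit a hull to known mining traffic, ignoring points at or near the origin."""
    pts = np.asarray(training_points, dtype=float)
    keep = np.linalg.norm(pts, axis=1) > near_zero
    return ConvexHull(pts[keep])


def membership_rate(hull, points, tol=1e-9):
    """Fraction of points lying inside (or on) the hull."""
    pts = np.asarray(points, dtype=float)
    normals, offsets = hull.equations[:, :-1], hull.equations[:, -1]
    # A point is inside when normal . x + offset <= 0 for every facet.
    inside = np.all(pts @ normals.T + offsets <= tol, axis=1)
    return float(inside.mean())


def matches_model(hull, points, threshold=0.98):
    """True when enough of a stream's points fall inside the hull."""
    return membership_rate(hull, points) >= threshold
```

Each row is expected to hold the four features for one capture window (source bytes, source packets, destination bytes, destination packets), so the hull is four-dimensional. The facet-equation test works in any dimension but gets expensive as the number of facets grows.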

down by zero, and that did the trick. I mean, that went through and killed everything: from the nineteen thousand filtered streams that had gotten through last time, I got down to four. Not four thousand, literally four. And all they are is single points sitting inside the convex hull, or not single points, they're actually stacked; there's like a couple hundred points, probably from some protocol that calls out and calls back regularly, every so often, with the same data and the same response, something like NTP. So again, this looks nothing like our mining traffic, so it's really easy to strip out, but this first pass

wasn't sufficient. So from there I have a lot of options. I sat down and just kind of brain-dumped a lot of different things I could go off of from there. One thing you could do is look at the size of the actual hull itself. If I was to define the convex hull for those single points, it would come back as being at or near zero. That's obviously very different from the size of the hull of the blue area, so that right there would automatically filter those out. You could also do the mean and median nearest neighbor, which is: for every point you find the

closest point to it, and then you average those distances together. That gives you your mean, and you take the median of those distances, and those give you your nearest-neighbor metrics. That would obviously work very well too. Then there's spatial homogeneity, which is basically just quantifying how evenly distributed the points are across a given area. There are functions to do that, Ripley's K and L functions, but they're expensive and they're also not implemented in NumPy, which means I'd have to write it myself, and I don't want to do that. So an alternative idea I had was to build a bunch of concentric circles off the centroid of the cluster.
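
A minimal sketch of the mean and median nearest-neighbor metric mentioned above, assuming a brute-force pairwise search is acceptable for a few thousand points. It uses only the standard library, and the function name is hypothetical; for larger sets a spatial index would be the more natural choice.

```python
import math
import statistics


def nearest_neighbor_stats(points):
    """Mean and median distance from each point to its closest other point."""
    if len(points) < 2:
        raise ValueError("need at least two points")
    nearest = []
    for i, p in enumerate(points):
        # Distance to the closest point other than p itself.
        nearest.append(min(math.dist(p, q)
                           for j, q in enumerate(points) if j != i))
    return statistics.mean(nearest), statistics.median(nearest)
```

Points stacked on top of each other give a mean and median of zero, which is how this metric would separate the stacked false positives from the more spread-out mining traffic.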

So if I was to find the centroid of this, probably somewhere around here, and then basically build a set of concentric circles with their center sitting at the center of this cluster of points, and then find the number of points that are within each circle, I basically get a hacked-together, quantifiable metric of how evenly distributed the points are. If the points are all very skewed to one side, you're going to end up with a line graph that's very, very spiky versus very smooth. Again, there's also the striation stuff. I honestly don't know how I would quantify the striations. I've whiteboarded a couple of different solutions and all of them are

pretty bad, so I don't know how I'd even go about doing that. Then there's timestamp analysis: actually going back and looking at how the data changes over time instead of looking at aggregate packets. I'm looking at three weeks of data at a time, or 24 hours of mining data at a time. It would probably be better to treat that data as what it is, temporal data. It's data that I'm receiving at 10-minute intervals, so start treating it as such, watching how the data changes every 10 minutes as I get a new VPC flow log entry in my bucket. And then there's the usual linear regression stuff, so actually performing a linear

regression as opposed to just eyeballing it and saying there is a correlation there. So I spent like an hour today actually playing with these, and I went through and did how the convex hull changes over time. This is kind of my attempt at the temporal analysis. The idea was to take every set of VPC flow log entries, and every time I got a new entry I'd recalculate the convex hull, find the area of that, and then chart it. You can see over time the area grows, obviously, because as you get more points you're more likely to get points that create a new edge or new plane on your convex

hull, which creates a larger area. The reason it flatlines for so long... well, actually, I don't know why it flatlines for so long. It has to do with the SciPy tool I was using to find the convex hull: it was throwing errors when I was using too few points, so I threw it in a try-catch and just passed on the exception, and that was it. I didn't actually investigate why that was happening, but you can still see that over time the area of the hull grows. My guess is that the points I was selecting were too close together; because they were too similar, it couldn't do the

proper floating-point calculations to figure out where the edges of that convex hull would be. So once it had enough points that were spread far enough apart, it was finally able to find a convex hull of sufficient area, and then going forward it could obviously keep finding it. But as you can see, they're not super similar. I haven't graphed out how that compares to other traffic, but they follow somewhat of the same pattern. The big thing to note is that there are a lot of flat areas with large spikes. You're not seeing regular steps at any kind of interval; what you're seeing is a lot of small steps

and then a big jump, and then a lot of small steps and a big jump, and that's probably due to how the cryptocoin miner is finding a share and submitting it to the pool, because that's going to require a lot more traffic, and as that requires more traffic you're going to end up with wider points on the outside of your metrics, build a bigger hull, and get a larger area. The other one I did was the concentric circle one. Basically I went through and found the centroid of each set of mining traffic and then calculated the number of points. So on the x-axis is the distance from the centroid,
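
A minimal sketch of the concentric-circle count described here, using only the standard library. The function name, the evenly spaced radii, and the default of ten rings are assumptions for illustration; the talk does not say how the radii were chosen.

```python
import bisect
import math


def radial_profile(points, n_rings=10):
    """Count the points inside each of n_rings concentric circles around the centroid."""
    dims = len(points[0])
    centroid = [sum(p[d] for p in points) / len(points) for d in range(dims)]
    dists = sorted(math.dist(p, centroid) for p in points)
    max_r = dists[-1]
    # Evenly spaced radii; the last one equals the farthest point's distance.
    radii = [max_r * ((k + 1) / n_rings) for k in range(n_rings)]
    # dists is sorted, so bisect gives the number of points within each radius.
    counts = [bisect.bisect_right(dists, r) for r in radii]
    return radii, counts
```

Plotting `counts` against `radii` gives the cumulative curve from the slide. Evenly spread points should produce a smooth rise, while lopsided or clumped points should produce jumps and plateaus.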

and then the y is the number of points. As the radius increases from the centroid, you're obviously going to have more and more points within that circle, and again these all follow pretty much the same line. This would probably be a good and cheap metric for determining spatial homogeneity, like how distributed the points are. Are they relatively evenly distributed, like they are in this graph? With something much more dispersed, you'd probably find a lot of sharp peaks and then plateaus, as opposed to a nice, smooth, almost logarithmic-looking curve. So those are the two I tinkered around with today. Other things I would need to do is

optimization. I've been running this on my rinky-dink laptop, my 2015 OS X box. It'll build the model in about 60 or 90 seconds, and then it'll run through a gigabyte's worth of VPC flow log data in like five minutes, which obviously isn't too bad for just a crappy laptop. You could easily throw this on, like, an m5.large and get ten times the performance out of it. It's also single threaded, which isn't the end of the world, since NumPy is doing a lot of the heavy lifting. NumPy is just a direct hook into LAPACK and BLAS, which are already multithreaded

and multi-core, so you're using most of your CPU to begin with, but you could probably optimize it a little bit more. I also need to go back and actually benchmark how long these took. I should have timed it while I was already in there doing it, but I forgot to see how long it takes to generate this data as opposed to generating, say, the convex hull. And then collect more data. I mean, I need a lot more data. Mining different coins would be interesting, although I don't think that'll make much of a difference because it's all built on top of the Stratum protocol. Then there are different reward

systems, which is how pools pay out your share: like, you found a share, you submit a share, you get X amount of Bitcoin or Ethereum or whatever you're mining. How much you get is determined in a couple of different ways. I don't think that'll change anything, but it's worth tinkering around with. The big one to look into is solo mining. Solo mining generates very different-looking traffic. I actually have some data; I just haven't started working with it yet. I've graphed it out, and what you actually end up with is a thousand points pretty much all on top of each other. You get very, very tight clustering all around the same area, not even like a linear

regression line, but around the same very small area you get points stacked on top of each other, which again should make things pretty easy to fingerprint. The same goes for rejected traffic. Rejected traffic would probably be pretty easy to fingerprint because it's basically Stratum calling out every ten seconds, making an API REST call and saying, hey, are you alive? No? It'll sit for ten seconds and make another one. It's just going to do that repetitively, so it's going to have a very structured and predictable pattern that'll be pretty easy to flag. And then I need more test data, like actual VPC flow log data, so if anyone wants to donate any, feel free to come

talk to me so I can get this ball rolling a little bit more. Also, if you guys are heavily involved in AWS and you're aware of re:Inforce, which happened earlier this week, you probably saw that we now have SPAN ports as a service. They're calling it traffic mirroring. What you can basically do is take a source ENI, an elastic network interface, which is just a virtual NIC, and say, I want to mirror this traffic somewhere else, and point it at another ENI. So you can get true pcaps off of that; you can run Bro on top of that, or Splunk, or whatever the Filebeat version of

that is, so you can get more traditional network monitoring tools hooked into AWS now, as opposed to relying on VPC flow logs, which are unique to AWS and require very special tooling. The downside is it's pretty expensive. I mean, it's eleven dollars a month just for a single network interface. If you want to make that economical, you're either going to have to be very specific about the things you're looking at, or you're going to have to re-architect things so that you use proxy servers to tunnel all your traffic out, and even then you're still probably going to miss things. What happens if something behind the

proxy gets compromised you're not watching those logs and it's making requests that don't go through your proxies so it's nice that you have it but it's pricey and it's probably not going to end up replacing flow logs as the end-all-be-all network monitoring solution for AWS the other downside to span ports as a service as I'm calling it you get a lot more data which is a good thing you have a lot more data to work with you can build far more accurate models but because you have every packet captured as opposed to just a single line entry representing 10 minutes of network data you very very likely end up with terabytes of data

versus gigabytes of data which means the models you build are going to need much more resources to process and actually churn through all that unless you have some very strong filtering mechanisms to start kicking stuff out so there's some pros and cons to it yeah for the tooling and stuff I built it all in Python numpy and scipy are the two I used scikit-learn I think I used sklearn for something or other and matplotlib was for all the charts if you're at all interested in like data science not even data science if you're interested in like how math meets security or how like something like

Netflix builds a recommendation engine I highly recommend this book Algorithms of the Intelligent Web it's like 130 pages long it's really short the only prerequisite knowledge you need is Python do you know Python and did you graduate high school and like take math in high school like the prerequisites for it are pretty low I will say the Python syntax is more advanced they use a lot of the more advanced numpy features like all the ndarray slicing is something that I wasn't very familiar with so I had to go and spend some time learning things on the back end but it's a very good intro to this kind of stuff if you're at all

interested in it which I think every blue team operator should start digging into these things and start building their detection engines around these kind of principles as opposed to just rules simple rule checks and again if you're feeling generous I need VPC flow log data so if you feel like donating I'm even happy to work with you and try to figure out a way to sanitize it like just doing a match replace on all the IP addresses and ports happy to do that I have a proof of concept for that already so come talk to me if you are I also plan to release this at some point I have some sample code out right now on my

GitHub but it's terrible to look at it's really bad but I plan to make a soup to nuts release that basically you just point it at an S3 bucket with VPC flow logs and you can just let it rip you can deploy it wherever you want and if you want to donate some flow log data I'm happy to give you early access to that yeah thanks for listening guys I know it's a short talk end of the day so I tried to keep it a little bit more brief so any questions any follow-ups or anything like that yeah
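The "match replace on all the IP addresses and ports" idea mentioned in the talk could look roughly like the sketch below. It is not the speaker's proof of concept. It assumes records in the default (version 2) VPC flow log layout, and the `Sanitizer` class, the `10.x.x.x` placeholder scheme, and the counting port placeholders are all hypothetical choices made for illustration. Each original value always maps to the same placeholder, so who talks to whom and how often stays visible after sanitizing.

```python
# Field positions in the default (version 2) VPC flow log record:
# version account-id interface-id srcaddr dstaddr srcport dstport
# protocol packets bytes start end action log-status
ADDR_FIELDS = (3, 4)
PORT_FIELDS = (5, 6)


class Sanitizer:
    """Replace addresses and ports with stable placeholders (hypothetical helper)."""

    def __init__(self):
        self._addrs = {}  # original address -> placeholder address
        self._ports = {}  # original port -> placeholder port

    def _fake_addr(self, addr):
        if addr not in self._addrs:
            n = len(self._addrs) + 1
            # Placeholders are handed out in order from 10.0.0.1 upward.
            self._addrs[addr] = f"10.{(n >> 16) & 255}.{(n >> 8) & 255}.{n & 255}"
        return self._addrs[addr]

    def _fake_port(self, port):
        if port not in self._ports:
            # Placeholder ports simply count up from 1.
            self._ports[port] = str(len(self._ports) + 1)
        return self._ports[port]

    def sanitize_line(self, line):
        fields = line.split()
        # Header rows or unexpected layouts are returned unchanged.
        if len(fields) < 14 or not fields[0].isdigit():
            return line
        for i in ADDR_FIELDS:
            if fields[i] != "-":  # "-" marks a field with no data
                fields[i] = self._fake_addr(fields[i])
        for i in PORT_FIELDS:
            if fields[i] != "-":
                fields[i] = self._fake_port(fields[i])
        return " ".join(fields)
```

Packets, bytes, and timestamps are left untouched on purpose, since those are what the traffic analysis would work from. Note that this sketch also leaves the account ID and interface ID in place, so those would need the same treatment before sharing real logs.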

huh yeah yeah yeah exactly exactly so the question is when I was looking into solo mining did I ever see any of the solo miners actually find a block the answer is no they didn't and that is definitely something I should be doing to see what that traffic looks like for when a solo miner actually does find a block if you're not into crypto coin mining if that's not your thing finding a block is extremely rare and quote-unquote difficult to do that's why people do pool mining so everybody pools their resources and you all look for a block and that's what I was talking about with submitting a successful share you found part of a block and your

pool gets a payout and you get a share of that full payout based on how much work you did whereas solo mining it's all on you you're basically playing the lottery with far worse odds at that point far bigger payout but far worse odds but no I haven't anyone else cool thanks guys appreciate you coming out [Applause]
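The solo mining observation from the talk (a thousand points stacked in the same very small area) suggests a simple check, sketched below. This is an illustration under assumptions, not the speaker's code: it assumes each point is a `(packets, bytes)` pair taken from one flow log record, and the function names and the `tolerance` and `min_fraction` thresholds are hypothetical values that would need tuning against real data.

```python
from statistics import median


def tight_cluster_fraction(points, tolerance=0.05):
    """Return the fraction of (x, y) points that sit near the median point.

    A point counts as near when both coordinates are within `tolerance`
    (relative) of the per-axis median.
    """
    if not points:
        return 0.0
    mx = median(p[0] for p in points)
    my = median(p[1] for p in points)

    def close(value, centre):
        # max(..., 1) keeps the allowed distance non-zero when the median is 0.
        return abs(value - centre) <= tolerance * max(abs(centre), 1)

    hits = sum(1 for x, y in points if close(x, mx) and close(y, my))
    return hits / len(points)


def looks_stacked(points, tolerance=0.05, min_fraction=0.9):
    """Flag traffic whose points are almost all stacked on one spot."""
    return tight_cluster_fraction(points, tolerance) >= min_fraction
```

Traffic that spreads out along a line or across the plot would score low here, while the stacked pattern described for solo mining would score close to 1.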