Bringing Red vs. Blue to Machine Learning

Show transcript [en]

besides DC would like to thank all of our sponsors and a special thank you to all of our speakers volunteers and organizers for making 2018 a success my name's Bobby Fowler and I'm going to do the the talk right before lunch on bringing red vs. blue to machine-learning just a little bit about me to kick things off I do data science at endgame if you guys don't know who endgame is were a endpoints security platform we have some machine learning baked in I'm part of that team so I do a lot of machine learning for usability things like comm conversational interfaces adversarial machine learning which is really the the basis of the talk today and then malware

classification which is one of our flagship features within the product one kind of cool thing I was able to to work on this year really at the beginning of the year was a a paper called malicious use of AI was really the the thrust of it and it was a combination of academics industry folks and nonprofits going into kind of a scenario based adversarial examples for cyber politics military physical systems things like that it was super fun to work on and and kind of got me thinking about this idea of you know how red teams and blue teams in today's security spectrum tackle kind of this machine learning issue so I've given talks like this before it more machine

learning specific conferences and there was a ton of math involved I wanted to take a step back and go a little bit more high level explain terminology nomenclature strategies and then for consumers of machine learning security technology in the crowd or people kind of considering purchasing this I wanted to give you scenarios that you need to be cognizant of as well as potential questions you may want to ask a vendor when their marketing team in a dates you with like ml solves everything so hopefully you get some value out of that and then I'll cap it all off with hopefully a strategy base like here's what red teams could consider blue teams and then finally purple teams so my

motivation for doing this is really twofold I've been going to security conferences for about a decade and I've always been super envious of people who get up here and drop like exploits or talk about vulnerabilities I think it's black magic for the most part it's super cool and I was a little jealous of people who get to do that day in and day out but about two three years ago adversarial machine learning kind of came out particularly targeting computer vision and I was like you know what maybe it's our time for data scientists to do kind of this vulnerability research and red teaming and I got really excited and started to dig into it a little bit more but the other

reason is is maybe even more important for for you in the crowd it's this idea that machine learning has become so pervasive in security stacks and there's been maybe a little bit of a rush to claim table stakes for this technology more and more vendors are trying to implement it to get it on their website to promote it at blackhat and RSA that this rush job could potentially introduce new threat vectors and potentially make security a second-class citizen which for I'm guessing everybody in here is an opportunity to potentially poke prod and exploit so I'm hopeful that uh you know my background and in the story that I'm hopefully able to weave today will provide some insights

there but before we dive into adversarial machine learning at large let's go into how machine learning is being applied just within InfoSec machine learning can kind of be thought of is just a fancy rule in the grand scheme of things so if you have kind of what you have on the the slide here which colors don't come up very well but you have blue dots and red dots good dots and bad dots bad dots being being and you know that these are binaries and you want to devise some sort of rule or logic or something to delineate those you could do something like it the the bottom part of the slide right on our rule that says if you see this many

registry keys and the strings and the file size is a little bit smaller let's call it bad and it will paint the feature space kind of in a 2d plane that that red color and it does a pretty good job it covers I'd say the majority of the red dots it unfortunately leaves some out and incorporates some blue dots and you know that's the whole false positive false negative problem that machine learning is attempting to solve and it solves it I think reasonably well that's a super biased opinion is somebody you use this machine learning every day so fair warning but what it allows you to do is leverage automation and kind of this logic or intelligence to identify

these kind of latent relationships that exist between features and samples in your data set that you would kind of be unable to to figure out on your own using a pen and paper and what you're able to do is machine learning can be thought of as like a fuzzy more sophisticated rule and instead of drawing that nice rigid box on the screen you get this little sloppy less crunchy decision boundary and you can see it it envelops more of the red while leaving space for samples that it hasn't seen before so this ability to generalize is another important thing that machine learning can bring to the table and it does that reasonably well for things like email security one of

the very first things I worked on was spam detection if anybody's taken like a data science 101 you built a spam detector that's almost a given things like identifying c2 beaconing DGA detection or dpi to identify a malicious content is all leveraging machine learning now you EBA is probably maybe the most heavily marketed thing anomaly detection is incredibly difficult I wouldn't say that it works a hundred percent of the time but but maybe 60% of the time it works every time so that's that's not so bad and then finally my space which is endpoint protection leverages it for something called next-generation AV which is a super fancy way of saying just not traditional AV and if you're interested in in checking

that out for free i jump on virustotal and grab a handful of samples and and throw them at the wall and and you can start to see how these traditionally these verses next-gen a V's label label these samples and you'll find that for the most part traditionally these when they're right they are right 100% of the time and that's the luxury they have with with signatures but it's new stuff comes in you'll find that next gen a V's kind of claim it's bad earlier and virustotal has a very cool thing where you can see over time how predictions change and you'll see a V's catch up in a lot of ways to to machine learning

which is I think very very interesting and and really good for the the security community no more hitting that so alright adversarial ml foundation of this talk what does that mean at its core it just means can we break machine learning and the answer is yeah I I mean it's it's not exactly trivial but it can be done and a nice tight example that I like to give is you have this machine learning model for malware and it has a handful of features plucked from you know PE header imports exports maybe some byte level information and it does really really well so well in fact that that you could use it on your desktop or

within your enterprise but we're trying to do with adversarial ml is make structural changes to the binary without breaking it or without changing kind of what it does at its core which seems extremely difficult and sometimes it can be but other times it can be just changing a section name or unpacking it or maybe adding a string or two when these things happen my job becomes really really difficult and we run around with our hair on fire for a little bit but it's still incredibly good to know these things and that's at its core is it's really what we're trying to do with adversarial machine learning and we're able to do this and take advantage of these vulnerabilities

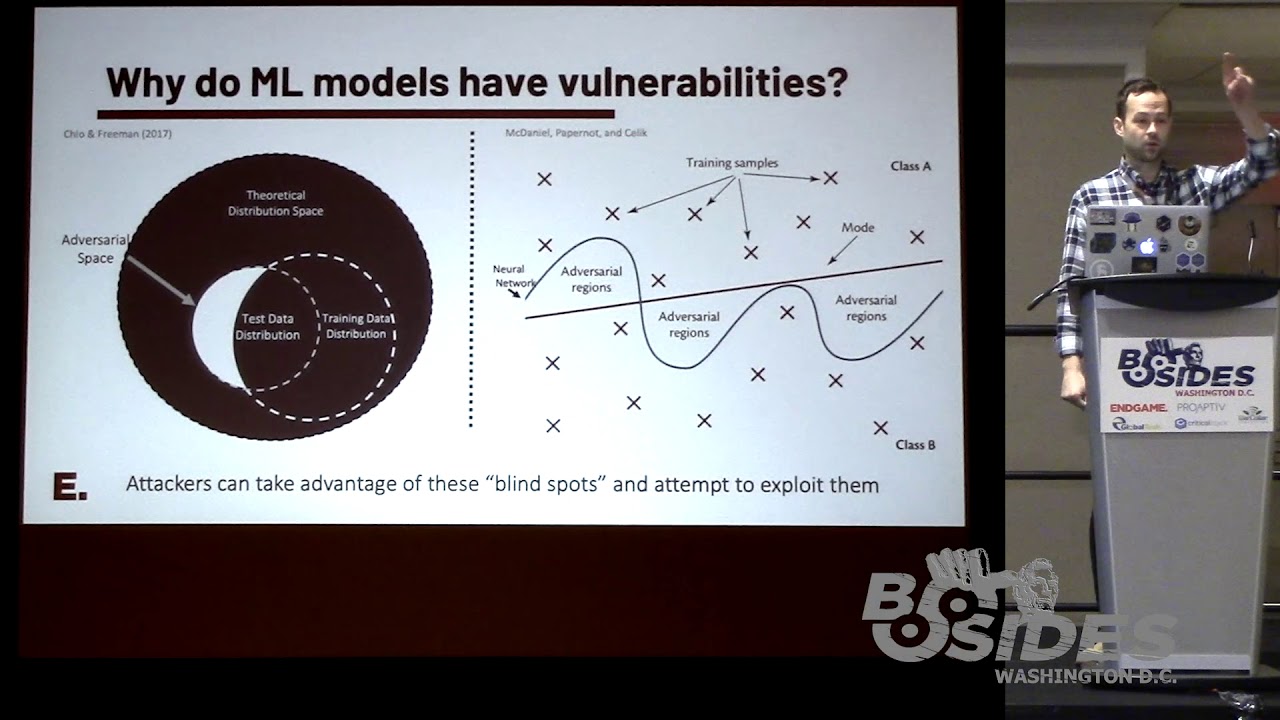

and and this is where it gets a little theoretical and mathy by taking advantage of the distribution space of whatever problem you are are trying to tackle with machine learning so let's say it's malware classification that's that's in you so you want to wrap your head around you have this whole distribution space of every by an area that's ever existed how how can you possibly capture that if you are a data scientist building a malware classifier the answer is you're not going to you're gonna try to capture some sort of subset that gets the gist of it tries to generalize but that's really only representative of a pocket or a percentage and you train your model on

that and it does reasonably well then you have your your test data that you have and there's a small overlap between test data and training data but for the most part training data sits outside of that distribution in that pocket and you can kind of see the little crescent shape that's white there is your adversarial space it's things that your model just simply doesn't know about and can't possibly compute and that's the area that that's sort of crunchy space that you can take advantage of and you can see it more realistically how a model would interpret it over on the right hand side where you have the neural network that draws that nice wavy boundary and it does a really good job

of separating the good and bad but then when you look at your data and you look at the mode and it kind of cuts right through linearly it opens up all these little pockets that allow the classifier to maybe be blind and presents an opportunity for exploitation and there's probably no better example of this then who here's seen this example anybody all right more than I expected so that's great so this is like a super famous one from Ian fellow who's like the father of this research but the idea is for attacking an image classifier you have a picture of a bus and then you inject noise and this noise I mean it looks a little

staticky there but when you overlay it or add it to the image you really can't tell the difference but to the classifier it's completely different so you go from a bus to an ostrich and there will be a couple more examples of this in a little bit but it is a thoroughly fascinating branch of adversarial research and what this does is this takes advantage of things like blind spots and and will do things really to make it as straightforward as possible to exploit and as you'll see in a little bit oftentimes you don't even need direct access to the model you're attacking you can create a substitute model or something that looks like it and exploit kind of this notion of

transfer ability which hopefully the example I give in a little bit will drive that point home we're gonna walk through kind of the the nomenclature now just so everybody has an idea of what I'm talking about and I encourage you like if you're interested in any particular thing screen shot on your phone write it down google it there's a ton of research in the space the papers are really interesting they tackle things from biometric systems to Maur classification to self-driving cars the whole gamut so whatever whatever picture you're fancy there there's probably some research in it but the two primary or foundational attacks in adversarial machine learning are are these ideas of exploratory versus causative in

exploratory really seeks to just evade the classifier find these blind spots through querying probing whatever making manipulations and then throwing it back at that same classifier you don't have any control over training data or anything like that you don't necessarily have a bunch of knowledge of the underlying structure like where the training data came from what models are using parameters anything like that whereas causative is something that's maybe a little bit more familiar to two people in InfoSec it's this idea of model poisoning so getting in to the training data and being able to influence that little wavy decision boundary I showed earlier by by kind of tweaking samples and and throwing them against the wall so spam detection is a

good example of that you have these systems that you know look at stuff on a online basis or real-time basis and constantly change their prediction and how it's made and these sort of attacks these poisoning attacks will say oh well if you say click here for free stuff then I know it's spam and then they change it to like leet-speak and then it bypasses because over time the model changes its decision boundaries and everything else so the the next thing is really trying to capture the knowledge space of an attacker when targeting your machine learning models this idea of black gray and in white box attacks in black box as you can imagine is this idea of like no

knowledge of what you're targeting so this means no knowledge of the algorithm being used so you don't know if it's deep learning or a random forest or anything like that you're relatively unsure where the training data came from really the only thing you know is that you know the task that it's meant to do I mean you're you're attacking it for a reason so you know that it's either trying to classify images or identify malware or something like that but that's really it you're for all intents and purposes flying blind gray box I'll show example of in a little bit this is limited knowledge this means that you're able to pool or tease out a

little bit of information so this could be maybe a blog post it talks about where they got their training data from which wouldn't be very smart it happens at whatever or maybe a more realistic scenario is instead of just giving you back like this is a bus or this is a piece of malware it says this is a piece of malware and I'm 87 percent sure so that little nugget of information that 87 percent is hugely important now show kind of walk through how you go about exploiting something like that and then finally you have white box which is what a lot of us in this room if you are building data science models and

then performing adversarial machine learning what you would be doing you have direct access to the model you know where the training data is coming from you know all the parameters you have kind of the the keys the castle and you can hope to to elicit some sort of knowledge from that I'm not even gonna bother to broli so adversarial specificity is really just the difference between targeted versus indiscriminate attacks targeted the best way I'd like to describe this is I have something that's a dog but I want it labeled as cats this is you have something and you want it to be called something very specific that is not what you have where indiscriminate is more or less like I

really don't care what you call this picture of a dog as long as you don't call it a dog that's the whole that's the whole spectrum of that frequencies you can imagine you have a couple different things this actually deals more with I think traditional red versus blue where you have a single opportunity to exploit something versus you know multiple opportunities so one shot just means you get one chance to throw it at the model learn something and then the next time you have to get it right or it's a lost opportunity naturally there's a lower lower barrier to success there but in real life environments that's kind of the only option you have where iterative is you can kind of go

back and forth playing 20 questions with the model and refine your your exploit sample to to eventually bypass so some kind of fun attack examples that have come out in the past couple years that I always like to talk about are things like adversarial glasses so some researchers at Carnegie Mellon got some 3d printing tools and they built these glasses that look kind of tie-dyed and when you put them on and went in front of a camera that went to classify celebrities it went from classifying you is like some guy with glasses on to Milla Jovovich and there are a ton of examples of this sort of idea working it's this idea of just slightly perturbing what the

classifier is expecting to get a vastly different response than you were expecting and adversarial patch is really a continuation of this and arguably much more dangerous it's an idea that you could pronounce a sticker and throw it on a stop sign and suddenly it's being read it not stop but speed limit 45 miles an hour or you know if you have something that says is this a banana and you put it next to it it says no it's a toaster which is so much I guess funnier example but but these things are I think a major concern and there's a lot of folks in the self-driving vehicle community that are actively researching this and trying to develop

countermeasures and then finally just talking about how I guess effective changing one little dot on an image could be some researchers came out with something called one pixel attacks where they were able to manipulate or more or less just whiteout a single pixel on I think at 256 by 256 image so it's not super clear by any stretch but it went from being able to classify ships with near 100% accuracy to calling it a car or a horse a frog deer and airplane so you can start to see how how effective this could be with just minor perturbations which is I think really really interesting but adversarial machine learning isn't necessarily just attack there is a bunch of research in

the defensive use cases arguably not as fun to to talk about but important nonetheless these fall into two primary categories kind of a proactive where you attempt to make the model more robust prior to ever being attacked things like adversarial retraining which we'll see an example of distillation leave one out all these things to try to reinforce and make your classifier more robust to two attacks and then the second one is probably the one that is much more doable for anybody in the audience and and really even me in my day to day its reactive so how can you detect adverse serial examples after they're already in your environment and being thrown against your model so that's things like

developing a secondary classifier or anomaly detection for anomaly detection which seems insane but kind of what you have to do in these situations or input reconstructions so if you think about the one pixel attack that you saw up there you could run like autoencoder denoising to try to lessen the perturbations and and make it a little bit smoother for you to to classify correctly so adversarial retraining is probably the one that gets tossed out the most is the the go-to most effective approach to beating or at least attempting to stave off adversarial examples all this really is and it's kind of broken down here on the right is you have your training data and you

build your initial model then step two is you yourself kind of purple team it and you create your own adversarial examples against the model you just trained then you if they're effective you roll those examples and your training data retrain and then suddenly you're more robust because of this Augmented training data set and this is pretty effective it works extremely well against one-time attacks as you could imagine but anything where you get multiple opportunities you're still you're still not in the best place you're just giving the attacker too many too many opportunities and what that really does in the grand scheme of things is once you go down this path you're really just setting up kind of

this game theory arm nurseries between you and a would-be attacker and it kind of brings you to this idea and this is used in a bunch of different domains but with an adversarial machine learning this no free lunch problem where you have this trade-off between being super accurate or super robust against attacks and adversarial retraining on the previous slide is a good example of that you get better at staving off attacks but you sacrifice some accuracy or classification performance due to that and that sort of decision that you have to make is is really an interesting one particularly within the information security domain and and red versus blue as well and what that kind of lays out is is really this

I call it an arms race earlier but it's just in the grand scheme of things an unfair advantage for the attacker and I think anybody in here data scientists or just Salk analysts whatever would probably agree with that it's it's way easier to be offensive and defensive person and the reason why and and to that I'd call out kind of on this table is on the defensive side for machine learning you're really only as secure as your weakest link and for offense it's just finding that weakest link and exploiting it that kind of sucks and the other one that might make any marketing people in the audience cringe a little bit but on the defensive side you need

to be almost 100 percent perfect right or depending on if you're looking at materials like 99.99% accurate but then on the offensive side it's like I don't really care as long as like 10 percent of the time it works I'm good to go like this is a low-cost attack for me I'll give a couple of different examples of how easy it is to just spin up these examples and and they're mostly throw aways but when you find one that works then you're pretty much good to go which brings us to hopefully the point where everything I just told you will be reinforced in a way that is both pertinent to you and and and hopefully a

decent example so let's do scenario 1 Congrats people in the audience you just bought pew-pew endpoint platform what next-gen AV baked-in it's awesome it's almost definitely going to stop every piece of malware you could ever have in your ear Enterprise and the vendor put it in virustotal they're super confident this is all great the best part is is they put it in virustotal and they provided a score because I wanted to show you how confident they were in every decision they could possibly make so for you is a would-be attacker the the threat model here is you kind of have limited knowledge it's like a gray box situation but it's potentially iterative because they have it on a third-party

website which is great so you attack that as opposed to attacking the real world platform so what we're gonna do is we're going to attempt to build a an agent based platform that probes this model on virustotal and attempts to craft adversarial examples that beat it so what does that look like it looks like it might've froze now there we go all right so what we're gonna do is construct kind of a a game and this approach was taken by researchers I think at Google brain open AI a few others to be Atari games like breakout this idea of using reinforcement learning where you have your environment in breakout it's the breakout game and then the scoring mechanism so you move

your paddle and if you knock away blocks you get reward that reward is some sort of point system and if you don't break any blocks you don't get a reward and you do this tens of thousands of times until you learn the best approach of where the paddle needs to be for our problem we're going to replicate that same thing our environment is the next-gen AV in virustotal our reward is the confidence score can we make the confidence score go down instead of up and we're going to make non PE header breaking changes so things like adding imports changing section names appending zeros to the overlay something like that and we're gonna do this over and over

and over again until we kick out an example that falls below the threshold and is called benign so we did this at a ten-game and it was it was reasonably effective blackbox attack kind of against a a model that we faked kind of in virus total we created samples and then threw them back into virus total and and we were able to full like 10 15 20 vendors at a time doing this approach and it's kind of neat that you can do this again this is relatively low cost we have code if you want to check it out on on our github so you'd start playing around with this it's kind of an interesting idea and you

can drop in whatever classifier you want that way you aren't breaking any sort of laws or licenses anything like that but yeah so on the red side it's it's relatively relatively straightforward to do this on the blue side though what what can you do I mean they're attacking a model kind of in a space that you have only a slight purview in but what you can potentially glean is you can start to look at you could replicate this yourself and start to look at the what I call recipes that were thrown out is the most effective so what changes were most commonly recommended for ransomware to go from ransomware to benign where so this is things like unpacking appending

to the overlay section renames or you can look at viruses you know 10% of the virus samples we threw at this platform we're able to evade eventually which recipe was the the most effective adding a section adding or appending to imports understanding this will let you better understand which features within your model are potentially the most I guess prone to exploitation and you could either dampen those which is an option you could remove them completely or you could try to go the adversarial training approach generate a bunch of these examples throw me or classifier and then boost it that way so a couple different options there all right case study - this is my favorite one your company

just purchased deep deep the deepest deep learning you could possibly have doesn't even need features just throw the whole thing in there and it will get a right 99.9 percent of the time because that's how these things work their website has no real information on it it's just pretty much all marketing so you aren't able to derive any real ocean there also it doesn't provide any sort of score it's just a good bet so they actually did a good job there your threat model for this is black box it's a one-time attack because it's in its kind of in platform it's available as is so your goal in in this case is to try to exploit this idea of transferability

where you create a substitute model that you think is close enough or sits in the same space as the model you're attacking and they may share the same feature space and you can exploit them it sounds insane but it actually does work so how does it work the main thing is trying to identify training data that would be around the same distribution of the deep deep model which seems difficult until you think about something like malware classification and you realize like there's only so many places you can get malware so you can start to pull that down you can get a representative sample and then you could do something like create your own model or link to the

bottom you could use code like malkov which is a nice open source deep learning malware classification model and what you do is you once that model is up and running you attack that model you don't even worry about the deep deep model at all and you attack it over and over again until you get nice juicy adversarial examples and then you can throw them at the target model the deep deep model and more often than not it'll actually work it's kind of this crazy idea that when you when you deal with a finite number of features in this case probably 256 because it's it's reading in just all bytes there's only so many places you can go so many places you can

run and hide in this situation so any model you create that it's relatively similar to another model you are attacking those examples could potentially transfer over using this approach and and if you're interested in trying to carry this out on your own like I said Malcolm is awesome it was designed by I think some Booz Allen researchers and then if you want data that's relatively distilled down and not just full-blown malware we have a data set called ember which is already feature eyes I think there's one and a half million feature vectors there of good where and and bad where that you could use and you can basically recreate this entire set up alright as far as

defending this it's kind of bleak in the grand scheme of things this is not a feel-good story by any stretch you could do adversarial training again you probably sacrifice performance again you get yourself in this arms race of trying something and then they just come back and try something else an option that has become a little bit more prevalent lately is distillation which is a compression technique that is super mathy I won't go into hyper specifics here but you basically replace the good and bad labels with probabilities and then retrain and then the decision boundary that you ultimately create is less kind of jagged and much smoother and it becomes a little bit harder to

find those little crunchy points to exploit those adversarial pockets neither of these are our silver bullets by any stretch best-case scenarios it slows the attacker down for a little bit which at the end of the day is probably still a win because it's just way too difficult to capture every possible permutation that you could see in a malware classification example any sort of network attack example really anything like that okay last case study here is a poisoning attack you just got an IDs called red herring ink he uses online learning so a classic anomaly detector scenario what this allows the vendor to do is save a bunch of resources because it can start to identify stuff in real time it

doesn't necessarily need to be brought back and manually retrained the threat model here is more of a white box so you are much more familiar with everything associated with this model it's an iterative attack surface it's something that you continue continually poke prod and and throw examples at and and hopefully exploit the exploit in in this case though is is trying to push that decision boundary in one direction or the other so you can start to introduced classification errors which lessens the the confidence so think of a spam example where you are allowing spam to get through and then people eventually are just like I'm tired of this it doesn't work and people turn things off which is really one of the

goals of a poisoning attack a good example of this that was not security related just funny was Taiba if you guys are familiar with Microsoft's super racist AI that came out it was I honestly best best intentions there but yeah Twitter is it turns out can can make people in an even AI go a little crazy so the attack strategy here is it's super straightforward it's you have your training data and all you're doing is injecting kind of a shaft in there things that are a little perturbed now like I said once you identify that decision boundary it just pushes it up or pushes it down a little bit and then once you get it into a good enough place

you just kind of say I'm good with this it's misclassifying enough that I consider this poison and we're done in dusted so this you can't see that well but so the idea here is you start with a decision boundary kind of the black line that is highlighted in step 1 and what you do is you throw those 4 or 5 stars over at the 0 axis on kind of the y axis and you do that kind of over and over again and what that does is this these examples to somebody monitoring it don't seem particularly anomalous they kind of sit right along the decision boundary but what this does over a series of iterations is it moves that decision

boundary up and then it creates a pocket where you could eventually take advantage of it and that at its core is is really what the poisoning attack is trying to do if you're interested in this code actually came out of Clarence Chios Safari book I think it's like security ml which is which is very good there's an entire chapter kind of on adversarial ml focused solely on these examples and it's a great way to gain even further intuition kind of in Python environment defending poisoning attacks this is my one very antiquated meme so this is full dad mode here but really you have a couple different options one is super mathy where you do dimensionality reduction and you try to

take the features and put them in a feature space that the attacker just can't comprehend you just make it more complex this would work at least slow them down again until they gain more knowledge and again you have the the arms race problem the one that's kind of captured on the right is you could you could monitor inputs see if a single input or a bunch of inputs from a single provider start to come in all close together then you could use those spikes temporal spikes to say maybe this is a little anomalous another one is if it's an online learner that's constantly retraining you slow that down to two maybe slow down the boundary decision

boundary shifting one direction or the other and then finally one that is super effective and one that we use all the time are gold kind of gold standard queries these are samples that you you crafted yourself that each time the model retrains they have to get these right and that's kind of you're keeping everything in check if for some reason you don't get these right something has gone terribly wrong and honestly that one it's it's the most simplistic but also the most effective way to do like a sanity check before rolling something out I have a colleague Hiram Anderson who used the the bottom tagline and that's true like labeling is super important you have to have almost

absolute faith in the labels that you get from your data provider which can be incredibly difficult for things like malware or people's interpretation of malware any sort of crowd-sourced data too is is super messy and requires a lot of human attention so things like active learning or after active labeling become paramount to your success kind of in that area so at the end of the day you kind of have two approaches for these scenarios that I I laid out you have the the left side of the screen which is the more traditional red versus blue where your data scientists are kind of kept in a vacuum these classifier designers and they're waiting for attacks to come in and it's

very reactive we haven't started doing this at a ten-game we're actually gonna try this in December where we have a legit red team attacking our models while we try to understand what's going on in real-time and devise countermeasures I think that's gonna go really poorly for my team would be my guess the other option and one that we do employ on a semi-regular basis is it's just like a purple team like army a one I create a model I'm going to attack it and then see how it goes and then make modifications or develop countermeasures so for red strategies you know I'll lay out blue shortly after this you have a couple of different options are there red teamers

in here by any chance no one yeah all right thank you for raising your hand otherwise it's gonna be super awkward you have you have a couple different options most of them are Osen related the easiest way is to see if it's publicly available which is super nice things like virustotal maybe vendor websites that they've set up just to let you test it now for blackhat or are say most of the time vendors are relatively mum on their marketing materials about how their stuff works but every once in a while they'll let loose a nugget like a blog post will squeak through that'll provide more detail I recommend kind of kind of reading those learning terminology and then

using using something like archive.org which is a collection of all academic papers and then you can start to look those terms up get research and a lot of times those will be paired up with really cool things like open source platforms and in frameworks and and that's really nice the other thing is if you're interested in in doing something like this particularly to perturb malware or maybe create like exploit examples or something it's really important to understand like how to perturb that malware we use leaf which is a pretty nice way to edit a binary without breaking it backdoor Factory which i think is defunct kind of makes it easy like python easy to identify code caves which

you can inject various bits of code in there too to fool or stun a classifier and then one that is actually super super good I guess in the grand scheme of things it's just depending a bunch of zeros at the end of a file and sometimes that'll work there was a paper that came out I think two months ago where they did that and it worked like 40 percent of the time which is not at all alarming and just something that we's data scientists need to consider toolkits so this is these are the things that make it super easy to do these red versus blue scenarios in a closed environment like I said the malware env for endgame that

was the kind of Atari based simulator that we built I recommend checking that out clever Hans full box some of these others are done by absolutely brilliant kind of academics and researchers at Google brain you said all these are available open source they're all Python they incorporate red and blue strategies and I think you're going to see more and more kind of growing out of this specifically for the InfoSec domain blue strategies like I said this is this is Sonam a feel good story for blue teamers but no silver bullets really otherwise this talk would have been significantly shorter hardening models at the end of the day comes at a cost of classification performance and it's cost

that most vendors aren't willing to incur so that's something that you need to consider on both sides of the house it's just you need to be right more often than you need to be secure which is a bit of a bummer but that's just the reality of the situation some things that you could do though is seek out like third-party testing and validation so you can start to understand how your models hold up to scrutiny as I said labeling become super important so you could do things like look at samples that citrate on those decision boundaries that may be a little difficult for your models to classify and then have human experts review them and provide more steadfast confident

labels and then at the end of the day I would recommend just doing kind of this purple team approach where you build a model and then you attack it on your own at a minimum you'll start to understand some nuances and and some unpredicted or unknown behavior of your model which is always good to good to understand if you're going to push it out to something like virustotal we add endgame do that too III just recommend like maybe don't provide a score don't incentivize attackers with any more information than they need just doing like a traditional good bad will get the job done and then finally I guess just two questions that if we have

time at the end I'd be interested in hearing from from the audience your thoughts but like do red team and blue team's kind of need a machine learning expert or a data scientist on hand as attacking these technologies becomes a little bit more in vogue and then finally is there really a difference between developing a adversarial example and just finding a positive in the eyes of a machine learning classifier what does that responsible disclosure process look like this is a little bit different than the traditional vulnerability community and it's something that I don't think anybody's really thought about because this is such a nascent field and then finally if the bulk of you here are just consumers of this

technology I definitely recommend the next time somebody comes knocking your office door saying ml will will save you from everything think about these questions like don't be afraid ask where did they get their data how did they label it when they throw up things like 99.9% effective what do they actually mean by that like are they is it an accuracy thing or is it actual classification performance how often do they false positive on something does it generalize well concept drift so overtime do they run into any problems do they have to retrain more often because malware can can change depending on the the flavor of the week these things I think and and there was a great conversation a few

months ago with Swift on security where he was being I think approached repeatedly by ml back vendors and he just threw it out there he's just like what do I do I have no idea what to ask but I want to ask something and a lot of these questions kind of came out of there a couple blog posts we've done at endgame and they seem to resonate well and in a minimum you'll make you'll make an SC or sales person sweat a little bit which it's not not the worst thing but yeah it's these are these are great questions people should have the answers to these they're not overly difficult but it will provide you a little bit of

information before you you plop down some money that's it any questions yes such a human

yeah so man it's almost like you work at endgame yeah so we we actually just rolled out something that was ml back for for fishing part of it was just like looking at static features of a like a office document and that's that's pretty effective in the grand scheme of things the other thing that we're doing is applying deep learning and in computer vision so we're taking a screenshot of the image and then using something called Yolo which is a very funny way of saying like you only look once and it provides object bounding boxes of things like enable macro buttons or click here for finance reports and we try to identify those so when we present them

to a stock analyst we say hey we know it's bad because of static features but if you don't believe us here's a screenshot with like circling it and marker like here's exactly why it's bad so it definitely can be done I think other vendors are also doing some really cool stuff with that too just just in different ways so yeah

yes so we have so the example you see if I can pull it up quick so here this this was actually against real real vendors we took the samples that the bypassed are our little substitute model I guess and then threw them against buyers total just to look at kind of a before and after so before we made the changes and then after so we were able to to actually evade a decent mix of both AV vendors are starting to do a really good job with like heuristics so they're able they're just looking for maybe one thing and if you don't change that one thing then it doesn't really change anything at all right so I would say for the most

part they're relatively good at being resistant to to that sort of problem unless you make fundamental changes like packing it but if you just add an import here and change the section name there I doubt that there's like a massive difference

No so I think that blackhat this year and if anybody here attended that I feel like IBM try to create like a simulation of how like a large-scale actor would do this I I personally haven't haven't witnessed this I think what you'll see with with like the rise of deep learning platforms and and things like that that everything's more accessible and it becomes easier to build these things so it wouldn't surprise me at all if that becomes something that happens in the next maybe 18 months two to two years and I think that's that's it we're out of time I'll pop over here or go outside if people have anything else but if not that's that's it Thanks

[Applause]