Oh my Pod: Lessons from building one of Australia's biggest CTFs


So, thanks for another fantastic con from Silvio and Kylie and all the organizers and volunteers. Running community events is extremely stressful, and they pulled off another fantastic one this year. So, what will Western Australia export when we can't dig anything more out of the ground? Could it be hackers? For four consecutive years, a volunteer team of pen testers, incident response specialists, software engineers and other infosec professionals from around the Perth community have come together to build and run one of the largest standalone capture the flag, or CTF, competitions in Australia. Maybe it's the largest; it depends how you count, I don't know. So hi, my name is Sam and I am the state

director for Trustwave in Perth. I look after WA and South Australia, but I've only been in that role officially for 10 days, so I'm better known as a principal pen tester for Trustwave SpiderLabs, formerly from the Hivint side of things. I'm also the technical lead for WACTF: I build and look after the infrastructure and try my best to ease the integration pains for our challenge authors. Every year, a dozen-plus hackers from Western Australia and a small number of interstate allies contribute their technical expertise to build challenges for WACTF. These were the technical contributors for our most recent event, WACTF0x04, and it wouldn't have happened without them volunteering their time. More broadly, we've received financial

support or other relevant contributions from several big players in the space, including AustCyber, PentesterLab, Offensive Security, some universities and banks. WACTF0x04 ran in December 2020 and we had more than 300 West Australian hackers compete in person for more than fifteen thousand dollars in prizes. Perth was fortunate to skip most of COVID, so we supported multiple locations around the CBD for players to compete from, but we also had a number of pre-approved interstate and other remote players. Western Australia is a big state and we wanted WACTF to be as inclusive as possible to WA nationals. So at its fundamentals, WACTF aims to increase the cyber literacy and skills in the WA infosec community, facilitate

new entrants to the field, whether that's from high school or university graduates through to people who are career transitioning, and to showcase the talent that we already have in the state to industries that are looking to hire infosec talent. Ultimately, WACTF aims to make WA hackers a cut above the rest. Or at least that's what I think; maybe the real reason was just an excuse to hire an entire cinema for the awards ceremony and watch Hackers on the big screen. We also eat several thousand dollars of pizza each year, and we've even had Red Bull sponsor us, because hacking is an extreme sporting event. WACTF is a two-day event with technical challenges built from real life.

Many of us in this room would have played CTFs before where, to solve a challenge, you had to realize that some random string was encoded in some obscure way and, oh, what do you know, it's also been ROT13'd. I see limited value in those sorts of challenges. Last year's WACTF had 52 challenges split between web, forensics, exploitation, crypto, IR and misc, and with the exception of the misc challenges, every one of them was built or modeled off things that the challenge author had seen in the real world. In its humble beginnings, WACTF started as a set of shared Docker containers running on a single host. We also had a syslogger and a jump box for

administration. This was the layout for the first two WACTFs. With so many challenge authors, we needed to make sure we could support their contributions without needing them to learn a bunch of new tools, and ensure that if it worked on their machine, it would probably work in prod. So Docker was a logical choice here, and we still use it to this day. Here's a Dockerfile from last year's WACTF. All of our containers must be built from maintained, minimal-attack-surface base images such as Alpine Linux or Docker scratch. They also mustn't run the challenge binary as root, and we do not allow privileged containers. Privileged containers are often the first thing that gets mentioned

when talking about Docker security. They have most of their isolation techniques disabled or severely weakened, and they grant the container full access to devices on the host without any capability limitations. Capability limitations are a key strategy used by containers to reduce their attack surface and hence the ability for an attacker to escape out of the container. Root inside of the container isn't the same as root on the host. Linux divides the privileges traditionally associated with the root user into distinct units known as capabilities, which can be independently turned on and off. Docker containers by default already have a reduced set of Linux capabilities, assuming they're not privileged containers. So if you find yourself in a container

as part of an engagement or another CTF, you should check to see if you're in a privileged container. You can do this by trying to run a command that requires a capability not normally given to Docker containers, such as the ip link add command, which requires the NET_ADMIN capability, or, if you have it, the capsh command will print all the capabilities available to your container even if you're not root. Escaping privileged containers is outside the scope of this presentation, but if this is new to you, I highly recommend you check out Frenchie and Maya's talk from BSides San Francisco last year called "Checking Your --privileged Container". We also use Docker Compose for authors to specify which pods they need,

sorry, which ports they need exposed for their challenge to run, and also to set resource constraints on the containers. Docker Compose is a simple tool that moves the configuration you would normally have specified on the command line when spinning up one or several Docker containers into a YAML configuration file. We'll come back to Docker Compose later on. The limitations of this early setup were clear: shared containers greatly reduce the types of challenges you can have without the player being in a position where they can tamper with or break the challenge for other competitors. It meant that having shellable challenges and many sorts of privesc challenges would be extremely difficult. So without wanting to break the challenge authors' Docker and

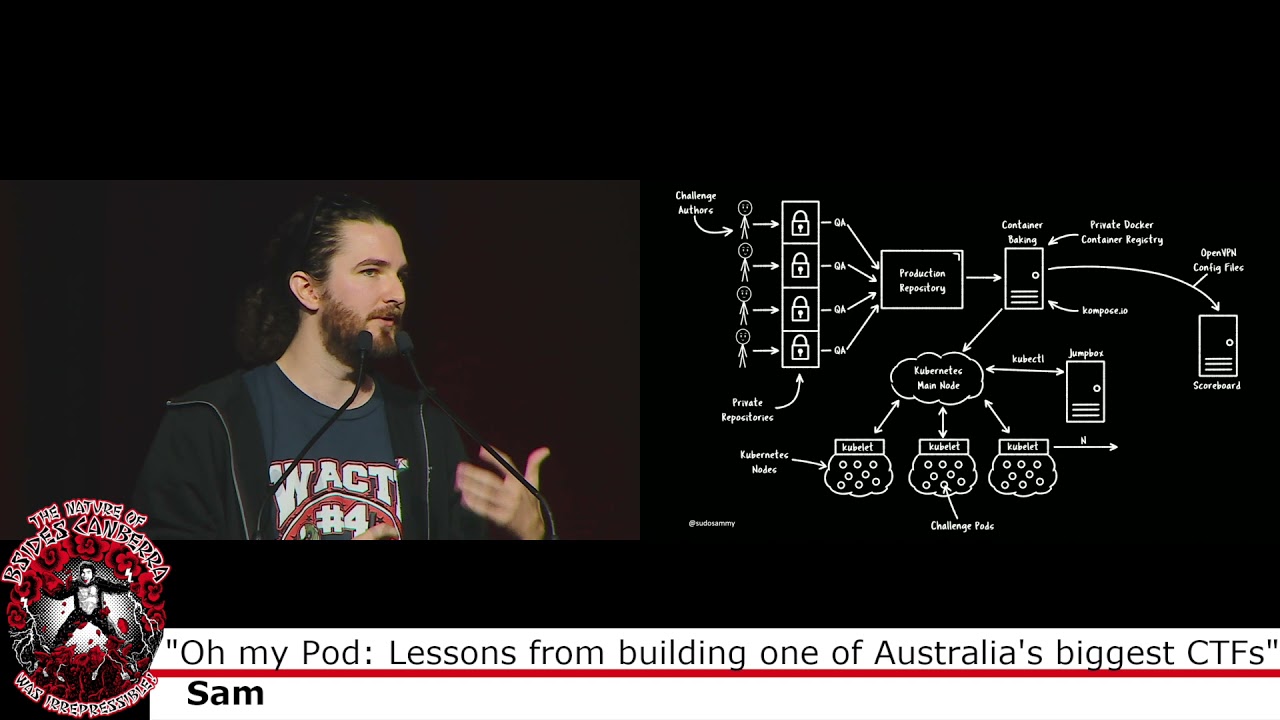

docker-compose workflow that they are familiar with, but also wanting WACTF to grow up and support unique environments per team, I decided to follow what everyone else seemed to be doing at the time and use the container orchestration platform Kubernetes. And with that, our infrastructure now looks a bit more like this. To break this down a bit, we'll start at the top left with the challenge authors. Each challenge author has access to their own private GitHub repository inside the WACTF GitHub organization. This is where they push their challenge code, Dockerfiles, docker-compose segment and their documentation. The private repo setup prevents the challenge authors from seeing challenges that they didn't

develop. As much as I trust all of the WACTF contributors, with more than fifteen thousand dollars in prizes each year we have to put in a bit of effort to avoid cheating. The challenges are then queued and merged into a production repo, which is pulled in by our private Docker image repository, where they're built into static images. I refer to this process as baking the containers. I don't think that's canon, but it makes sense to me: we're taking our Dockerfiles and the source code and we're baking them into an image file. We also use a project called Kompose (kompose.io) to convert our docker-compose file into something that Kubernetes will understand, which are deployments and

services. That's then all pulled into the Kubernetes cluster itself, which we have previously run on Google Kubernetes Engine, or GKE, and it's then replicated for the number of teams that we have. So this is the model we've used for the past two years. From the player's side, each team has a unique namespace within Kubernetes containing all of their challenge pods. Pods are Kubernetes-speak for containers; technically one pod can contain many containers, but in WACTF it's one to one. Each team has an OpenVPN pod, which is the only internet-exposed container. At the start of the game, players are able to download their team-specific configuration and connect into their own internal game network, segmented from

any other team, and it even has internal DNS for them. Last year WACTF kept more than 3600 containers alive across 190 namespaces, consuming 1.28 terahertz of compute and 300 gigabytes of RAM, and it cost us 600 Australian dollars a day, which I think is pretty impressive. In each team's namespace there's also a shell box, which can be SSH'd to for the players to receive reverse shells and other callbacks from challenges. The shell box has several familiar tools installed on it, including bash, curl, wget, vim and nano. And I can understand if this setup raises an eyebrow: there are not many environments where you willingly let hundreds of hackers have fully interactive shells within them. We built this environment with the

expectation it would be hacked, just hopefully in the ways that we're expecting. If you're a defender who uses Kubernetes yourself, ask yourself: what happens when your containers are shelled? When the attacker has persistence in a Kubernetes namespace, what then? Well, for us, and likely you too, you're going to be concerned with the attacker doing one of two things. Either escaping the container security boundary: if a player is able to execute commands on the Docker host, which is the Kubernetes node that they're residing on, from inside a container, that would likely be game ending for us. Or an attacker moving laterally or escalating their privileges within the environment, so living off the land: if a player is able to access

resources from other namespaces, interact with Kubernetes itself or the cloud services it's residing on, that will also be game ending. So let's take a look at number one first. As I mentioned before, we use Docker Compose to configure our containers. Here's the segment of our docker-compose file for the shell box. You can see here we set container resource constraints, so this is saying that at peak, any given team's shell box can consume five percent of a single CPU core and sixty megabytes of RAM. Not only do resource constraints deter any resource-consumption denial of service attacks, but they also allow us to quickly calculate the total resource consumption required for the cluster to help us make

costing estimations. You can see that we also drop all the Linux capabilities from the container and then selectively add the ones that we know are specifically required for the container and the programs installed on it to run. We do this for shellable containers, but since the overhead for doing this is pretty high, for other challenges we leave most of the capabilities alone and the docker-compose segment looks more like this. Due to a legacy compatibility issue with Docker, containers in Kubernetes are given an extra capability, NET_RAW, by default, which allows them to use raw and packet sockets. What that means practically is that containers can DNS spoof each other

and intercept traffic that's destined for one another. Obviously that's far from ideal, so we drop that capability from all of our containers. This attack depends on the cluster using a networking stack that supports ARP spoofing, but that's actually pretty common, so I'd recommend both attackers and defenders check out the Aqua Security blog on the topic and race each other. You've probably heard this saying before, because it's almost guaranteed to be brought up whenever anyone talks about container security: containers aren't a security boundary. But let's be honest, that's a little bit confusing, because the name kind of suggests that they'd be good at containing things. But that's only the beginning of confusing container stuff.
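Returning to the capability checks described above (spotting a privileged container, and confirming that NET_RAW really was dropped): where capsh is not installed, one option is to decode the effective capability mask that the kernel reports in /proc/self/status. The following is a minimal sketch, not the tooling WACTF uses. The function names are hypothetical, and treating CAP_SYS_ADMIN as a sign of a privileged container is a heuristic assumption rather than a definitive test.

```python
from pathlib import Path

# Capability names indexed by bit position, as defined in <linux/capability.h>.
CAP_NAMES = [
    "CAP_CHOWN", "CAP_DAC_OVERRIDE", "CAP_DAC_READ_SEARCH", "CAP_FOWNER",
    "CAP_FSETID", "CAP_KILL", "CAP_SETGID", "CAP_SETUID", "CAP_SETPCAP",
    "CAP_LINUX_IMMUTABLE", "CAP_NET_BIND_SERVICE", "CAP_NET_BROADCAST",
    "CAP_NET_ADMIN", "CAP_NET_RAW", "CAP_IPC_LOCK", "CAP_IPC_OWNER",
    "CAP_SYS_MODULE", "CAP_SYS_RAWIO", "CAP_SYS_CHROOT", "CAP_SYS_PTRACE",
    "CAP_SYS_PACCT", "CAP_SYS_ADMIN", "CAP_SYS_BOOT", "CAP_SYS_NICE",
    "CAP_SYS_RESOURCE", "CAP_SYS_TIME", "CAP_SYS_TTY_CONFIG", "CAP_MKNOD",
    "CAP_LEASE", "CAP_AUDIT_WRITE", "CAP_AUDIT_CONTROL", "CAP_SETFCAP",
    "CAP_MAC_OVERRIDE", "CAP_MAC_ADMIN", "CAP_SYSLOG", "CAP_WAKE_ALARM",
    "CAP_BLOCK_SUSPEND", "CAP_AUDIT_READ", "CAP_PERFMON", "CAP_BPF",
    "CAP_CHECKPOINT_RESTORE",
]


def decode_capabilities(mask):
    """Return the set of capability names whose bits are set in mask."""
    return {name for bit, name in enumerate(CAP_NAMES) if mask & (1 << bit)}


def read_effective_mask(status_text):
    """Extract the CapEff hex value from the text of /proc/<pid>/status."""
    for line in status_text.splitlines():
        if line.startswith("CapEff:"):
            return int(line.split()[1], 16)
    raise ValueError("no CapEff line found")


def summarize(mask):
    """Report the capabilities discussed in the talk for a given mask."""
    caps = decode_capabilities(mask)
    return {
        "net_raw": "CAP_NET_RAW" in caps,      # raw/packet sockets, spoofing
        "net_admin": "CAP_NET_ADMIN" in caps,  # needed by `ip link add`
        # Heuristic assumption: SYS_ADMIN is not in Docker's default set,
        # so its presence suggests a privileged (or loosened) container.
        "looks_privileged": "CAP_SYS_ADMIN" in caps,
    }


if __name__ == "__main__":
    status = Path("/proc/self/status")
    if status.exists():  # Linux only
        print(summarize(read_effective_mask(status.read_text())))
```

Run inside a container, this prints a small dictionary for the current process. A container with NET_RAW dropped should report net_raw as False, while a mask with far more bits set than Docker's default points to a privileged container.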

This is a diagram that roughly outlines the container ecosystem today, and there's already too much in it to understand. There's a good chance that what you think Docker does is actually what two other projects that you've never even heard of do: containerd and runc. See, Docker is only responsible for providing the nice experience you get when working with containers. It helps you run Dockerfiles, sorry, parse Dockerfiles, run containers from the internet, and that's about it. It provides your CLI for interacting with those things, and that's nice. containerd is the API that sits between Docker and the runtime; it's responsible for managing the life cycle of the containers.

And then lastly you have the runtime. By default Docker uses runc; they were the original developers of it, and this is where the magic happens. The runc container runtime is responsible for providing the sandbox that runs your workload. It's what's known as an OS container, or a lightweight virtualization platform, which makes heavy use of existing security controls available in Linux, such as namespaces, seccomp, AppArmor, etc., to do this. So when you hear someone talking about Docker container escape vulnerabilities, they're much more likely referring to runc escape vulnerabilities. runc is the software that's in charge of containing the processes running inside your container, and it's only as good as the containment features

available to the host kernel. Are you feeling a little bit like this right now? Maybe you're wondering, why does this even matter? Well, I'm not suggesting that runc is poorly developed, because it's definitely not, but how it performs containment has limitations, and there are other runtimes that address those. So we changed our runtime to gVisor. It's a security-focused container runtime that's designed to be a drop-in replacement for runc. It's built by Google, and it aims to be an emulated Linux kernel that sits on top of the host kernel and operates in user space. You can think of it like a little mini kernel that operates on top to provide a defense-in-depth step.
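In Kubernetes, selecting an alternative runtime such as gVisor for a workload generally comes down to the runtimeClassName field of the pod spec. The sketch below builds such a manifest as a plain dictionary and prints it as JSON, which kubectl accepts in place of YAML. It is an illustration under assumptions, not the WACTF configuration: it assumes a RuntimeClass named gvisor already exists in the cluster, the helper name and image are hypothetical, and the limits simply restate the shell box figures from the talk (five percent of a core, sixty megabytes).

```python
import json


def hardened_pod(name, image, runtime_class="gvisor"):
    """Build a pod manifest that opts in to a sandboxed container runtime.

    runtime_class must match a RuntimeClass object in the cluster; the
    default "gvisor" is an assumption about how the cluster was set up.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Ask the kubelet to run this pod with the sandboxed runtime.
            "runtimeClassName": runtime_class,
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # 50 millicores is 5% of one core; 60M is 60 megabytes.
                    "resources": {"limits": {"cpu": "50m", "memory": "60M"}},
                    "securityContext": {
                        "runAsNonRoot": True,
                        "allowPrivilegeEscalation": False,
                        "capabilities": {"drop": ["NET_RAW"]},
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical image name, for illustration only.
    print(json.dumps(hardened_pod("shellbox", "example/shellbox:latest"), indent=2))
```

Keeping the runtime choice in one field means the same image can be run under runc or gVisor without rebuilding it, which fits the drop-in replacement idea described above.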

It's not quite a virtual machine, but perhaps it's the next best thing. There are several other container runtime technologies built for security in the ecosystem. Kata Containers and Firecracker VMs, which are just to the left of gVisor there, are probably the most popular. These are marketed as micro VMs that behave like containers within Docker and Kubernetes but provide the separation and segmentation of real virtual machines. That's definitely an area that we're looking to move towards in the future. So before we get too far ahead of ourselves, I wanted to recap what we've looked at so far. We've accepted that hackers are going to have persistent access to at least one of our containers,

and we've taken steps to reduce the attack surface of the containers themselves by using minified images and not running vulnerable binaries as root. We've further hardened our particularly sensitive containers by reducing their capabilities, and we've implemented a security-focused container runtime to further mitigate container escape vulnerabilities. But what about the other scenario, attackers that are looking to live off the land within the Kubernetes environment? Well, there are three main ways an attacker is going to try and do this: abusing inadequate firewalling or accessing internal resources, leveraging service account tokens, and leveraging Kubernetes secrets. Kubernetes has the concept of network policies that are used to create rules and control the flow of data within the environment.

We use these to build the team environments. This is a policy that's applied to each namespace to segment teams from one another. As you can see, they very quickly get hard to read and digest. Thankfully there are some good tools to help visualize them, so this is the same policy, now visualized. It shows the team namespace in the middle, all ingress traffic on the left and egress traffic on the right. From the ingress side, we can see that only traffic from pods in the same namespace is permitted, with all other ingress traffic, whether it's from outside the cluster or inside the cluster, being denied. From the egress side, we can see the

policy disallows any connections to pods outside the namespace, with the exception of the Kubernetes DNS pods that are shared by all teams and load balanced in a separate namespace. It's not shown in this image, but we only allow UDP port 53 to these pods to facilitate DNS, and nothing else. We have another policy specifically for the OpenVPN pod to punch through this to the internet, but again only on the UDP port that's required. This policy also does one other massively important thing, and that's to prevent the player from interacting with the Kubernetes API or any cloud metadata APIs. These are the holy grails of APIs if you find yourself in a position to interact with them.
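The per-team policy described above can be approximated with a single NetworkPolicy object. The following is a minimal sketch rather than the actual WACTF policy: the function name, the policy name and the kube-system default are assumptions, and it relies on the kubernetes.io/metadata.name namespace label, which recent Kubernetes versions set automatically. It also only has an effect on clusters whose network plugin enforces network policies.

```python
import json


def team_network_policy(namespace, dns_namespace="kube-system"):
    """Build a NetworkPolicy that isolates one team namespace.

    Ingress: only from pods in the same namespace.
    Egress: only to pods in the same namespace, plus UDP/53 to the DNS
    namespace. Anything not listed (other teams, the Kubernetes API,
    cloud metadata endpoints, the internet) is denied.
    """
    # A peer with an empty podSelector and no namespaceSelector matches
    # every pod in the policy's own namespace.
    same_namespace = {"podSelector": {}}
    dns_pods = {
        "namespaceSelector": {
            "matchLabels": {"kubernetes.io/metadata.name": dns_namespace}
        }
    }
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "team-isolation", "namespace": namespace},
        "spec": {
            "podSelector": {},  # apply to every pod in the namespace
            "policyTypes": ["Ingress", "Egress"],
            "ingress": [{"from": [same_namespace]}],
            "egress": [
                {"to": [same_namespace]},
                {"to": [dns_pods], "ports": [{"protocol": "UDP", "port": 53}]},
            ],
        },
    }


if __name__ == "__main__":
    # "team-001" is a hypothetical namespace name.
    print(json.dumps(team_network_policy("team-001"), indent=2))
```

Because both policy types are declared and only these peers are allowed, traffic to the Kubernetes API and to cloud metadata addresses falls through to the implicit deny. An extra policy, like the one mentioned for the OpenVPN pod, would be needed for anything that must reach the internet.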

Each cloud service provider has an internal API that can be used by resources within the tenancy to access various configuration information. They're mostly unauthenticated APIs and sometimes contain secrets or other information that can be leveraged by an attacker. And then the Kubernetes API is the same one that's used by kube control, which is the kubectl command, to administer most of the cluster's functionality. It can create pods, remove them, modify them, change firewall rules, execute code within existing pods, drop shells into pods; you name it, this API can do it. There's a great bug bounty report on HackerOne of the tester using a server-side request forgery bug to interact with the Google Cloud metadata API and then using it to obtain

root access to a portion of Shopify. To do that they used the kube-env Google Cloud API. This is one of the more lucrative APIs for Kubernetes clusters hosted in GKE. It's enabled by default and can only be disabled via the command line on creation of the cluster. This kube-env API returns a significant amount of information about the Kubernetes cluster, including a client certificate and enough information for you to authenticate to the Kubernetes cluster using that other API, the Kubernetes API. The severity of this will depend on specific configuration, but at least for the near future, this will get you within a couple of degrees of a complete

cluster own. You can see here the kubectl command using the extracted certificates from the Google API to interact with the Kubernetes cluster. The kubeletmein project is a much easier way to abuse this API, and it has support for all the major cloud-hosted Kubernetes clusters. So the second API that's available by default to resources in clusters is the Kubernetes API. As I mentioned before, this is the same API that's used for management of the cluster, so it's often exposed externally too, and getting privileged access to it really is game over. It has a DNS entry internally, so from any container you can easily find it at kubernetes.default.svc. Thank goodness for the defenders, it's an

authenticated API. Kubernetes supports a couple of authentication methods, but at minimum it requires a JWT for all sensitive API calls, regardless of whether they're internal or external to the cluster. And thank goodness for the attackers, there's a world-readable JWT mounted to every container. It's always in the same predictable location too, so with a shell, a local file disclosure or even some SSRF bugs, you can very easily begin using this API with these credentials. BSides San Francisco CTF found this out the hard way in 2017, as this was the vector that resulted in compromise of their CTF, and DownUnderCTF almost found this out too in 2020. Thankfully, these days the JWT that's mounted to pods by default has a reduced

set of capabilities, so it maybe wouldn't have been game ending for them, but definitely one step too close for my liking. I'm told a great CTF otherwise, though. So WACTF makes use of another policy to ensure that these tokens are not automatically mounted to our pods. Having your pod interact with the Kubernetes API or any cloud metadata APIs would have been something your team has very deliberately done. In many cases there's no reason why you'd want your pods to communicate with these APIs, and so firewalling them away and removing the auto-mounted service account token may be quick wins for you. But that's not even the most bizarre secrets management thing that Kubernetes does. Kubernetes secrets are a way to expose

sensitive data to pods. The documentation states that this is a recommended way for exposing things such as passwords, OAuth tokens and SSH keys to your pods, and is safer and more flexible than baking that stuff into the container image itself. They're either exposed to the pods by environment variables or, similar to the service account tokens, mounted to a folder inside the container. Calling them secrets, though, is a bit of a stretch, because by default they're just unencrypted base64-encoded strings, which is comical enough to be a CTF challenge in itself. A hint for the next WACTF, perhaps. So hunting for secrets and running the env command if you've landed a shell in a

Kubernetes environment could be a quick win for you attackers. So to recap again, we now know that making use of strong network policies, no service account tokens and no Kubernetes secrets by default are some good strategies to contain an attacker once they're inside your Kubernetes environment. That's really all I wanted to talk about today. We will probably, maybe, I don't know, run another WACTF this year. I'm interested in opening WACTF to more interstate players, but we'll tread carefully on that one to ensure that WACTF stays closely aligned to its goals. We have always had the ability to reboot pods and push updates to pods if we needed to, like mid

CTF, but allowing the players themselves to reboot a pod if they feel like they need to, sort of OSCP style, would be really good. We had AD challenges back in WACTF0x03 in 2019, but they were an absolute nightmare to get working and they only came through in the 11th hour, so no one was keen to try them again for last year. But it's a great CTF area, and so I'd love to bring those back as well. And WACTF has always been a nine-to-five Saturday and Sunday thing, and I'm not a fan of big 48-hour competitions and stuff like that, but given that a lot of people work on the

weekends as well, it would be nice to extend the hours a little bit, maybe go from 9am to 9pm or something, so that if people work a shift they can still jump in and have a bit of fun. And so with that I'll wrap up. I think, as my closing point: I hear a lot of people say that Kubernetes is hard to learn or it's hard to use, but I actually disagree with that. I think that anyone in this room could use Kubernetes tomorrow and start deploying workloads on it. But the more you learn about Kubernetes, the more you realize just how easily your cluster could be owned, and I think that's the hard part about

Kubernetes: building and maintaining secure clusters. So thank you very much. My name is Sam; I'm the state director for Trustwave in Western Australia and South Australia, but I live in Perth. You can reach me on Twitter or LinkedIn if you're that way inclined. Not to shill my company too hard, but we do do all things cyber security, so if you do run a Kubernetes cluster and you're interested in making sure it's secure, I can probably help you with that. Thank you. [Music]


Yeah, very good question. We actually haven't yet, touch wood. So we haven't had any container escapes or any sort of major problems like that. We've had a couple of challenges that didn't work as intended and we had to push updates to them. Mostly our problems have come from the cloud service providers that have been hosting our clusters. We used to run on DigitalOcean, but unfortunately we hit a scaling issue on them, and the DigitalOcean dashboard was throwing 500 errors the night before the game and support wasn't answering, and that was scary. So we don't use DigitalOcean anymore.

[Music]

Yeah, very good question. So the answer is possibly. I'm not too sure if they can DNS spoof across namespaces, though, and if they could DNS spoof across namespaces, that would be a much more severe problem. You could cause denial of service issues with other players' pods, and so I just would much rather play it safe and drop that capability, since they were never going to need it, rather than tempt fate. Yeah, good answer. Another question: is there any specific reason you chose to go with Google Kubernetes versus Kubernetes on AWS, for example? Because Google gave us money. And another question, the questions are coming in now: do you find it hard to keep up to date

with Kubernetes changes, or is that mostly handled by hosted Kubernetes? Yeah, so it's definitely one of the positives of using a hosted Kubernetes cluster like GKE that, for the most part, you're shielded from that. But yes, it is challenging, and I'm sure as we ramp up to do WACTF0x05 this year I will have to spend dozens of hours reading what has happened in the Kubernetes landscape over the last 12 months, and I'm sure there's been tons of things. Through this presentation I really only touched on some of the bigger-ticket items for Kubernetes security, but there's so much more: pod security

policies I didn't mention at all, security of the kubelet itself, which is the process that Kubernetes runs on the node to manage the containers on that node. There's a whole raft of security stuff you have to be wary of with that. Thankfully GKE does do a lot of that stuff for us. A few more questions. One question by GT: noting the historical advice against containers in containers, will WACTF be able to host challenges targeting Kubernetes itself with the current infrastructure? Yeah, not with the current infrastructure, but it is definitely an interesting CTF avenue to explore, and I would like to explore it, but I think we would be

[Music] it could go south very quickly if we tried to have people hacking the Kubernetes infrastructure that was also hosting all of the other pods for other players. And a few more questions still: given the secrecy about challenges and their solutions and so forth, how do you handle testing of the challenges? So the expectation is that all of our challenge authors should do all their local testing of their challenge, so that they feel fairly confident that someone with some amount of technical ability could read their documentation and solve the challenge itself. Every year there's a

couple of challenges that don't integrate kindly, and it's not necessarily the challenge author's fault, it's just Kubernetes nonsense. So once the challenges are submitted, we have a much smaller set of quality assurance personnel, just three or four people, who will go through and QA those challenges themselves on their local machine, and then I will help them integrate that into a little development Kubernetes cluster that we run for a couple of months before the event. And so we'll do a bit of integration testing there; we'll make sure that the documentation still works and can still solve the challenge. But there's always, there's

always issues, but thankfully most of the kinks are ironed out by the time of the competition. And we'll have one more question: what are some of your favorite past WACTF challenges? Oh gosh, that is a fantastic question. Oh, there are so many, that's such a hard one. So the Active Directory challenges were really, really cool, because that's something that not a huge number of CTF competitions around the place have. So it was really cool watching people learn Kerberoasting for the first time and getting local admin on boxes; that was very cool. We've had some fantastic web challenges as well, where the developer, or the challenge author,

has put a lot of effort into making it a working and very realistic web application as well. It really takes a lot of enumeration to find the entry point that you're looking for, and I love those. I love challenges that, when you load them up, look really real, and it's not just two HTML text boxes: log in here, nerd. You know. Well, great answers to all of those questions. Let's give Sam one more round of applause.