Fragilience: The Quantum State of Survivable Resilience in a World of Fragile Indifference

Our first speaker to kick us off in this first return to in-person, besides Las Vegas, is someone you probably know way better than me. He's someone who has probably had an impact on many of your careers and your lives. Ladies and gentlemen, please welcome Chris Hoff, or Beaker, to the stage.

good morning i thought i got out of this with the speaker not working but unfortunately it is can you guys hear me okay with this bane mask on yeah all right fabulous let's see let's see if this can get this to work all right cool so uh they asked me for an abstract for this talk which is what the line underneath the fraudulence is i took a word cloud of words out of words out of my presentation and threw it at them so it actually means nothing the title fraudulence is a mashup of two words fragile and resistant and resilience and there are a couple of takeaways that i'd like to establish out front about

what this talk is about and why i'm here and what i want you to take away from it the first is i have not been able to publicly speak in seven years that's what happens when you get subsumed by a risk-averse financial institution so uh not only is it b-sides back but uh i'm very thankful and grateful that i get to uh come up and and talk to folks and have ultimately a set of discussions after this um the second thing is i um there's a bunch of points in this talk that i think will come hopefully into into uh cognition as we as we run through it but it's about five different talks crammed into one so i'm

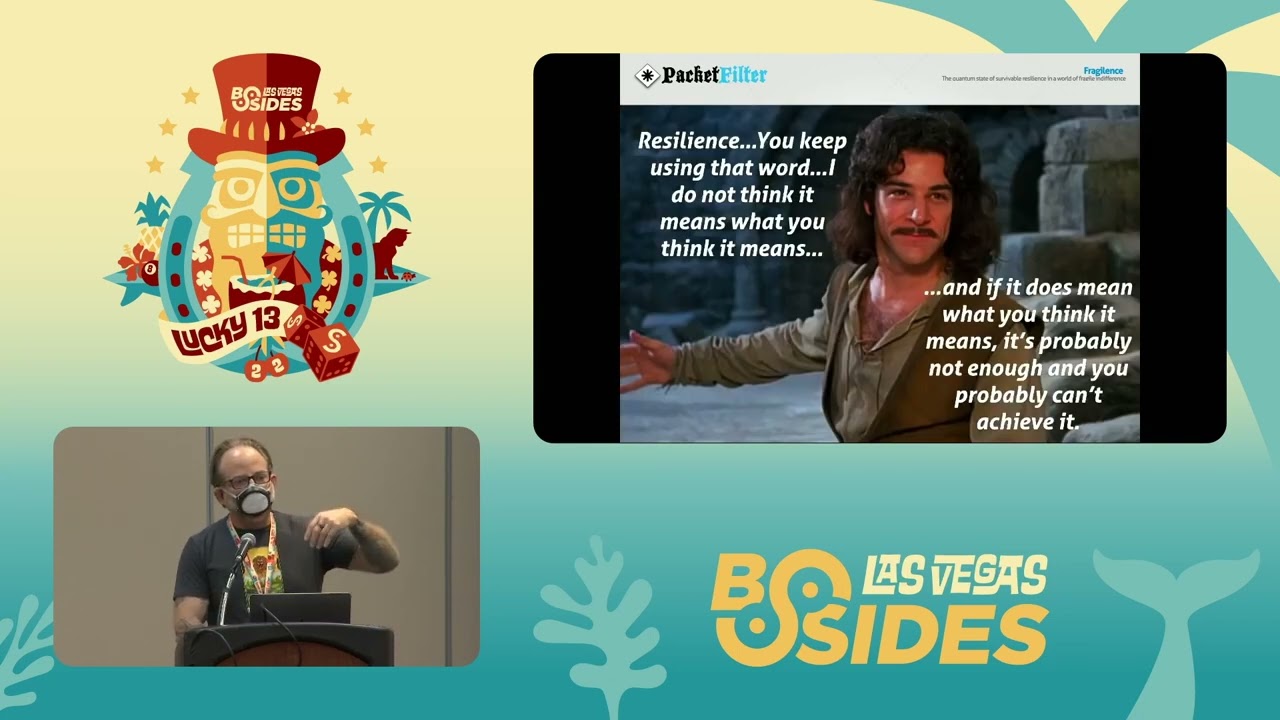

making up for for for uh for miss time when i think about infosec there's a lot more art and compliance than there is science today uh when we do make use of science and when i and i use that term broadly it's often very siloed we aren't very organized in terms of how we take advantage of science we don't define model or manage risk well at all uh we are not agile and our definition of resilience varies and it is insufficient this word resilience is sort of critical to this conversation uh instead of resilient and you some of you may moan or groan when you hear this term but we ought to be anti-fragile and anti-fragile is an interesting topic

that i'm going to get into in this talk because it talks about both the past the present and the future of where information security is headed and hopefully the thought process is here they don't pretend i don't pretend to be right what i do hope is that we start a set of conversations to get people to recognize that things aren't quite as bad as people make them out to be number one and we have an awful lot more at our disposal than most people actually recognize so with that this is not one of those grumpy oh my god infosec has broken talks um although i it sounded like it from the introduction but what it is is a discussion about

words so there's a bunch of words that get used interchangeably these days secure or security resilience uh robust survivable recoverable defensible certain sustainable resilient has become or resilience has become the new buzzword of our industry a lot of it is because of the types of attacks and the outcomes that have happened so when you look at things like supply chain attacks nation states when you look at what we're seeing with with ransomware no longer is it about being secure it's about being resilient and the definition of resilient is is interesting um if you look at what nist defines the um the government agency ultimately that that defines a lot of things for us and standards that we use

These words are important: the ability to anticipate, withstand, recover from, and adapt to bad things happening. The stuff on the left, which will be hard to read (I'll give you all these slides later), just talks about context: are you talking about network security, nation states, critical infrastructure, applications, people, and so on. The table on the right, which came from Accenture, was about goals and outcomes from the perspective of performance characteristics, meaning what happens if you do resilience well. If you look at that chart, you'll see that if you are more resilient, you will stop more attacks, find breaches faster, fix breaches faster, and reduce breach impact.

But the challenge of what is meant by resilience came to a head for me about five years ago. Well, not me, but a fictional guy we'll call Chris, at, let's say, a financial institution. Chris showed up to a regulator meeting where they were running a horizontal review across the G-SIFIs, the globally systemically important financial institutions. There are eight of them in the United States, by the way. I won't name them, but you can probably guess who they are. The question they ran through in their scenario modeling was: "Listen, we understand what you do from a security program perspective, and we understand all the controls you have. We want you to assume that all of your controls have failed, you are a smoldering pile of rubble, and you have to rebuild, recover, and restore. What do you do?" Besides the normal cliché (update my resume and crack open that bottle of bourbon), I said, "I don't do a lot. I'm in infosec. Besides securing the environment in which the owners of applications and services would restore, I don't do much." They said, "Well, who does?" I said, "The application and service owners in IT."

Then I watched this imaginary turret turn towards them. They said, "Okay, out of all the app and critical service owners in this bank, who's the most important?" They all raised their hands. "Okay, we're going to pick one: payments. Who's the owner?" "Me," this guy says. "Which one of the sub-component services do you get back up and running first?" Of course there were about 200 of them, and he couldn't really answer the question. That kicked off a set of these things called MRAs, matters requiring attention, across all these banks, which said you're doing resilience wrong: you need to be able to recover and rebuild within your service level agreements to make sure there are no impacts to the public.

That was the FRB, the US-based view. At the same time, the Bank of England was saying, "No, no, resilience means you should never go down in the first place." So if you look at what we talked about (anticipate, withstand, recover from, and adapt to adverse conditions), that definition by itself, the one that today drives a lot of what regulators and people do in their security programs, is out of date. The challenge is that if we don't understand that word, and it's supposed to be the next evolution of security, how do we even know what we're aiming for?
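The payments owner's dilemma was knowing which of roughly 200 sub-component services to bring back first. At heart, that is a dependency-ordering problem. Below is a minimal sketch, assuming the service dependency map is already known and written down. The service names are hypothetical and do not come from the talk.

The sketch uses a topological sort from Python's standard `graphlib` module (Python 3.9+). It groups services into restore "waves," so that each service comes back only after everything it depends on.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map for a payments service: each key depends on
# the services in its value set, which must be restored before it.
DEPENDENCIES = {
    "payments-api": {"auth", "ledger", "fraud-scoring"},
    "ledger": {"database", "message-queue"},
    "fraud-scoring": {"database", "feature-store"},
    "feature-store": {"database"},
    "auth": {"directory"},
    "database": set(),
    "message-queue": set(),
    "directory": set(),
}


def restore_waves(deps):
    """Group services into restore waves.

    Every service in a wave depends only on services from earlier waves,
    so the services within one wave can be restored in parallel.
    """
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # sorted for a stable, readable order
        waves.append(ready)
        sorter.done(*ready)  # mark the wave restored, unlocking its dependents
    return waves


if __name__ == "__main__":
    for number, wave in enumerate(restore_waves(DEPENDENCIES), start=1):
        print(f"wave {number}: {', '.join(wave)}")
```

Grouping into waves, rather than producing one flat order, reflects that independent services can be restored side by side. A circular dependency surfaces as a `CycleError` at `prepare()`, which is worth discovering before an incident rather than during one.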

Some years ago, whether you're a fan of him or not, Nassim Nicholas Taleb came out with a book titled The Black Swan. Black swan events are events that, in the middle of things, in the fog of war, you could not anticipate ever happening, but in hindsight you say, "Oh yeah, of course we could have seen that; we could have prevented that." Great examples: the pandemic, which is the reason we're wearing masks, and 9/11. The interesting thing about 9/11 is that, in hindsight, planes had crashed into buildings before, like the Empire State Building. We had seen the method, but the motive, opportunity, and outcomes were very different. So a black swan event is something you can't really predict, and its outcome is much greater than, rather than proportional to, the event itself.

Hindsight is a very interesting part of that conversation, because Taleb went on to write a book called Antifragile. What he stated in that book is the primary part of the definition of anti-fragility: some things benefit from shocks. They get better; they don't just recover. Human bones are a great example: you break a bone, it calcifies, and it becomes stronger than it was in the first place. So anti-fragility, which is a huge part of the conversation we're going to have, is about getting better in the face of stressors, not just remaining the same.

You can visualize that with this graph. Gain or benefit is the y-axis, and stress or change is the x-axis. What you'll normally see when people use the word fragile is a concave response to stress: you break, and you don't get better. Resistance, or robustness, is a natural response: you resist and hold up for a time, but then you go back to being how you were. With resilience, you recover and improve for a while, but ultimately you go back to what you were doing. The key here is that anti-fragility is a convex response: you get better consistently. You don't just learn; you become stronger from the stressor.

If you look at how anti-fragility is more interestingly defined on the right, you have this notion of disorder, volatility, and randomness, and your responses to them. On the agile, anti-fragile side, you embrace change and gain or benefit from it: you absorb the shock and get better in the face of stressors.
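Taleb's convex-versus-concave distinction can be checked numerically with Jensen's inequality. Take a steady stress and compare it with a stress that swings symmetrically around the same mean:

- For a convex response, the average outcome under the swinging stress beats the steady one, so volatility helps.
- For a concave response, the swinging stress does worse, so volatility hurts.

The quadratic and linear curves below are arbitrary stand-ins chosen for illustration; they are not taken from the talk's slides. The linear curve is labeled `neutral` rather than "robust," because the talk's robust curve is not simply linear.

```python
from typing import Callable

Response = Callable[[float], float]


def fragile(stress: float) -> float:
    """Concave stand-in: harm accelerates as stress grows."""
    return -(stress ** 2)


def neutral(stress: float) -> float:
    """Linear stand-in: volatility neither helps nor hurts."""
    return stress


def antifragile(stress: float) -> float:
    """Convex stand-in: benefit accelerates as stress grows."""
    return stress ** 2


def volatility_gain(response: Response, mean: float, shock: float) -> float:
    """Average outcome when stress swings to mean - shock and mean + shock,
    minus the outcome of a steady stress at the mean (Jensen's inequality).

    > 0: the response is convex here, so volatility helps.
    < 0: the response is concave here, so volatility hurts.
    """
    swinging = (response(mean - shock) + response(mean + shock)) / 2
    return swinging - response(mean)


if __name__ == "__main__":
    for f in (fragile, neutral, antifragile):
        print(f"{f.__name__:>11}: {volatility_gain(f, mean=1.0, shock=0.5):+.2f}")
```

With a mean stress of 1.0 and a swing of 0.5, the gains are -0.25, 0.00, and +0.25 respectively. The same swing that hurts the fragile system improves the anti-fragile one.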

Our trigger word, security, is for the most part all the way to the left. We try to get to at least a baseline that we feel comfortable with. We don't like change and we don't adapt very well; think about controls, and how we resist change and basically stay the same. It's not that we don't learn and incrementally get better, but we don't fundamentally change; the system itself does not get stronger. So if you start thinking about what that means for our practice and our industry, you could posit that infosec is actually fragile. Without making that sound like a terrible, negative thing, there's a lot of opportunity there. In fact, those opportunities have come from decades of work done in the past.

There was a paper written in 1999 titled Information Survivability, and it talked about how the world was changing. On the left, for example, you can see that systems that are centrally networked and under organizational control will transition to systems that are globally networked with distributed control: the internet, without barfing things like web3. You can go down this list. At the bottom, it says that today security is seen as an overhead or an expense, whereas survivability is an investment essential to the organization, and that IT-based solutions have to become enterprise-wide and risk-based. We look at that today and say it makes total sense, but in 1999 it was sort of heresy. The people who wrote this thought about the evolution from secure to survivable, which is a step toward anti-fragile.

When I looked at why we don't, can't, or have a difficult time getting better, one of the challenges is that we seem to conflate three things. The first is tactical information frameworks, sort of the what. The second is sets of guidelines and playbooks that are linear action chains, the how. The third is decision systems for complex system problems, which actually tell us about the why, when, and who.

Now, that may not make a lot of sense in one slide, so let me clarify. Look at this spectrum of sensors, sense-making, decision systems, and levers and actuators. Today we have an enormous number of sensors: take whatever you want in terms of instrumentation or observability and define it that way, in a shotgun across the stack. We have many different sense-making capabilities: lots of consoles, lots of single panes of glass that allow you to visualize what you're seeing. We tend to skip the decision systems that allow us to make decisions on risk. Instead, with some level of automation, we head straight to these levers and actuators. You'll note the words on the top; you'll see them again later: probe and sense, categorize and analyze, weigh and decide, and act and respond.

Having run large-scale security operations teams, I'll say that having the time and luxury to make decisions in the fog of war is very difficult. But a lot of that has to do with the velocity, the volume, and the quality of the data we capture in the first place, and we're going to get into that in a bit. I posit that the reason we are largely fragile and not anti-fragile is that we don't take time to invest in decision systems that actually make risk-based decisions.

In fact, it's just the opposite. This is a small sampling of the products sold by a reseller called Optiv. There are literally thousands upon thousands of sensors, sense-making tools, and levers and actuators you can buy today. Each of them claims to be open and integrated, et cetera, but the reality is that none of them really feeds into a methodology that lets you make a decision. As an illustration, I did a quick inventory of a lot of different systems, from IT asset management to graph-based functionality in clouds to vulnerability management platforms. You name it, there are tons of different ways to visualize the data we get from sensors.

We have the linear view in Lockheed Martin's Cyber Kill Chain, which says this is the methodology by which every adversary enacts his or her craft, and it's always linear. In fact, we know that in many cases it's iterative. We have great tools like MITRE ATT&CK, which categorizes the knowledge base of adversary tactics and lets us think about how adversaries may be looking at exploiting us. We have cool things like D3FEND and Attack Flow, which let you visualize and map your defenses against attacks to know how well sorted you are against a particular adversary. I think Gabe Bassett is giving a talk on Attack Flow later, so go attend it.

We have the NIST Cybersecurity Framework, which says you should identify, protect, detect, respond, and recover. It's presented as linear, but it's not; in fact, you go back and forth a lot of the time. We have Sounil Yu's Cyber Defense Matrix, which takes those NIST categories and maps them across asset domains. It lets you visualize where you have clusters of technology or human-based responses to particular sets of threats, and so understand where you're weak. If you were to map this in your environment, you would notice that in most cases you have very few things in respond and recover, or at least in the recover category, because it's mostly people-focused. We have great things in the vein of threat modeling, with structured processes to do it. Pick your favorite model: STRIDE, DREAD, PASTA, you name it. So we have all the capability to document how systems ought to work.
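To make the Cyber Defense Matrix observation concrete, here is a minimal sketch. It lays an inventory out on the matrix's grid, which crosses the five NIST CSF functions (identify, protect, detect, respond, recover) with the five asset classes Sounil Yu uses (devices, applications, networks, data, users). It then counts the empty cells per function. The capability names and their placements are hypothetical examples, not a real or recommended inventory.

```python
FUNCTIONS = ("identify", "protect", "detect", "respond", "recover")
ASSET_CLASSES = ("devices", "applications", "networks", "data", "users")

# Hypothetical inventory: (capability, function, asset class).
INVENTORY = [
    ("asset inventory", "identify", "devices"),
    ("endpoint protection", "protect", "devices"),
    ("EDR", "detect", "devices"),
    ("SAST scanner", "identify", "applications"),
    ("web application firewall", "protect", "applications"),
    ("firewall", "protect", "networks"),
    ("network IDS", "detect", "networks"),
    ("incident response retainer", "respond", "networks"),
    ("data classification", "identify", "data"),
    ("DLP", "protect", "data"),
    ("backups", "recover", "data"),
    ("MFA", "protect", "users"),
    ("phishing reporting", "detect", "users"),
]


def build_matrix(inventory):
    """Map each (function, asset class) cell to the capabilities placed in it."""
    matrix = {(f, a): [] for f in FUNCTIONS for a in ASSET_CLASSES}
    for name, function, asset in inventory:
        matrix[(function, asset)].append(name)
    return matrix


def gaps_by_function(matrix):
    """Count empty cells per function; a high count flags a weak column."""
    return {
        f: sum(1 for a in ASSET_CLASSES if not matrix[(f, a)]) for f in FUNCTIONS
    }


if __name__ == "__main__":
    m = build_matrix(INVENTORY)
    for function, empty in gaps_by_function(m).items():
        print(f"{function:>8}: {empty} of {len(ASSET_CLASSES)} asset classes uncovered")
```

In this made-up inventory, respond and recover are the sparsest columns, mirroring the pattern described above. In a real mapping, those cells are often filled by people and process rather than by products, which is exactly why they look empty when you only inventory tools.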

We have David Bianco's Pyramid of Pain, and the effects and outcomes in terms of how we could degrade, deny, destroy, or otherwise disrupt adversaries based on the availability or unavailability of certain IOCs. We even have amazing FAIR-model-based frameworks for doing quantitative risk management and risk assessment. What that really means is that you can express risk in dollars, based on the probability, frequency, and magnitude of loss, and attribute dollar amounts to what a particular event could cost you.

So I just shotgunned you and fire-hosed you with an enormous amount of stuff we have. You'd say, "Well, if that's the case, then why can't we make good decisions quickly?" A lot of that would be answered by these four guys: Dave Snowden (not related to Edward Snowden), Jens Rasmussen, Nassim Nicholas Taleb, and Simon Wardley. They're incredibly smart people. I only share two things with them, a receding hairline and a graying beard, but I am a fan of their work. We're going to cover some of it in a very rapid-fire way, because I want you to think about these tools and whether you're even thinking about using them.

Simon Wardley built a process, and open-sourced it, called Wardley Maps. It's a way of mapping strategy. A lot of people use it for business, but you can literally use it to map anything. It uses a set of doctrines and rules that let you start from a customer's or user's needs and chain together needs, dependencies, and components. That allows you to make really interesting decisions in a relatively short period of time. There's a saying that all maps are wrong but some are useful, and it's true, because a map is a very personal thing. Maps are good if you know where you are. If you don't know where you are, it's very hard to know how you're going to get there, even if you know where you're going. That's where mapping comes into play.

This particular map was open-sourced and published on the web. It was made by a team that looked at executive management as the decision maker, or customer, with drivers like regulatory compliance: they need to prevent breaches, detect breaches, and manage breaches. They laid those components over an x-axis showing each component's maturity, from genesis to custom to product to commodity. If you follow the chain, regulatory compliance depends on automated control monitoring. As they grouped it, that component's position on the axis means some of that stuff would have to be delivered in-house: it isn't available to just buy, so you have to build it. Other stuff, in their view, was more commoditized and product-oriented, such as vulnerability scanning or attack monitoring. So mapping like this gives you a view. Take something like Sounil's Cyber Defense Matrix and say, "I have all these capabilities and tools, but I'm not able to harness all of them myself." How can I map them and make use of them in my environment to make better decisions?

Mr. Snowden is famous for something called the Cynefin framework. It's a Welsh word, which is why it sounds nothing like how it's spelled, just like Cymru. It's a decision framework based on four different domains: simple, complicated, complex, and chaotic.

- Simple: you have a bunch of known knowns, so you apply best practices. You sense, categorize, and respond.
- Complicated: there's a bunch of known unknowns, things you know that you don't know. You sense, analyze, and respond.
- Complex: a bunch of unknown unknowns. You absolutely don't understand the space, but you're able to probe, sense, and respond.
- Chaotic: things are ultimately unknowable (think about the black swan events I was talking about). You act, sense, and respond.

The interesting thing is that it reflects the maturity of where you are in the space you're analyzing. If you apply this to infosec, we tend to take a binary view, looking at things as either simple or chaotic. I would argue that in many cases the majority of stuff is complicated or complex. The reason that's important is that if you're trying to make decisions, especially in the infosec space, you have to understand the domain in which you are actually afforded good-quality data.

If you apply this to the history of aeronautics, in the beginning it was very chaotic. Somebody said, "We ought to be able to fly," but nobody knew how, or what the thing should look like. There were weird designs with flapping wings and corkscrews and a whole bunch of other stuff, and people tried, iterated, and failed a lot; they made good decisions by just trying things out. Then came complex: after the Wright brothers made a plane with wings, a propeller, and a tail, people knew you could fly. It was a good and reasonably safe plane, but to make planes better, they had to iterate on and test what was already there. Years later you got to the complicated realm, with longer and harder problems like overseas travel, which brought a whole new set of challenges. I need to make decisions on insulated cabins, fuselage, and equipment, and fuel-efficient jet engines need to be cost-effective. In the complicated space, you had to analyze hundreds of materials and designs and test them to make sure they actually worked. Today, in the simple realm, we already know how to fly. There's not a lot of magic, unless you're optimizing for a particular trait like speed, longevity, or potentially outer space travel. If somebody needed to build a plane today, they could pretty much take all the existing knowledge and apply it.

Now, that's important. I got lazy and ran out of time; I was going to do one for infosec. But you could map this to any one of the domains, such as perimeter security or data security. What you would find is that in many cases the data you have would indicate that you should make different decisions.

What's interesting about that is that there's a parallel field of study called safety science and human factors. A guy named Jens Rasmussen, who died around 2018, talked about four themes that emerged in his work. This is the part that we tend to leave out a lot in infosec. You can all let out a collective groan about users: if only we didn't have users, security would be easy, right? At the end of the day, most of the controls we impose are meant to prevent users from doing bad things; we tell them not to click on stuff, we tell them not to do stuff. What he actually said, for industrial scenarios like nuclear power, is that there are four things you have to think about:

- Human operator performance results from behavior, and you can actually identify and model behavior, which is something we don't do a lot of.
- Human operators are flexible and adaptive; they compensate for shortcomings.
- They cope with complexity by applying mental models, using the skill-, rule-, and knowledge-based system that he archetyped.
- Risk management requires an understanding of all of the inputs and outputs.

The whole point is that this brings in another definition of resilience, called cognitive resilience. It refers to a practitioner's, a user's, or a person's ability to cope with unexpected events. Whether you're in security or you're a particular user, we count a lot on people making good decisions, which is a challenge.

By the way, who's seen Soylent Green, the movie with Charlton Heston? All the old people, yeah. It was set in 2022, by the way, and the irony is not lost on me.

So what do we have to do to apply all this stuff in infosec? Mario Platt recently gave a really interesting talk on how we apply safety and our thinking about safety. You'll also see things like I Am The Cavalry, and a bunch of stuff that Josh Corman and team have done around medical devices, thinking in the same way about how we make sure devices are safe and high quality. There are different strategies you can apply based on the domain you're in. I use this big, giant modeled mess to make a point. Say you have a threat actor, threat models, and situational context from Snowden's mapping. You take human factors into consideration, map this stuff out, and apply your models. What you ultimately get is a way to apply the stuff we have today but make decisions in a more formulaic way. Now, this looks complex, and it will be decades in the making.

What I want you to take away from that is a much simpler view of what this means for infosec. John Lambert did a great job of summarizing this, somewhat orthogonally, in one of his talks on advancing infosec towards an open, shareable, contributor-friendly model that speeds up infosec learning. That matters because everything I just talked about is about learning about your environment. On the left, he said that traditional defenders defend a list of assets, manage incidents, minimize risk by keeping incidents secret, view a pen test as a report card, and think about stopping attacks. Modern defenders defend a graph of assets; you've also heard "defenders think in lists, attackers think in graphs," and it's the same concept. Modern defenders manage adversaries and maximize learning by sharing incidents with trusted outside peers. They view pen tests as an input to getting better, and they think about increasing the attacker's cost and time to attack them.

This is a little simpler to grasp. Look at how we've applied those rules, and all that muck I talked about at the beginning, often without even knowing it: you see a lot of security teams structured and organized very differently than they were before.

- Diffused and embedded security teams, especially in places that build product. You literally have embedded security team members who aren't part of some big collective Borg in the middle called infosec.
- The proliferation of continuous integration, continuous deployment, and now continuous security, as we integrate security tooling into our dev toolchains.
- Cloud and site reliability engineering, which have brought a lot of the practices I just talked about, secure by design and secure by operation, into the fold.
- Whole new subspecies of capabilities, which we've put new names on, like observability and detection engineering. They're about where we sit on that sensors, sense-making, decision systems timeline.
- Robust threat and risk modeling starting to take shape, with people doing this stuff more religiously.
- Chaos engineering entering the chat. We start thinking about the Simian Army, turning things off and on, and adopting cloud design paradigms like design for failure, which AWS put forward a decade and a half ago. That is, by the way, antithetical to what we do in security.
- The emergence of a book that, in my opinion, you should all run out and get if you haven't read it: Team Topologies. It's about how you organize technology teams to optimize what you deliver and how. We're going to cover that a little bit.

By the way, my little timer is not running; what's the time? 9:55? Oh, fantastic. All right, I'll slow down a little bit.

Wendy Nather, the goddess of all goodness in security, came up with a term about 11 years ago: the cybersecurity poverty line. The visualization may not be accurate, but it's good for effect. She basically said that big companies can afford more people and technology, and there is a line, the cybersecurity poverty line, below which many of us are afflicted by the fact that we can't. So how are we supposed to defend things when we don't have the budget and people to do it? A lot of this is not because we don't have the information or the skills; that poverty line is real. So we have to think about how we structure and organize what we do without depending on spending more money or adding more people. A lot of that harkens back to the statements I made about automation earlier.

Then there's Dino Dai Zovi's keynote at Black Hat 2019. I don't expect you to read this slide; I'll hit the high points. He said: look, software teams need to own their own security now, and security teams need to become full-stack software teams. Let that sink in for a second. What that means is that, from a security perspective, we need to be able to deploy security as code and inject ourselves into the frameworks and methodologies that developers use. He also went on to say that security teams will become internal security software teams that deliver value to other internal teams through self-service platforms and tools. Some of you look at that and go, "That sounds great." But what many of you have interpreted it to mean is that we should now just call ourselves DevSecOps teams, because instead of being on-prem, we've moved to the cloud.

Mario Platt did another great talk where he captured a bunch of input from the intertubes:

- If your strategy mentions the word DevSecOps and you don't understand what it means for the governance teams, and thus the regulators, you're not really doing DevSecOps.
- If you're not increasing the agency and ownership of security among those who own the product, you're not doing DevSecOps.
- If you're not enabling the best possible outcomes for developers and engineers, you're not doing it either.

All you've done is rebrand yourself to look relevant. There's also a quote at the bottom from Kelly Shortridge, who has lots of spicy and snarky takes on a lot of stuff. What she basically said was that DevSecOps is something most security teams think they can run out and buy to make themselves seem more relevant. That may seem a little gruff, and in some cases wrong, and just because you read it on the internet doesn't mean it's true. But how many of you have been in conversations where the words site reliability engineering have come up? SRE is now something that some manager says you ought to go do without really knowing what it means.

harking back to discussions that you might have around how it was implemented at google sre is not used in every software team it's very expensive it's very onerous and ultimately is it the right design principle and a destination aim for sure but in the same way that devsecops or devops in that particular case means a lot of things to a lot of people simply calling yourself devsecops does not actually get you towards being more anti-fragile uh in in fact the problem becomes even more interesting there's a law called conway's law that basically says for any organization that designs systems you ship your org chart and what's funny about the pictures you see there like amazon google microsoft

or the funniest one is oracle where engineering's this big and legal is this big right the reality is they ship their org chart and the usability of the products really do reflect how the software developers and security teams are organized and that little graph for microsoft on the right that indicates how you ought to structure your infosec team is incredibly complex right so we are essentially applying conway's law to security when the org and engineering teams and business teams are look completely different from how we're organized so there's a thing called the reverse conway law which allows you to basically say you really are ought to organize yourself differently there's this notion of team topologies i'm not

going to get into it in detail, but if you haven't read the book, again, I suggest you do. It talks about four different types of teams: stream-aligned, enabling, complicated-subsystem, and platform teams. They do different things, they provide different services, they consume different services, and ultimately they have different ways of working with each other, so you've got collaboration, X-as-a-service, and facilitation. Just really quickly, take that for what it is. I pulled down an example that Docker has implemented, in what they call "Building Stronger, Happier Engineering Teams with Team Topologies," and what they had on the left were very monolithic teams, where if you were

building something like Docker Hub, you would end up with a subordinate team that does UI, middleware, and back end, and that team is responsible for that particular product. They would ultimately have to jockey for time, money, and resources to get their product done, and there's a lot of shared code and a lot of ambiguity as to who owns what. So the new structure they ultimately applied was one where they organized their development teams writ large: everybody responsible for, for example, the growth category, which would be account growth or the akap growth team,

or a particular product like Docker Hub, had everybody they needed to bring that product to market, ultimately including security teams. They built these team APIs that describe exactly what each team does and how the teams interact. Why that's important: if you take it to the next level (and this next slide is something I added, because they alluded to it but didn't include it in their slides), and you think about it from a security model, there's this notion of a complicated-subsystem or X-as-a-service set of core capabilities that aren't necessarily engineering-centric. That would be things like GRC, incident response and incident management, maybe

even vulnerability management, providing a set of services, with embedded security engineers in each of the product teams. What that gives you is a much more focused, close-to-the-business way of thinking about which decisions you ought to be able to make to bring that product to market securely. There are a number of models out there, for example in large finance and other industries, where the security teams are either massively monolithic or very distributed, with the individual teams ultimately empowered to do what they want. But what team topologies has done is start to align how security teams function with the business and the engineers that

are doing the work. Dino and I were having a conversation with some other people the other day, and he said, "Well, if technology isn't all built by one monolithic org, then we shouldn't have technology secured by one monolithic org either," and that's a great summary of what it means. Organizationally, that also sort of... and I know Josh Corman's probably here (there he is, over there), and I put this in because he yelled at me the last time I didn't mention it in relation to resilience. I don't know, eight years ago, maybe more, okay, maybe even more than that, the Rugged Manifesto was really aimed at developers, but it talked about

how you should take this mindset of being rugged into software development. Ultimately, the important part is down at the bottom: it's not a technology, a process model, a secure development lifecycle, or an organizational structure; it's not even a noun; and it's not the same as secure. It's a way of applying these principles to think more holistically, and it really does rub off on what I talked about with Jens Rasmussen's safety work, things like: this is the way we function, this is the set of services, and this is the ethos by which we implement more secure code. Security is a byproduct of being rugged, right? And rugged is another word for

resilient, secure, survivable, etc., or in this case anti-fragile. You may be in the audience thinking, "Well, I don't work for a company that develops software." It doesn't matter; the same things apply. You consume software, so these concepts apply whether or not you develop it. You also may not be in a position to change the org structure, but you can think atomically about how you ultimately deliver services. Part of the problem I wanted to bring up is that security has a leadership dinosaur problem, and we're waiting for the meteorite to hit, right? Most

of the people who manage and lead security teams today in larger, established companies are old, like me. We came up in a time that was much more complicated and/or chaotic, inasmuch as security didn't yet exist with all the frameworks, governance, and tools. Many of us were network or system administrators when the internet came online; we started doing security stuff, and here we are. But we've ultimately been doing the same stuff over and over again. That's starting to change as you see new leadership and new blood, and for a lot of the younger folks in the audience, as you come up, you have thought processes, tooling, and knowledge sharing available to you. These events didn't

really exist the way they do today when we were coming up. So leadership is an issue. Organizational culture is another one: the notion of security being a control function and not an enabler. At the same time, I sort of ralph every time I hear somebody say security should be a business enabler; it should be, but how you organize matters in that context. And the culture we have is very punitive. There's a lot of noise and not a lot of signal, and we get drowned in it. Then there's sensing and sense-making, the lack of decision systems, and the issue of cognitive load. The

outcomes are really about "let's just not be any worse than we were yesterday," not "how do we get better." We have a very punitive incentive model, right? Instead of carrot versus stick, or ice cream versus broccoli, a lot of what we talk about with developers is what you can't do, not what you can do. And language is a problem, given how militarized our industry is. Just look at words like DMZ and pen testing. If you go into an environment with people who aren't security people... I mean, many of us got on the plane on our way here with our Disobey and DEF CON backpacks and strange piercings and Wi-Fi devices, and

we sort of look weird to people. We're not particularly accessible. In many cases we have over-militarized what we do, which makes it very difficult to communicate in plain terms. Somebody in my org said one day, "Jesus, we're saving passwords, not lives." Not unfair. So what does this mean? Listen, at the end of the day we have an awful lot at our disposal: a lot of data, a lot of tools, a lot of technology. But we have ultimately relied more on art and compliance as the way in which

we operate than on science. A lot of what we do is siloed. Places like this are fantastic because we get to share what we do, our successes and our failures. We can think differently about how we organize, and larger companies will have a trickle-down effect on all of us as they show success in organizing and operating security differently. We cannot continue to simply be the department of no. I know we've heard that a lot, but given the capabilities we have, being resilient, meaning being no worse than we were, able to bounce back but not actually learn from it

and get better, is not sufficient. We really need to be more anti-fragile, and again, that means getting better from stressors and adverse events. So that was my hope in combining five talks into one, and probably annoying the crap out of you with buzzwords. At the end of the day, I don't think anybody said it better than Dan Geer, who is up on the screen: "Work like hell, share all you know, abide by your handshake, and have fun." We can do all of that at the same time as we make better decisions by being less fragile and more anti-fragile.

This was not a talk meant to even have a punctuation point. It was meant to spur a set of conversations, discussions, and perhaps research into a lot of the strategies and things I talked about: Jens Rasmussen's approach to safety, Snowden's view of decision-making systems, etc. If I have time, I'll take questions as best I can, but the point was to spur a conversation, not to end with a big bang. I don't think I ended with a big bang, so at least I got one out of two. But yeah, you had a question?

"...so that when the accident happens, and it might not be your fault, it might be your fault, it doesn't really matter, the occupants of the automobile survive and walk away, right? We need to start engineering our security programs in exactly the same way." Great, yes. Let me replay that real quick for people in the audience. He's basically drawing a parallel to the automobile industry and its advancement of safety and safety science over time. They invented things like seat belts (or actually gave them away) and crumple zones, which are meant to protect the asset in case of an accident, and ultimately we need to do the same. It sort of ties into

a lot of what Jens Rasmussen said, but a lot of it is about being thoughtful, being rugged, being anti-fragile, in a way that makes us think about outcomes differently: not just "I stopped this particular event from occurring," but which impacts or outcomes it prevented from adversely affecting the org. Yeah, thank you for that; it was a good summary. All right. Yeah? "Unlike the automobile industry, maybe we should also be concerned about the people outside." Oh, that's an interesting one: unlike the automobile industry, perhaps we should be concerned with people outside the car. Well, my wife's FSD Tesla is certainly an indicator of that;

she's hit numerous curbs, luckily no people. But yeah, I do think the system as a whole, if you look at Snowden's thinking, right, the environment as a whole, is a very important piece: not just the people we protect, but unintended consequences. And if you need me to go, just give me the hook; otherwise I'll just keep talking. All right, anyway, I hope that was helpful. Thanks for being with me. I haven't talked in seven years, so I'm a lot out of practice. Thanks. [Applause]