More bugs, bugs, bugs! Thoughts after a year of fuzzing popular open source projects


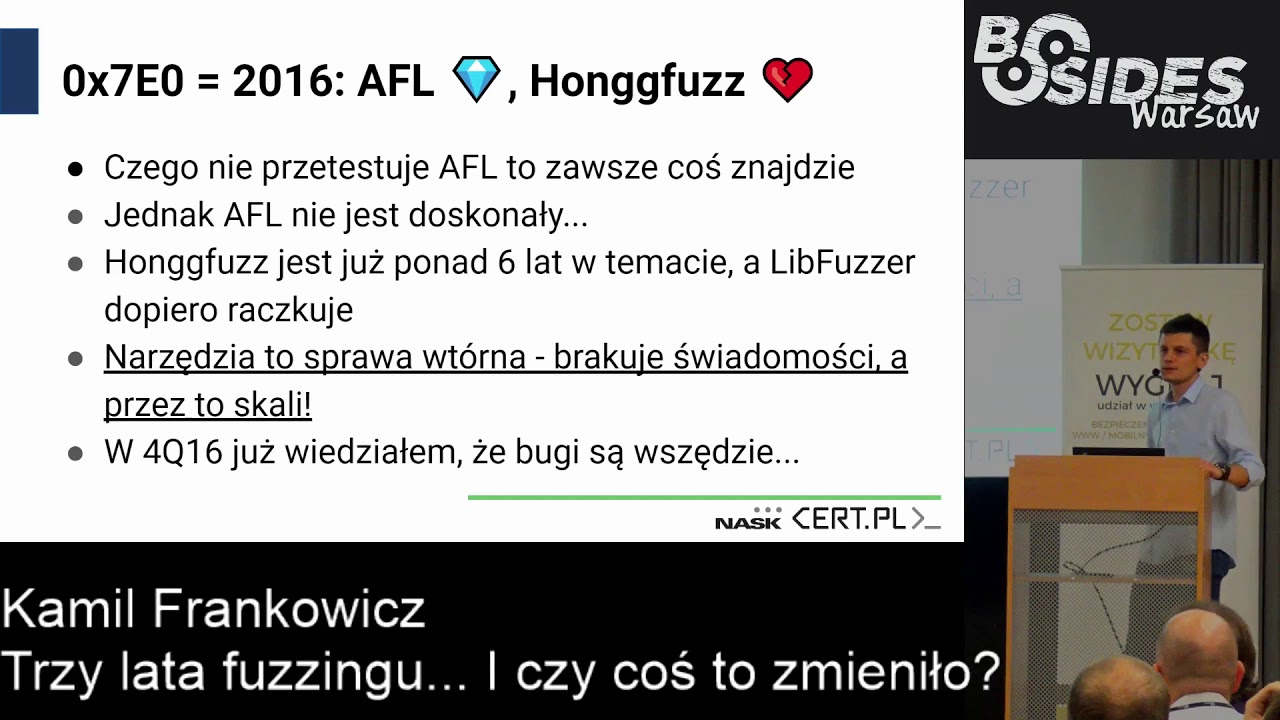

I want to talk about a project I have been working on for over a year now, namely fuzzing various open source projects. You probably know me, because this is my third time here. In 2015 I talked about SOHO routers and the vulnerabilities I managed to find in them. In 2016 I was just starting this project and I told you how to approach the process. This time it is a kind of summary, although I have not finished yet. I am still doing it, because there are still bugs, and until we eliminate all of them, we will keep going. I work at CERT Polska, like the other two

colleagues who presented before. We do different things. As my colleagues said, we are recruiting, so if someone wants to do various weird things, you are invited; just send us an e-mail. Besides that, I run a blog in my private time. I tweet a little, but without any hype, so Twitter is not my main channel of contact with the world. What I want to cover first is why I do all this, because it is hard to understand. I got such questions, among others from Michał: why are we doing it, and what do my team and my company get out of it? I'll tell you why in a moment. Then some statistics and some things I have learned, because they are not so obvious. First of

all, and this is a novelty in my slides, because I always try to make technical slides: a soft part about working with developers, because that is not so obvious either. Reporting bugs is something you have to do right, and you have to learn it. And of course the technical part: the most interesting cases from what I managed to find. To tell you about everything I would have to sit here for three days, and you wouldn't stand it, and I probably wouldn't either. So why? First of all, as with most things, because you can. Almost no one had done it. When did I start? At the end of September 2016. So it's like... The

approach of developers is changing right now; even though it was only a year ago, things looked a bit different then. At work and privately, my solutions use open source components, so the more secure the building blocks are, the more secure the solutions built from them. It's a win-win situation. Developers usually focus on functionality, as MSM showed. Even in e-commerce, software is rarely written with security in mind. Often it is copy-paste from Stack Overflow, and not only that. So it is worth doing something like this, at least to improve security. And too few people are breaking code. Definitely too few. You have to do it, as MSM showed, because

all sorts of curiosities really do come out. Often very strange ones. Why this technique? It is very effective. It is great at finding new paths in the code. There are very solid open source tools: AFL by Michał Zalewski and libFuzzer. They all tie into the LLVM tooling: AddressSanitizer, MemorySanitizer and more. That makes the work easier. Since I started this project, after being fed up with developers, it has been a pleasure for me. Even though I had to write a lot of code and automate a lot of things, it all still stands on the shoulders of giants, so it's cool. It's fast, I'll show you how many executions I can get, and it's easy to scale: just add servers and it works.
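To make the idea of mutation-based fuzzing concrete, here is a minimal sketch in Python. It is a toy and not how AFL or libFuzzer work internally: the `parse_record` target, its record format and the exhaustive single-byte mutation strategy are all assumptions invented for illustration.

```python
def parse_record(data: bytes) -> bytes:
    """Hypothetical target: 3-byte magic, 1-byte length, then the payload."""
    if data[:3] != b"REC":
        raise ValueError("bad magic")  # graceful rejection, not a bug
    length = data[3]
    payload = data[4:4 + length]
    # Deliberate bug: the length field is trusted, and the last payload
    # byte is touched without checking that the input is that long.
    if length:
        _ = data[4 + length - 1]  # IndexError when length is too large
    return payload


def mutate_single_bytes(seed: bytes):
    """Yield every variant of the seed that differs in exactly one byte."""
    for pos in range(len(seed)):
        for value in range(256):
            if value != seed[pos]:
                yield seed[:pos] + bytes([value]) + seed[pos + 1:]


def fuzz(target, seed: bytes) -> list:
    """Run the target on each mutant and collect the inputs that crash it."""
    crashes = []
    for candidate in mutate_single_bytes(seed):
        try:
            target(candidate)
        except ValueError:
            pass  # expected rejection of malformed input
        except Exception:
            crashes.append(candidate)  # any other exception counts as a crash
    return crashes


seed = b"REC\x03abc"
crashes = fuzz(parse_record, seed)
print(len(crashes), "crashing inputs, first one:", crashes[0])
```

Real fuzzers replace the exhaustive loop with coverage feedback and smarter mutations, and rely on sanitizers rather than exceptions to notice memory errors.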

The problem is that the entry threshold is low, but doing it at a really serious scale is hard; some things take effort to work out and you have to keep learning. Statistics: 44 projects. I tried to choose projects where finding a vulnerability has a large impact. The second group is projects that I use in my own solutions, at work and elsewhere. We managed to find 361 bugs, and 323 were fixed. It is disappointing that in half of the projects, exactly half as it happens, we found more than one bug. That shows things are really not good. I have collected 101 CVEs as of yesterday, because two came in

yesterday. Oh, I dropped a letter on the slide. The fastest bug was found in 2 seconds: a use-after-free in YARA. The longest hunt, a very interesting one that I will talk about later, took about 879 hours. I say "about" because I can only estimate it. The project ran on 16 servers, 7 physical and 9 VPS. It was no super-hyper VPS on Azure; I used, covert advertising here, RUB, where for 4 PLN per month you get quite decent computing power, 20 GB of SSD and 1 GB of RAM. I see that some of you are straining to read the slides, because the text and diagrams sit low on them, so I suggest turning on the

stream. On the stream everything is visible, just the slides. It is a very good solution. I tested it a year ago, and now I see some people watching the stream instead of looking at the slides, and it works quite well. So, which project has the most holes? The leakiest one is tcpdump, or at least it was. Over a billion executions; it is a fast binary. The input here was pcaps with various network traffic, because not everyone knows this, but tcpdump can analyze traffic as well as capture it. It has a lot of built-in parsers for various strange network protocols at various ISO/OSI layers, so it's cool. Second, second, second, listen.

Ok, that was not a question. The second project is Ghostscript. I don't know if everyone knows what it is for. It is a very cool project, because it can be used as a parser for PDF and PostScript documents, and it can serve as the rendering component in printers in an embedded version, so the impact is pretty good. I'm listening. Ok, ok, I'm not asking for trouble. Another project is Libav. If someone didn't know, it is a fork of FFmpeg, which split in two in 2010 or 2012: FFmpeg went one way, Libav the other. radare2, oh damn, I didn't correct that. I have 18 CVEs from radare2, so it should move up, because I got those two yesterday. YARA,

you should know it; whether or not you run sandboxes in your company, you certainly know it. The good thing about YARA is that the developers are fast and responsive. The bugs, as with radare2, are fixed instantly, and thanks to that you can start another fuzzing iteration. The next project is regular expressions: PCRE2, the Perl-compatible ones, also a very cool project. You can clearly see which targets are fast and which are not. The fast targets are simply text parsers, so they reached almost 4 billion executions this year. Slow projects such as Ghostscript reached only 80 million. Despite that, it was possible to find 18 different bugs there that were assigned CVEs. It could be surprising that there

is so much of it here. For Libav, 22,000 is a lot of crashes. The statistics come mainly from AFL, so you have to read up on what the author means by the "unique crash" feature. Without going into the basics: if any part of the execution path differs even slightly, AFL counts it as a unique crash. That is what makes these numbers so astronomical, while the real crashes that matter after filtering are far fewer. When I say unique crashes, these 916 crashes gave 40 CVEs. The numbers are the sum of all crashes found across all fuzzing iterations. Moving on: the types of vulnerabilities found. I am glad that

developers have learned to defend themselves against buffer overflows. There are relatively few such bugs. Stack buffer overflows do not even make up a whole percent of all the bugs found. An invalid write is also a way of writing outside a buffer; it could be a corrupted pointer or something like that. There are few of those as well. The largest groups are, of course, null pointer dereferences and invalid reads: more than half of the bugs are reads outside a buffer or reads of memory we should not be reading. The bugs in these two largest slices of the pie can let us break ASLR by leaking a pointer.
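A breakdown like the one on the slide can be tallied from sanitizer reports. The sketch below is only an assumption about how that might be done, not the tooling used in this project: it relies on the `ERROR: AddressSanitizer: <type>` header and the `READ of size` / `WRITE of size` line that ASan prints, and the sample reports (PIDs, addresses) are invented and shortened.

```python
import re
from collections import Counter

# Header line, e.g. "==123==ERROR: AddressSanitizer: heap-buffer-overflow on ..."
ERROR_RE = re.compile(r"ERROR: AddressSanitizer: (\S+)")
# Access line, e.g. "READ of size 4 at 0x... thread T0"
ACCESS_RE = re.compile(r"^(READ|WRITE) of size \d+", re.MULTILINE)


def classify(report: str) -> str:
    """Return a coarse bug class for one ASan report."""
    error = ERROR_RE.search(report)
    if error is None:
        return "unknown"
    kind = error.group(1)
    access = ACCESS_RE.search(report)
    # For overflows, separate out-of-bounds reads from out-of-bounds writes.
    if kind.endswith("-overflow") and access:
        return f"{kind} ({access.group(1).lower()})"
    return kind


# Invented, shortened sample reports for illustration only.
reports = [
    "==1==ERROR: AddressSanitizer: heap-buffer-overflow on address 0x602000000014\n"
    "READ of size 1 at 0x602000000014 thread T0\n",
    "==2==ERROR: AddressSanitizer: heap-buffer-overflow on address 0x602000000031\n"
    "WRITE of size 4 at 0x602000000031 thread T0\n",
    "==3==ERROR: AddressSanitizer: heap-use-after-free on address 0x603000000010\n"
    "READ of size 8 at 0x603000000010 thread T0\n",
    "==4==ERROR: AddressSanitizer: SEGV on unknown address 0x000000000000\n",
]

tally = Counter(classify(r) for r in reports)
for bug_class, count in tally.most_common():
    print(f"{bug_class}: {count}")
```

Splitting overflows into reads and writes mirrors the distinction made above between invalid reads, which mostly leak memory, and invalid writes.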

And then, as usual, ASLR and the randomized layout of the process memory stop working as they should. Among the more interesting things is the relatively large share of use-after-free bugs. However, these were not necessarily exploitable in the sense that you could do something cool with them. They were, for example, bugs in freeing memory. Later I will tell you about one such bug, where we freed too much memory: like a buffer overflow, only in freeing. At some point the loop doing the freeing touched memory that had already been freed. In general, instead of descriptions spread over 10 slides, it is always better to draw a picture. The process of

fuzzing generally comes down to a few simple steps, though some are a bit annoying, especially the ones at the bottom. Managing the corpus and the crashes is incredibly annoying. You have to filter everything well, and also merge the corpora properly after successive fuzzing iterations, in order to achieve something cool. At the beginning we scrape the internet, looking for PDFs, docx files, whatever we like, that will serve as input to break our target program. We look for the best ones and examine them. All of this is drawn with dashed arrows because it is basically one step: everything is about minimizing the corpus. I had to split it up in a strange way so that you can see the issues that will come up later. This

is how we examine code coverage: we look for as few corpus elements as possible that together reach the largest number of lines in the code. This is logical. It is simply a selection of the fittest, so to speak, as in a genetic algorithm. Then we start fuzzing: we pass our corpus through various strange mutators and feed it to our program. When we find something, we have to manage the crashes, so we filter out duplicates and keep the unique ones, because as you saw, the crash uniqueness algorithm is not perfect: during code execution we have limited information about what happened. I wrote a dedicated solution that automates all this and does it for

me. It simply collects the corpora, merges them and picks the best files. If I did it manually I would probably be at work from 8 to 22. Building a corpus is generally simple. There are many resources on the Internet; even Mozilla publishes what it feeds its fuzzers. Still, it is best to build the corpus yourself, for your own project. Do not just use random files from the Internet, because this corpus evolves with each iteration: there are more and more test cases, they get smaller in size, and of course code coverage grows. It is worth organizing it at the file format level. If we have a set of files, then when they

are reusable, we can feed a pcap to tcpdump, Wireshark, Snort, Suricata, whatever we want. Similarly with JPEGs or other formats. YARA input cannot be reused like that; with YARA it is weak. As for corpus management, that is, everything we distribute to the computers, to the servers: first, deduplication. Unfortunately, a lot of identical test cases are created between iterations. This is simply the cost of the whole process and you have to accept it. We minimize the corpus in terms of code coverage, as I said before. Removing unimportant data from the files in the corpus is a computationally very expensive operation, because each time we have to run the program, check if the change made at

a given moment has changed the code execution path, compare it with the previous one, and only then cut the data out, piece by piece. Generally I do not recommend it. I once did it on a corpus of 10,000 files, I do not remember for which binary, and it took a very long time. So I do not recommend it. It is better to build the core of the corpus from smaller files to begin with; then maintenance and creating dictionaries, which I will talk about in a moment, are much easier. And of course, automation: whatever we can automate, we automate and run on the servers. I follow such

a rule, and so far it has held up. As I said before, it is difficult to determine whether a crash is unique. At the moment such a state occurs in the program, the fuzzer has only limited information from the code instrumentation. If you are into this, I recommend reading the AFL documentation, the SanCov documentation and the LLVM documentation; there are a lot of interesting details in there. And yes, the uniqueness verdict is often wrong, as I said before. I use ASan to show me what is broken and exactly where something has been overwritten. Then it is much faster. How do I do it in my solution? Very simply: I use ASan reports. If I don't have ASan reports,

I do it using Valgrind. I minimize the crash that was found, because for one test case this operation does not take as long as for 10,000. Then we check whether we already have that stack trace stored in the database. If we do, we ignore the test case and do not bother with it. If a test case triggers a unique path, we have to define what uniqueness means. Sometimes many paths lead to one place, and even with 10 different functions called along the way in the ASan report, it is still the same crash. So we have to tune this parameter sensibly, so that there are not too many crashes, so as not to

catch a lot of duplicates, but at the same time take care that nothing interesting disappears. If we have a unique crash, great: we report it, exploit it or sell it somewhere. In the case of duplicates, we delete the file and have to live with the wasted CPU cycles, of which we waste a lot anyway. As for code coverage, this is a very interesting topic. The industry standard here, used by both AFL and libFuzzer, is SanitizerCoverage. I recommend it, because it is very fast, the instrumentation is lightweight, and it is enough to compile your program with it. Here, if you can't see it from

the back, I again recommend the stream. You compile with a switch like this one, and we get nice reports about which functions our test case touched. We can generate an HTML report and calculate the code coverage percentage for each file in a given project. This one is for YARA and shows where we actually end up. It helps a lot when analyzing manually what is going on. Now the mutating and breaking engines, that is, the fuzzers. Since I covered this in my presentation a year ago, nothing has changed. There are two main players, AFL and libFuzzer. The advantage of AFL is that it is very widely supported, and sister projects have been created for various

languages: Python, Go and Rust; I have links to them on the slide. With libFuzzer the matter is a bit more complicated, because it works at the function level, so we have to write the code that will be fuzzed ourselves. Of course, we can skip the technical side, that is, mutating the data and feeding it in; all you have to do is use a simple API. There is one disadvantage, though: it is fast, but it is very hungry for RAM. Last year I managed to use up 50, no, 300 GB of RAM, so that is quite something. But I still had a little left, so it's fine. If we have specific targets, such as testing some API, then we use libFuzzer. This is a comparison of sister

projects of AFL, which evaluate the usefulness of the paths found in the code in different ways. I don't want to delve into the technical details, because a whole paper was written on how to choose test cases. There are 4 projects that do it quite well. One targets paths that are rarely hit: the fuzzer is told to try harder and go down those paths. That is roughly how it looks. The rule of fuzzing, unchanged for as long as fuzzing has existed, is above all iterations per second. We don't count RAM and CPU cycles, because we waste plenty of them anyway. So generally, in-memory fuzzing,

which comes with a high demand for RAM. Not only computing power counts, but RAM as well. Then dictionaries, for example when parsing text such as YARA rules or PCRE2 patterns: the point is that the fuzzer should not have to guess the constants itself but should already know some of them. For example, that a YARA rule starts with the dollar sign and that hex values in YARA start with the number sign. It is worth giving the fuzzer this knowledge so that it does not have to discover it by itself. The advantage is a slightly different approach to the problem. As I said, there are different slides available here. Louder? Okay. But what's the point? For example, you can... No, just for the slides. A

slightly different approach to the problem, because it is very nice to use a Markov chain to prioritize the right test cases. This is what I was talking about: I simply tell the fuzzer, "Go there and take an interest in what you rarely visit, and leave alone the paths you practically always fall into." Testing individual commits is also a very interesting thing. Or searching for new paths using symbolic execution. Ok, I'm talking to myself here, but how many of you think you could use this in your daily work or projects? Ok, Michał, who else? You can talk about fuzzers for 3 days, but if we don't integrate them into our development process, it's a waste. Someone will be fuzzing somewhere on the side,

and the rest of the developers will see them as a weirdo wasting time on some nonsense. A guy from Google who is responsible for libFuzzer proposed an approach to development called Fuzz Driven Development. It is new, because it was presented at USENIX this year. In the process of writing code we also add fuzzers, the ones I mentioned among the AFL-related projects. We write tests, if we work in TDD of course. How many people write in TDD, out of curiosity? I knew there would be a forest of hands. I see one person. I have started writing in TDD myself, so... So when I start writing, it's ok. We add one step: we write fuzzers as well, and that is how it looks. We write tests

first, first red, then green, before writing the function, and then we write fuzzers. We make a commit and build the project in our Jenkins or wherever. Recently there was some RCE in Jenkins, so you have to be careful with it. And we run the tests. If the tests fail, we fix the code. If the tests pass, we fuzz the project. It is not specified when to stop fuzzing, because you can do it until the end of the world and one day longer. It is like with crash uniqueness: you just have to choose. We need to make sure that it won't take too long and at

the same time meets our business needs. If everything works and nothing falls apart for, let's say, an hour, then we deploy to production and it somehow works. And so it goes round in a nice circle. I think the cost of this approach is very small compared to the benefit, because writing fuzzers is simple. I don't have a slide on how to write a fuzzer with libFuzzer, but with AFL we don't have to write anything. There are even ready-made articles on various blogs on how to automate AFL with Jenkins. It is all there, no problem: copy, paste and it works. Google did it too. Google created an initiative called OSS-Fuzz. It does

it the same way and has a similar motivation to mine. But I was first, because it was launched on 1st December and I started on 30th September. So I was first. Their claim is the same: they use a lot of open source components in their solutions, and those have to be secure, because if a simple building block is compromised, things go badly. Anyone with an open source project can submit it for fuzzing, provide the fuzzers, and then it runs. It is fuzzed on Google machines, reports are sent to the developers, there are 90 days to fix the bug, a bot verifies the fix, and it actually works. From what I know from

statistics, they have 2000 virtual machines and have managed to find more than 2500 bugs. So it's not bad. And that is as of August, so from December to August, about 9 months. 2000 bugs. Of course, not all of them are memory corruption bugs; a lot of them are assertion failures or things like that. Still, if someone has an open source project, or wants to file a feature request with a project they like but do not develop, this is a very good idea. The guys from Google even write fuzzers themselves, so that is not a problem. If only this platform got more publicity, because so far few people know about it. Did anyone know about it before? Ok, one person, so bad statistics, still

1:1:1. Ok, now the soft part. So far it was boring; it will get even more boring. How to work with developers? It works like any contact with people. Approach the relationship without expectations, on either side. If we think, "OK, I'll report a buffer overflow to you and give you 24 hours to fix it, and I'll report it to you on Sunday morning," that is just weak. Not everyone considers security fixes a priority. Awareness is growing, which is nice. However, bugs I reported, a use-after-free that was exploitable and a buffer overflow, sat for about 180 days in a public Bugzilla. Everyone could go in and download the test case; there

was also an ASan report, so there was no problem, because you knew what had been overwritten, what the processor registers held and what the memory looked like, and you could easily write an exploit and attack some things remotely. Not everyone understands severity and weight in the same way. Jarek, who gave a presentation earlier, told me this was worth expanding, because it is a very important point. Here we have two cases. WD_MY_PASSWORD is a weak Pwnie candidate in my opinion, but systemd got the Pwnie for the worst vendor response; I will talk about it in a moment. Jarek's statistic is about what a bug means to a regular user of software. He told

me that 100% of non-technical users are simply annoyed when software crashes, and so are 90% of technical users. So maybe 10% will look at the code and maybe report the segfault or whatever happened there, or maybe not. Okay, now the summary of the Pwnie, because it is very good. I summarized in two sentences what happened and what the Pwnie was awarded for. So read it and shout when you are done. I recommend the stream to the people in the back.

Ok, we more or less know, right? You can't see it well here, but a certain systemd developer argued on GitHub that there is no need to assign CVEs, that a CVE is some kind of currency in the security world, and that we just get excited that more CVEs means a bigger deal and insist there must be a CVE for every bug. Some of the answers are even funny. I have feedback from various open source projects. For example, the tcpdump developers assign a CVE to every bug fix; the CVEs are communicated privately by e-mail, and with a new release they send a summary listing which CVEs were yours, they thank you, they add you to the contributors, that kind of thing. But

here the argument was about the very basics: whether CVEs should exist at all. The background was that if you put a zero in the user name, you got local root right away. Later, you could use a crafted DNS request to crash systemd. It did restart automatically, but it did not keep its state, so you could send DNS requests at systemd in a loop and it would crash all the time. So this is how you earn the award. I do not recommend using Bugzilla. Next part. Not every bug will get fixed; you have to accept that. Sometimes the justification is that the behavior is described somewhere in the documentation on page N, and we are basically getting into

what has been established in the documentation. It is obviously bad behavior, but if it is in the documentation, the argument goes, no one would use it without reading the documentation. Then the unrealistic test case. According to developers, an unrealistic test case is sometimes not a bug at all, just invalid, because no one will do that. Because no one will write such a YARA rule, for example. With YARA things are actually fine, but let's say nobody would ever want to write something like that in a rule. Staying with YARA, there was a use-after-free: a comparison of two MD5 values of the scanned binary triggered a use-after-free. An interesting thing. However, this is changing all the time, and for the better. Programmers understand that it

is a win-win situation: some researcher has found bugs and they fix them. It is not a matter of "you are an idiot because you can't write safe C, and I will hit you in the face". No, no, no. Really, most developers are decent people, the exchange is civil, there is no name-calling, that kind of thing. So it's nice, it's really nice that this is changing and going in the right direction. It is very, very constructive. It's nice to report bugs to such people. How to do it effectively? First of all, the version: writing "git clone from yesterday" is a bit weak if we cloned from the repo. The compiler and environment, because

I have a very interesting bug that only triggered if the project was compiled with Clang, not with GCC. So, you know: compilation flags, ASan and MSan reports, the offending file, because sometimes it gets forgotten. It happens to me too that I send a bug report without the file. The command that reproduces the bug, because not everyone has a plan. Contact details, and, you know, tips and advice, depending on how involved we are in the project. Now the meat, because I'm getting a bit bored and some of you are already asleep, so let's start. I like radare2, and I respect the developers because they do a great job trying to compete with IDA, but I saw that there are two talks about it here, or even three. I will show you radare2

from the other side. This is the main developer of radare2, who released a new version two days ago. Unfortunately for him, he tweeted about it. The bugs shown here will be fixed in version 2.0.1; the fixes are already in git. Unfortunately, I had already broken release 2.0, and it is already old, even though it came out two or three days ago. Something like that. So whenever I see a radare2 release, I take it into the workshop. What is the problem with radare2? First of all, they don't use stack canaries and they use unsafe C functions. This is the 90s all over again. With routers and with radare2 it is the same story. You get what every security researcher likes: 0x41414141 in the register, in the instruction pointer,

so elegantly. But this is the 90s. This bug should not happen at all. It was probably found at the end of 2016, and it should not happen at all in a large project with many parsers; here, for example, we have a virtual machine compiled by Android. We don't do such things. I wonder why this protection is off even though the rest are on. It is very strange to me, but maybe I don't know something. It doesn't matter. The second problem with radare2 is that it has borrowed code sitting in its repo. The bugs in that code were fixed upstream long ago, over 4.5 years ago. Unfortunately,

it meant there were 5 bugs in the GRUB code, which dates from 2013. It stayed that way until June 2017. Then it was fixed, but not by pulling in the fixed GRUB; it was a fix by the radare2 developers. And it sits in some rarely used part of radare2, so it is even stranger that this code is still there. It is the recurring problem of bundled external code and the security of those individual components. It keeps coming back. Among other things, it came back in the Equifax case, that leak, where an open source component in the infrastructure deserialized XML. The problem keeps coming back, and as long as external libraries are used, it will

be so. Unfortunately. And it is not only GRUB. We also have Capstone, we also have squashfs-tools: a lot of different, strange code, all in old versions. More than 9.5% of the files were last modified in 2015, so more than 2.5 years ago; let's say 2 years. Files from 2014 make up 5.6% of all files in the project. Some are older still, and many date from 2011. It is worrying in a large project like radare2, and I suspect that other projects are not always better. This code just lies there, and unfortunately code is not like wine: it does not get better with age, quite the opposite. Especially since, for code from, let's say, 2011,

I think that certain techniques of safe programming in C or C++ were already known by then, and unfortunately so were the exploitation techniques. Aleph One described buffer overflows in 1996, so some time has passed. The next one is a very interesting bug, because it earned a very high CVSS base score, 9.8 out of 10, so it's a very cool thing. JS engines are a very complicated piece of code, because part of the code base is written in assembly underneath so that it is fast, and the layer on top is in C or C++, depending on the engine. Well, a developer forgot to adjust the stack pointer in WebKit's ASM component. And simply, while collecting arguments, the function was able to... So

you could go outside the allocated area and write there remotely, because of course this is a remote attack using JS loaded into memory: 3 kilobytes onto the victim's stack. 3 kilobytes is a lot; you can write plenty of things in there. However, it only happened when WebKit was compiled with Clang and only on Linux. Unfortunately, it could not be exploited remotely on iOS or macOS. So it is a nice bug, a nice remote one, but we were limited by those two conditions. The exploit itself is very simple: 80,000 arguments. This is simplified; it really looks a bit different, because you can write anything you want here. But it really impressed

us. Here we can see what the red zone looks like. It is a simple thing: compilers reserve additional space so that they do not have to adjust the stack pointer needlessly. We have 128 bytes here, and we went beyond it with our write. The next one is a very nice bug, because I caused a fight between the developers of two projects. ImageMagick you may know from the famous ImageTragick, which even got its own logo and its own site. That was remote code execution using a picture. It is a funny project, because it is very complex and used in many services; Dropbox, for example, uses it, for trivial things like creating thumbnails from images. You could also leak

the memory of the server, using what Chris Evans did at Project Zero. What was the point here? The bug was fixed on the ImageMagick side, because they did it on their side. The problem was that TIFF has something like profiles, and this function always returns success no matter what it gets. It is similar to the Caesar-cipher-style encryption that Jarek found. We return success, something gets encrypted, we spit something out. The one basic assumption is that the buffer size is always passed to the function correctly. Unfortunately, that is a very dangerous assumption; you need a lot of nerve to make it. In my opinion, we can never be sure that the developer uses our

API and passes the buffer size correctly. ImageMagick assumed that this validation happens on the libtiff side, and that a profile we request will not be converted if it simply does not exist. Unfortunately, that was not the case. The developers argued; we argued on Bugzilla, on the libtiff Bugzilla about why the fix should be in libtiff, and on the ImageMagick forum about why it should be in ImageMagick, and after 12 days we concluded that the game was over and it would be fixed in ImageMagick. This is what the ASan report looked like; you can clearly see that the problem is evidently in libtiff. In my opinion, it should have been fixed in libtiff, because it is not acceptable to me that whatever we put in, the result is success. No,

it doesn't work like that. This is what the stack trace looks like for what happened in the ImageMagick case. The next one is an interesting bug. It's in the category I mentioned, where I said that some use-after-free bugs are not interesting, because they are errors related to freeing memory: we free it one time too many. I heard somewhere, and I don't know whether Linus Torvalds said it or someone else, that a programmer is not a person who can write code, but a person who can manage these abstract objects in memory. I don't know if it's from the new Star Wars, I don't know the source, it's probably Abraham Lincoln from the internet. As they say, you never know what is

written on the internet, Abraham Lincoln. The problem is that there is a function with 750 lines of code, so it's a real monster. In general, it could exit in 9 different ways, but only 4 of those paths ended with the memory being freed. Something was wrong, because the memory was left floating around somewhere. The developers did not follow one simple rule: if you did not allocate the memory, you do not free it. Unfortunately, that was not the case here. We have something like this: in the gx_begin_image functions, numbered 1 to 4, the object was allocated and then initialized. If the initialization failed, the object was also released through the function

gx_begin_image, so the object was released and we have freed memory. Unfortunately, if ... or work continued further, if everything actually went well there. The use-after-free, however, was not simply that the object was released twice, here and here. The whole core of this use-after-free was that the function releasing the object was called unnecessarily, so we skipped the legitimate steps that normally release the object and clean up the memory. We simply forced the release of this object. This is quite accurate. There was nothing interesting in the ASan report itself. However, it shows that you

should not be too eager to call functions that free memory. It is also worth splitting up functions, because a function with 750 lines of code is already quite large. Personally, it exceeds my perception, and I don't think I would find my way around such a function, but maybe some hotshot developer will actually be able to handle it. I have 10 minutes left, so now something off-topic. We're moving completely away from fuzzing and that kind of stuff, but it's also a nice bug. Adam is an expert on this actor. There is an actor named Thomas, and throughout this project Thomas has been producing various campaigns: Play, Netia, PKO Leasing, Multimedia, some police, traffic police, a GIODO inspection. Yesterday there was a lawyer

who wanted to take our property with a deposit. Or a confirmation of a leak at mBank. Generally, the guy is very, very good at scenarios. He has his own ransomware, which he sends in documents; it's called Polish ransomware, so we also have our own ransomware, which is nice. This person most often sends Office documents with macros. It's a simple method: everyone has Office, so someone will open it and end up with an encrypted hard disk. This is what the document looked like: something went wrong, enable macros. Simple social engineering. Generally, you can even

see that something is off with this message from Word: it's from an older version of Microsoft Word, and not the Polish one; the newer version looks somewhat better. The problem was that when macros were enabled, Word crashed. The macro was obfuscated, and the library that parses Visual Basic has a null pointer dereference, so all versions, 2010, 2013, 2016, the ones people actually use, crash when they run the macro in this document. Unfortunately, we don't get encrypted, the computer doesn't get encrypted, because Word simply crashes and there is nothing left to download and run the rest. And here you can see this fragment of the code: exactly this RDI register

is null. It is not validated before this mov instruction. That one didn't work out for him, but he is still producing these campaigns, and I think he will keep producing them unless law enforcement manages to catch him. That's all from me. If you have any questions, I will gladly answer. No, I'm not answering you. Kamil, this question must be asked: if you find bugs and it's so cool, when will you start exploiting them? I didn't want to show that here because, first of all, demos don't work during presentations, that's one thing. And the second thing is that I do write exploits, but generally for the drawer. I said it a year ago and I will say it now: I'm trying

to develop... Sorry, what? I can't hear. No. No, neither Zerodium, VUPEN, the NSA, Mossad, SKW, ABW nor AGD has written to me about it. But maybe they will still get in touch. I just think developers haven't matured enough for some things yet. Kamil, have you matured enough to write exploits? I'm wondering that myself. I think my colleagues at work will help me. Probably, if I asked my colleagues at work, they would help me. So, OK, some people share exploits, and that's fine, but I think that... Well, no, you saw it yourself: so many bugs found with such small resources. We managed to find 323 bugs that have been fixed, and 361 in total. So generally everything is full of holes, quite badly, and it seems to me that

it wouldn't be a good idea. I still have enough of a self-preservation instinct not to publish them. But I'm writing things for the drawer and slowly learning. Any more questions?
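
As an aside on the libtiff and ImageMagick bug from the talk: the speaker's complaint was that a library function should not return success no matter what it is given, and should not trust that the caller passed a correct buffer size. A minimal C sketch of that idea is below. The names `copy_profile`, `struct profile` and the status codes are hypothetical illustrations and are not taken from libtiff or ImageMagick.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical status codes; not taken from libtiff or ImageMagick. */
enum profile_status {
    PROFILE_OK = 0,
    PROFILE_MISSING = -1,
    PROFILE_TOO_SMALL = -2
};

/* Hypothetical in-memory profile record. */
struct profile {
    const unsigned char *data; /* NULL when the profile does not exist */
    size_t len;                /* number of valid bytes in data */
};

/*
 * Copy a profile into a caller-supplied buffer.
 * The callee itself checks that the profile exists and that the
 * destination is large enough, and reports each failure explicitly
 * instead of always returning success.
 */
enum profile_status copy_profile(const struct profile *p,
                                 unsigned char *dst, size_t dst_size,
                                 size_t *written)
{
    assert(written != NULL);
    *written = 0;

    if (p == NULL || p->data == NULL)
        return PROFILE_MISSING;
    if (dst == NULL || dst_size < p->len)
        return PROFILE_TOO_SMALL;

    memcpy(dst, p->data, p->len);
    *written = p->len;
    return PROFILE_OK;
}
```

With this shape, a caller that asks for a profile that does not exist, or passes a buffer that is too small, gets an error code it can act on, and nothing is written past the end of the buffer.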

Did you think about joining the Fuzzing Project before starting your own project? Generally, I was inspired by it, but I see that the Fuzzing Project is very irregular. There are stretches when nothing happens for half a year. Then suddenly there is a blog post with, let's say, 10 different CVEs in some project, and then silence again. I work systematically, at my own pace. I also value independence, so tying myself to some additional external project, and maybe not being able to do things my own way, would be unacceptable for me. I prefer to do things my way and tinker when no one is standing over me, and that works quite well. Okay, second question. Do you do this project as part of your tasks at CERT? Yes, I do.

It is even my goal for this half-year: a framework for finding bugs. It's best to do it at a large scale. Next question. Do you have any external funding for this? How do you define that? No, we don't. For example, the Fuzzing Project received funding from the Linux Foundation. No, we don't have any. I mean, the lack of funding comes from my aversion to bureaucracy; I would have to fill out some paperwork. Really, these VPSes cost 4 PLN net each, so with 9 VPSes that's 36 PLN net per month. Come on, that's not even worth the walk to the printer. And the last question: do you share the toolset you use for triage somewhere, on your own GitHub? Triage

mainly depends on whether this project takes shape in some way. So far I have been developing it on my own, both at work and outside of work. It will probably be opened up and will definitely be made available. However, first of all, it is in the process of registration. It's a bot-based infrastructure, like in a botnet, where there is a C&C that collects and there are bots, that is, fuzzers. At the moment I have managed to write tests for the component that sits on the fuzzer. I still have to write the C&C. Above all, I have to integrate it with external cloud providers, i.e. Aruba, DigitalOcean, Amazon, Hetzner, Kimsufi. It only makes sense if it is done automatically, because it makes no sense

to install it by hand again each time. There is a lot of junk on these servers, you have to set everything up again, so it all has to be automatic. At the moment it is semi-automated. The project is not yet mature enough to show to the public, but I'm still working on it, so someday it will definitely be released somewhere. I don't see any sense in it lying in a drawer when someone could help. Okay, okay. Of course, this isn't about exploits, right? That's obvious. I have an idea for a business plan. Cool, come back later. No, the point is that you could use this: write some kind of exploit after finding a bug and simply find a company that uses

such a library and report it to them: I found this bug in your product, because you don't have a patched library. And you just collect rewards for finding it. Sometimes it's a thousand dollars, sometimes ten thousand, it depends. It would be cool to get... but I think that companies... companies have different approaches. I think maturity is still so low, like with these exploits, that a company may come and accuse us of extortion with these exploits, like ransomware. So it's a risky business, and I think it's better to do it in a model like... I have mixed feelings about cooperating with various companies, especially in the context of SOHO and IoT. I don't know how hungry I would have to be to decide on

such a business model. I would rather see the future in integrating it with a testing and continuous integration platform. That is more ethical, and it doesn't stink. A question for the organizers: have you considered providing a small sacrificial stone, so that blood offerings could be made to the demo gods and speakers could no longer use the excuse that demos don't work? Maybe at the next edition, not yet. Any other questions?

I would also like to remind you that if you are willing to do some weird stuff like Jarek or Mac, we would gladly welcome you. We would gladly welcome people who can think in non-standard ways and do weird stuff, most often break things, because that's what our work is all about, but there is a lot of fun in it and, I think, some benefit too. Do you fix things after you break them? It depends. It depends. We clean up after ourselves. I have one colleague who doesn't clean up after himself, but what can you do. His desk looks like the Augean stables. Greetings, Bartek! If there are no more questions, thank you very much. You can talk to me, have a beer

and discuss business ideas. Thanks!
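
For the 750-line function discussed in the talk, the rule the speaker states is that code which did not allocate an object should not free it. Below is a minimal C sketch of that ownership rule. All names here (`struct image_enum`, `image_enum_init`, `image_enum_create`, `image_enum_destroy`) are hypothetical and are not the real types or functions of the project from the talk.

```c
#include <assert.h>
#include <stdlib.h>

/* Hypothetical object; not the real type from the project in the talk. */
struct image_enum {
    int width;
    int height;
};

/*
 * Initialise an object that the caller allocated.
 * On failure it only reports the error. It does not free `e`,
 * because it did not allocate it.
 */
int image_enum_init(struct image_enum *e, int width, int height)
{
    assert(e != NULL);
    if (width <= 0 || height <= 0)
        return -1;
    e->width = width;
    e->height = height;
    return 0;
}

/*
 * Allocate and initialise. If initialisation fails, the memory is
 * released here, in the same function that allocated it.
 * Returns NULL on any error.
 */
struct image_enum *image_enum_create(int width, int height)
{
    struct image_enum *e = malloc(sizeof *e);
    if (e == NULL)
        return NULL;
    if (image_enum_init(e, width, height) != 0) {
        free(e);
        return NULL;
    }
    return e;
}

/*
 * Release the object and clear the caller's pointer, so that an
 * accidental second call is a no-op instead of a double free.
 */
void image_enum_destroy(struct image_enum **ep)
{
    if (ep == NULL || *ep == NULL)
        return;
    free(*ep);
    *ep = NULL;
}
```

Keeping allocation and release in one place means there is a single exit path to audit, which is much easier than tracking several different exits from one very long function.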