JARVIS for Code? Meaningful AI Research for Software Reverse Engineering


BSides DC would like to thank all of our sponsors, and a special thank you to all of our speakers, volunteers, and organizers. Good morning, everybody. I'm going to be talking about some ideas for new challenge problems and new directions for meaningful AI research in software reverse engineering. I'm guessing that if you're here instead of at one of the other talks, you're familiar with software reverse engineering, at least on a surface level. So take a moment to imagine if software RE could be faster, more effective, and accessible to a broader scope of people with different experiences and different training

levels. First, how many software reverse engineers are in the audience, people who do some software reverse engineering in your job? Show of hands. Okay, a smattering. How about folks who work with software reverse engineers, or have software reverse engineers as part of your operation? Okay, some more. So there's some familiarity there, that's good. For the software reverse engineers: imagine you have a tool where, when you load up a new binary in your disassembler or other analysis tool, you automatically get meaningful, descriptive labels of the code that you're looking

at, in easy-to-read text. Imagine that when you load up a new binary that you're going to analyze and reverse, you get a well-labeled software architecture diagram: not just descriptions of each module, but also well-labeled flows and connections between the modules. It sounds kind of far-fetched, sounds kind of crazy, but that's what I'm going to talk about today. For the rest of us, imagine that you have a tool that is able to meaningfully describe to you, in natural language, what a piece of software is doing and how it works, and it actually

works and it's reliable. Imagine how that could change infosec. So that's what I'm going to be talking about today. I'm going to talk about which problems in software reverse engineering I think we should consider solved problems. From a research perspective, a solved problem is one that has been reduced to practice for a given scope. I'm going to go quickly through the gaps between the solved problems in reverse engineering and the existing software reverse engineering process, and hopefully that motivates what problems to work on next. Then I'll talk a

little bit about how to get started on those things. Just a quick bit of background: at APL, the reverse engineering work that I'm involved in is vulnerability analysis for embedded systems. We have a trusted agent or evaluator role for the government. The government will bring us a military system designed by a contractor or a group of contractors and say, "Evaluate this. Does it have any vulnerabilities in it?" Usually it's a mix of contractor-designed and commercial parts. But the best

one is when they say, well, sometimes it's "we're about to put this out there," but the best one is "hey, this is already out there on the ship, and does it have any vulnerabilities in it?" So that's the world that we play in. It's a little bit different from, say, day-to-day network defense or malware RE, and it's also a little bit different from reverse engineering and vulnerability analysis on PCs or phones. We're often in the bare-metal or RTOS world. And because of working with complex systems

and these embedded systems, where we don't get a lot of debugger access and it's sometimes hard to hook up and get traces or things like that, we end up having to do a lot of our analysis as static analysis, where we're just staring at code. We're the folks that stare at code; that's what we do. I think that gives us a bit of a unique perspective into some of the hardest challenges in software reverse engineering, especially static analysis. Okay, so quickly: what problems in

reverse engineering should we consider solved? There's been a lot of research out there, a lot of university research. Part of why I'm giving this talk is that I'm hoping that if there are students in the audience, or folks involved in research at your company, or maybe you're thinking about adding on a degree, you'll think about this kind of research, and this might be something that you choose to pick up in those pursuits. So anyway, what should we consider solved? I will argue there's

been a lot of work. If you look through the research coming out of different universities, there are a lot of things that have been done in static analysis, but over the past decade or so the most useful things to come out of research are decompilation and function matching, so I'm going to talk about those quickly. I mention here that there's also a lot of work in combined static and dynamic analysis approaches. That's where you have your static disassembly of the code and you also have the ability to hook

up a debugger and capture trace information, and then you mesh that data together to make sense of what's happening. Since we don't do that a lot, I'm not in a good position to evaluate the state of those tools, so I'm just going to punt on that and focus on the other two. If you're not familiar, decompilation is the idea of taking binary code (in the embedded world, most of the time we're working with what started out as C and C++ source code) and translating it back to C code or a C-like form.

As of a couple of years ago, there wasn't much out there that was free. Now, as you can see across the top, IDA Pro, Ghidra, RetDec, Relic, angr management, and JEB are all out there. JEB and IDA Pro are the ones that cost money, but with Ghidra being released this past year, Ghidra is now basically a fully featured decompiler that works for every architecture that Ghidra supports. That's pretty awesome, and it's open source, available to anybody for research, which is kind of a big deal. Decompilers all sort of work the same way. All of it traces back to

a researcher named Cristina Cifuentes, who in 1994 wrote a PhD dissertation on the decompilation process, which you can see up here. Basically all of the tools follow her process. There have been improvements in different stages of it, but in all of the decompiler research, everybody says, "We owe everything to Cristina's original doctoral dissertation." Just quickly, the way these things work is: they take the binary code and lift it to an intermediate representation (they each have their own intermediate representation), then they reconstruct the control flow graph and the data flow graph of the function, and that gives them a high-level abstract

syntax representation of the function. They all work at a function or subroutine level, and then they use that abstract representation to output the final code. The thing is, from a practical perspective, decompilers always output blank code. We reverse engineers used to spend a lot of time staring at assembly code; now we can spend a lot of time staring at blank decompiled code, which is a little bit better, but it's not fundamentally changing the speed at which we reverse engineer things. We still have to stare at it a lot. It takes a long time to

understand what's going on, and it still takes a lot of training to really get what's going on inside a binary. The other solved problem is function matching. That's where you have a function that you've either analyzed before, or it's from a library. Sometimes people take open source libraries, say OpenSSL, and integrate them into their code. So these are tools that help when you know the libraries, or you have code that you've already reverse engineered, and you can match it up to the new code that you're

looking at. They work fairly well in most use cases. There are improvements that could be made to them, but they work pretty well for day-to-day reverse engineering. I forgot to mention, too: I'll put out my slides after this, and you can see that I've got references all the way through, so you can check all of this out later. These tools all work in sort of the same way. They take the basic blocks of the function and compute some signature of each, which is like lossy compression: converting the bits and

bytes within a basic block into a hash or some compressed representation, and then basically doing a graph match, taking the control flow graphs of the two functions and matching them that way. Then they'll also go one level up, to the function call graph, and compare the two that way. The point is that they work pretty well. We should keep working on decompiler research and on function matching research, but incremental improvements there aren't going to make a fundamental breakthrough

in the speed of reverse engineering. That's sort of my argument. Okay, so what are the gaps between our solved problems and our existing process? How do reverse engineers operate, and what are the gaps between the tooling and that process? When you ask people, "What is software reverse engineering?", most software reverse engineers get this brilliantly artful PowerPoint diagram in their head, where it's like: okay, we stare at this assembly, and then, I don't know, we kind of understand it. We stare at it for a while, and then eventually, lightbulb, and we kind of figure it out

and we know. So I tried to think about our process, as somebody at APL who's constantly consulted by people asking, "How do we make this process faster?" What is the process, anyway? I've thought about this a lot. I would argue that when reverse engineers are analyzing code, they work on at least five levels, and that's represented in the diagram here. We start out looking at assembly code, and then we work our way up through levels of abstraction until we understand

the higher-level functionality of a piece of code. At the lowest level, we're looking at disassembly, opcodes. Then we start to look at high-level constructs like if-then statements and for loops, that kind of stuff. Then we go up to the function level; we're familiar with looking at subroutines and functions. Functions are then grouped into libraries, so we have: okay, this set of functions is related, and that set of functions does something different, all within this binary. And finally, once we get all that figured out, at the top level we can then say, okay,

what are the main pieces of code? In our RTOS environment, that's usually the user-defined threads that are doing the main functionality. If it's malware, you might have your main threads of execution that are calling into all those libraries, all those areas of code underneath. So those are the levels that we operate in. We're constantly bouncing back and forth; it's not a linear process. We're digging into one function, looking at some opcodes,

saying, okay, this is adding something; I see, this is adding up all the numbers in this buffer. Then we're saying, okay, that means this function is a checksum function. And if I bounce up even higher, I can see at the top level they're pulling out the payload and checking the checksum, or whatever. So we're constantly bouncing back and forth, but what we're doing at each level is translating from code into natural language. We're looking at opcodes and making comments about

what the opcodes are doing. At the function level, we're saying, okay, this is what this function does, this is what this subroutine does. Eventually you get up to the top. If you're a malware reverser, when you get to the top you can say, "Oh, okay, I finally understand how this thing works. I understand the components and the general flow. I can write my report." For us it may be the same thing: I've decomposed it, I can write my report. Or for us it might be: I understand the general flow, and now I

can target one particular area for vulnerability analysis. That's really what we're doing in software reverse engineering: we're bouncing back and forth between these layers of abstraction, working our way up, and translating into natural language at each stage. When I think about this: reverse engineering is so hard. It's a difficult process that requires a lot of training and a lot of layered skills, skills you pick up doing one thing and then add on to. Because of that, there are folks out there

like, "Maybe we can just get rid of software reverse engineers altogether. Maybe we can just take them out of the loop." I don't think that's a very realistic goal. I'd rather think of it as: what could we do to make reverse engineering accessible to more people? What can we do so that it's not a thing where I have to have multiple levels of training to even get started? That's not to say that we won't have highly skilled people doing it, but how can I just make

there be tools so that people with less training can do it, and people with more training can do their job faster? That's how I think about our current situation. So what kind of problems should we work on next? My argument is basically that to make software reverse engineering faster, we need tools that provide information to an analyst in natural language. We can speed up the process that we have, which is essentially a code-to-natural-language translation problem, by developing tools that do pieces of that problem in an automated way. So this is a

set of challenge problems that I would like to see research done on. I'm interested in doing research on them myself, and I've already put a set of proposals together. These are just ideas for problems I'd like to see worked on next. The first one is variable name prediction. Remember what I said: when we decompile a function, we get a blank C function out. The idea is, given that blank decompiled function, predict the variable names within that function based on code that you've seen before. This is

actually one of the areas that's being actively worked on, and it's really cool. I had to update these slides this week because of this reference right here, number 19: CMU just came out with a new paper where they claimed, I want to say, that 74 percent of the variable names, within their data set and the experiment they did, they were able to recover and predict. That's pretty cool. I think you're going to start to see tools that do that. They're working on an IDA Pro plugin, and I think they're planning to work on one for Ghidra, so I think you're going

to start to see that out there soon. Another one is statement commenting. The idea is that if you have decompiled code, you put in auto-generated comments for it. Look at what the basic blocks are doing and give me a meaningful comment. If I've got an if-then statement or a for loop, give me a meaningful comment that says "this is checking the state of the serial port" or "this for loop is iterating over all of the bytes in a buffer," or something like that. Give me some meaningful natural

language text for each statement in a function. You'll see these challenge problems are moving up those layers of abstraction that I talked about. So, function summarization: at the function level, given a decompiled function, output a summary of that function and what it's doing. If you talk to natural language processing people, summarization is kind of a difficult problem anyway. If you're familiar with Google Translate, the way they trained it is that they have things like BBC articles, where somebody wrote an article in English and then hand translated it to another language

so you've got a very high-fidelity translation, and you can train on those things. Summarization is difficult to train on because there aren't big data sets of things where somebody has hand summarized an article or something like that. I don't know if they could train on CliffsNotes or something. So that's a difficult problem anyway. But imagine you can get some natural language output about what the pieces of this function are doing, and then you can summarize that and output a coherent statement about what that function is doing. There is

existing research in source code summarization, which mainly exists for auto-commenting. There are people who do research on taking source code that somebody writes and having it auto-commented, because my developers are lazy and don't want to write comments, or something. But that's out there, so maybe we can build on it. If we get enough natural language information about the function from the other pieces, maybe we can come up with a coherent summarization. The next one is something I call the CodeCut problem, and this is

the one that I've worked on myself. You can check out my GitHub repo, which is reference 27 there; we've come up with a preliminary solution to the problem. The way large binaries generally work is that you've got different source files that get compiled into object files, then they all get linked together, and then all the information about where the object file boundaries were gets removed. So the idea is: given a binary, locate the original object file boundaries within that binary, to give an idea that this is a cluster of related

functions and this is another cluster of related functions. We've got a solution that does that, and it kind of works. That's definitely something I'd love for more people to check out. If you think about that together with the summarization idea: if you're able to take a large binary and find those clusters of functionality, and you're able to output text for each of the functions in that object, that cluster of related functions, then ideally you can summarize it and

then say, okay, this set of related functions is crypto related, or this is a particular SCADA protocol, or what have you, or these are the standard library functions. We kind of do that in CodeCut. CodeCut currently does it in a simple, naive way: it looks for any string references within that object and looks for common words. If it finds common words or common sets of words, it will try to name your

library based on that. The cool part with CodeCut is that if you get a high-fidelity solution there, that's where your software architecture comes in. If you can identify those functional boundaries within your code, you can then map every time this module of code interacts with that module of code, and if you do that throughout your binary, you pull out an architecture diagram. CodeCut actually does that right now. So imagine you can get to a point where you get a software architecture diagram and

it's well labeled in natural language. That'd be really cool. Just quickly, some foundational work. In natural language processing they call it word embeddings, which is basically the process of taking a corpus of words and mapping it into a vector space so that you can do reasoning on it. There have been a couple of projects on mapping assembly code and high-level code into that kind of embedding so that you can do reasoning on it. code2vec just came out; I think they did the research last year. So that's kind of

foundational work that people should be able to build on, which is pretty cool. Okay, so those are the problems. How do we get started? The way that I decided to get started on the problem was collecting data. I started to talk to people at work, machine learning folks, and they said, well, if you want to do this machine learning stuff, you've got labeled data, right? That's what we do: we train on well-labeled data and we make algorithms that

work with that. And I thought about it: actually, no. There's a ton of open source code out there, and there's a ton of binary firmware out there, but almost none of it has debugging symbols. Most of the time, when you build production code, people don't publish the symbols, and they don't publish artifacts from the compile process. So in order to do meaningful research here, we need code compiled with symbols, and we need to save the compile artifacts while we're compiling the code

and save the binaries, save the symbols, and publish that all as a data set. For my research, ideally it would be cross-architecture. We've got so many things out there that work on Intel code, and maybe they work for ARM because of phones, but we can't just port them to the next architecture, because they're purpose-written for those architectures. So ideally I would like to have a data set that has many architectures in it, so that tools don't just get overtrained on Intel code. Okay.
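The naive naming step described earlier for CodeCut (collect the string references in a cluster of functions, look for common words, and name the module after them) can be illustrated with a short sketch. This is only an illustration of the idea as described in the talk, not CodeCut's actual code: the function names, the stop-word list, and the sample strings are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical stop list: words too generic to be useful in a module name.
STOP_WORDS = {"the", "and", "for", "not", "too", "error", "failed", "invalid"}


def tokenize(text):
    """Split a string reference into lowercase words of three or more letters."""
    return [w.lower() for w in re.findall(r"[A-Za-z]{3,}", text)]


def name_module(string_refs, top_n=2):
    """Guess a label for a module from the most common words in its strings."""
    counts = Counter()
    for s in string_refs:
        # Count each word once per string so one long message cannot dominate.
        counts.update(set(tokenize(s)) - STOP_WORDS)
    if not counts:
        return "unknown"
    # Highest count first; ties broken alphabetically so output is deterministic.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return "_".join(word for word, _ in ranked[:top_n])


if __name__ == "__main__":
    # Made-up strings standing in for the string references of one module.
    refs = ["AES key schedule failed", "invalid AES block size", "cipher key too short"]
    print(name_module(refs))
```

A word-frequency heuristic like this only works when a module actually references descriptive strings, which is part of why the talk argues for learned, natural-language labeling instead.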

So I created this thing called ALLSTAR. ALLSTAR is a data set that we're planning to publish. It's currently building, and we're about two-thirds of the way through building it. We're taking the Debian Jessie distribution, which is slightly older, but there's a reason why we're doing that, and we're basically taking the 30,000 Debian packages. Debian theoretically builds for six architectures: x86 32-bit and 64-bit, ARM, PowerPC, MIPS, and IBM S/390. We're using the Dockcross project to build that. Basically, all we're doing is iterating through all the packages and building them for all the architectures that Debian supports. With Debian we're

able to override the compiler flags so we can make it save that extra information that we want. It takes five to six weeks to build. Or rather, hopefully subsequent builds will take five to six weeks; I hit hang-ups every once in a while. It's pretty much packages that aren't well behaved, that take manual configuration or something like that, so I remove them and restart that build thread. Theoretically, on the setup we have, we can build about thirty-five

to fifty-five packages an hour. This is what it's going to look like. Like I said, the build is currently in process, but we're planning to have it released by the end of the year. It'll end up being about a terabyte of storage, with about 160,000 64-bit binaries and about twenty thousand binaries that build across all six architectures. That's pretty decent. Some of the other research that's out there, like the CMU research, is working off of about twenty thousand GitHub binaries, so this is actually pretty decent. And if you want to just do 64-bit,

so 64-bit x86, it's really good. So, why we did it this way: some of the other papers that are out there use a GitHub approach, which is basically to suck down as much as you can off of GitHub and then build it all. So this is my argument for why I use Debian versus GitHub. GitHub theoretically has more packages, something like four hundred thousand packages that are in C. The problem is that a couple of these projects have actually found that there's up to 70% duplication in GitHub. So you think, like,

there are repos that are just cloned, but there's also just copied-and-pasted code, where somebody copies and pastes some open source code into their repo. So, I don't know, maybe there's more original code out there on GitHub, but it's probably not a lot more than what's in Debian. The GitHub approach is that you basically just try to run configure and make; there's no standard way to build a GitHub package. And generally that just sort of works for 64-bit x86, because that's what everybody's running on their PC. Presumably you can try running it in, say, a

Windows environment, a Linux environment, maybe a macOS environment. I think they generally run in a Linux environment, but I'm not sure. So I think the GitHub stuff is a lot less likely to build across architectures. Then licensing: there's a problem with GitHub. In GitHub you can label your project with a license; you can say this is GPL code or something else, but there's no way to check it, I guess. And a lot of projects aren't labeled with a license at all, and then how you share it, just

legally, it's not obvious what is allowed. So a lot of people are sharing data sets from GitHub that are just code, or fragments of code. The cool part with Debian is that it's all GPL, so we can build it all and we can publish those binaries, and legally it's all good. I'd also like to think that on average the code in Debian is a little bit more serious or polished. I'm not going to make a stronger argument than that. On GitHub,

you've got stuff where people just write something. I shouldn't say most people, but a lot of people just have their own GitHub repo where they play around with stuff and hack on it. So I'd like to think that Debian in general has a little bit more polished code on average. You can check this slide out later if you're interested in working with the data set and what's in it. Basically, we're taking a binary, and for each source file we're outputting the abstract syntax representation from GCC and the register transfer

language, which is the final internal representation in GCC, and then the object file. If that binary has a man page, we're saving it, along with the symbols in the binary, any header files that it uses, and any system libraries. The cool part, too, is that we completely cloned the Debian Jessie repository, so even when Debian isn't using that anymore, we'll keep it around, and you'll always be able to pull those source packages. And we make it browsable: it'll be browsable by HTML and have a JSON index for code parsing. This is

just a picture of what it looks like. Thanks to my buddy Mark for making us the sweet logo and the pretty-looking HTML. As I mentioned, we're planning to get that out there at the end of the year. We've got some internal research projects that are planning on using it, and we've started to work on some different proposals with our government sponsors to do more research in this area. I'm hoping to see a lot more research in this area. Please, if you're interested in doing research with us, or interested in funding us, absolutely come talk to me.

And yeah, that's all I got.
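The ALLSTAR build process described in the talk (iterate over every package, try to build it for every architecture, set aside packages that misbehave, then publish a JSON index) could be sketched roughly as below. This is a minimal sketch under stated assumptions, not the project's actual scripts or index format: the architecture labels, the `build_one` callback, and the index layout are hypothetical, and the real build goes through Dockcross and Debian's packaging tools, which are not shown here.

```python
import json

# Architecture labels are illustrative stand-ins for the six named in the talk.
ARCHES = ["x86", "x86_64", "arm", "powerpc", "mips", "s390"]


def build_all(packages, arches, build_one):
    """Try every (package, arch) pair and record success or failure.

    build_one is a caller-supplied function (hypothetical here) that returns
    a truthy value when the build succeeds.
    """
    results = {}
    for pkg in packages:
        results[pkg] = {}
        for arch in arches:
            try:
                results[pkg][arch] = bool(build_one(pkg, arch))
            except Exception:
                # A misbehaving package is recorded as failed rather than
                # stopping the whole run.
                results[pkg][arch] = False
    return results


def cross_arch_packages(results, arches):
    """Return the packages that built successfully on every architecture."""
    return sorted(p for p, r in results.items() if all(r.get(a) for a in arches))


def make_index(results):
    """Serialize the build results as a JSON index (layout is an assumption)."""
    return json.dumps(results, indent=2, sort_keys=True)


if __name__ == "__main__":
    # Fake builder: "zlib" builds everywhere, anything else only on x86_64.
    fake = lambda pkg, arch: pkg == "zlib" or arch == "x86_64"
    res = build_all(["zlib", "foo"], ARCHES, fake)
    print(cross_arch_packages(res, ARCHES))
    print(make_index(res))
```

Recording failures per architecture instead of aborting mirrors the remove-and-restart handling mentioned in the talk, and the cross-architecture filter corresponds to the subset of binaries that build on all six architectures.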

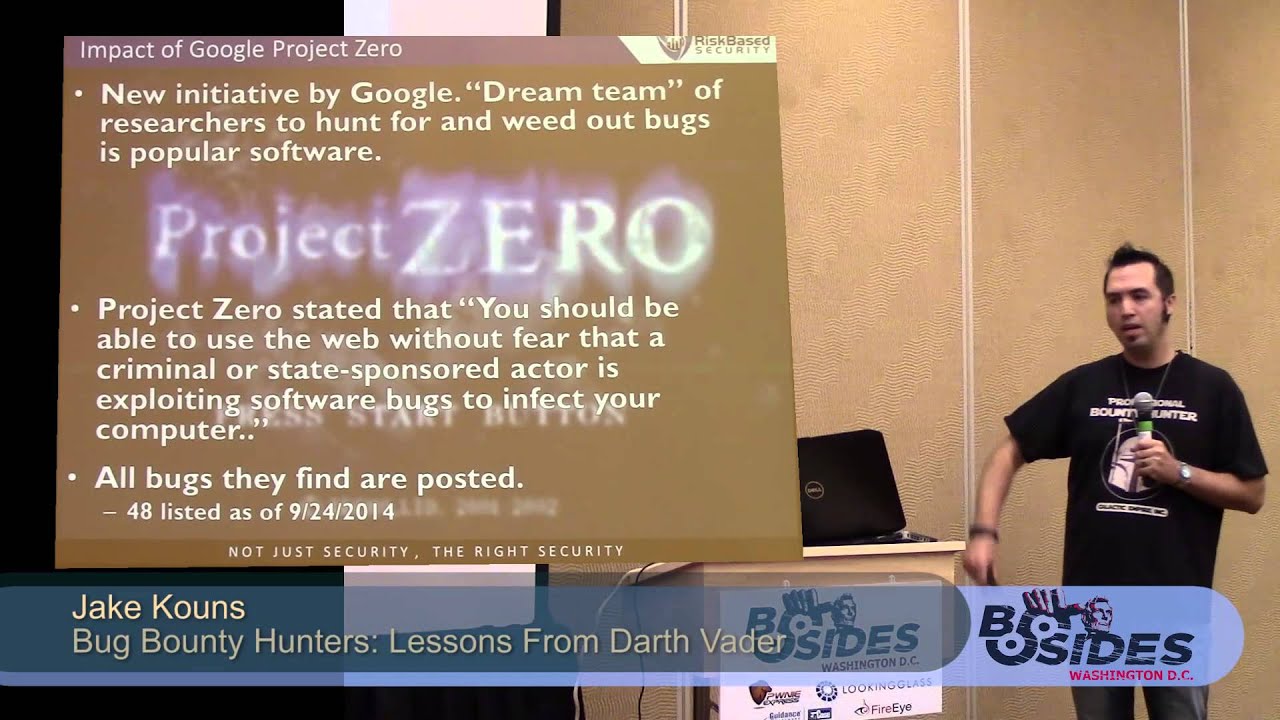

I'd like to thank everybody. First of all, I'm thankful to my folks in management at APL, my line management, for all the reviews and work on this, and to the folks in my program management that funded our research. I'm also especially thankful to Halvar Flake, who many of you know, formerly of Google Project Zero. He was really awesome about brainstorming with me on ALLSTAR and gave me a lot of encouragement to do it, so I really appreciate that. And Igor pushing and Joan helped me track down some of the resources and references that are in here, so I wanted to thank them as well. And I think,

Chris, I think I've got time for questions? Okay, I've got a question in the back. The question is, how can you hear about it when it's finished? Good question. You can follow me on Twitter. I think we decided our official hashtag is "allstar data set," so you can watch for that or follow me on Twitter. I'll basically tweet out when it's done. [Music]

[Audience question, partly inaudible] That was a really good question. The question was: given my focus on real-time operating systems, why choose Debian, which is basically a general-purpose operating system and a bunch of user-space binaries? The reason is that there isn't anything quite like that in the RTOS space, and I'd love to brainstorm on it. There are some RTOSes that are cross-architecture. One of the ones I've looked at is FreeRTOS.

The problem is that they don't necessarily have a consistent build process; FreeRTOS in particular didn't have a consistent build process across the different architectures. And then it's also a question of the volume of code that you would get. Really good question. I would love for there to be such a thing, and I could see us finding data sets like that. I think that might be a good place where, if you get an initial thing working, you would then evaluate it on a smaller RTOS-focused data set. I think that would be

nice. So yeah, that's kind of my thinking on that. Any other questions? Raise your hand or shout loudly. All right, cool. Thanks, everybody. [Applause]