How To Fit Threat Modelling Into Continuous Security

Show transcript [en]

So, a little bit who am I, why do I care about this thread modeling thing? I started as a software developer and architect many years ago in the 90s and it just so happened that a couple of companies I was working in,

the doing transition to secure development lifecycle. How we introduce security into software development.

And... I think your Google is not happy.

What is not happy? Okay. Hi. We are just chatting.

So there were two conflicting desires in company to move to agile development, do everything faster, but at the same time to introduce security. And in my role as a technical leader at the time, I had to do a lot of trying to put it together, security and agility. So it was actually quite fun and I thought, oh, I want to do more of that. So I moved into security consultancy. done a bit of pen testing but mostly I was on the defensive side doing architecture reviews for the clients, threat modeling and some code reviews and security activities in the life cycle in general. And out of all of these engagements I like threat modeling most

because other pen testers they would go to something like infrastructure pentest and they would come with the stories how everyone hated them and they came to a team whose manager three times removed books this pentest to prove they're not doing their job and how you can't get a chair in this office and basically very very unhappy kind of engagement but in thread modeling it's very collaborative you come you talk to a team of people who work together with you it's not the kind of us against them so I do like these engagements but two things I've discovered that make me pretty sad for example I would deliver training to thread modeling couple of days of training and in the end

the team would tell me, yes, it's very interesting and we feel like we know how to do that, but unfortunately we cannot start doing it tomorrow. We will have to wait for the next project. Why? Why can't you do it tomorrow? Because we need a greenfield project to start doing thread modeling. Our current project is too much of a mess, we have too much legacy, we cannot start. But your current project is the one that your clients use, you keep releasing versions of it. Why do you want to wait for the next Greenfield project? And do you know that when your management promises you now on the new one you will be allowed time to do it properly? They're probably lying to you, it will be more of

the same. The other problem was instead of training we would do collaborative thread modeling with the team. Do you think another dongle will make it happier? We would do collaborative model together with the team. And what I hear after, yeah, it was very interesting. We found interesting threads. Unfortunately, it will become out of date. as soon as we are out of here. Why would that be? Because we will develop new features. We will develop new versions.

And why can't you... Hello? Oh.

So lots of people were lost in this maze.

Don't worry guys, we are still chatting, we didn't get to.

Why do you think your model will go out of date? Because we are developing new features, new iterations, why don't you spend some time each iteration to keep it up to date? No, we are so agile, we run weekly iterations, we have half a day of changeover, we need to feed demo, retrospective, next planning meeting, no way we can put threat modeling into each of these iterations. So what I hope to do today is to help you to deal with these two situations. I don't know which one is more relevant to you. And the goal is to say you can start threat modeling without waiting for the next project. Even if you own this massive, complicated legacy system, you can start doing

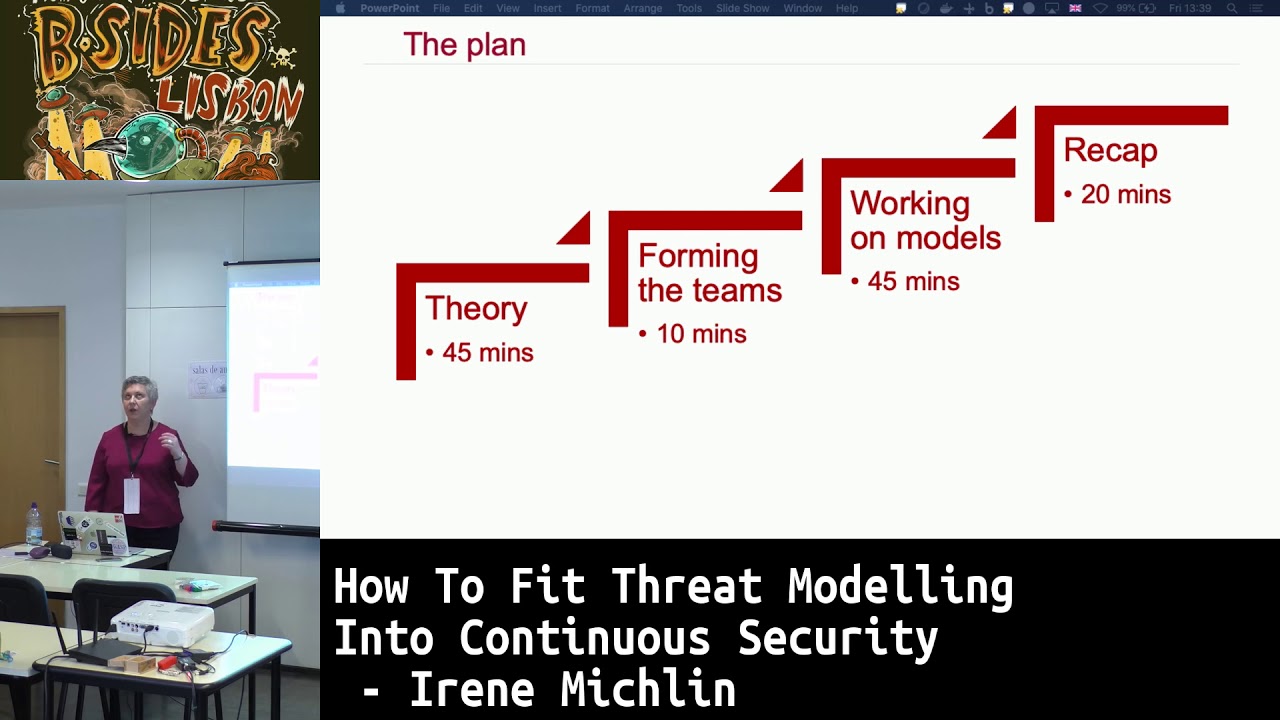

stuff tomorrow on your next feature to ensure it doesn't become even more of a mess than it is now. And how do we do that? So that's the plan. First I do a crash course into thread modeling. Then we break into three or four teams and you pick an example. I have three different examples. We work on the models and in the end we get together to recap what we've learned and maybe exchange the models that we've built. So that's the plan. If you will want to run away, this is a good spot to run away. I won't be offended. So how do we do it? I will introduce a simple system, a new feature that we are developing on this system, and we will walk through

the process of this threat modeling before a little crash course. Okay, who's done?

Who's done threat modeling before? Okay. Anyone who hasn't done it but has a vague idea what Stride is? Okay, but don't worry, if you have no idea, we will get enough. So threat modeling is all about answering questions like what can go wrong with the software we are building? What can go wrong with the solutions that we are building? let's come up with some potential ideas and let's think how we can mitigate against these threats. So first we decompose our architecture in a specific way using data flow diagrams. Then we search for threats looking at this set of diagrams and applying stride. And then we get this long list of threats, obviously we are not going to

fix them all in one day we will have to prioritize somehow but that's out of scope for today. So what is data flow diagrams? You've probably came across them. If not, don't worry, it's pretty simple. Five types of elements. The most important one is called process and we put a circle in our diagram and it doesn't mean necessarily process in a sense of computer processing memory. It's any kind of logical entity that gets data, does some transformation with the data, passes data to somewhere else. So any active data processing in our system, we call process. We have data stored to parallel lines. Again, it doesn't necessarily mean database. It's anything that holds data. For example,

when we were modeling smart cars, the natural way to model the state of the lock is data storage, basically one big data storage, am I locked or am I unlocked? Then we have data flows that connect all these elements and we have external entities. So external entities are the users or other systems that send data to us or get data from us. And we want to analyze what's going on, but we don't have control over their security. So they are external to us, out of our scope. We are still interested in interacting with them in a secure way, but we can't make decisions on behalf of what security controls they have. And the last one is trust boundaries. They are pretty important, especially for fitting

into fast life cycles because the most interesting, the most dangerous threats will be on data flows across trust boundaries. So we want to put them in the right places and when we are pressed for time, we want to start with these data flows because this is where the most juicy threats will be. So we have a set of diagrams. What do we do with them now? We go through these diagrams and we apply stride to them. Now stride is just a mnemonic that describes six families of threads and each family of threads violates some kind of security property. And this is just to help us to start thinking about these threats. It's not a classification system.

What I sometimes get, people start arguing how best to classify this threat. Is it spoofing or is it elevation of privilege? It doesn't matter. As long as we found it and we put it on the list, we can move on. It's not a classification system. So, in the UK,

People get it when I say it's not a field guide to the birds of Britain. It's not important to identify them perfectly.

Okay. So how I will introduce the example? I will explain a simple existing architecture, I will explain the feature that we are going to add, but then we will pretend that we don't know the model for existing architecture. That's the problem of the legacy systems. You need to start somewhere where lots of code exists, lots of architecture exists, but you don't have a model for that. So we are going to model this situation. So you will have to pretend to forget what you're just going to see. Okay, so this is our very simple architecture.

It's some kind of a web application that has anonymous users.

who can only read content. And you can register, you can create account, and you can modify content on this web server. And at the end, it's served by some kind of SQL database. And we have a trust boundary because this is in our data center, either on-premise or in cloud, but it doesn't matter because we know it's ours, we control the defenses, we control the setup. So it's very simple but reasonably realistic, full of web applications.

Like that. Now, we need to pretend to forget it. Let's say it was a much bigger, much more complex system for which I cannot create a thread model in half an hour. And I'm not trying to build a straw man here. I'm not trying to tell you threat modeling is so easy. Just stop with your excuses and do it. No, on a big complex system, it's time consuming and pretty difficult. And I totally accept that there are companies that cannot stop and give the development team break from adding features and just doing security work. I accept that. It's not my goal to say, this is a straw man, let's destroy it. What I'm saying is, it is true. But what we can do, we can put

all this existing architecture in a circle, call it legacy blob, and on the next feature we can do threat modeling without analyzing all these complexities that's inside it. So we are a development team. we have the existing system for which we don't have the threat model. But what we have a product owner who explains a new feature to us. So I think I'm setting up a very fair conditions here. In a planning meeting, we should have a product owner who can explain a new feature to us in three sentences. And if we don't have that, then we have bigger problems with our developments and just lack of security. And the other condition I'm saying for a planning meeting, get a

room with a whiteboard where you can draw a circle on it, call it legacy blob. If you don't have that, get a different room for a planning meeting. I think it's very fair. So what is this feature? We are adding business analytics to our system. we want to model this new feature. And what's inside can be much more complex than what I've just showed you two slides ago. So how do we do that? First step, we want to go through the story and to identify existing components that participate in the story. So we go through our story, we notice a database plays a role in it. So we need to separate it out of the blob and to show it on

the diagram explicitly. Do we need a trust boundary here? I say no because we know it's in the same level of trust as everything else. It's in the same data center or it's in the same cloud. Same level of trust as everything else, so we don't need a trust boundary yet. We good so far? Can you see from... Yeah? Okay. So we go through the story, we identify existing components. Next step, we try to find new components, new stuff that we are going to build as part of this feature that needs to appear on the diagram. And because of the story we see that we need to build a new aggregator process. And the name is a strong hint here. It's active, it processes data. So it

needs to be a process in our diagram. And what it does, how it's connected to everything else, it consumes data from our database. Now here we go into trade-offs between being very

exact and keeping the diagram visually simple. Like we all know data will not start magically flowing in that direction. There will be SQL select going first in the other direction. Do we need to show it in our diagram? And here you can argue both cases. You can say, yes, threads like injection and stuff, but I deal with it in other ways. I have

coding standards, I will catch if someone tries to do stupid things like string concatenation, I only use prepared statements, I'm not worried about SQL injection to such a degree that I will put it in my thread model. I want to keep it higher level than that. So conceptually it is a one direction flow. We are never going to modify data in our operational database in this feature. So you can argue both sides. say if the overall level of security in your development is pretty low, then you can say I want to look in depth to how I access my database because probably there are bad things going on there. So we have data flowing to this new process. We keep going to the story and

we identified another new element. We have a data flow that uploads data to the data warehouse. And finally, we need the trust boundary because it says data warehouse will be on somebody else's infrastructure, on third party's infrastructure. And that immediately means trust boundary. There is us and there is third party. and they are not in the same trust zone, so we need to show it explicitly with trust boundary.

We keep going, we identify another thing, reporting server, it's in the same trust boundary as data warehouse, so this is where we put it.

And then we have analytics, or analytics user. Again, it's a question of keeping it visually simple. You can show users separately and the app separately, but it will not give you much in terms of threats because they're both external entities. Two external entities talking to each other, nothing we can do about them being back to each other. So might as well show it as one external entity. Now, interesting question here. Do we put this analytics user inside trust boundary or outside trust boundary. And this is where story as it is doesn't have enough information. We will have to ask our product owner clarifying questions to understand. So for example, product owner can tell us, this analytics

are for our finance department. And these are people who always work from the office, They are going to be on our corporate network. They are going to be authenticated. This is how we expect it to work. If this is the case, then this user signs the same trust boundary as the rest of our company's infrastructure because we expect these users to be always within our corporate network. Or product owner can clarify it in another way. Maybe

These analytics are for our salespeople. And our salespeople travel, they need to work from hotels, they need to work from airports, they need to connect from all sorts of dodgy Wi-Fis. It's a completely different scenario.

If that's the answer the product owner gave us, then we need another trust boundary here. So if it's for our salespeople who are on dodgy Wi-Fi, then what we have? Third party data center, our own infrastructure, and everything outside is internet. If it was the first kind of story, if it was, it's for our CFO who always works from the office, then we would move this box within our own corporate trust boundary. So sometimes we need clarifying questions.

This is a diagram that reflects this new feature. How do we know the diagram matches the feature? When we do threat modeling, the guidance says, you've done enough diagramming, when the team agrees, the diagrams tell the story. And sometimes on a large realistic system, it's pretty hard because the software tells more than one story, Sometimes it's a complete saga, multiple stories, people don't agree. Here in incremental, it's actually simpler because we have a very specific story to which we need to match our diagram. So hopefully the team can agree reasonably fast that yes, the diagram tells the right story. Now we need to start looking for threads And we need to focus only on the new components and

data flows that are relevant to this story. So in here, it's basically only one data flow from the new process, upload of the data to the data warehouse. So we go through stride one by one and we start thinking about these threats. So for example spoofing, what does it mean to this interaction? Can attacker pretend to be us and upload data to our behalf? How we authenticate to this business analytics system? Can we be tricked uploading our sensitive data to an attacker's controlled location? So before we start uploading what is the handshake, how do we know someone isn't faking the destination of our data flow? Tampering and information disclosure usually go hand to hand and then to do with

security of data in motion. Can attacker intercept, can attacker tamper with data, can attacker eavesdrop on the data as it flows?

Repudiation. So if you are working in e-commerce systems, then repudiation is usually, can the user say, I didn't buy it or I didn't initiate this transaction. But in a wider sense, can someone dispute the activity and what controls we have still prevents them from disputing it and damaging our company. So here repudiation, for example, We are using this, we came to analyze the data, we notice half of the data is missing. What if this third party says, no, you never uploaded? How can we prove that we did upload it and they've lost it?

If we are paying per gigabyte or whatever, what reassurance we have that they are not charging us for what we did not upload? These are all repudiation questions. Denial of service. What if they're not available? So this is stride and stride is not complete. It's just a good starting point to start brainstorming. But there are threads that are not covered by it. For example, privacy threads. You have to think about privacy outside of this framework. So here a couple are pretty obvious. We said aggregator There are all sorts of famous stories the companies thought they cleaned up the data, they made it pseudonymous, and then someone went and reverse engineered it back to individual users. So,

can our aggregator be reverse engineered? And also GDPR, we are changing the situation here, do we need to notify our users?

So what we come up with is a list of questions. And some of these questions will be answered immediately in our threat modeling session. For example, if we know that the upload is over HTTPS and we know we are not going to mess up the implementations, then tampering in information disclosure should go away. Right? If we implemented it correctly, there is no threat of sniffing or tampering. Some of these are not going to be answered here because we are not designing our aggregation algorithm during the planning meeting. But what I hope will happen if we have this joint session and we invited our QA engineers to participate in the session, what I hope for QA engineers will come with security test

cases here they will make a note, we want to try to reverse engineer the aggregated data. Some questions product owner will need to take to legal team. Do we need to change notifications that our application presents to people? I don't know, I am a developer, I am not a lawyer, I don't want to decide these things for my application based on my understanding. I want a guidance from someone who knows the law and can give me a proper guidance. So the session results in a list of questions. Some will be answered straight away. Some will result in security test cases. And they're pretty difficult to come up with. So here we have something that helps us to write

security test cases, which is an achievement in its own right. Some will result in clarified security requirements. which are also very difficult to come up with like how many places where I worked all we've got is this should be secure thank you

so we've done all of that in under 20 minutes where is the catch? is that I send relevant threads. What happened to all the irrelevant threads? How did I manage to hide them to keep this such a short exercise? If we were doing thread modeling on this system from the very beginning, this would be our full picture. So we have the initial thread model, we add new stuff, we've analyzed it pretty quickly, all is good. we don't have this full picture. So for example, you've had threat modeling training or you've just read a book or a blog or whatever and you're doing it for the first time as a team, you will come up with things

in existing parts of the system. You just can't help it because this is the first time you approach it with security mindset. You will just keep thinking So, in our diagram, the only access to database is from web server. It's very nice to draw these arrows, but how do we know it's true? How do we know I cannot be on the internet here and access it directly? Who says? So we have this one directional arrow that says anonymous user can only read data. We've never tested it. What if anonymous users can modify data? How do we know that this authorization works because we think registered users can only modify their own data, but we've never tested it. So the

team will keep coming up with questions that are to do with the existing part of the system. And again, in this small one, it's all pretty small, but on a realistic system, if you are running this kind of session and you are not careful, It will run for hours and hours and hours. So how do we keep it time-boxed? What we don't want to happen, say you are a tech lead or a team lead or whatever is your title, and you come on Monday to work all enthusiastic and you say, I want to try thread modeling. If it turns into the whole day of development consumed by this meeting, very quickly you will be told you don't have time for that. So I want

to help you to keep the time box and sustainable so you can do a little bit of it every iteration. So how to make irrelevant threads go away?

Two phrases. One says, we are not making it worse. Actually, let's go back for a second.

We are not making it worse means we've never done any security on the system. We are probably facing a big pile of security debt or we are in a hole security wise. But what we can do today, we can stop digging. So we are in a hole, we don't know how deep it is. at least we can stop digging, we can stop making it deeper. So, some examples. Can registered user inject malicious content? Maybe. We don't know. We didn't have pen test. We've only learned about prepared statements last month, probably something in our legacy code, the restring concatenation. We don't know, but at least we know that our new business analysis feature doesn't make it worse.

Can anonymous user bypass access controls and modify something? Again, maybe, but it's not relevant to this new feature. Sure, we want to take a node somewhere, but we don't need to resolve it right here, right now. Is our infrastructure secure?

Here need to be careful because yes we can say we are not making it worse but are we? If this is happening on a port that requires modifying our firewall rules then we are making it worse potentially. So need to be careful not to say we are not making it worse when actually we are making our security posture worse.

And the other phrase sounds horrible to say it's not our problem, right? Because security is everybody's problem. But it can genuinely be so. So for example, what about a threat? Analytics app comes with 10 licenses, but we try it and 20 users can use it at the same time. It's their money, it's their problem, right? In a more realistic scenario when we are talking about complex system, that multiple teams build the system, we need to recognize that we have a thread discussed in our team meeting, but it's not within our team scope to resolve it. And I don't mean it in a horrible way, let's push everything to the other team. But in a healthy collaborative organization,

we should have a way to communicate it to the teams that work on the other components that we've identified the threads that we think is relevant to your work.

So I've seen situations where a team that works on some subsystem basically doesn't trust anyone else and goes, we assume they're all going to be terribly insecure, we're building our own defenses. Again, on the level of the whole solution, it's not the optimal way to deal with security. It's not the best way to assume everything else that should be in our trust boundary is not trusted. So we don't want to be in a situation where team makes these decisions either because it's not great for the security as a whole. So a couple of caveats. I said not our problem in the context of we as a development team were told that our company evaluated this third party and

decided we are buying this BI solution from them. So you assume the company done some due diligence on them. Then we can genuinely say we are not going to investigate how secure their solution is. But quite often development teams have as a task, let's evaluate a couple of alternative providers. And if that is our task, then we are going to be interested in how the security of the third party is implemented and we are going to ask more questions. So let me go back here. So in the story I was telling you someone else done due diligence and we were told we are going with these guys. In this way I can say I am not going to investigate in depth how secure, for example, this data

flow is. I can just assume it's fine. if my team was told please investigate couple of alternatives, then when I am doing threat modeling, I will be asking questions about this data flow as well and about this one because it's a much bigger brief to evaluate the security of our potential provider. The caveat to the other one, I don't know if you are familiar with Lean and Kanban principles, they have a concept of pulling a cord. So I'm working on a production line, I've noticed something so catastrophic, it puts lives at risk, I will pull the cord and I will stop the whole Toyota factory. So if you are seeing something that bad, even so if it's not in our immediate scope

of our incremental threat modeling, I think we need to communicate it. What examples can I give? There was a story a couple of years ago that Oracle discovered where embedded admin accounts with either empty or hard-coded password in their codebase That I would say yes, no matter what feature we are building, if we discover it, we want to fix it immediately, even so it's nothing to do with the feature we are building. So something like really, really bad.

So I said stride is a good starting point, but sometimes it's not enough. privacy, how to reason about privacy.

This blog comes up with four questions you can ask about privacy. I think data transfer, data retention, it's quite easy to combine with stride.

Depending on the domain you will be able to find additional thread libraries or collections of threads. So for example, if you're doing a machine learning solution, we want to break it down that way. But there are tons of others. There is something for automotive and for cloud and for Internet of Things. Depends what you're building, you may be able to find additional sources of thread to complement Stride.

Another interesting question is, we've done this modeling but it's just piece of paper. The actual software is going to be what we write afterwards. What if our implementation deviates from our design? How we can capture that? So an example here. In our diagram aggregator was one logical process. When we come to implement it, what if we split it in two? One will just read and aggregate the data and the other will do the actual upload. I think it's a sound engineering solution, makes it easier to change the provider later. Does it invalidate our threat model? Well, it depends if C is wrong at the same level of privilege. Say you've done it as two microservices. They

have exactly the same architecture, they run in the same way, they connect to all the same things. You can reasonably say it makes no difference to our overall threat model that instead of one process, we have two processes in exactly the same trust zone. The second example sounds hilarious, but it's based on real life. We are a little startup, we are building this feature and our VP of something important comes into the room and says I'm having this really important demo next week and no time to wait until the end of this iteration but the nice people in this business intelligence company promised if it gives them accounts they will show some nice pie charts to our potential investor. hopefully it should be clear to the whole team

that it invalidates our threat model completely. So this is why I recommend doing threat modeling as a whole team activity, not just the architect, not just the lead developer doing it by themselves. Bring everyone, bring QA, bring your business analysts if you have them. If you're doing DevSecOps, then obviously people from operational side need to be here. At least it helps everyone to share this mental model and spot later when implementation starts to deviate from our model. Also, if you involve QA, as I said, it helps them to build security test cases which are otherwise pretty difficult to build.

If you want to introduce security testing to tell your QA people start thinking like an attacker, it's not a very helpful advice. But this gives you a structured way to come up with attack scenarios.

So if incremental is so great, why it's not enough? If you've ever read a book about testing legacy code, you've probably seen a similar picture. It says, don't waste your time trying to test everything. As you introduce new stuff, or modify stuff, introduce tests around the new stuff, and then in the end, everything important will be tested. Which is fine for functional bugs. Say, I have a feature, it's been deployed live, for five years and no one complains about it. What does it mean? It means one of two things. Either it really doesn't have any serious bugs or no one is using it. Either way, why would I waste time writing tests for this feature?

Unfortunately, in security it doesn't work like that. Even if legitimate users don't use this feature, if it's vulnerable, attackers will find it if it's there. So I've picked several examples. Famous bugs, really famous bugs that have a massive time gap from when they were introduced to when they were discovered. Massive. 10 years, 20 years. So when we say, if no one is complaining, it must be fine. It's not at all true insecurity. And if we do just incremental, if we focus just on the new stuff, we may miss some massive security issues in our legacy system. And attackers don't care that no one really uses this feature. If you have it in your live environment and it has vulnerabilities in it, they

will be exploited. So for that reason, eventually we need a whole picture. Doing incremental threat modeling is great and useful and you should start as soon as you can. But eventually we will need to tackle the whole.

For two reasons. As I said, what we don't know can harm us in security. And the second reason, the system is greater than some of its parts. So if I have subsystem A, I've analyzed it, it's secure. subsystem B, secure, the way I put them together can be very, very insecure. This is why we need the whole. So, if eventually we still need to tackle all of that security debt, why don't we just do it? I think it's better to start with incremental anyway. Even if you have incredibly supported management that understands security and will give you time to do the whole, it's still, I think, better to start with incremental because you develop skills. You don't face this monster of existing

systems that was built over two, five, ten years, starting from blank page. You have something. In a multi-team organization where it's not enough to deal with the security debt of your own team, where you must work across multiple teams, you will have to set up something known as a work group. And if people come to this work group not motivated. If all this thing is what I'm doing here, I should be at my computer writing more code, it will be a pretty depressing workgroup. So you want everyone who comes there to have an experience of threat modeling even on a small scale. Then they understand the value. And also hopefully you will be able to

demonstrate value. You would have stopped some spectacular threats just doing incremental, it will be easier to justify this activity. For all of these reasons, I think...

So this was supposed to be a metaphor. Don't slice too much cheese up front, it will dry out. But then I'm getting lots of feedback that it's not a problem that ever happens to people. No such thing as too much cheese. So do. If it works for you, fine. So this is the end of theory. Any questions before I introduce exercises that we are going to do? Yeah? Are there any additional threats around the API? Yes.

Around... With the API itself, but it's an additional layer between the...

the aggregator and the rest of the house, is there something specific that people would need to consider with the threat modeling by having that additional component of the API? I think so, yes. So you would need to look at the specific APIs that you are given and you would need to analyze what kind of threats are possible there. So we'll see if we were heading on it.

So this API that we are talking about, the data upload, it crosses trust boundaries. So we are interested in threads on this trust boundary. So I think this API specific thread isn't captured by stride. Yeah, you need to think about it on top of stride. Okay, let's make it specific. Say we have an API, data upload, you will need to look at fields of this API, what kind of data it wants you to send. What if you don't want to send this data outside of your system?

Maybe a stupid example. If you did the Spanish one decided, Yeah, but management didn't analyze every parameter of API. Because it's a business requirement. Yeah. And if you did this in the business, it means that, independently if it's an API or not, or if you have a guy copying the API to the other dot center, it's exactly the same issue. Yeah. So it costs you trust probably, and everything that you explained, you are wanting to trust the other guy from the school. Sorry, this is the ABI within the third party transparent re-a-a. Okay, let's go back to this diagram. Well,

we've got the data flow, whether it goes by us… What the hell? … or hours in the process

with… Isn't it all just the same? Yeah, so that's the one we are talking about, right? So it's not within a trust boundary, it crosses the trust boundary. So this is exactly where we are focusing. This is where all the threads will be.

So I think potentially, yes, there will be threads that are specific to the API that are not discoverable within Stride. Okay, let's...

Introduce the examples and then we have forming the teams. Okay, I say two but actually there are three now. Okay, first example is Internet of Things.

Internet of Things. S in IoT stands for security. So what we have...

We have a system with a smart camera. We are adding to it now a smart doorbell. So When it rings, the camera will send a push notification to the app and then we can choose to interact with the feed. And also we get access to speakers and microphone. So someone rings a bell, we get a push notification. We can choose to talk to whoever rings the bell. It's real. There are products like that. The other example is to do with banking. So, imaginary country, several banks and they've discovered that young people don't like this whole issue of sort code and big number and whatever you need to transfer money they want. This is

the email of my friend or a phone number of my friend and they want to transfer money based on that. And there are lots of startups that do that. So banks decided we are losing market share. We want to implement this feature that will allow us to transfer money between banks using basically a unique nickname.

That's the second option. And the third option is to do with APIs. So we have a retailer shopping, successful retailer wants to connect the shoppers to loyalty card. And this is the new feature. We need to integrate a third party loyalty card. We will need to deal with a new cloud provider. And to keep it simple, we are only doing minimum viable product. So sure, there are lots of APIs. We are only going to use add points to this user. And if you want, if you have time, you can also implement the user now can check that they have more points.