BearSSL: SSL For All Things by Thomas Pornin


Okay, welcome. So I'm here to talk about some work of mine which is called BearSSL, which is an SSL library because at some point I told myself that the world really needed another SSL library and I wrote it. And of course it's all about what I'm doing to ensure that I did it right. Because it turns out that writing your own crypto, first, you should not do it. But if you do it, it's not obvious how to do it properly. And the biggest problem is that if you do it incorrectly, you'll know it only when you're going to be, let's say, abandoned by the enraged Twitter things or... It's not going to be pretty because on your own computer you can have something which works

well and you're happy with it and then you test it in the real world and you understand that you did not understand it. So, this is the outline of my talk and I'm supposed to go through all these points and it should take about one hour or so, a bit less because I've got 55 minutes.

So, first a few words about SSL. We know that there is another term which is TLS and in everything I say, when I say SSL, you are free to understand it as TLS. I'm using the SSL name as the general name for the concept because it's a long family of protocols. The name changed at some point because initially it was Netscape and they did it for their web browser. I don't know if most people here remember Netscape Navigator but it's an old thing. It stood for Secure Sockets Layer. And after version 3, somewhere in the late 90s, it was transferred to the IETF and they wanted a new name which was explaining that it was not specifically for sockets but it could be used for

any transport, for instance a serial line or something. And less officially but more importantly, they wanted a new name which had no trademark issue. So it's a new acronym, but really it's the same thing. So the point of SSL is to start with a reliable transport for bytes. By reliable, I mean that if there's no attacker, only random errors, hardware issues and so on, bytes will flow and you won't lose any, and if there's a problem things will be acknowledged and repeated and so on. So basically that's what you get with TCP. If you have a reliable transport then what you need for security is merely to detect problems. You don't have to care for repeating things

if the message did not go through. You just have to detect things. And the point of SSL is to provide that security even in the presence of a malicious attacker who is trying real hard to modify things or understand what you are sending. So it provides a secure bidirectional transport. Bidirectional meaning that you have real bytes, binary data, and a continuous flow in one direction and in the other, and sometimes simultaneously. So it's not a transport protocol for messages, it's really for a stream. So here is the most important diagram in my professional life. I mean, if you understand what's going on here, you can make a living for decades. So, it's a copy from the one in the RFC, the specification for

TLS. And what you must see here is that it's based on messages. That is, it works on a stream, it provides a stream, but internally you've got messages. And the handshake is the start of the protocol where client and server will agree on what they are going to do. And the client is the one who speaks first: it sends a message and then waits for the server's answer. So during the handshake, you've got a strong half-duplex strategy, that is, at any point, both client and server know whose turn it is to talk. But at the end, when you go to the application data, that is, when everything is fine, data can flow in both directions asynchronously.
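To make the half-duplex property concrete, here is a minimal sketch of a tracker that knows whose turn it is during a handshake. It is an illustration only, not BearSSL code: the `Peer`, `FLIGHTS` and `HandshakeTracker` names are hypothetical, and the message list is a simplified full TLS 1.2 handshake with RSA key exchange, with optional messages left out.

```python
from enum import Enum


class Peer(Enum):
    CLIENT = "client"
    SERVER = "server"


# Simplified flights of a full TLS 1.2 handshake (RSA key exchange).
# Optional messages (CertificateRequest, ServerKeyExchange, ...) are omitted.
# ChangeCipherSpec is formally its own record type, but it appears in the
# handshake diagram, so it is listed here as well.
FLIGHTS = [
    (Peer.CLIENT, ["ClientHello"]),
    (Peer.SERVER, ["ServerHello", "Certificate", "ServerHelloDone"]),
    (Peer.CLIENT, ["ClientKeyExchange", "ChangeCipherSpec", "Finished"]),
    (Peer.SERVER, ["ChangeCipherSpec", "Finished"]),
]


class HandshakeTracker:
    """Tracks which peer is expected to send the next handshake message."""

    def __init__(self):
        self._flight = 0  # index into FLIGHTS
        self._pos = 0     # index of the next message inside the flight

    @property
    def done(self):
        return self._flight == len(FLIGHTS)

    def whose_turn(self):
        """Return the peer expected to speak, or None once the handshake is
        over and application data may flow in both directions."""
        if self.done:
            return None
        return FLIGHTS[self._flight][0]

    def on_message(self, sender, name):
        """Record one message; reject anything sent out of turn."""
        if self.done:
            raise ValueError("handshake already complete")
        expected_sender, messages = FLIGHTS[self._flight]
        if sender is not expected_sender or name != messages[self._pos]:
            raise ValueError(f"unexpected {name} from {sender.value}")
        self._pos += 1
        if self._pos == len(messages):
            # Flight finished: the turn passes to the other peer.
            self._flight += 1
            self._pos = 0
```

A caller would feed each incoming or outgoing message to `on_message` and consult `whose_turn()` to decide whether to read or write next; once it returns `None`, both directions are open.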

So the various messages are about, first, agreeing on what algorithms to use. The client is sending a list, it's proposing, it says to the server "I know how to do this and that and that and that and that", and then the server chooses, and it's an important property of SSL that, in the end, the server is choosing. However, you must remember that if the client really wants to use a very specific algorithm, then the client always has the option to disconnect and reconnect and offer only that algorithm. So the server is choosing, but the client is ruling. So we are now going to investigate something which is technically known as "things". So these things are able to go over the internet.
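The "server chooses, client rules" idea can be sketched in a few lines. This is an illustrative sketch, not the BearSSL API: `choose_suite` and `SERVER_PREFERENCE` are hypothetical names, and the preference order is an assumption. The cipher suite names themselves are standard IANA identifiers.

```python
# Hypothetical server preference order: strongest first, legacy last.
SERVER_PREFERENCE = [
    "TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256",
    "TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305_SHA256",
    "TLS_RSA_WITH_AES_128_CBC_SHA",
    "TLS_RSA_WITH_3DES_EDE_CBC_SHA",
]


def choose_suite(client_offer, server_preference=SERVER_PREFERENCE):
    """Return the first suite in the server's preference order that the
    client also offered, or None if there is no common suite."""
    offered = set(client_offer)
    for suite in server_preference:
        if suite in offered:
            return suite
    return None


# The server picks its own favourite among what the client proposed,
# regardless of the order used by the client.
both = ["TLS_RSA_WITH_3DES_EDE_CBC_SHA", "TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256"]
print(choose_suite(both))

# A client that insists on one algorithm reconnects and offers only that one,
# leaving the server no real choice.
print(choose_suite(["TLS_RSA_WITH_3DES_EDE_CBC_SHA"]))
```

With a list ordered like this, a legacy suite is selected only when the two sides have nothing better in common.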

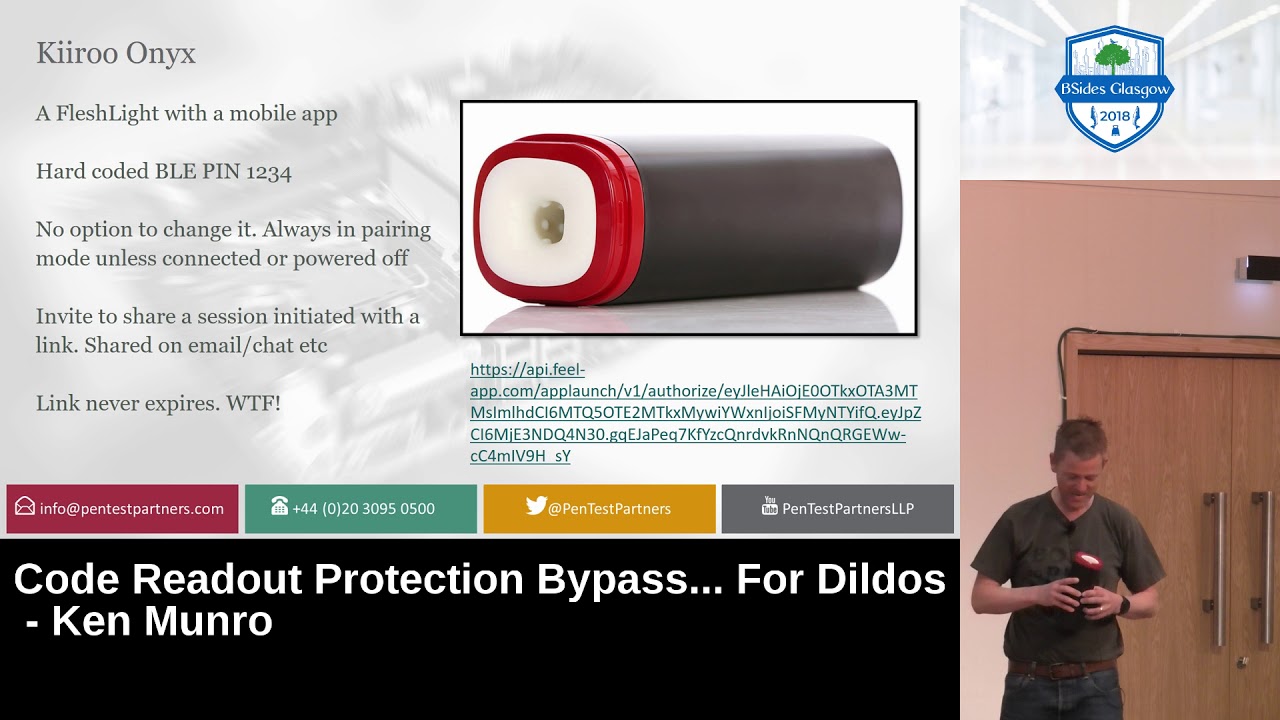

So, all the business about Internet of Things is about that kind of device. So on the upper left you've got a lock. I don't remember the name but it's basically a lock on your door that you can open with your phone. You can lock it remotely and theoretically you could open it for somebody else, say a friend who's in front of the door and wants to enter while you are on another continent, and you can do that from your phone. So you understand that there is a strong security issue for the lock not to open to anybody and so on. On the upper right you've got a camera and with the antenna it is really a small server that you can

connect to from the outside just to see the picture. Again it's for seeing what happens in your home when you're not there. On the lower left are light bulbs, which are also Wi-Fi enabled because you can light them up or switch them off or dim them or change their color. And again, you can do that remotely. So it's not very obvious why you would like to light things up when you're not there, but it's possible. And on the lower right is an internet cow. So the interesting thing is the green part. It's called the Moocall. There's a lot of work which is done on cows and Fujitsu made something, a lot of research and they

equipped cows with other devices that are detecting whether the cow is dancing. The point is that at some point the cow is ready to be inseminated so that you can make new cows, and it can be detected because at that point in her fertility cycle, the cow is making specific foot patterns. So when it's going to do a moonwalk, you know it's the proper time. And they can even detect at which exact point they are in the fertility cycle, so they can somehow try to choose whether they will get a female or a male calf, and they have a 70% success rate. So it's rather fun. The Moocall which is displayed on this picture is at the other end of the business. I mean

it's meant to call the farmer when the new calf is about to pop out. So you've got your cows in your field and then the farmer knows that this specific cow, which is at this specific location because there's a GPS in that, he should get to it right now and call the vet because a calf is about to happen. So that cow is able to make calls to a server and it's connecting to the internet and it's a long-range thing because it does not go fast but it can go far. So that kind of device is perfectly able to participate in a distributed denial of service. So what I want to do is

to help in avoiding your servers being brought down by cattle. So there are many SSL libraries and I decided to write one because all the existing libraries were a bit lacking in a few features. So the first feature of a good crypto library is to be correct and secure, and it seems relatively obvious, but if you try to download things from GitHub and so on, not everything is correct and secure. But I wanted something which could work with very little RAM because embedded systems are often limited more in RAM than in computational power. And I also wanted something which was very small in code size. I was involved about 10 years ago in a specific project from people who were designing payment terminals and

they wanted something to do the bootstrap at the end of the factory line. That is, every 30 seconds there's a new terminal and they must, over a serial line, inject some code which will download the firmware. And it has to be fast because every 30 seconds there's a new terminal. And if you are working over a serial line, if your code is 30 kilobytes, then it means three seconds just to upload it. So they tasked me to write an SSL library, another one, it was 10 years ago, and it had to fit in 20 kilobytes of RAM and 20 kilobytes of ROM, and I did it. This one is better because when you first write something, you don't really understand

the problem. So this library is my sixth SSL library, so now I believe that at least I have some notions on how one should write that. And along the same lines, that library should not need anything from the operating system because it will be used in situations, in microcontrollers, where there is no operating system. So it should be compatible with embedded systems, and so it should be written in C because everybody is using C. But there is a large variety of microcontrollers, with ARM or MIPS or Xtensa, any kind of microcontroller, so it had to be portable. So I wanted a good logo. I did not do it. It's made by somebody who was in Slovenia and was really good at these things.

So he designed the logo and I wrote the code. So I wrote it from scratch in C and because I wanted still other features, I made a state machine API. So I'll talk later on about what this means. But basically, I tried to make a library which can be easily incorporated into existing code which must do several things at the same time. And I did absolutely no dynamic memory allocation. There's no malloc call. In fact, the three functions I'm using are the string copy, string length, and mem copy. And none other. So it should fit with a really bare-bones system. It uses about 25 KB of RAM. It can use less, but when you use less, you're somehow

going out of the specification, so it won't work with all clients or all servers. I aim for about 20 kilobytes of code, I mean on an ARM in Thumb mode because that's a platform where you have very compact code. And I'm not exactly there, I'm at about 21. I'm working on it, but it's not finished. As a few extra goals, I wanted something which could be pluggable, so that I could add extra implementations with better code, more optimized, and so that it could be done from the outside by other people. And I wanted it to be clean, to be usable as a pedagogical tool to teach things. So it's basically me trying to show the world how such things are done. When I

started BearSSL, there was yet another security issue, a buffer overflow with OpenSSL, and I've looked at the code of OpenSSL and never, never more. So I wanted to say: no, it's possible to write an SSL library which is more readable. I wanted it to be open source with an easy to use license, so it's the MIT license because it's about the one with the least encumbrance and which is still known to be legally valid, because with putting things in the public domain, it's unclear whether it actually works from a legal point of view. In France, if I wanted, according to French law, to put my work in the public domain, the only option I have is to die and then wait 70 years. So I wanted it

to be done a bit faster, so I use the MIT license. And it should be secure, but it should also support many cipher suites and a number of extensions which are very nifty. And it should work well also if you simply take a PC, like a big machine. So it's not the core market, but it should work. There's no reason why it should not work. And I wanted all the secure cryptography things, so RSA with a decent key size. I've got an internal limit because if I have no malloc I must work with fixed size buffers, so 4096 bits means half a kilobyte, and half a kilobyte is a lot in my domain. But I also wanted

all the good elliptic curves, so the NIST curves and the fancy newer curves which are supposed to be much better and faster and so on. I wanted AES with GCM, I wanted the stream cipher, ChaCha20, and so on. So all of this is implemented, and in order to support a smooth transition I wanted still to have some support for older things like Triple DES and SHA-1. Fortunately, one can put that at the end of the preference list so that Triple DES will be used only when one of the client and server knows no better. But if both of them can do AES, they will use AES, and it's cryptographically guaranteed that they will use the right one. So I

can tolerate having old algorithms of questionable security, because using SHA-1 and Triple DES is much better than not using anything at all and just sending plain text. So if you want to do some SSL, you have to be aware of all the existing attacks. So I'm going to speak for about 20 minutes about SSL attacks. So there will be some technical points, but what I want to impart is the idea that there are a lot of points to take care of: you have to understand them all, you have to follow them all and keep track of new discoveries, and a huge part of writing a crypto library is being aware of what other people are doing and just maintaining that.

So one first attack is something called version rollback. Since we have several protocol versions, the client and the server will negotiate what protocol version they are going to use, and it could be beneficial to the attacker to force the client and server to use a lower protocol version than what they could both support. For instance, to make them use SSL 3, which is a very low version, even if they could do better, because SSL 3 has some weaknesses that the attacker could try to exploit. For version rollback to work, the client must be doing something stupid. That is, the client must first try with advertising TLS 1.2 and so on, and then the attacker will break the connection, send a reset packet and so on, and then the

client will say, "Oh, this server is probably an old thing from the last century. I'll try again claiming only SSL 3." And that part is a rollback, and that's the stupid part. That is, a client should not do that. When the client is doing the downgrade, it's accepting to use subnormal security. And there is yet a protection which is called the fallback SCSV. SCSV is Signaling Cipher Suite Value. So the client is sending a list of the cipher suites, the sets of crypto algorithms that it supports. This one will be in the list and it does not mean "I support some other algorithm", it means "I am doing something stupid, please block me". That is, if the server sees that fallback, it knows that

the client could do better and something wrong happened because the client has apparently decided that a downgrade was necessary. So it's implemented and it's easy enough to implement, but the real solution is not to do something stupid, and if you do, at least use that signaling cipher suite. It's cheap. This next attack is from 1998 and it was quite a discovery. It was discovered by Daniel Bleichenbacher and it made some noise because it's an attack by which he could talk to an existing server again and again and again to obtain the decryption of another secret that was intercepted from the outside. So it's all about how to use RSA, and SSL, since it was designed 20 years ago, used RSA as we used to

do 20 years ago, which in fact is the wrong way. So in the RSA key exchange, the client is generating a random premaster secret which is 48 bytes and it's encrypted with the server public key. And the encryption begins with a step called the padding, in which extra bytes are added to the premaster secret so that the total length of the padded message exactly fits the length of the RSA key. And then the modular exponentiation of RSA is applied. And the padding starts with two bytes which are zero and two, which means it's a PKCS#1 padding of type two. Then there are a lot of random non-zero bytes, then a zero byte, then the premaster. So when the server decrypts things, it's going to check whether the padding bytes are

there where they should be. That is, there is a first byte which is zero, a second byte which is a two, then a lot of random bytes, none of which is zero, then a zero, and finally there should be exactly 48 remaining bytes which are the premaster. So what happens if the server decrypts the thing and it's not a proper padding? In 1998, the code, which was an early version of OpenSSL which was not called OpenSSL at that time, was sending an explicit error message if the padding was wrong, because that's what good software does, it sends explicit error messages. So the attacker, by sending carefully crafted messages, could learn whether what he sent would decrypt to something

which started with the two bytes of 0 and 2 or not. And by sending carefully crafted messages, that is, not actual encryptions of a premaster secret but other values, and sending between 100,000 and a million of them, so it's not a fast attack, he could get information on the decryption of a specific secret, that is, another encrypted premaster secret from another session that the attacker is trying to break. Because once in a while, for a specific message, the attacker won't get the error message that says this is wrong padding. So when the attacker gets something else, that is, the server is keeping on with the handshake, he knows that that specific value, when the private key is applied, yielded correct padding, so it's

one bit of information. So by trying it again and again and doing a lot of math, the attacker pinpoints the specific solution he wants. So countermeasures were devised. This is the countermeasure. These are two excerpts of the BearSSL code. In the first one, the call here is an invocation of a function through a function pointer, because it's pluggable, and it performs the modular exponentiation. So, at that point, it should check whether the padding is there. So each of these lines, that one is checking that the first byte is a zero, the second one that it is a two, but it's using some constant-time inline functions that will return 0 or 1 but will keep on. So I'm just accumulating in

the value x either a 1, which means there was a proper padding, or a 0 if anything went wrong. But crucially, all the steps are still done. So in this loop, I'm checking that all the bytes which should not be zeros are actually all different from zero. Then there is a zero. Then I'm taking the last 48 bytes and I'm moving them to the start of my decryption buffer. And this is the caller code. What it does is that it generates a random value and it will overwrite these 48 bytes, which are the premaster secret, with the random value. So that if the padding was wrong, I use a random value instead of the decrypted

value and I will keep on. The server will keep responding. There's no explicit error message. The server is always aware that there was a problem but then it forgets it. If there is a problem, it's just using a random value instead. So the idea is not to yield the crucial information to the attacker. From the point of view of the attacker, everything always works. So, another issue to take care of is something which is called forward secrecy, or sometimes it's called perfect forward secrecy, and when something is called perfect, you know it's a marketing ploy. And the idea of forward secrecy is that your enemy now is not somebody who is after your money, it's the NSA, they're

after your privacy. And it's well known that they have recorded everything you've ever done over the internet. And they're recording your past sessions, and later on they steal the server private key and they'll try at that point to decrypt all the past sessions. The notion of forward secrecy is to prevent that. That is, if the attacker can steal the server private key, from that point the attacker can at least impersonate the server and run a man-in-the-middle attack and so on, but the attacker should not be able to decrypt everything that was exchanged before that point of compromise. So the solution for forward secrecy is to make private keys that cannot be stolen and, to avoid a

key to be stolen, then you just forget it. You never store it anywhere. So a server has its static permanent key, the one which corresponds to the certificate, the one which is in the file. But whenever there is a connection, you generate a new key pair with Diffie-Hellman or its elliptic curve variant, which is used for the key exchange, and once the key exchange is done, you just forget the key pair. So, all cipher suites where the acronym contains DHE or ECDHE, with the final E meaning ephemeral, are cipher suites that use ephemeral key pairs. So they provide forward secrecy and it's considered to be a good thing. But it has some issues. The main problem is that you're generating new key pairs so

it has a computational cost. And if you are working with an embedded system which is attached to a cow, you don't necessarily have a lot of computing performance available, because usually embedded systems have some time to work but they don't have a lot of electrical power. And an embedded system that runs on battery will usually try to sleep most of the time and to avoid heavy computation because it reduces the time until the next recharge. So if you are trying to use ECDH without the final E, that one is static ECDH, it's not forward secure, but when there is a connection, the server only performs one point multiplication. That is one of the internal operations which

is expensive. And if you're using ECDHE, then you need three point multiplications, so it's three times as expensive, it will take three times as much time. And it also needs larger code, because you have to support not only the ephemeral ECDH, but also the use of the actual permanent server private key, which is for a signature, and a signature with ECDSA is one point multiplication and other things, and these other things are extra code, and I mind code size. And there's an extra message so you have more bytes to send over the wire. So there are costs. Therefore, in BearSSL I support both. And you can choose whether you prefer the static ECDH or the ephemeral one. And

you have to be aware of what you are gaining with the ephemeral. In TLS 1.3 they decided to completely forgo static ECDH or RSA encryption and they are all about ephemeral and forward secrecy always. And TLS 1.3 is not supported by BearSSL and probably will not be, because it's a relatively large protocol which is meant for web browsers and it requires a lot of RAM. A lot, I mean, dozens of kilobytes. For your servers, that's not a lot, but for my embedded systems, it's too expensive. Okay, this is in French because I took it from a French version of Windows. It's what old versions of Windows were doing when you were connecting with Internet Explorer to a server that was doing SSL, and

the server, after showing its certificate and doing the key exchange, sends to the client a request for another certificate. That is, the client should be authenticated with its own certificate, so it makes the protocol more symmetric with one certificate on each side. When Internet Explorer receives that, it shows this magnificent pop-up, where it invites you to choose one of the certificates in that list. So, as you see, the list is empty. And yet, the pop-up shows up. It pops up. So, most web users and customers are understandably a bit uncomfortable with that kind of pop-up. And web servers have learned not to ask for client certificates unless they have some good reason to believe that the client has a certificate. So that

the list won't be empty and the client will choose. That is something which could happen if you took care to distribute smart cards with certificates to your customers. But when a web server is receiving its first connection, it does not know whether it's a client who wants to access a specific authenticated page and has a smart card, or whether it's a random client who is just trying to see the front page. So the server won't ask for a client certificate at the first handshake. Then there is something which is known in SSL as a renegotiation, in which the server at any point can ask the client to do a new handshake within the first one. That is, when the server has seen the actual HTTP request from the

client, now the server knows that the client wants to target a specific page which calls for authenticating the client with a certificate and so on. And unfortunately renegotiations have an issue which is expressed with this schematic. It tries to convey the idea of an attack which was shown about 10 years ago, I think. So basically it's a man in the middle. The client is connecting to a server but the attacker is in the middle, so the client connects at the TCP level with the attacker. At that point, the attacker just delays things for a few milliseconds and starts a handshake with the server. And then it sends an HTTP request, say a POST request, which does things. Then the

server says, "Okay, that kind of request requires client authentication." So it sends a HelloRequest, that is, "Let's do a new handshake over the first one." And at this point the attacker should try to do a handshake with the client certificate, and the attacker has a client who is perfectly ready to do that handshake because the client is trying to do the handshake. So when we reach that point, the attacker is just forwarding all messages from the client. So the property of SSL is maintained in that the attacker is completely outside of the data. The attacker cannot impersonate the client, a client certificate is used, the attacker cannot see what the client is sending. However, from the server point of view, what

was sent before that second handshake, the only handshake from the client point of view but the second handshake from the server's, should morally also be authenticated with the client certificate, and it will honor the first request, and that's the problem. The conceptual problem here is that the authentication in the second handshake normally applies to whatever happens after the handshake, but the server wants to apply it to data which was received before the handshake. In the SSL specification, it is said that you can do a new handshake, but it is never specified what that new handshake is supposed to provide as a property, and it's unclear. This attack shows that it does not provide an authentication that works backwards in time. But it was fixed with a

specific extension, which is a way to tag each handshake: this is the first one, or this is a new one which relates to that previous handshake, and so on, and it prevents the attack explained before. So it's implemented in BearSSL, and if that extension is not present, that is, if the client or server on the other side does not support the extension, BearSSL will simply refuse to do a renegotiation. Support for that extension is rather common in existing web servers and others because SSL Labs is giving an F mark if you don't have it, and managers everywhere, they really don't want an F mark in SSL Labs. So you can count on that extension being present if it's supported

at all. Another solution is to simply never renegotiate and to reject all attempts at renegotiation, and it's also supported. And in TLS 1.3 they decided that there should be no renegotiation. So for the pop-up with the certificate and so on, they added something new at the end of the handshake where the server can say, "Okay, we just did a handshake, there's no application data for now, but I need a certificate," and so on. Another kind of attack which happened is a problem with Diffie-Hellman parameters. That is, if we're trying to do a Diffie-Hellman with ephemeral keys, the server is choosing the parameters, which is the group in which the Diffie-Hellman will take place. So there's a prime modulus, there's a generator and so on, and the client cannot know

whether what the server is sending is correct or not. It could be a non-prime value. It happened. Socat, a tool which was using OpenSSL, had a prime modulus which was not prime. We don't know why. It's weird. But the point is the client cannot validate the Diffie-Hellman parameters. And the client could also try to work outside of the normal specification of Diffie-Hellman. For instance, if you're doing it on elliptic curves, it could send points which are not on the curve, but actually on other, smaller curves, and learn secrets from that. So, attacks have been devised on that. So I chose a rather radical solution: no Diffie-Hellman, only elliptic curve Diffie-Hellman on specific curves where I know that everything is right because that curve has a prime order

and so on and is well vetted. I'm not reusing my ephemeral secrets, because whenever you reuse an ephemeral secret, you keep it in RAM for say one or two minutes just to save on CPU. This means that whatever leaks of that secret may impact other connections. So I mean the key to be ephemeral, I drop it immediately, and I validate the curve points. Recently there was a JSON Web Token library which was using Diffie-Hellman, it's not SSL, but it was using an elliptic curve Diffie-Hellman and it was not validating curve points, and then it got broken, and they claimed that they did not do it because they did not want to pay the overhead for doing the validation. It's 0.5%,

I measured it. It's not expensive. You just have to, it's simple to do. So there's no excuse not to do it. This diagram shows one context which are the

It's a new concept which was discovered around 2008-2010: people were doing SSL, and they discovered that they were doing SSL with web browsers. And web browsers are a nice thing for attackers because they open possibilities of chosen plaintext attacks. So here the victim is sitting somewhere in a Starbucks or whatever equivalent, and he's sipping on his double matcha latte and he's trying to connect to the internet because it's free Wi-Fi, but he connects through an access point which is maintained by the attacker. So at that point the attacker can do some man-in-the-middle things, that is, it can relay all data. Moreover, it can inject some hostile things in anything

which is HTML and which has not gone over SSL. At that point, the attacker controls the traffic and it has its own hostile code running in the context of the client browser. So there are several attacks which use that model, in which the hostile JavaScript is going to send GET requests over HTTPS to a target server, say a bank server and so on, and what the attacker wants is a cookie. That is, the hostile JavaScript cannot make POST requests because of the same-origin policy and so on, but it can trigger requests that contain attacker-controlled data, such as a path, and a secret that the attacker wants to learn. So this is a context for what cryptographers call chosen plaintext, in which the attacker gets his own data

to be encrypted with a secret key and tries to learn things on that key or on other pieces of data encrypted with that key. And in SSL, encryption before TLS 1.2 goes that way. It's called CBC, which is cipher block chaining, a mode for encryption. And there is an integrity control, and it's known that SSL does things in the wrong way. It does things as explained here. Plaintext is the data which is sent in the record. HMAC is an integrity check with a key, which is computed over the plaintext. Then there are some padding bytes which are added. The last byte tells how many padding bytes were added so that the total length is a multiple of the block length for the block

cipher which is used. Here it's AES, and AES blocks are 16 bytes each. And then encryption is done by splitting all this MACed and padded data into blocks of 16 bytes. And each block is first XORed with the encrypted previous block and then it goes in the block cipher. This XOR is done in order to somehow randomize the data because, since encryption is deterministic, if you get twice the same input block, you get twice the same output, and it shows, and normal data, everyday data, has repetitions, has repeated blocks, and we don't want to show that, so this CBC mode tries to do some randomization. So I should speed up considerably. Sorry. So an attack which was named POODLE, because now attacks have names and they have

websites and logos and so on, so that one was called POODLE, and it's an unfixable problem in SSL 3, which was that in SSL 3 the padding bytes which are here don't have specific values, you can put random things. So if the attacker arranges for the plaintext that it sends to have a length such that the padding will be a full block, that is, 16 bytes, then upon decryption the only thing that the receiving party will check is that the last byte has value 15, meaning there are 15 other bytes. So the attacker, from his vantage point at the access point, can replace the last block with another encrypted block, and if the server does not mind, if the connection keeps on, then

this means that the last byte was 15, and the attacker just learned one byte of information on the decrypted version of an encrypted block. For instance, a block which was pulled from elsewhere in the stream, where there is a cookie byte. And that way the attacker can learn several bytes. And it's a problem which is unfixable, so don't use SSL 3, just use TLS. And that's exactly what I'm doing. So there are other errors, other problems, with padding oracles, which were also raised back in 2008, but actually it's an older attack. And it's still about whether the padding is correct or not: trying to talk to a client or server, sending some data with a potentially correct padding or not, and seeing whether the client or

server is happy with that or not. And it could be detected initially with an explicit error message, which was "bad padding", different from "bad MAC". And then this was fixed by using the same error message, and then it was done with timing, that is, trying to work out whether the padding was wrong: supposedly the server would then not recompute the MAC, so it would respond a bit faster, like one millisecond faster, and this could be detected. And then there were some variants on what actually was the position and the length of the decrypted padding, because it could impact, down to microseconds, the time to compute the HMAC and so on. So if you want to do everything right, you have to do everything constant time, always recompute

the MAC with always the same sequence of operations, regardless of the padding length. So there's some code which is relatively barbaric, and I don't have time to delve into it, but this one is extracting the MAC from the position where it should be, depending on the padding length, without letting the attacker measure that padding length. So I can't just say, okay, take this byte to this byte, because to do that, I have to recover the padding length, which is the information that the attacker is interested in. So I have to examine all possible bytes and do some multiplexing and these games with shifts and all, just to get the value. Another attack, which was a chosen plaintext attack, which was in 2010, it

was called BEAST, and that one was on the start of the record, because the start of the record is using, as previous block for the CBC chaining, the last block of the previous record, so it was known to the attacker. So the attacker was using some XOR-based ninja tricks to put the right value and test hypotheses, and it's always testing hypotheses on an encrypted block: if the hypothesis is true, then it reveals one cookie byte, and you start with the next, and so on. So there are some protections. One of them is to use TLS 1.1, because then there is a per-record random IV, and when BearSSL has to use TLS 1.0 it will split records, that is, whenever a record is

to be sent, it actually sends two. The first one contains only one byte of data, because then the MAC will serve as randomization for the IV for the next one. And it works, that is, it prevents the attack. It's certainly not comfortable, and we really should not use TLS 1.0, but if you want to use TLS 1.0, then it's possible to use it correctly, if the developer of the library was aware of the attack and did what I do. Another one, probably that... I'll have to... Okay, that attack is the most fundamental of them because it's unavoidable. It was about the use of compression, which was in SSL. Client and server could agree on compressing data with Zlib. And

the problem with compression is that it will change the length of the data based on the contents. And encryption is good at hiding the contents but not the length. So necessarily data leaks that way. And there is only one solution, which is: do not compress. Deal with it. Okay, that one is about using a block cipher with small blocks, because when you encrypt data in CBC and your blocks are too small, you can run into trouble after some hundreds of gigabytes of data, and that's exactly why AES was designed with blocks of 16 bytes and not of eight bytes like triple-DES. So don't use triple-DES if you can avoid it, and so on. So it's not very practical, because we're still talking hundreds

of gigabytes and your code will not send hundreds of gigabytes, but anyway. And don't use weak crypto, because weak crypto is weak, and it always surprises people, but no, really, it's weak. So don't do that, and I don't support it, and everything is fine. So there is a strong emphasis in my crypto code on constant time cryptography, which is about not leaking secrets through the timing of operations. This can be done if you design all algorithms, all implementations, instruction by instruction, and you know exactly what will happen from your C code, how it will be translated to assembly opcodes, and how they will be executed by the processor. So the issue is that it's a

side channel attack. Side channels are everything which happens outside of the abstract model of computation. And timing attacks are the specific side channel attacks which can be exploited remotely. That is, if you try to exploit the noise that the computer makes, or the electric consumption, how much power it is drawing, these are local attacks, you need to be within a small distance of the attacked device. But the timing attack can be done from the other side of the world, so it probably matters. It was never spotted in the wild: to my knowledge, no attacker ever used for profit an attack on non-constant-time cryptography. But the consensus is that it matters, so I wrote constant time code. I wrote a constant

time RSA, so as not to use that specific method which is called masking and which was all the rage about 15 years ago, which tries to do algebraic manipulations so that any leak related to the secret key is somehow blinded. Instead, my RSA code is always doing the same operations and accessing the same bytes at the same time, regardless of their contents. So, more code. This one is doing a window-based optimization on modular exponentiation. So it's about making a bunch of squarings and then multiplying by one specific value extracted from a table. And which value depends on the secret key bits. So it's extracting all values, but it's using some ANDs to just select the right one, but all array elements

will be hit, always in the same sequence, so this operation won't leak information on exactly what array element I'm interested in. And then it's used here: so we have the squarings, with a Montgomery multiplication, and then there's always one multiplication with the value as extracted, and this bit is where I forget it in case the secret key bits were actually zero, in which case I shouldn't have done that multiplication, but I must do it, because I don't want to leak the information that the key bits were zero, so I must do the multiplication, then not keep it.
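The two tricks just described (read every table entry and keep only the wanted one, then always multiply and conditionally discard the result) can be sketched in C roughly as follows. This is a minimal illustration, not the code from the slides: the names `ct_eq_mask`, `ct_lookup` and `ct_ccopy` are made up for this example, and it assumes the compiler does not turn the masking back into branches, which should be checked on the generated assembly.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: 0xFFFFFFFF if a == b, 0 otherwise, with no branch. */
static uint32_t ct_eq_mask(uint32_t a, uint32_t b)
{
    uint32_t d = a ^ b;                            /* zero iff a == b */
    uint32_t nz = (d | (uint32_t)(0u - d)) >> 31;  /* 1 iff d != 0 */
    return nz - 1u;                                /* 0 -> all ones, 1 -> 0 */
}

/*
 * Copy table entry number secret_idx into out. Every entry is read,
 * always in the same order, so the memory access pattern does not
 * depend on secret_idx. Entry count and size are public values.
 */
static void ct_lookup(uint32_t *out, const uint32_t *table,
                      size_t num_entries, size_t words_per_entry,
                      uint32_t secret_idx)
{
    assert(num_entries > 0 && words_per_entry > 0);
    for (size_t w = 0; w < words_per_entry; w++) {
        out[w] = 0;
    }
    for (size_t i = 0; i < num_entries; i++) {
        uint32_t m = ct_eq_mask((uint32_t)i, secret_idx);
        for (size_t w = 0; w < words_per_entry; w++) {
            out[w] |= m & table[i * words_per_entry + w];
        }
    }
}

/*
 * Conditional copy: if ctl is 1, dst receives src; if ctl is 0, dst is
 * left unchanged. Both cases execute the same instructions. This is the
 * "do the multiplication, then not keep it" step: the product is always
 * computed into a temporary, and ctl decides whether it is kept.
 */
static void ct_ccopy(uint32_t ctl, uint32_t *dst, const uint32_t *src,
                     size_t len)
{
    uint32_t m = 0u - ctl;   /* 1 -> all ones, 0 -> 0 */
    for (size_t w = 0; w < len; w++) {
        dst[w] ^= m & (dst[w] ^ src[w]);
    }
}
```

In a windowed exponentiation, `secret_idx` would be the current window of key bits, `ct_lookup` would fetch the matching precomputed power, and `ct_ccopy` would be called with `ctl` set to 0 when the window is zero, so that the extra multiplication is performed but its result is dropped.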

There are a lot of things on caches, because there are nice lab demonstrations that allow recovery of, say, an AES key or an RSA key or an elliptic curve key from another process on the same machine, or even from another virtual machine which runs on the same hardware as the target system. So it's a cloud attack in which the attacker just rents some machines on the same provider and extracts your secrets. So it's all about accessing data at addresses which depend on secret data. Don't do that. And to not do that, well, you can try to access all cache lines and so on, but really, to do some true constant time, you must have no secret-dependent memory accesses

which means also no secret-dependent conditional jumps. If you do a conditional jump, it must be on, say, the length of the data, which is not secret, but not on the contents. So there's an arcane technique which is called bitslicing, an orthogonalization of data, which was discovered by several people at several times, and in cryptography by Biham in 1997. And it's about representing data in not the way you should do it. So I've got an example. Suppose you have an algorithm where you want to XOR two 6-bit values and then rotate left by 1 bit. On the left, that's how you usually do it. You have two values, you compute a XOR, then you do a rotate. The bitslice method is about thinking, okay, we'll

do a circuit with transistors and so on. So I have six bits for each input, so I have six XORs. And I'll store only one bit in every variable. So now the rotation is only a matter of routing the data, that is, routing: here, you see, the Z values are not zero to five, but one, two, three, four, five, zero. It's a rotation. So the rotation costs nothing. And the real trick here is that, suppose that I have 64-bit values, then I'm not doing one XOR, I'm doing 64 parallel instances. So I'm using parallel computation, and parallel computation is much faster than my talk. So it's something for which really you have to lie on your side, and it will break your brain. But it allows making

really fast and constant time code. Really fast as long as you can do parallel things and you have a lot of RAM and you have a lot of registers, so it has disadvantages. And I still use it in BearSSL with some mixed strategies, and you can see the implementation. There are comments, but it's not an easy read. But I try to be thorough in my implementation. And there are still problems with multiplication and some opcodes which are not constant time at the hardware level. And I've got a whole web page on that. So I have to cut off here because I don't have time anymore. So that was all about... I mean, I was not content with writing my own library, I had to invent a new

programming language for that. So I implemented something which looks like this. It's a kind of Forth, just so that I could run the handshake in its own code, within its own context, that I can interrupt, so that I can stream data without using callbacks or threads, because I don't have callbacks, I don't have threads, I cannot block things. The whole library operates as a state machine in which you push data from the outside or from the inside and then it says, "Okay, I've got some data back, I've decrypted this, you can pull it." So it's meant exactly for better integration in hardware. And I got emails from somebody who was doing exactly that and who thanked me for that. Because he's doing it with some serial line

and he has several things to do at the same time in his hardware, but he doesn't have threads, he doesn't have an operating system. So it works, but you have to understand that. And of course, so there's C and Forth, and the compiler is done in C# because it's an extra language. I just wanted more languages. Okay, so I won't talk of certificates because they are the work of the devil. And I still support some minimal certificate validation with support and I extract names and I do a Unicode normalization and so on, and there are a lot of nifty things. And you have to master that. Okay, certificates, don't do that at home. And SSL

sucks because it uses too much RAM and there are large buffers and so on. And there are things that should be removed, and TLS 1.3 is probably not the solution because it's using more buffers. And that's exactly not what you should do for embedded systems. So probably SSL is going to fork, with one for web browsers and one for small systems. And TLS 1.2 is there to stay. Okay, and I should have asked for two hours. Okay, no question? Don't have time for questions anyway? Okay, but you can follow the red shirt, I'm here all day.
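The bitslicing example from the talk (XOR two 6-bit values, then rotate left by 1 bit) can be sketched in C as below. This is only an illustration of the idea, not BearSSL code: all function names are invented for this example, and the packing helpers exist only to move data into and out of the sliced representation.

```c
#include <stdint.h>

#define NBITS 6   /* width of each logical value, as in the talk's example */

/* Conventional version: one 6-bit value per variable. */
static uint8_t xor_rotl1_plain(uint8_t x, uint8_t y)
{
    uint8_t z = (uint8_t)((x ^ y) & 0x3F);
    return (uint8_t)(((z << 1) | (z >> 5)) & 0x3F);
}

/*
 * Bitsliced version: x[i] holds bit i of 64 independent values, one
 * value per bit position ("lane") of the 64-bit word. Six XORs process
 * all 64 instances at once, and the rotation is only a renaming of the
 * output index: input bit i lands in output bit (i + 1) mod 6.
 * z must not alias x or y.
 */
static void xor_rotl1_sliced(uint64_t z[NBITS], const uint64_t x[NBITS],
                             const uint64_t y[NBITS])
{
    for (int i = 0; i < NBITS; i++) {
        z[(i + 1) % NBITS] = x[i] ^ y[i];
    }
}

/* Pack 64 ordinary 6-bit values into the sliced representation. */
static void bs_pack(uint64_t out[NBITS], const uint8_t vals[64])
{
    for (int i = 0; i < NBITS; i++) {
        out[i] = 0;
    }
    for (int lane = 0; lane < 64; lane++) {
        for (int i = 0; i < NBITS; i++) {
            out[i] |= (uint64_t)((vals[lane] >> i) & 1u) << lane;
        }
    }
}

/* Extract the 6-bit value stored in one lane of the sliced representation. */
static uint8_t bs_unpack_lane(const uint64_t in[NBITS], int lane)
{
    uint8_t v = 0;
    for (int i = 0; i < NBITS; i++) {
        v |= (uint8_t)(((in[i] >> lane) & 1u) << i);
    }
    return v;
}
```

The sliced function contains no shift at all, which is the sense in which the rotation costs nothing; the price is the packing and unpacking, and the need to have 64 independent inputs available at the same time.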