Security and Ops in Startups

Show transcript [en]

[Music] yep all right we're good okay so let me introduce myself first my name is Daniel Jeffrey I work for the Linux Foundation I've gravitated to regulated environments I guess I've worked in the financial sector in banks I've worked in retail PCI environments I've had to build FedRAMP and FISMA environments and yeah I see I'm with you and then more recently I've had experience dealing with web trust and some of the regulations that circulate they're so regulated environments have been the place that I've wandered and I've done that both in startups and then in well-established companies and as I was preparing this talk this actually grew out of a talk that I gave last fall and

I thought you know what there's a lot that regulation requires that really is just being smart and when it comes to startups we can apply many of those things without adding a significant and onerous overhead I think most of the time when people think about adding security to their startup it sounds like oh no we're going to make things really heavy and hard and slow and so this is actually talking about some of the things that may sound scary that I think we can implement in a more reasonable way so to get us started we're going to wake up there we go so we're going to use a hypothetical startup and founder to illustrate some what we're talking

about we'll give them some parameters and then we'll make some decisions about how they're going to result how they're going to implement some of the things we got so being as we're in Utah our startup come on there we go senator hatch at least as a constituent of hatch I get regular emails explaining how he has done everything you could ever want in order to help technology so we're going to have senator hatch start up cyber as a service he's got a cyber API so that everybody can get their cyber and that's going to be our startup that we're going to work with here so I said a little bit of this already but the

security processes that are implemented in many of the things that are implemented in most regulatory schemes are actually just common sense they're best practice things that you ought to be doing anyway and one of the things that startups have to realize I've had many startups tell me or even even a bank that I worked with at one point oh we're not really at risk because we're super small right and as security people I think most of the people in this room are aware that that's ridiculous most attacks are opportunistic you have sure the large targeted attacks that get a lot of press but most of the attacking that happens is somebody who knows how to do this one thing or a couple of

tricks that they've got and they look for people that are vulnerable and when they find a target they go in so tax or opportunistic size doesn't matter if you're on the web you're accessible so and you know if you lose that trust especially as a start-up where you're struggling against others it can be very difficult to recover and adding security later doing the Oh we'll get to that when we're bigger is a huge expense in most cases if you take the time to think about your security and your infrastructure in the first place you can save yourself a tremendous amount of time money and pain later because often there's significant overhaul required once you've come to rely on it so today

for our hypothetical startup hatches cyber security as a service we're going to talk about four different categories that I've drawn from regs there are many other things that we could look at but we're going to talk about system patching frequency and automation when you're talking about monitoring and centralized logging specifically from a security standpoint and we're going to talk about configuration management code review and change control and we're going to talk about step based of duties we're going to talk a little bit about why we want to do these things why it's worth spending a little bit of your very precious startup capital and human resources to put these things in place and we're also going to

I'm going to give a few suggestions on ways that we can achieve those things now I'm standing up here I'm the one with the mic on but especially as we get to the suggestions on how we can do these things I would be very open to having members of the audience who have experience with that raise a hand and say hey here's another way we've done that or hey here's something else I've seen done that can help achieve this goal so think of this as interactive we can do to anyone a at the end but if you guys have something you want to pitch in the intention of this is let's learn from it so I think we all know that no

matter how long you've been in this industry there's somebody else that knows a trick you don't so and I am by no means the savant at the top of the ladder so the overview of our little company that we're dealing with here we have our cyber API that must be available 24 hours a day seven days a week it's relied on by other businesses so that they have adequate cyber in order to service their customers hatch has in his company currently for developers for operations staff the operations staff is also functioning as the security staff at this point that role is crossing between operations and and developers we want to make sure the developers are

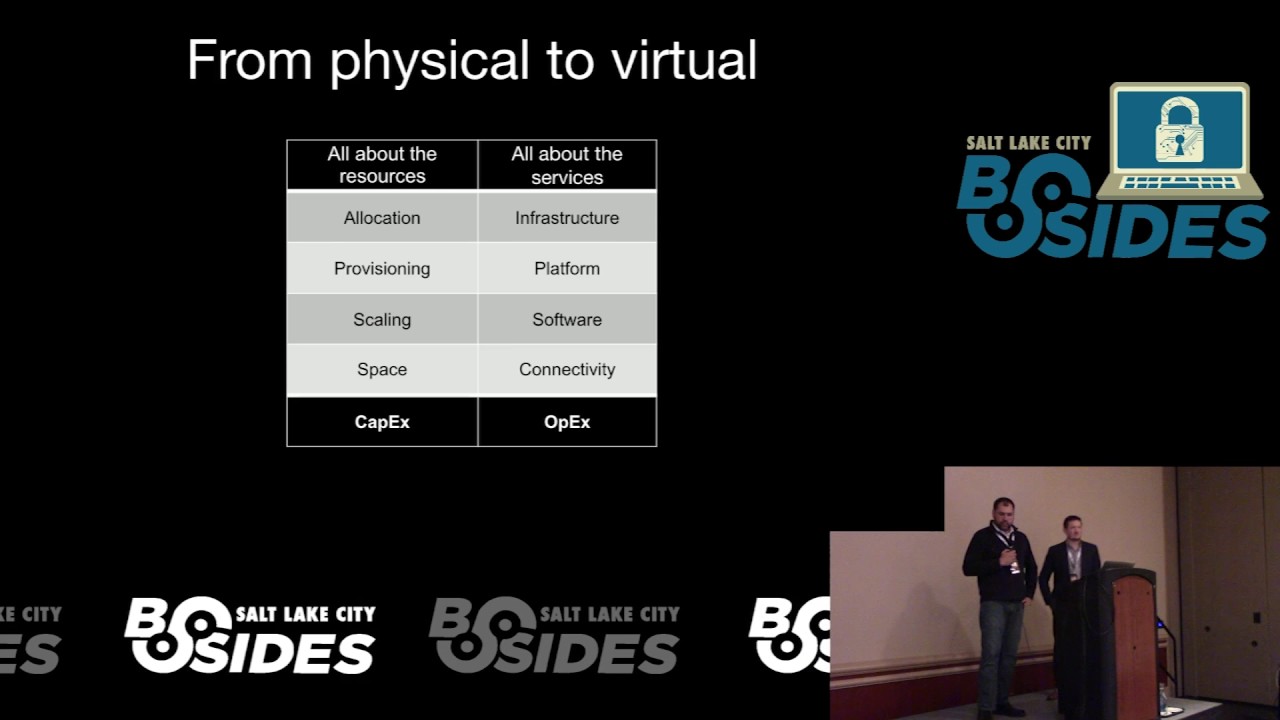

also security conscious and then we have five marketing and sales staff that we try and keep on the other side of the room and then three people doing finance and HR jobs so you know we're really really small lean trying to get our feet on the ground take off with this company at this point we're going to assume that hatch is going fully modern and they're not doing anything with hardware in a DC so we will talk a little bit about dealing with hardware issues but we're going to assume cloud so the ops context as we move on to talking about patching now patching Dredd is a very real thing among operations people patching Canon does

break production environments when you talk about patching to an ops person any multi-year ops veteran will have multiple stories to be able to tell to management about why they should not do this patching because bad things and they're their real stories it happened patching can totally destroy the environment if the wrong thing happens that when you dig into putting on the latest of whatever it is that somebody's deployed for you so the conclusion that many organizations I've worked with that many more that I've heard stories from come to is often you stock up on the red bull and then you patch once a year when hatch is going to be out of town so you

figure out you know when he's going to be taking that vacation in the mountains and you won't have his cell phone and you got 24 hours to fix whatever happens it's not an ideal solution right so we talked about patching frequently the reason to patch frequently is because patching is your simplest and most effective way to be protected from existing problems right there's always the threats that we don't know about yet but you can be protected from stuff we do know about if you patch now the we talked about I've used the term frequent patching and frequent patching is I think critical because the more often you do it the less likely you are to run

into the hypothetical nuclear wasteland patch scenario where everything blew up that can still happen I mean I have from my own experience I remember when we patched I was in an environment there was a mix Linux Windows and we were running samba and we got a Samba patch that they the developers had realized that there was a serious escape from their sandbox area possible if symlinks works were enabled and so they just decided in that next release that they were going to add a new parameter and set it to and set symlinks to disabled by default so this entire service that the organization relied on their file system like half of people's files didn't work anymore after the

patch because they've been sim linked in order to not have lots of duplication of particular structures and now they were gone that was a fun thing to troubleshoot in the first place because you don't know what happened unless you have unless you read the patch notes for absolutely every single thing you upgraded which in a startup you got time for that your patching and you're trying to get on to the other thing you've got to do and so you've now got to figure out okay what happened I think this happened because of the patch we did last night but it's a challenge so frequency and doing it regularly though means that there was only the one problem to fix

there was this one thing that went weird instead of having 30 different things that are all accumulated over the six months to a year since I did my last patches okay so on the patching section this is the strategies component right these are ways that we these are some methods got a couple slides here talking about ways to approach patching the method that I was using in the scenario with Samba was we decided on a cadence that we were going to do it at and then we just did it periodically right with the just do it approach it it always feels like a time thing because you have to be engaged you've got to be there you

don't have you pulling you away from everything else you're doing so you jump in you do it it's going to get delayed because other stuffs going to be happening you're say I don't have time for that right now and when it eventually happens it won't be as frequent as you wanted it to be however the team that's doing the updating is going to be there because it's manual you're going to be eyes on when it happens and you have the opportunity at least to vet every item individually now it's not necessarily a dedicated part of this particular strategy but most of the time with this particular strategy testing and preparation kind of goes out the window

there will be less staging effort because they know they're going to be there so groups tends to default back to well I'll just do it and I'll watch it because they know they're going to be there anyway and it takes extra time if they do it beforehand the next strategy that I threw up here is a more modern strategy that I've seen a few places trying to implement it's ephemeral izing everything you identify all the instances that can be stateless design things to be a stateless as possible and then just get rid of them on a regular basis not update them make a new one so you put a new one in place and for this

to work well you got to make sure that you have that prod like environment to test them in before you put them out there or else you're going to have surprises you've got to make sure that well then you struggle with stateful components like databases or other pieces that some may design the software so that leaves bits and pieces of itself around on the OS and you have to retain those and roll them forward on to the next instance that becomes very complicated if it wasn't designed well in the first place I mean you talk about databases you've got a lot of different kinds of databases you got your authentication databases you've also got retention servers like log servers you

can't just you there are ways to do with log servers but you still have to have the data stay somewhere and as to tolerate that update without disappearing this type of a solution is most feasible in a cloud environment definitely a virtualization environment I have known people that have done it on hardware and I have a tremendous amount of respect for the level of effort and thought that went into making an ephemeral type set up work on hardware but the redundancy that you have here does allow you a clean cut over because you're bringing up completely new things and you can cut over and still have the old thing to cut back to if it fails so again if anybody

has experience with some of these that you want to chime in on feel free ok so then another option now on most desktops nowadays you expect automated unattended updates it's just going to update itself and that's a good thing it's still gonna be frustrating at times when things break but on a desktop it's not as doesn't affect as many people unless it hits everybody's desktop at the same time and you've got to roll that back or fix it in a corporate environment but doing it on servers is scary and so but-but-but-but-but right doing it on servers is scary but if you do it you have a guarantee that hey we can keep things up to date and it can happen

on an automated schedule so how do you do it safely how do you do automated patching on servers safely one strategy that I've seen done and have observed and had really good experience with personally was actually having a truly production like staging environment right down to the hardware and oil if it's hardware if it's a cloud environment it's even easier but have something that really really honestly replicates production and hasn't had people fiddling around and making manual changes it has to be forced to maintain the same configuration as production and manual changes get over it on a regular basis if there are any for testing purposes so this staging environment is not a development environment

it's an environment where you may have to test things that are coming from developments to make sure they're really ready for production because the development environment isn't truly production like close as you can but it doesn't have the same hardware investment the staging environment needs to be production light and clean if you have an environment like that then you can trust if I do updates here and I'm monitoring it like I do my production environment and I give it some period of time and I'm hitting it with traffic like I do my production environment then I can feel a reasonable degree of confidence that after a few days running there I can roll those same patches into

my production environment and I'm not going to see it break it so you can put it on an automated schedule with monitoring in place that says here it's running it's looking good I didn't have problems so when it hits here I can feel comfortable I'm also not going to have problems and it can happen on a regular cadence say once a week you update staging the three days later you update your production environment and then you pull it out more patches update staging again a few days later you update your production environment that's the method that I would push people toward and if you can do it in conjunction with an in federal method I think the ephemeral

method is the most awesome because you get somebody with an apt that they got onto your system somebody who's got themselves a nice little backdoor where their excellent rating whatever they want but you blow that box away and bring a new one up it doesn't matter so ephemeral where you can but you can't is really hard to ephemeral eyes databases yeah

[Music]

right

all right

right yeah well and and containers are you know really the easiest way to achieve ephemeralization and you can absolutely and that's one of the reasons I didn't mention containers yet but containers are the easiest way containers have their own sets of problems that are definitely improving but the last time we did a major container evaluation we realized that we had serious issues achieving the kinds of firewall and logging capabilities we wanted and those have improved significantly since then and continues continue to improve but you have to evaluate whether or not this gives you everything you want with the container but for ephemeralization purposes containers are great you can prep up a new one that has

everything you want loaded on it and just bring it online and blow the old one away once you're comfortable with it containers are good that way so I appreciate you bringing that up and the segregation that's possible is also really nice all right so let's look at so the last point I wanted to make here does anybody have any other strategies I think I missed that would be good ones to bring up in talking about patch management okay the last thing then is you want to design the software so we in this case we are you we can talk about the infrastructure and we can talk about the software since we are a Web API is a

service basically in our little hypothetical company here we're going to say that hatches development team and his operations team need to work together to design software that is that is built with the intention of being patched cleanly and regularly that's more and more common nowadays as developers want to move toward continuous integration and be all kinds of agile and fast but it's something that's critical to think about where the rest of the infrastructure is concerned as well you're an dad from the get-go okay so patching hardware patching hardware sucks I mean I don't think that there is if anybody wants to disagree with me I'd love to hear what you're doing but patching hardware sucks in my experience

there are solutions that are available for usually they're more expensive enterprise oriented products that allow you to do some kind of synchronization across that vendors particular suite of hardware products to maintain patches but those solutions are really hard to justify in a start-up environment they are onerous expensive and require somebody who's spending a fair amount of time maintaining them a lot of times some of them aren't some of them are pretty slick but they're still expensive so with hardware it really goes back to the just do it option is about all there is and you've got to get yourself a cadence and you've got to commit to doing it the other thing you have to do

even more so with the hardware is make sure you're staying on top of and you're watching alerts you need them with the software as well if you've got a really regular cadence that's like a week you're not going to be as stressed about new vulnerabilities that come out with software because you'll be remediating them about as quick as they come out with the patches to remediate and whereas with hardware because you're going to be on a slower cadence you've got to be aware that oh something critical happened and make sure that you're subscribed and watching what is coming in for the specific components that you run rancid is a tool that I like I don't know if all of you heard of

it or not but rants is the tool that helps with keeping track of what's going on with logs especially on network devices not logs but configurations and it helps you to track for changes and modifications that might be being made there and have a backup of them so that when you do that update and it hoses stuff you have an ability to say ok this is what my config was before the update and see how things differ or what you can do to fix it I didn't get any takers on the I've got a magical solution for patching hardware did I hey I'm open to anything if you've got some piece of hardware that you

found is easy to patch tell us about it

so you're talking about patching what specifically okay so with UC with UCS you're using Cisco's you've set up their management components somewhere in the environment in order to talk to each of those boxes how well do using that scales for a 20-person company the level of investment to get to that point I haven't done it I have not had a lot of experience with UCS so I'm asking you right so there isn't a I've never looked at the UCS management tool there's an expense for that as well then right okay but it is a slick tool I've seen several others I've used somewhat Dell Hardware I've used some of the HP hardware as well and there are ways to do it that

are they've designed but they have cost and there's both in terms of money to buy it and then in terms of time to set it up and maintain it it makes sense as you get to scale as you get to hundreds of units it's a great idea okay so just to retouch automation briefly automation is I need to speed up a little bit okay automation is how we fix human error that's why we do automation and is and we need to think about that in terms of patching if patching is all manual then every time we try and patch we're looking at humans to screw something up the better way to do it is to script it

fix our scripts and we get consistent results over and over again we put a process in place we obey the process and then we have something we can fix six Joe decided to do patches this week and he did it different than last time and so we got different results the other thing we'll talk a little bit more with monitoring but automated testing so that we have consistent repeatability of does my stuff work when I'm done with patching so let's talk about our sample company really briefly so what they did here is they decide we're going to build a full production parallel staging environment we're going to generate load against it we've got--we've as part of

the development of our API we've decided we've developed a load generation tool as well so that we can make sure we hit it just like we expect customers to that tool is going to take tuning over time as you observe the customers don't use it the way you thought they would and that they come up with creative ways of doing really things that you thought nobody would be stupid enough to do with your API and so you've got to adapt and make sure that you generate those cases as well at least on some schedule to make sure that the thing still works the way you expect it that's good just for testing the software but it test is your

up it tests your updates the environment as well did the include things like checking that your DNS service and your and your authentication service are still working as well after the update things that normally wouldn't get checked until one of you logged in and realized oh I can't do what I was supposed to do identify what services you offer in your environment and check those services so they've done that and they said all right we're also going to automate our patching so that we do staging and then we deployed a prod because I think that's cool so that's what I had them do and then they're using the clouds so they don't have hardware to patch but

they're providing a redundant service across multiple locations and as this gives them the opportunity since they're in a couple of different cloud locations to bring one up and then bring up another so they can rotate through their patches so that their service is never down because they're distributed across multiple sites and then they put monitoring in place to make sure that they're testing which they're doing continuously rather than even just after their patching they'll get alerts on it and go oh yeah we have a problem and we can fix it so again if we build in patching planning from day one you get used to it it's normal it's not painful because you've got a pattern

and you've got a way to deal with it so when we talked about monitoring and centralized logging most of the time some kind of service performance monitoring is getting placed pretty quick because the developers and the management want to know is my stuff working is it doing what I expect it to do how much volume are we getting through did we sell all those new contracts and look at all the people that are using the service those type of metrics usually get in plates pretty quick the metrics that we need for security are often neglected and those metrics are not that hard they're put in alongside at the same time if we plan on centralized logging is overlooked in a

shocking number of cases centralized logging to me seems like a complete no-brainer but the number of companies that I've worked at or with that still had all the logs just sitting on individual servers instead of pulling them in somewhere where they were easy to access easy to scrape easier to learn on it's crazy to me and the maxim here is again we want to prevent we want to configure our stuff for defense-in-depth and stop the things from getting attacked but we got to make sure we can see it when it happens

so it's centralized logging if you plan for it from the beginning you it's easier for you to say in the first place when I'm spinning up these boxes I want them to log to here in here and these are the different kinds of information that goes to each location you do it while you're setting it up so that you can say right off the bat I'm getting logs from all these spots in each of these spots I can say this has debug information in it so I need to turn the log down a little bit or I just have a syslog filter on it and say I want these type this level to go to this

log that I can then share with the developers and I want this level to go over to this log that I can make sure isn't shared unless we sanitize it and then think plan from the get-go in a startup environment you need collaboration you need a team that's working together the operations and the development and the management all need visibility and ability to collaborate and logs are a big part of that developers especially on their own software wrote those logs so they can figure out what it's doing and in order to get that information you want to make sure that you've abrogated it in a way that they can get access to it and collaborate with you oh one other from a

security standpoint by centralizing the logs they're harder for an attacker to get to there are ways that they can alter but you can also identify on non reception hey we're not getting logs for my house because somebody shut them off and I realized that's the problem we need to look at but you can only do that if you're pulling them together to a central point so here's a brief list I've honestly put this slide in because I'm gonna stick the slides up on sched after the talk and if anybody wants it here's a list of what to monitor there are a lot of other lists that you can find with a little bit of googling but

you want to be aware of the usage of privileges and taking steps that could lead to failures that seem like things that you are not expecting so let's talk about our yeah okay so let's talk about our hypothetical cyber as a service company that Senator hatch is providing in this case they realized that some of the data that we're getting some of the data that we gather could be considered confidential and in some of the settings maybe they're logging the tokens that were used to authenticate to the API and they just said you know actually we're just not going to log that anymore except in a critical debug case we'll drop that but this other data

that's specific to the company we shouldn't be giving to the developers so we're going to put that in here in a separate log that only operations can get to then we've got a log over here that has all of the data of how things are flowing what's happening and we can make that available to the developers and they are running modern linux OSS so they had to deal with system D and pipe it into something else system D doesnot ascend stuff off box and in this case they decide you syslog-ng and pipe it into an elk stack and then in their elk stack they've got Gravano sitting on top of it so they can get some nicer graphs and they can pull

in and they can do alerting off of what they're finding in L they also set it up in Nice elated Network segment because the nice thing about using something like your fauna is the front end is they can have all the logging happen in an area that operations has access to is a network segment ops has access to it is secure and then they can have a separate network segment for the stuff that they share with the devs that they can give them access to and so the devs configure fauna and then get access to the logs they're interested in but they can't get access to ELQ on the backend without figuring out a way to break out of the

network segment you put them in I didn't dig into that in this talk but one of the critical components of designing the infrastructure is in designing the network so that it is segregated properly and that should go right into the design of the components of the API service itself and then you want to instrument the API services the developers are developing it so they can go straight to a tool like Prometheus and then that can be displayed by Gore fauna the Prometheus can pull the stats that it exposes on the Box gather them together and then the devs have full access to really seeing what the operation environment the operating production environment is doing while

things are in flight and so they hatches company said we're going to run with this we're going to dig in and they put all these steps in place at the end of this process they actually have I mean if you look at what's written out here there are security components to this but to a significant degree this is just really useful for getting business done for understanding how their software is working and how they can make it better and that that's one of the things that I wanted to underscore is doing this right is good for the company it's going to put you in a better position to succeed and make progress so let's talk a little

bit about configuration management well is there anybody else - want to add anything on that last slide actually do you want to talk about monitors or logs any other components that we should that you think would be useful to everybody else here yeah

mmm-hmm it can absolutely and so the tool of the different tools that you've got so ingesting them into ELQ or even at the syslog level where you're aggregating it in the first place on the host where the web application is running you reg X is your friend I mean you start identifying you have some flags that are already in there of the information levels that the dev decided it needed whether it's an info a debug or whatever else type of parameter and you can use those to start to divide things up I want it I want debug logs here and I want warn logs here and I want crit logs over here and these are

going to be accessible in these walls but then you apply reg X is on top of that but then say here's where we pull out the things that you're looking for but they can be I mean one of the one of the services that I've worked with recently had the number of log entries that they were getting is on the order of about thirty five gigabytes no the main info log was hitting between 76 105 gigabytes a day on the central log server and that's I mean it's not the biggest thing there is out there but all of that is one service doing one thing and trying to parse through that we had to put in place okay so here's here's

what things should look like and there you have the dialogue back with the devs because that web app is your web app most likely and so you say all right guys we need to be able to find this in the logs just like you need to be able to find it once it's busy so they can add you know recognizable lines it'll be unique for the particular flags or problems or alerts or whatever else you might be looking for but yes

sure absolutely

[Music]

you

right

and one thing that's worth recognizing the reason we have to separate things is you've got logs and you've got statistics which are different things right we're using Prometheus and or together simple stat data of what happened how many things did you process whatever else that our numerical and really want graph stones and then you have the log data where it's giving more verbose information and you can generate graphs for graphs from it after you reg X out the right pieces that you want but it's going to be more verbose and it's intended more for troubleshooting and for identification exactly what's going on thank you this is what I wanted let's move on so let's talk about

configuration management code review and change control so most of the regs don't actually explicitly require that you use a configuration management system they do require uniformity though your auditors are going to come to you and say I want you to show me these ten machines and I want these particular configs and they expect them to all be the same or unique in intended ways and if they see things in the configs that they have you pull while they're sitting there watching over your shoulder that are inconsistent or show ad hoc behavior on the particular host that you're looking at then they that's the stuff they look for and then they go good let's see what we can do with that and

dig deeper any really you don't you audit should be boring best kind of audit is a boring on it because you know exciting audits are not a good so but configuration management is a great way to get there now you know 20 years ago we all rolled our own everybody wrote some bash scripts and said I'm going to have this happen on that server and this happened on that server but really nowadays you've got great configuration management options right here in Salt Lake we've got salt stack housed and then you've got ansible you got puppet you've got chef you can go back to cfengine there's options that allow you to have a framework where you can draw on the

community I mean open source is a great thing there's nobody in this room if you can prove me wrong on this statement I'm going to be I'm not going to believe you but there's nobody in this room that does not use open-source software on a regular basis and what we gain there is a community a synergy as we each put effort in and we develop something that we can all use and when it comes to configuration management all of those solutions have modules and formulas or recipes that have been developed by others for the for the problems you have and you have the ability to implement or improve them if you need them but it

lets you lay your system down the way you want it I'm digging a little deeper on this slide we'll keep moving so code review config manual code review is also helped by configuration management systems because you can do code review of what's going to happen on all the systems code review is something that has been established in development environments for a long time but code review and operational environment is a big deal because that allows us to have that same kind of consistency it allows us to have multiple eyes on it allows us to build an architecture that we all agreed was the right architecture we wanted to build and you're going to have a lot you get a higher level of effort

as people know that somebody else is going to look at the crap that they wrote they're going to try a little bit harder to make it somewhat less crappy and then you move forward to the last section of this or last piece of this section talking about change control change control is a word that I see many people who have worked in large environments people have been at the multinational massive bureaucracies of doom and you say change control and they're like no because it means nothing can get done I know I worked with the guy who told me yeah when I was working for this healthcare company what we would do is we'd kind of pile up several

tickets of little things we needed to get done on a server and then we wait for it to have an as soon as that server had a problem we get like 10 things done that we've been waiting for that we can slam through on the outage because change control sucked and so that means change controls wrong in that environment change control is a way of enabling us to get things done in a safe way and so if it's preventing us from getting things done we're doing it wrong so talk a little bit about how we can do change control in a start-up in a way that is still productive and helps us get stuff there we go so let's talk

briefly config management already talked a little bit about things like puppet chef saltstack ansible to framework there's modules available other people have already written for you it allows you to enforce consistency across the environment and to quickly change it one that one mistake that people often make is thinking of change control as a or not change control configuration management as a provisioning system it is useful for provisioning for getting something set up the way you want it in the first place but it is also useful for enforcement and it should be run regularly to make sure that that environment stays the way you told it to stay you're going to overwrite and change things that would be useful to an

attacker or someone else alter later it also makes it really simple to review changes and I would argue that containers still benefit from using configuration management inside the container because you have the ability to tweak and make changes inside the container without completely having to deploy a new container and you have the ability to enforce the container staying in a particular state if you're changing your containers really rapidly maybe there's an argument to be made you shouldn't use configuration management but I would argue that it's still worthwhile to have in there to connect back to the system and then at the beginning of a configuration management setup you should plan for separation secrets because sharing the

configuration management setup with your developers or it can be very helpful as they can see oh this is how the systems are set up in the production environment so they can plan for that as they're doing development so you can see how you're laying down the config files for the software program the they gave you because you can share the configuration management system with them and you have another set of eyes to be able to say oh you know what this isn't working quite the way we intended it to because they know their product and they can look at how you've configured it with the config management but in order to be able to do that you

have to plan from the get-go to separate secrets you need to plan so that the data that you don't they can't take out of the production environment the data that can't go to the people who don't have production access is easily separated and hopefully encrypted so if we talk about that in code review and then and change control environment code review in systems like Garrett github crucible bitbucket are all solutions that allow you to say hey we need this many people to look at it we need to approve it beforehand so that we know that we've all looked at it and you're able to that way enforce best practices and force the architectures you guys

have decided you want in your environment you make sure that nobody's going in there and using three spaces instead of four and that it looks pretty three spaces is just wrong but and it 1:10 just just tell you them to put spaces in a new tab so that way everything gets reviewed and formally approved and you have sign-off from multiple parties who all taken responsibility for what is to happen here then as you move code review to change control you're able to have a process where there is something for change control to review I've been in change control meetings where the person steps in and they're like yeah we're going to put this stuff on the server

are you guys okay with that that's it and there was no framework within the organization for it to be more detailed there was no way for to easily communicate what was actually to be done code review provides us with that and so then as we look at the code the change control also enforces the stages of progression of a new pieces of change so it hasn't been tested in the development environment does everything look good are you guys comfortable with what you're seeing as you review the actual code right now it's approved to go to stage 8 and in the staging environment it runs we have tests that we make sure we're validated we record those in the

ticket and we say okay the tests have been run it's successful do we have to make changes to it okay we had to make some changes as we review for the changes or we say all right we didn't mean any changes it works exactly the way it was we verified it we put the verifications in great we can approve it and move it on into production on some pre-approved time schedule but change control allows us both to do the initial approval and then to validate that process was followed and things move safely from one area to another it does not have to be brutal and onerous it does not require 10 people signing off from all branches of the company who

have nothing to do with it but are mad at you because you didn't deploy their thing first instead it can be a lean process that says yep we've looked at it yep we're safe hey everybody else checked it and checked off on it and we can move forward it can save us a tremendous amount of time in terms of downtime and production issues and reputation so at Hatch's cyber as a service company they decided to go with a change system attached really wanted to UCF engine because he heard about that back 15 years ago and he thought it was really cool but the team managed to convince him that you know what let's let's at least move as far forward as

puppet and we can use vault so our secrets can be stored encrypted and separate and then they hatch also you really want to use CVS but they managed to convince him to get was worth and then chose to use garret and then to play Artie internally one of the nice things about Garrett is that Garrett has really good enforcement of change control parameters and good logging of them then they define their process that process they came up with was you got to get one approval from another ops team member before you can deploy to staging somebody else has to read your code and then in the end the change can iterate in staging but if it changes in staging

it has to have a full review by another ops person before I can go to production if it doesn't have to change in staging then once it's been verified and passed the test we can move it on to production on the proof the proof time schedule in the original ticket so let's talk I've got three minutes left for separation of duties which is a bummer I'm going to do this really fast separation of duties is teamwork not isolation separation of duties should mean you do that job I do this job and we work together if separation of duties means I don't talk to you anymore because you're in that other job you're doing it wrong it

defines responsibilities cleanly so that people have tasks they're responsible for theirs ownership there's investment if and I mean the obvious reason separation produced reduces risk of insider actions whether it's malicious rushed or just incompetent and one thing to consider as you look at separation of duties operations is not the job for your worst developer you want people who do operations work it's different than development work and the two are complementary I'm bringing up the DevOps word really quick in that context because DevOps can be a description of the glue that holds separation of duties together its developers and operations working together and communicating well it is not some developer the the development team got pushed through and

given operations access so that you can do whatever he's want and bypass process and ignore change control and just get the things done the developers wanted to done done that the operations team was taking too long to do and it's not the operations guys saying hey we really wanted to write code cuz we want to be developers too and coming up with some really crappy Perl script and saying we're DevOps everybody has to work together everybody needs to have clearly defined roles and they collaborate which is what makes it DevOps it's a team working together to achieve good work as a team as my definition and there are plenty of people that will argue with me about it

you guys want to we'll say that one for after separation of duties in a Lean Startup so you want to share as much as possible I talked about this already but I'm harping on it again because I think it's so important logs config ticketing and the production like development environment all lead to a really good collaboration with the development team the more the development can see into the operations environment instead of operations trying to hunker down and hide because they're embarrassed or stressed or whatever the better that relationship can be but it is up to management as well to make sure that there is an equal relationship and the office guys aren't just getting treated like lap dogs that it should do

whatever the dev team told them to do it needs to be working together as equals peers and then planning from the beginning to make sure that secrets config that secrets are out of config management that the logs are shareable and the monitoring servers are shared everything's developed or fork in anything this is worth just a second anything that gets developed or fork in-house now belongs to the house it has to be maintained just like your web api just like whatever other components you are owning that you built you built that script you built that piece of code that you so badly needed in-house and usually that's a bad thing because then it sits and it atrophies

you need buy-in for management's to support it you need to be able to rely on the community push it upstream and I'm going to conclude on that time at a time so I'll put the slides up but my argument would be that by implementing these few things these four general categories that are common in regulated environments you can make your startup more stable and more successful as well as avoid a really embarrassing security ism that's it if anybody has a last question we don't really have any time so come up and talk to me