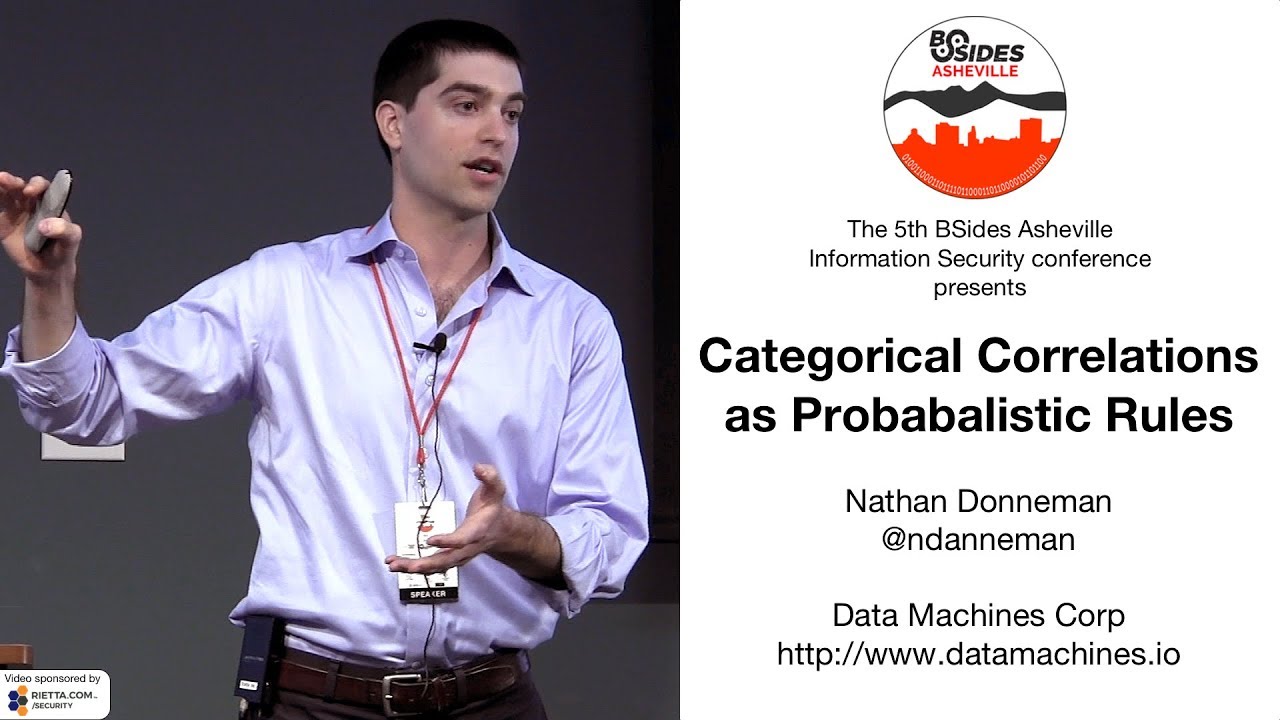

Categorical Correlations as Probabilistic Rules

Hi, I am Nathan Danneman. I'm going to talk a little bit about this thing that I'm calling Rule Breaker. Really it's just exploring categorical correlations as probabilistic rules. That'll make a lot more sense to you in a minute; if that makes you yawn, just hold tight for 30 seconds. Okay, a bunch of huge thanks: the BSides people and staff, especially Daniel, who helped me figure out what on earth I was going to be doing here. You guys are awesome, you lined up great speakers. Big thanks to the earlier ones; I'm going to stand on the shoulders of those giants, especially Tim, who made nine great points about why machine learning for InfoSec

is really, really hard. I'll be jumping off from that a lot. I'll also jump off from the first talk, about rules and inducing rules from indicators of compromise, and kind of marry those things a bit. I didn't realize that this thing was going to be ordered this way, but if this was the intention, great job. Big thanks to a company called Data Machines. I work for them. They're kind of a small outfit; they do kind of Cisco consulting, and they also stand up fancy private clouds for customers who need that kind of stuff. We're not a product company, so I'll be talking about this thing that I've given, like, a name to, but

you can't buy it from me, but I can give it away to you for free, in part because Uncle Sam helped fund part of it. Big thanks to the government for doing lots of high-end R&D in the cybersecurity space. It's mandatory that I have that gray stuff on the slide; there it is. Okay, so we're going to talk a little bit about big data and analytics for cyber defenders, and that's going to really riff on some of the things you've heard this morning already. I'll talk about this thing, Rule Breaker, which I like to say with, I don't know, some kind of bad Scandinavian accent, right, like "Rule Breaker." We'll talk about how it works and what it is.

I'll talk about how to try and make it security relevant, and its use cases, and I'll talk about next steps a little bit, because this is active research. This isn't a beautiful shrink-wrapped thing that I'm extraordinarily proud of, and again, it's not a product. You can't buy it from me; Data Machines doesn't sell anything except people's research time. So it's nice: I get to make fun of people who sell things, which was me as of a couple of years ago. Really brief background: I'm kind of an applied statistician, so perhaps much like Tim, who I think is no longer here and can't defend himself, which is perfect, I know enough, I guess, about

math-y stuff, and I've picked stuff up on the fly over the last four or five years, but networking, computers, cybersecurity: it's a really, really big space, so I broadly claim a lot of ignorance there. If I say something silly, just give me the benefit of the doubt, or come tell me later and we can dream up new use cases. At the end of this, what I will do, in brief, is show you how to code this thing for yourself. It's easy; even if you're not much of a coder, it's still pretty easy. So that's the goodie bag at the end of this. Hopefully that'll keep you engaged for, like, no

more than 30 minutes. Okay, I'm going to set up this kind of typology of ways we could do cyber defense that are data-driven, and then I'm going to break it, just for kicks, because breaking things is fun. One way you can do defensive cyber analysis is with rules. The idea here is: let's encode knowledge in a bunch of if-then statements. These are often known bad-actor TTPs, which I'm told stands for techniques, something, and practices, but you can also get these from IOC lists, as the first presenter talked about at a o'clock this morning, if you call it that. An example of tooling here is something like Snort, and

you get rules, hard-and-fast ones, that look like: if some IP and some port, or some other domain, then block it, report it, dump it, you know, stick it in a queue and never look at it, whatever you're going to do. These have some real pros, right? These things are understandable. When some jerk-face like me hands some real cybersecurity expert a possible issue and they say, "Well, what makes it an issue?", you can just say, "Look: if bad-guy domain, then bad-guy stuff. There's a bad-guy domain." It's really easy to understand. And they're targeted. They're fast, both to implement, though maybe if David's here he'd be telling me I'm

kind of making too small a thing of that, and they're also super fast to execute; I can do these often at line speed. Big cons, though: these things are pretty simplistic, so they sometimes leave some things to be desired. Bad guys can sidestep these things pretty easily by changing small characteristics. Another big con here is the known-knowns thing. If you have a bunch of rules you can catch known knowns pretty reasonably, but you're still kind of helpless with respect to things you don't know about, and that's either because IOC lists come in kind of slowly, or the bad actors are just slightly more clever and they're changing things on

you. We could talk about catching zero-days, but that'd probably be a lie; we'll talk more about that in a minute. Okay, defensive analytics two. So you want to, not necessarily level up, but take a different approach: maybe we do statistical pattern matching, which was the whole thrust of Tim's talk, right? The idea here is you get a bunch of examples of, for instance, malware-carrying PDFs and a bunch of benign PDFs, you learn some statistical model that differentiates those two, you put that model into production, and it says: that's a good one, that's a bad one, that looks like the good ones I've seen, that's a bad one. Just one of many examples of

people who do things like this: FireEye, Cylance; there's a slew of these guys on the market right now. Statistical pattern matching, a.k.a. supervised learning, is sweet in that it is targeted, so you're going to have these really specific models to try and separate bad-guy MS Word attachments from benign ones, and they can be quite accurate. In spite of all the very real problems Tim alluded to, you can get pretty high accuracy out of these things. Of course, if you're getting it right ninety-five percent of the time, you're getting it wrong a lot, right, because people are slinging a lot of files. Cons, though: as Tim really nicely alluded to, you need training data that's labeled, so you

need examples of regular people's MS Word files and a bunch of bad-guy-containing MS Word files. You also get, as he also very aptly pointed out, these crazy uninterpretable models. So I send results to the cyber SME that I sit next to, and he or she goes, "What the... what is this? Why is this bad? Why are you sending it to me?" And I say, "Oh, we're going to have an awkward conversation," where I basically acknowledge that I don't know how to interpret my own models. These things also have a really short shelf life. Man, that Tim guy really crushed it: as the data changes, as the threat space changes even slightly, your models get

stale really quickly, just like your rules, which are also subject to the same problem. And you need many models to achieve broad coverage, right? This is totally painting a childish picture of this, but you need, like, the Microsoft Word 2003 separating model, and the one for Word 2007, and, and, and. So you need tons of these models, and you have to keep all of them up to date and deal with all the issues of them becoming stale, and interpretability, times every model you have in your whole system. Yikes. Okay, so there's another way you can use data to try and defend networks. I guess I should have

mentioned: I worked at a company, maybe or maybe not that company, for a long time doing this and other things, and recently I've been doing cyber R&D for the government for a bit, so that's where I have the visibility to say some of this stuff, maybe authoritatively, maybe not. The idea here is: let's use a statistical model to describe normal data, right? If I can describe normalcy, then ipso facto anything that doesn't fit tidily in that bucket is anomalous. I know IronNet does it, because I wrote models like this for them. Or you can also try and model the anomalousness of observations directly. Huge field on that;

if you care, I'll point you to other papers or talks. Pros here: you get these really cool dynamic models that can update really easily over time. You get per-environment customization, right, so I don't try and apply some generic model to all these different enterprises that have really different stuff going on. And you can detect unknowns, which is super sexy; when you say that in a sales pitch or to the government, they're like, "Unknown unknowns!" That's kind of the sine qua non thing. Problems, big problems: these things are super noisy, and that fact derives from the really simple notion that everything that's weird out there, everything that's anomalous, is not necessarily malicious. The

internet, because of super casual design and usage patterns, and because we need our admins to have broad freedoms, is full of weird, highly anomalous stuff that's totally benign all the time. If one in a thousand things is anomalous and you have big data, so there are a bajillion things going across your network, then all of a sudden, if you do some simple math, you realize that there are going to be a ton of aberrations. Definitionally, your definition of what an anomaly is implies an algorithm for detecting that type or class of anomaly, which implies what your result set will be. And, TL;DR, anomaly detection is really, really complicated. It's easy to mess this up in ways you won't know about, and you

also get these terrible uninterpretable models. Okay, so that's the paradigm I set up just to knock it down. I'm going to talk about this Rule Breaker thing that gets you, hopefully, some bits and pieces of the power of all three of these approaches, and to some extent (we can discuss this afterwards; again, it's nice that this is not a sales pitch) it sidesteps a lot of their pitfalls. The high-level idea behind this thing, which I'm going to eventually get to the point of describing to you, is that we're going to generate simplified models of normalcy in the form of probabilistic rules, right? So I'm going to look at your data, and in

your data, if port 53, then DNS, 99% of the time. We're going to infer that from your data, and then we'll go back through your data and try to identify observations that break that rule. We'll talk a lot in a minute about how to identify security-relevant rules as opposed to just correlations nobody cares about. You get dynamism, and you get some unknown unknowns here from the anomaly detection part of this. You get the interpretability of a rule, so I can pass these results to the cyber SMEs really gracefully, and they say, "Why is this weird?" and I say: look, because in the data, if A and B and C, then D,

but here, if A and B and C, then F. And they can use their mental model of networking to decide if that's bad or not. And then you get some of the accuracy from statistical pattern matching: these rules are probabilistic instead of deterministic, which means they hold most of the time, and then again the cyber SME can determine whether they're like, "I give a crap about a breakage of this," or, "That rule is really silly." Okay, a little bit more motivation. With network data, because of RFCs and convergent use habits, you often get these strong interdependencies between fields or variables in your data. So think, you know, if you're looking at NetFlow data from YAF or

Bro, or you're looking at, I don't know, host-level data from insert-name-of-your-favorite-agent-here, you get these strong correlations, right? File extensions that imply or go with MIME types; ports and protocols. The second bullet is kind of neat, because here we can start to talk about ranges of continuous variables like return bytes, right? So, like, I'm doing port 53 and it's a quad-A record, and you get back a bajillion bytes: that's kind of atypical. Port numbers correlate with protocols. And so the high-level idea here is: what if we can automatically identify strong categorical correlations, right, "if port 53 then probably DNS," to learn about a

network? And this is great if you're on a consulting team, or like some of the folks I've worked with in the past on the government side who go to different networks every month. If you've worked in the same SOC for the same corporate entity for, like, the last five years, you probably know your network inside and out already, so maybe that's not cool to you, but then you still get the second thing. With these probabilistic rules identified, again really simply, even if you're not a coder, you can then identify breakages of them to learn about anomalies. And then we'll talk a little bit about how to pick the rules and the breakages that are actually

security relevant, right, to get you over the "give a crap" threshold. Okay: "Hey man, can't we just find people who are using DNS but not on port 53 with a SQL query?" That's the first thing my analyst said to me, and it's a super good critique, right? Like, "Hang on, fancy math. I've got SQL, I've got a database, get out of town." Short answer is yes, if you already know what mismatches you might care about. So if you already know you want to look for things not identified as DNS by your protocol inspector over port 53, then you just query that, right? You know, select star where, I don't know, port equals 53 and protocol not equals

DNS. But if you don't know what to look for, again, unknown unknowns, SQL doesn't help you there. And then, yeah, if there are only a few mismatches you care about, so if that list of mismatches is of order, you know, tens of them, sure, query them up, if you think that's a good use of your time. Maybe script that querying to automate those things; that's nice. But what if there's an arbitrary number of unknown mismatches that you might care about? You can't SQL that. Okay, and isn't this just anomaly detection? I kind of laid out points one, two, and three for defensive cyber data, and I said this is kind of overarching, blah

blah blah, but this is really just the third one. Sort of. It certainly starts out that way, right? We're certainly going to model normalcy, but we're going to do it in a really particular way. This thing is optimized for categorical data, which just means instead of a numerical thing like height in inches, it's a categorical thing like color in the RGB kind of... oh, that was a terrible example. Like color in the Crayola eight-pack. This thing is really performant in the face of irrelevant variables, so you can put in lots of fields, and if those fields or variables don't correlate with anything else, this thing doesn't care. Lots of anomaly detectors don't have that characteristic. And this one,

importantly, is focused on near misses, which makes it really potent for security purposes. A lot of times, because of adversarial intention, your adversaries aren't fielding inputs that are tremendously anomalous. They look just right in a lot of ways, but they're off in, like, one feature or something, and this thing is optimized to detect that. Okay, let's talk a little bit about the language of rules, because what we're going to do is induce them from data. Rule Breaker thinks in categorical correlations, right? Again, I'm going to go back to this kind of trivial example, and we'll talk about all the complications that arise in a bit, but: if port 53, then protocol is DNS, right?
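A minimal sketch of scoring that toy rule, assuming a handful of made-up flow records. The `flows` list and the `rule_confidence` helper are hypothetical names for illustration, not part of Rule Breaker; the point is only that the rule's score is a plain conditional probability estimated from counts.

```python
from collections import Counter

# Hypothetical toy flow records: (destination port, inferred protocol).
flows = [
    (53, "dns"), (53, "dns"), (53, "dns"), (53, "icmp"),
    (80, "http"), (80, "http"), (443, "ssl"),
]

def rule_confidence(records, port, protocol):
    """Estimate P(protocol | port) from the records; 0.0 if the port never appears."""
    protocols_on_port = [proto for p, proto in records if p == port]
    if not protocols_on_port:
        return 0.0
    return Counter(protocols_on_port)[protocol] / len(protocols_on_port)

confidence = rule_confidence(flows, 53, "dns")
print(f"if port=53 then protocol=dns, confidence {confidence:.2f}")
```

In this toy data three of the four port-53 flows are DNS, so the rule holds with confidence 0.75; the fourth flow is the kind of near miss the talk is after.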

That's a strong categorical correlation, right? An example... oh, there's my toy example, and it kicks out a probability statement for that. This is just a simple conditional probability. Note that these are descriptive rather than normative, so this is not a "thou shalt" rule; this is "I saw in your data that this correlation holds." So breakages of it are just breakages of correlations. And rules, I'll talk about them using these words, have three parts. They have a predicate, so "if predicate." They have a consequent, "then consequent." And then they have a conditional likelihood, so, like, a probability, that last little thing. Again, if you're thinking, "Oh God, how do you code that?", you're going to get all that

for nothing if you use Python, R, or Spark, and we'll talk in a minute about other things you're going to lay on top of that that you might want to code yourself. Okay, so at a high level, here's the game plan. Association rule mining is this big area of data analytics, goes back like 30 years, and it's the task of identifying antecedent sets highly likely to imply a consequent, all right? So, find these correlations. That's the name of the high-level thing if you want to Google it, like, "What's association rule mining?", and all the packages in those programming languages I talked about reference it as such. It originated with market basket

analysis. So you're running a supermarket and you want to find strongly correlated items from sets of things people buy, so you can co-locate those items in the store. It's really annoying when the pickles are with the sandwich stuff and the olives are elsewhere, but in your mind you organize that store according to things that go in cocktails, right? Those things should be together. So, for example, bread and peanut butter usually implies jelly. There's a citation if you want to go look at an early one. The task involves identifying frequent itemsets, so things commonly bought together, and for our use cases, right, cyber things that tend to correlate, and then you use those to

identify rules. Two-step process. Again, you're going to get both of these for free, so I'm going to be brief about this. Okay, frequent itemsets. A frequent itemset is just a set that occurs frequently. I love it when things have good names. You specify some level of support, s, which is the frequency in some big dataset that a set must meet before you are going to call it frequent, right? These folks, Han et al., back in 2000, came up with this excruciatingly clever way to find these things that's parallelizable and wicked fast. If you're into algorithm development and you want to see just a pristine example of data structures as algorithms, go look up this paper. I read it like four times; I'm

still not sure I understand the ins and outs of it. It's just lovely. But basically you scan a database once to find a sorted list of frequent items, singletons, and then you grow a tree based on relative conditional frequency, and you use that tree structure to rapidly pull these things out. Again, if you're into that, great; if you're not, you don't have to know this or code it yourself. You're going to get it for free in some package. Okay, so suppose you have all these frequent itemsets, right: peanut butter, jelly, bread; they go together all the time. A rule is a unary split of one of those things where the

likelihood of the consequent given the predicate has some minimum confidence. Oh God, these words. So an itemset might be A, B, and C. A unary split is any split that leaves one of those things on the end, and so a rule might be something like: if A and C, then B, with probability 96%. All right, so that's what the algorithm is doing under the hood: find frequent itemsets, check all the unary splits of all the frequent-enough itemsets, and see if they land with a high enough probability that you care. All right, so rule induction is that thing. Okay, no one is here to learn about association rule mining; we're all here to try and learn about

cybersecurity stuff. We get two things from association rule mining, right? We learn about patterns in the network. So this client uses DNS, but because they think they're clever, they've pointed it over some odd port, right? Particular services might be strongly associated with particular usernames, et cetera. We can get all that kind of stuff for free, which is great if you're going into a new network kind of blind, which has been my experience more times than I care to say. And then again, we want to do this anomaly detection task to find all the bad guys, right? We're going to identify discrepancies. So maybe there's this "if port 53, then protocol is DNS," some high percentage of the time, right?
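Both halves of that, learning the rules and then finding the discrepancies, can be sketched in a few lines. This is an illustration under stated assumptions, not the speaker's implementation: it enumerates itemsets by brute force instead of using the tree-based frequent-pattern algorithm mentioned in the talk, and the record layout, thresholds, and function names (`induce_rules`, `find_breakages`) are all made up for the example.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy records; each is a dict of categorical fields.
records = (
    [{"port": "53", "proto": "dns"}] * 19
    + [{"port": "53", "proto": "icmp"}]
    + [{"port": "80", "proto": "http"}] * 10
)

def to_items(record):
    """Represent a record as a set of (field, value) items."""
    return frozenset(record.items())

def induce_rules(records, min_support=0.1, min_confidence=0.9, max_len=3):
    """Brute-force rule induction: count itemsets, keep the frequent ones,
    then test every unary split (all items but one -> the remaining item)."""
    n = len(records)
    counts = Counter()
    for rec in records:
        items = sorted(to_items(rec))
        for k in range(1, min(max_len, len(items)) + 1):
            for combo in combinations(items, k):
                counts[frozenset(combo)] += 1
    frequent = {s: c for s, c in counts.items() if c / n >= min_support}
    rules = []
    for itemset, count in frequent.items():
        if len(itemset) < 2:
            continue
        for consequent in itemset:
            antecedent = itemset - {consequent}
            confidence = count / counts[antecedent]
            if confidence >= min_confidence:
                rules.append((antecedent, consequent, confidence))
    return rules

def find_breakages(records, rules):
    """Sweep the data for records that match a rule's antecedent but lack its consequent."""
    broken = []
    for rec in records:
        items = to_items(rec)
        for antecedent, consequent, confidence in rules:
            if antecedent <= items and consequent not in items:
                broken.append((rec, antecedent, consequent, confidence))
    return broken

rules = induce_rules(records)
breakages = find_breakages(records, rules)
for rec, antecedent, consequent, confidence in breakages:
    print(f"{rec} breaks: if {set(antecedent)} then {consequent} ({confidence:.2f})")
```

On this toy data the only breakage reported is the single port-53 ICMP record, which violates the induced "if port 53 then DNS" rule. Brute-force enumeration is fine for a few fields but grows quickly with record width, which is the problem the packaged algorithms solve.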

That's the rule. That second thing is a breakage. So you're going to sweep back through the data, identifying sets that have the predicate and not the consequent, right? So, I know how IPs work; I shorten all the IPs just to make sure I don't accidentally point to a real IP in my toy examples. So, like, 1.2.3 sent ICMP traffic to 4.5.6 over port 53. That'd be a breakage of that rule, because the rule is "if 53 then DNS," but here we have 53 and ICMP. So we're going to find things like that, and all the rules and all the breakages, you kind of get them for free, and that's nice. Okay, but these are just

correlations. Correlations, especially in really big data, are everywhere, and most of them are meaningless. How do we generate ones that are security relevant? If you're thinking that, you're asking exactly the right question. So, a couple of ways: choose the right features, make sure the rules are statistically sound, and then automate the dropping, right, the trashing, of uninteresting rules and breakages. Okay, so, security-relevant rules and breakages. One thing you want to do here is choose security-relevant features, or sets of features, where breakages of correlations therein are going to be of interest to you as an analyst, right? This is you using your noggin. Math doesn't get you this; this is where your creativity and expertise need to come

in. So, for example, an example that I run everywhere, because it's nice and intuitive, is to check for strong correlations with the inferred file type. If you're using a NetFlow parser like Bro or something, it'll say, "Hey man, here's this file; I think it was a text file, and the file extension was .txt." We think those things should match up. We know that's security relevant, because bad guys like to, you know, stuff, I don't know, an executable into a PDF. Again, if you knew that one use case, you could just find that with SQL, a SQL hook to whatever your database is. But if you didn't know all the ones that

might be security relevant, you can use this tool to have them served up to you for free. Okay, some other examples I'll use; please don't furiously jot these down, we'll make sure the slides are public. Okay, the other thing you can do is check your metrics. All these packages give you this thing called confidence for free, which is that thing: the support of A and B, right, so the count of the number of times peanut butter and jelly are bought together, divided by the support, the count, of A. This is directly convertible to a conditional probability, the probability of B given A. This is nice. This one's better: lift. The

only difference here is we've also divided by the support of B, so we penalize really, really common B's. What does that mean? Here's a garbage Venn diagram from five years ago, when data science was new. Suppose we find this rule, right: if machine learning, then data science. The confidence is going to be really high, because all the machine learning, hypothetically, according to this author, is data science: confidence equals 100%. But what you'd find with the lift is that everything on here is data science, so some rule that is "if something, then data science" is not all that interesting. So lift goes in and penalizes those really common B's. So lift is a

better metric. Again, you get lift for free in, like, R and Python; in Spark you have to write your own. It's not hard. Okay, and then the last thing you're going to want to do: even if you've taken these steps to pick good features and use the right metric to figure out which rules are cool and which ones you're not going to care about, you're still going to get garbage rules. And I don't mean incorrect ones, I mean boring ones. Like, if the MIME type is text/html, then the extension is .html with a high probability, and you find some breakage: uh-oh, someone's got a MIME type of text/html and an extension of .txt.

Ah, it's the bad guys! It's not the bad guys, right? You don't care. So the team I'm working with now implemented this exceptions list, which is a way to whitelist rules, parts of rules, or breakages that we know we don't care about. So when we run the same use case a second time, either on the same network, or a different network, or the next day, or whatever, we don't have the results we don't care about served up to us, right? So we could say, like, "Hey, anytime the rule has, I don't know, .html and the breakage has .txt, don't show me that." So it's a really simple way of pruning these things. We wrote, like, this

awful little mini-language that gets parsed into that thing. I'm way more of a statistician than a coder, and I'm sorry, I'm not sorry. Okay, here's a quick example. Again, because a lot of this was done under the auspices of the government, and on people's data that they won't let me talk about in detail, I'm going to be just a tiny bit vague. So, sorry about the part where I don't show you all the thousands of, like, baddie bad guys I caught with this thing; I can't point a lot of fingers. But just take this as a nice exemplar. We think MIME type should be tightly coupled with file extension, you know, or vice versa,

but the rules kind of have an arrow, though there's no sense of causality or directionality under them, all right? And we know breakages of this might be interesting because of anecdotal evidence, right, that thing I just mentioned: it says PDF, but look what's under there, it's an executable, uh-oh. So we have anecdotal evidence that this kind of breakage might be interesting, and then we've got the unknown-unknowns problem, right? I don't just want to find where someone stuffs an executable into a PDF. I want to find all the examples where someone has stuffed potentially weaponizable things (I know I can't enumerate that list; I certainly can't, though some of you could probably start to), where someone has stuffed

weaponizable things into file extensions that are more typically benign-looking. All right, so let's go find all of those things in my example dataset, which hopefully I've anonymized appropriately. If you're looking at Bro, and that's just a kind of very standard NetFlow parser, one of the breakouts you get strips out file names, so you can pull the extension off that really easily, and the inferred MIME types: what does Bro think this file is, coming over the wire? Here are some of these example rules that I found in this one dataset, and you read this as, you know, antecedent implies consequent, with confidence. I'll just point you to the second-to-last one:

if the application is an executable, then the file extension is DLL 99.9 percent of the time. Well, what are these things without that file extension? Let's go find those automagically. I'd also just point to those top two rows, I'm sorry, rows two and three, and note that rules are often kind of bidirectional, but not necessarily with the same confidence. So most of the .xml's are application/xml, and all of the application/xml's are .xml. You often get those kinds of relationships because they're strong categorical correlations, but having different confidences in the two directions totally happens; see the previous Venn diagram. Question? You said turn it up. That makes more sense.
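To make the asymmetry concrete, here is a small sketch on made-up file records (the data and the `support`, `confidence`, and `lift` helpers are assumptions for illustration, not output from the talk's dataset). It uses the standard definitions: confidence of A then B is support(A and B) / support(A), and lift divides that again by support(B), with supports expressed as fractions of all records.

```python
# Hypothetical toy file records: extension and inferred MIME type.
files = (
    [{"ext": ".xml", "mime": "application/xml"}] * 8
    + [{"ext": ".xml", "mime": "text/plain"}] * 2
    + [{"ext": ".txt", "mime": "text/plain"}] * 10
)

def support(records, items):
    """Fraction of records containing every (field, value) pair in items."""
    hits = sum(1 for rec in records if all(rec.get(f) == v for f, v in items))
    return hits / len(records)

def confidence(records, antecedent, consequent):
    """P(consequent | antecedent), estimated from the records."""
    return support(records, antecedent + consequent) / support(records, antecedent)

def lift(records, antecedent, consequent):
    """Confidence divided by how common the consequent is overall."""
    return confidence(records, antecedent, consequent) / support(records, consequent)

ext_xml = [("ext", ".xml")]
mime_xml = [("mime", "application/xml")]

print("conf(.xml -> application/xml):", confidence(files, ext_xml, mime_xml))
print("conf(application/xml -> .xml):", confidence(files, mime_xml, ext_xml))
print("lift, either direction:", lift(files, ext_xml, mime_xml), lift(files, mime_xml, ext_xml))
```

Here most, but not all, `.xml` files are `application/xml`, while every `application/xml` file is `.xml`, so the two confidences differ. Lift is symmetric by construction, so it comes out the same in both directions.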

All right, okay, so here are some example rules from some example network. I dug into one of these findings, that row I just pointed out, and one of the breakages was this thing, right: some IP pulling down that file type with a .txt file extension. Note that this is kind of a starting point for an analysis, where some of you cybersecurity professionals can then dig in, pivot from the IP or the file or the hash or whatever, and go do the magical things you do. This is basically a high-quality tipper, I think, I hope. This is usually the starting point for an analysis, not the end point, in most cases. But hopefully,

again, we've gotten something pretty digestible, right? We did fancy-pants anomaly detection, we generated a rule, we found a breakage of the rule, and I can send this to a security professional who can interpret it immediately using their wet neural network and figure out what to do next. Okay, you want one of these? For, like, the fourth time: I don't sell stuff. I give stuff away, and I make stuff; I solve hard problems for pay, so we can talk about those things if you care to. If you want to infer rules in Spark, there's this thing called MLlib, and there's a frequent pattern mining module, so you get frequent itemsets and rules

for free. In R, there's the arules package. In Python, there's a frequent patterns module you can import for that. The rule checker: so what do you have for free? You get rules, each of which is a set and a consequent and usually a confidence level. If you want to check those rules, you've got to write that yourself, but it's super easy, because you just say: if my original set has the predicate and not the consequent, ruh-roh, and kick that thing out to some file. If you look for rules that involve lots of variables: I've had all these toy examples with a single predicate and a single consequent, right, "if port then protocol." You can have very complicated rules, like if A and B

and C and D, then E. A lot of times, I've found in practice, those things tend to be nested, right? So: if A and B and C then D, if A and B then D, if A then D, if B then D. And usually the most interesting one to look at is the most complicated rule that was broken. So if you go roll this at home this afternoon in a couple of hours, add that feature; you'll be glad you did. Also really simple: confidence is baked into R, Python, and Spark. R and Python have lift; in Spark you have to write that yourself, and the formula's quite simple. The exception list: you're going to want one. Again, that's very

easy. You just identify, with whoever you work with, your SMEs, or you are the SMEs, the rules and the breakages that are boring, and write a little way to not be shown those the second time. Okay, here's the part where I'm at pains to tell you a couple of good things this thing does, and then remind you of all of its limitations, right, lest you think I'm another person saying I've solved cybersecurity with math. The strengths here: broad applicability, right? We have lots of categorical features in cyber data, and continuous things can be made categorical by binning. It's only really limited by your imagination of intelligent and informed use cases. You get great explainability here.
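As one possible shape for the exception list mentioned at the start of this passage, the sketch below matches breakage reports against partial patterns. The talk describes a small custom mini-language that is not shown, so the dict-based patterns, the field names (`rule_if`, `rule_then`, `observed`, `src`), and the `prune` helper here are all assumptions made for illustration.

```python
# Hypothetical breakage reports; the field names are made up for this sketch.
breakages = [
    {"rule_if": "mime=text/html", "rule_then": "ext=.html",
     "observed": "ext=.txt", "src": "1.2.3"},
    {"rule_if": "mime=application/pdf", "rule_then": "ext=.pdf",
     "observed": "ext=.exe", "src": "4.5.6"},
]

# Each exception is a partial pattern: a breakage is suppressed when it
# matches every field the pattern names. Keep patterns non-empty, since an
# empty pattern would match (and hide) everything.
exceptions = [
    {"rule_then": "ext=.html", "observed": "ext=.txt"},
]

def is_excepted(breakage, exceptions):
    """True if any exception pattern matches all of its named fields."""
    return any(
        all(breakage.get(field) == value for field, value in pattern.items())
        for pattern in exceptions
    )

def prune(breakages, exceptions):
    """Drop breakages an analyst has already marked as boring."""
    return [b for b in breakages if not is_excepted(b, exceptions)]

remaining = prune(breakages, exceptions)
for b in remaining:
    print(b["src"], b["rule_if"], "->", b["rule_then"], "but saw", b["observed"])
```

With the one pattern above, the boring text/html-served-as-.txt breakage is hidden on the next run and only the PDF-versus-.exe mismatch is still shown.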

I've worked with SMEs both on the commercial side, on the corporate side, and for government SOCs. When you hand them garbage or uninterpretable findings, they hate you. This doesn't have that problem. It's really fast both to implement and to execute: it's quick in Python and R, and it scales in Spark, which is really nice if you want to hit, like, the last six months of NetFlow data from your big, I don't know, enterprise holdings or something. And then tuning this is trivial. Tim didn't talk about how machine learning often has these hyperparameters, so there are choices you have to make about which algorithm and how to set up the algorithm to find

the thing you're looking for. Tuning can be really hard, and this thing doesn't really have tuning parameters, except the confidence level that causes you to care. If you've done machine learning before, you'd know that not having a million knobs to turn to get this thing dialed in right is nice. Okay, and then pitfalls. We talked kind of ad nauseam about how high-confidence rules are not necessarily interesting: pick the right features, use lift instead of confidence. You have to use categorical data for this thing, so you can do binning, but if you've ever thought hard about how to bin continuous things into categorical things in a way that's not lossy, you'll know that that's

non-trivial. There are lots of ways to do it, and you can't do it without losing something. This tool only identifies what I'll call orthogonal outliers. I think of that as a strength, right? We're looking for these anomalies that are only different in one way; they're not broadly different from the rest of a set. If you have that kind of problem, like "show me the things that are really, really weird," this isn't the tool for that. And as of yet, this whole setup doesn't handle a multi-item consequent, so all the rules are these unary splits: if A and B and C, then D; not "then D or E." And so if you have a categorical

correlation that has multiple parts at the end of it, you can't find them this way. That's kind of future research for me and the team, where I'd like to go next. All right, and that's it. Questions? Yeah. Yeah, so I think I'm tracking your question now. So that's a great thought. The question was: could you identify these multi-item consequents by using some kind of recursion here? The answer is, I think so, maybe. The problem is in the original set. So imagine you would have found a rule that's like "if tall, then basketball player" or "if tall, then pole-vaulter," right, and each of

those happens 50% of the time. You can't find that rule unless you lower the confidence level you're looking for down to 50%, at which point you're going to find a lot of illusory correlations.
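
The tall / basketball / pole-vaulter point can be made concrete with a small sketch. The records, the `confidence` helper, and the 0.9 cutoff below are all made up for illustration; they are not from the talk's code.

```python
# Toy, made-up records: every tall person is either a basketball player
# or a pole-vaulter, split evenly. Names and values are illustrative only.
records = (
    [{"height": "tall", "sport": "basketball"}] * 4
    + [{"height": "tall", "sport": "pole_vault"}] * 4
    + [{"height": "short", "sport": "gymnastics"}] * 2
)


def confidence(records, antecedent, consequent):
    """Share of records matching the antecedent that also match the consequent."""
    matching = [r for r in records if antecedent.items() <= r.items()]
    if not matching:
        return 0.0
    hits = sum(1 for r in matching if consequent.items() <= r.items())
    return hits / len(matching)


tall = {"height": "tall"}
conf_basketball = confidence(records, tall, {"sport": "basketball"})
conf_pole_vault = confidence(records, tall, {"sport": "pole_vault"})

threshold = 0.9  # hypothetical "high confidence" cutoff
for name, value in [("basketball", conf_basketball), ("pole_vault", conf_pole_vault)]:
    print(f"tall -> {name}: confidence={value:.2f}, kept={value >= threshold}")
```

Each single-consequent rule only reaches a confidence of 0.5, so neither survives a high cutoff, even though "tall implies basketball or pole-vault" holds for every tall record in this toy set.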

Could that algorithm be written? Absolutely, I plan on it. Let's talk. Can you get it canned, like, this afternoon? Not to my knowledge. Yeah? Okay, yeah, the question was: what if you stand up a new service with legitimate traffic, how do you make sure you don't fall afoul of this thing? So there are several ways to go about that, right? One is, you know, don't generate rules the first day of the service. And that's a whole other question, kind of abstracted away from "okay, that's a nifty method": how do I use this thing in practice, operationally? Lots of options there. One: induce rules for, like, I don't know, one day, and then use

those rules for the next couple of days. Or use it post hoc, right? So for yesterday, I found these strong correlations and these breakages. That kind of helps you get away from the new-service problem. If you want to hold rules and use them into the future, you're going to have to be savvy to the fact that you've changed your environment. And again, I'm going to default back to that exceptions list, right? You hand a bunch of things over to your SME, you say, "hey, I found these breakages of these rules," and they say, "oh, I don't care about anything that involves new service X from, you know, before the 10th, because that was new

that day." Yeah? "I want it, I want it now." That's awesome, I'm glad it was, you know, mildly convincing. So you can infer rules here, so that's for free, no coding acumen needed by you. You're going to have to write your own hooks to get data from where it lives, your SIEM tool, into Python or Spark or something. The rule checker is, again, you write it yourself. It's just: if this set I'm looking at contains the predicate and doesn't contain the consequent. That's, you know, a one-liner. There's an additional one-liner that is useful. Three, four is you get lift for free a lot of the time, but if you want your own lift in Spark

you have to write it. I don't know if I'll be allowed to share the code out for this project yet. If yes, then I'll try and blast it out to this community; if no, you'll write it yourself, and that one's the only one that's not kind of a one-liner. And then this thing is something you'll... for now, it's not secret, except insofar as I haven't been given a thumbs up to push it out there. Yeah, other questions? There's, like, a hint of barbecue wafting in; I think everyone is ready to get out of here. All right, well, that's all I have. I'll be around to the end of days if you

have questions later, come find me. Otherwise I'm not going to be between you and your lunch. Thank you.
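
The rule checker and the lift calculation mentioned in the Q&A might look roughly like the sketch below. This is not the speaker's code, which was not released at the time of the talk; the record encoding (sets of `feature=value` strings), the function names, and the toy traffic data are all assumptions made for illustration. The talk's "predicate" is called `antecedent` here.

```python
def support(records, items):
    """Fraction of records that contain every item in `items`."""
    return sum(1 for r in records if items <= r) / len(records)


def lift(records, antecedent, consequent):
    """P(antecedent and consequent) / (P(antecedent) * P(consequent)).

    Assumes both sides occur at least once; otherwise this divides by zero.
    """
    joint = support(records, antecedent | consequent)
    return joint / (support(records, antecedent) * support(records, consequent))


def breaks_rule(record, antecedent, consequent):
    """The 'one-liner' check: antecedent present, consequent absent."""
    return antecedent <= record and not consequent <= record


# Made-up categorical records, one frozenset of "feature=value" items each.
records = (
    [frozenset({"port=443", "proto=tcp"})] * 3
    + [frozenset({"port=443", "proto=udp"})] * 1
    + [frozenset({"port=53", "proto=udp"})] * 4
)
antecedent = frozenset({"port=443"})
consequent = frozenset({"proto=tcp"})

breakages = [r for r in records if breaks_rule(r, antecedent, consequent)]
rule_lift = lift(records, antecedent, consequent)
print(f"lift={rule_lift:.2f}, breakages={len(breakages)}")
```

A lift above 1 means the consequent shows up more often alongside the antecedent than its overall frequency would suggest, which is why the talk recommends it over raw confidence for deciding which rules are worth watching.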