A Novel SIEM Solution That Doesn't Cost An Arm And A Leg

Show transcript [en]

yeah hi my name's Phil right now I'm here from a cloud player from the awesome Texas office thanks for having us we're here to kind of talk about a project that we've been working on here for the last six to eight months they said presentation wasn't this gonna be by two of our colleagues Chan and autumn they couldn't make it but yeah I've been at cloud for about 12 months and what turned over to out right now hello my name is Alec from security engineering manager at cloud flare until recently I was based out of the Austin Texas office and in August I actually just moved out here to Lisbon which is pretty exciting who were really excited

about the office here as a whole and and getting to be some of the first people around is pretty neat and the fact that 'besides aligned with it also is super rad I'm a big fan of b-sides I've been lucky enough to be at six of them so far in different cities and we'll just go ahead and kind of dive right in before we get started though there's one kind of like a quick slide about what CloudFlare is and it's kind of important to realize what what we're doing in order to kind of understand a lot of the decisions that we made to build a lot of these things internally instead of buying them and so cloud ler at its core

is pretty large we have a hundred and ninety four points of presence around the world serving a hundred billion or more unique IP addresses daily and and at its core we've got 20 million internet properties behind our our core offering and so from a security standpoint that's like it's a really interesting place to be because we've got a lot of crazy things going on we offer security products as a company and and so that means that its core we've got a lot of logs a ton of logs and this is including like production logs we've got some examples over there as well as you know some some internal logs things that you just kind of need

to run a business and a security team as a whole is going to be interested in both of these you have to be able to put all of these things together and correlate all the stuff that's going on in order to pick out what's actually going on that's not normal things that stick out and should be investigated by a security practitioner and so when you have all this data this was a big question right like where do you put all of these logs there's a pretty traditional answer here I think anybody who's been on an incident response team would say well you put them in the scene it's just what you do it that's how

we've always done it and it works right you get data you can look at the data you can alert on that data and you can catch bad things and when bad things happen it's easy to kind of trace what happened because all of your logs are there but we've got a lot a lot of logs and and we're like okay why why are we putting the data there it's like hold up is this the right answer just because we've always done it that way doesn't mean it is and in particular we were thinking while rolling out OS query OS query gives you some pretty interesting locks there are a lot of differential logs and so you're not just getting

snapshots of what's happening all the time like you have to be able to compare previous logs from a from a known good state at one point in time in the past - what's going on currently in order to get a whole picture of what's happening and that's just not easy with a lot of traditional log infrastructure beyond that there's a lot of like hidden costs associated with these things you have to maintain the the things that are hosting your logging infrastructure and I don't know a single security professional who actually wants to get to work everyday and and like update the operating system on a machine and make sure that it's safe like nobody-nobody super interested in that if they're

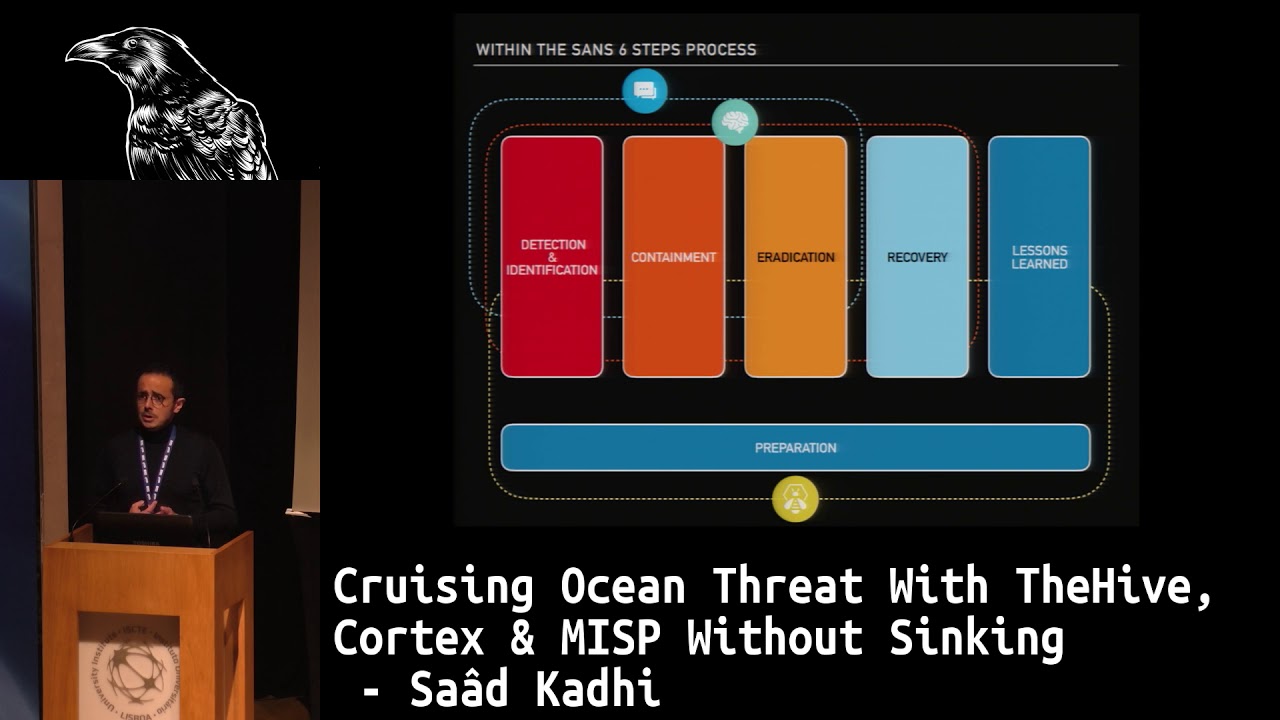

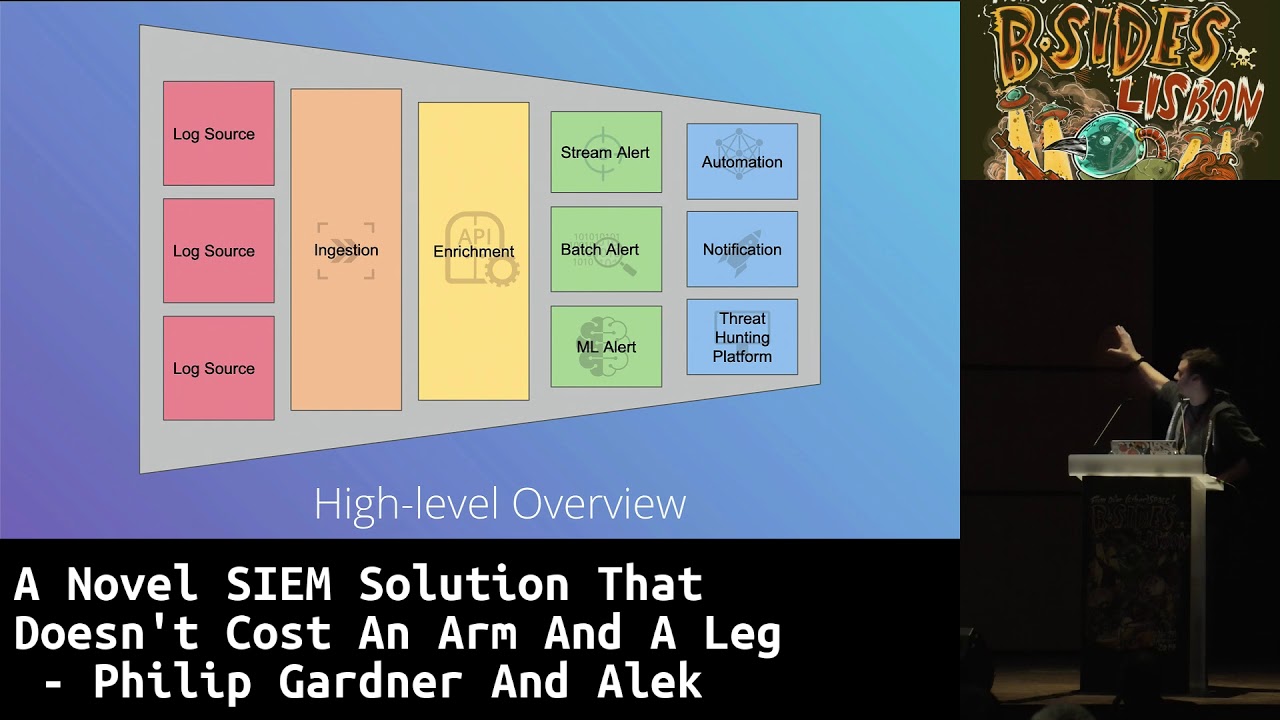

trying to dig into to incident or sponsor or a lot of other niche areas of security and so we took a step back and really just thought about what what were we trying to do with all this and so on the left here you can see we've got like all of our log sources and there's a ton of them and we kind of narrow that down we're not you know we picked out the important logs and we put those into a place we ingest them we're going to enrich those logs and and kind of correlate things and make it a little bit more human readable and useful and then once it's been a written rich we

can do all sorts of different different types of alerting right and then from there some of those alerts are determined to be false positives and some are not and we figure out what we want to do it if some of these things can be automated away to where a person never has to look at the response we just have tooling that does it sometimes we need to send a notification to an analyst to look to see if it's actually something that needs to be worked on and sometimes it just needs to go into - some things that we can look at it later and and so we took that diagram and wrote down like a list of real

requirements what what is that we need to be able to have in order to accomplish those goals and we came up with a list of things that are that are pretty hard requirements without these we wouldn't be able to do our job but then we ask this one other question like do we really need servers right do we need to have something like a traditional computer in place in order to do all this work because if you look at a lot of the traditional answers to this like the answer is yes you have to run a computer in order to do this but it's it's really it's just such a pain and if we get rid of it it make things

like really cool and it seems like a neat idea and I'm a fan of neat ideas personally so we added that one to the list there and it's the only one in a different color because the rest of these we can we can kind of solve with a traditional answer to this log aggregation and incident response problem but if we can add that in there then like we're removing a lot of overhead we're doing something a little bit neater and and so like this is this kind of madness in some senses but but it's also a lot of fun and so we we hopped on a GCP you're kind of like kids in a candy

store to start off with we're just looking around at all these neat things that exist that don't require the the maintenance of an underlying machine and and drew out this this crazy chart there is not a lot of rhyme or reason that went in this it grew over time but you can see there's there's a few things that are really important concepts at the core of all this you'll see some of these boxes are a little bit bigger than others like the detection response team down at the bottom stack driver which is basically a log visualization and searching platform and and then the real the real like core to changing the actual methodology of approaching

incident response and detection response is a message message bus pub/sub inside of GCP a few other things are pretty important as well like a sequel database to do correlation batching over time and and reticent key store management and source control and a lot of other tidbits but with that diagram it's kind of its kind of like this right how to draw an out we the diagram is basically those two circles and there's a lot of work that goes into actually building all that it's so so really looking at this it might seem a little crazy but it's not it doesn't look unwieldy until you pick like one of these pipelines and so this is that one pipeline right there

is it gets a lot more complicated when you actually start to integrate it and build it out and turn it from a line on a diagram into actual like working toad and so we took another step back after we explored these these kind of service options a little bit and and really looking at this you can kind of tell it's like it's a big data fluoride funnel taking a lot of things and turning it into only the things you care about and and so after revisiting a little bit we ended up with this and and what this really kind of represents you can see on the left it's a lot more similar to that previous diagram where we've got a bunch

of log sources on the left that are all being ingested in with various functions and then pushed into pub subtopics in the middle they're getting enriched and then we've got a nice little bit of alerting down at the bottom and then we've got a bunch of notifications on the end and so there's a lot to kind of digest with this diagram and I quickly went over things kind of intentionally because I'm gonna turn it over to Phil to really pick one of these these flows starting with one log source and going from the actual source of a log all the way until we get to a notification to the detection response team thanks yeah so basically we're gonna pick like one

of these log types that we have and kind of work through how it might go for the pipeline here but just because there's like a lot of like various implementation details that are really that important so for instance this is basically what a event flow from one of our core light sensors looks like if you're not familiar with it correlate is the commercial version of Zeke which was formerly known as bro from Calicut is the first sort of third of this diagram is like how do we get events that are streaming from correlate sensors into TCP in general and kind of a better question is how do we do this through for a very large variety of log sources

we kinda have to support lots of different ways to ingest data we could pull directly from like a SAS API or maybe the producer could just write directly to a cheesy bucket you know there's lots of different ways in the case of core lag we actually deployed a cloud function that fakes an elastic search API and will write all the incoming events in this batching manner to a cloud pub/sub topic there's kind of like a different way to sort of look at how we get data into the pipeline kind of like what I mentioned we don't want to be too draconian as far as what the implementation details are but we do have to sort of standardize on a couple

of pieces here are we first and foremost is every event we want to be have written into a cloud pub/sub which is not familiar with it it's basically a message broker that's like Kafka and a cool thing about these pub subs is that you can have multiple subscribers including these what they call background cloud functions that will basically fire off every single time a message enters the queue as well we wanted to be able to actively search these logs and I think Alec mentioned this as every every event gets written right to stackdriver which is essentially like a Cabana like front end it's got an automatic parsing of JSON and protobuf messages and probably the

big key for us is that it's free to search you get free 30-day retention and you're basically only paying for the API rights so once we have all the events is sort of coming in we need to start interrogating our data right there's lots of different types of questions we want to ask you know does this a bench map some known signature is there some like known threshold that just metric you know for symmetric that it's increased or maybe this is is this an event that's indicative of some kind of you know newer or anomalous behavior it made a sudden increase DNS queries is some Russian ASN or something you know who knows lots of different things we

kind of thought about this sort of three different type of techniques right which will basically break down at the three different types of sort of wool engines if you will we've got like a real-time we're trip wire or signature base things like you know is this a malicious ja3 hash this is a gnome type of squatting domain this is all powered by google cloud functions and then I say three is because this next one is more these next two methods are very similar right it's very batching a time-based right these are you know how do we query a bunch of event across either like multiple log sources across the various time time period or you know using some and

VML stuff this is all powered by bigquery which is essentially very Google sequel like products we've really done too much on the anomaly stuff that's gonna be coming more in like 20 20 but I won't go over that too much but we're just getting started with that anyway so first up the real-time rules of the trip where our rules as I mentioned these are all clap functions that we wrote with golang every single message that comes into pub/sub triggers an invocation these are wicked fast you know we're doing about 60 milliseconds on the average of execution time and then go laying it's just really nice gives us go routines which lets us do a lot of different things with each

message because it was easy concurrency [Music] we have about like 30 or 40 different cloud things right now that handle a bunch of different events coming in and these are all most the vast majority right now are written in going but we started kind of playing around with this new rule engine called the common expression language is that Google recently released this is the same sort of engine that powers a GCP I am policies you know firebase I am policies office okay or kubernetes things but you can see this is a lot easier to write then you know a bunch of boilerplate for going which makes it really easy for analysts to write sort of understandable

rules very very quickly without needing like a huge deep developer background which is pretty important part of a traditional the same next step are what we kind of have term batch rules these are sort of more complex queries that we can't air great interrogate events across multiple time periods multiple data sources but probably primarily the most important thing is with Isis or standard sequel syntax right so may see every single event is written to bigquery automatically from once it's written to stack driver through something called a export sink this is save a ton of time because we don't have to do any work at all this is just writing a filter that says drop everything it's a big query then we

write rules in a predetermined sort of ya know config loaded up through a couple of cloud functions that basically write these scheduled queries and then we'll run them on the schedule and then if any of those rules match will basically put them down at the pipeline the same way as the real-time alerts so I can see here's like a pretty simple batch rule that uh one of our team members wrote you know we're setting like the frequency how often you should run you know what the natives it goes right to a playbook I'll go more to the play books later but yeah you can see probably the most important part the query there it's just pretty

much standard sequel yeah so anyway once we have let's just let's say we triggered a rule we may see a need to respond to it somehow first and foremost we publish this again to a cloud pub/sub this is a pretty common thing that we're doing without the pipeline it basically gives us a lot of lag time in the ability to handle these events in multiple different fashions from sort of one ingress point this also includes false positives coming in there so we can like either discard those or verify them further by another cloud function down there same thing with like duplicate events you know the last thing you want to do is something we've seen a

lot is somebody doesn't isn't able to log into oktai they have like tons of multi-factor authentication failures and it's really just the same sort of incident if you will so we can sort of handle that in the queue right now we just have a one function that we call sirens it's basically a lightweight alert router pulls every single message from this raw detentions pub/sub and then based on the with the stamp alert one idea is it'll bratatat pages you to your JIRA or Google Chat we can't have a lot more subscribers you know things like Chronicle or whatnot but like I said we're just still don't sort of like pretty alpha phase here yeah anyway

so here's a quick match of like a google chat notification hearing see it took like 15 seconds one of our team members was playing around with the merlin CT framework but that was pretty quick so yeah that's kind of a nice brief overview we kind of learned quite a bit has this project been worth it probably Sasa this is definitely our primary detection response pipeline right now we are currently pushing around 23 terabytes a month we'll probably estimating they grow to 50 to 60 terabytes through the next year and probably the coolest part is most half the cost is just writing to your stack driver you know the invocation of cloud functions you know we're talking

hundreds of millions of invitations per week or per month is dirty a couple of other bucks this is about 50 times cheaper from some of our back to the hand math than a traditional seem and we definitely have a ton more to build as you can see but it's not 50 times more expensive to build over about davila some future plans way more log sources coming in we want to add a couple more correlation rule types you know things like these as a spike is has the frequency change of these sort of queries I don't want to look at a lot more I kind of sometimes when I kind of think about you know what is our sort of

detection posture look like it's like we want to do a lot more correlation which is signature base or do we want to sort of model what is what does this asset look like what does this human sort of behavior look like so we're gonna investigate a lot more a lot more data analysis tools Google has a ton of really really cool integrated ml tooling we're definitely hiring for that right now so if we just did feel free to talk to us and then integrating a lot more enrichment of advanced with bunch of threat and filled databases and because of our scale we have just all sorts of crazy data available available to us another cool thing that we're looking at

is automating playbooks through basically Jupiter notebooks which are my Google has a hosting thing like that as well but essentially Jupiter notebooks if you're not familiar are a way to sort of contain live code and documentation visualizations all in the same document this would let us you know save tons of time on the response side because you know maybe 70 80 all the the response play but could be done by the time a human actually looks at it yeah pretty cool but I'm pretty excited about that myself oh yeah so this is just kind of a quick aside to kind of wrap things up a little bit this graph represents about a week where we were initially testing and and

sending data in the bottom line where you can see there's almost nothing we were pushing about 20 gigs a day it was a singular log source from a portion of set of production systems and the idea was really just to see if this even worked and we we felt pretty confident that it's going to work really ok let's add another log source and see just what happens and without really thinking about it too much we turn this log source on on a Friday afternoon and that peat there is 9.1 terabytes and so we went from a system that was designed to accept around 20 to 50 gigs a day to accepting 9.1 terabytes a day and and

the really like super cool part about this is we didn't lose a single piece of data and and like we didn't end up with a bill that we couldn't pay and so we had all the data from this source that was pushing 9.1 terabytes pushing to a system that we we you know hadn't even tested a fraction of that on and and it just all worked and so that was kind of one of the like biggest accidental wins I think that we accomplished here and it was just really exciting to come back on Monday and see this graph and then promptly turn it off because that was not what we were looking to do so we

have just about five minutes left which is pretty good timing wise it's what we were aiming for so if there are any questions that anyone has okay hi great presentation just put a simple question related to bigquery it's a structured database the how do you cope with adding more sources in and events that have multiple fuels and the second question is have you looked into Chronicle the cloud seemed from Google thank you so second question first yeah we actively use Chronicle we're working with Google as well to sort of provide them with a lot more log sources to ingest into Chronicle it's definitely interesting to see what happens with all those news recently but as far as the

biggest or the big query so when you create this export through stackdriver it will actually automatically update those fields as long as they're just new we have had some issues where you know somebody had added a for one of our initial models of a data source was essentially int right and it was actually a flow and so that would cause some issues we have to like drop the table or whatnot but in general it's been pretty easy to it's not something we have to worry about too often really cool design have you tried work aiming against the red team like at what stage it would detect an attack attack it wouldn't be so what's the question if we beautified

playing against the red team to attack and pursue at which stage it will pick it up yes I don't want to go too much into the details of their the results of red teaming obviously but yes we have and the the results were pretty neat but that's about as much as I planned I didn't practice that question sorry so now we know that Google updates your machines who updates the containers I'm sorry could you repeat that please the beginning of the presentation you said you don't want updates operating systems on the machines who operates the containers yeah so I think to expand on that a little bit more like inherently the people like to throw

the word serverless around but what that really means is someone else is running the machines and the the approach that we were really trying to take here is can we offload some of the operational workload from our security team because the if we couldn't then we would have to start hiring sres and ops and if you look at a lot of the cloud providers via Google Amazon Microsoft or even CloudFlare itself like we have what we call a service offering is one of our products the idea is not to claim that there's no operating system or machine underneath the idea is to remove that overhead from the people using the platform and so that's that's kind of

what we were aiming for there that said no terraformers gonna let it work for us and we have a very robust or a CI CD system that'll deploy and rollback functions and tests and all that so but that was something we wanted to incorporate very very naturally to make sure that's seen a part of our DNA when building out this problem any more questions I don't think so so Phil Alex thank you very much thank you [Applause]