Revenge on the Worms! Towards Deception Against Automated Adversaries


Hi everybody, my name is Urban Martin and I'm the director of the Ground Truth track at BSides Las Vegas. Andy Applebaum and Ron Alford are doing our next talk, called "Revenge on the Worms! Towards Deception Against Automated Adversaries." Andy Applebaum is a security researcher at MITRE. He works on applied and theoretical security research problems and is one of the leads on the CALDERA automated adversary emulation project. He has a growing interest in the ability of attackers to both misuse and thwart machine learning and artificial intelligence systems. Ron Alford is an AI researcher specializing in automated planning at MITRE. His interests include theoretical foundations of automated planning and developing new algorithms for autonomous cyber operations and response.

If you have questions, you can submit them to the live Q&A via Discord, and that Q&A session will immediately follow the talk. So without any further delay, please enjoy the talk and submit some questions. Thanks. Hey everybody, my name is Andy Applebaum and I'm here to talk to you today about our work, called "Revenge on the Worms! Towards Deception Against Automated Adversaries." As a quick intro, I'm a security researcher at the MITRE Corporation, where I work on projects like the ATT&CK framework and also CALDERA. I'm here with my colleague Ron Alford. Ron, if you want to take a quick second to introduce yourself? Yep, I'm Ron Alford. I also work at MITRE.

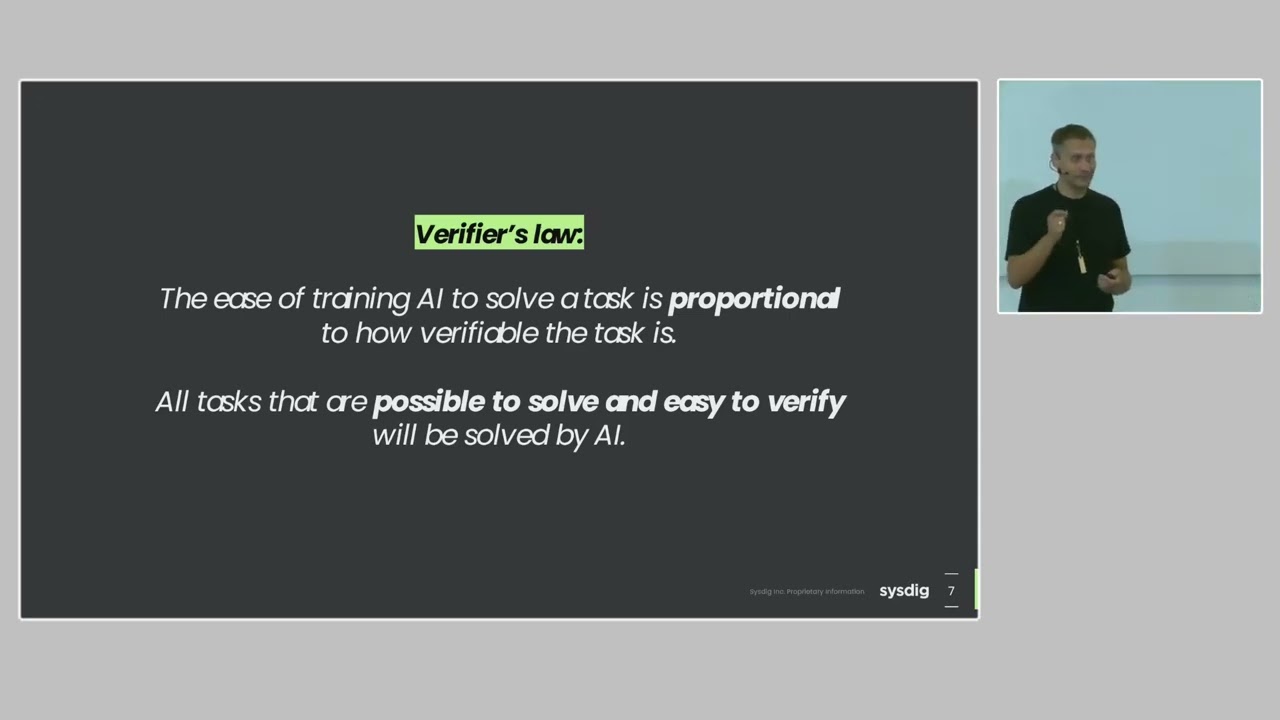

I, yeah, I work on automated planning with robots, with cyber security, wherever I can figure out where to fit in. Thanks, Ron. So to just dive right into it and kick off this talk: there have been a lot of papers, blog posts, and articles in the past five to ten years that talk about the potential malicious use of AI and machine learning. Here's one from 2018 where they survey the landscape of potential security threats from the malicious uses of AI, and one of the things they find is that the use of AI to automate tasks involved in carrying out cyber attacks will alleviate the existing trade-off between

the scale and efficacy of attacks, really saying that using AI will potentially be good for attackers. And this has gone on a lot, even more recently than 2018. There was a paper from the NSCAI in 2021 that reported similar things, where they looked at what's going on with AI and said AI is deepening the threat posed by cyber attacks. They outline a lot of different ways that AI can be used for nefarious means, including cyber. One of their big categories is accelerated cyber attacks: they talk about self-replicating AI-generated malware and rapid machine and machine escalation by automated C2.

There's a lot in there, and there seem to be other reports and other blog posts that talk about this potential for big change that AI and machine learning can have on how cyber attacks are conducted. This has also been seen in the security community: there are a lot of papers, blog posts, and open source tools that use AI or machine learning in some way to conduct cyber attacks more effectively, be it using machine learning for social media scraping and phishing, using GANs to generate malware, or generating C2 domains using GANs. There's

lots of these examples out there, and you could fill a whole separate talk diving through each of these individually. That said, there really aren't a ton of publicly documented examples of adversaries actually leveraging AI or machine learning. Part of that, of course, is whether or not we can observe that and would know that we're observing the use of these technologies, but part of that is the question of whether or not adversaries are today using these technologies as part of their attacks. Having said that, given all this forecasting and all the work that's been done in this space, we think that one day there will be attackers using AI and

machine learning to make their attacks more effective. I'm of course biased, having worked on the CALDERA project. If you haven't seen CALDERA, it is open source software that we've put out there to run automated adversary emulation exercises. It runs attacks in real time, it leverages the MITRE ATT&CK framework, it has a super low install overhead, and it's heavily customizable. CALDERA is designed to run these automated red teaming exercises, but of course as you're using it you can think: could an adversary use this for nefarious means as well? Why that might matter is that CALDERA makes a lot of stuff that a red team would

want to do, and maybe even an adversary would want to do, easy. Number one, CALDERA is smart. We use ideas from the automated planning community during action selection. Ron's going to talk a lot more about that, and I will too further in the talk, diving into what automated planning is. But really, each action has its inputs, its outputs, and its requirements, and that allows CALDERA to dynamically compose those actions into larger profiles. CALDERA is also fast. If you configure it the right way, it can laterally move through a network at about a host a minute. That's not blazing speed, but it's certainly pretty up

there for moving through a network. It's also extensible. It's not just "here's 10 things it can do and that's it." It's very easy to add new techniques and capabilities and also integrate it with other tools such as BloodHound. And then, outside of how it makes things easy, one of the big things we note is that CALDERA is what we know: if what we're doing with CALDERA is fast and it's allowing us to do these dynamic capabilities, could an adversary do it as well? And this really raises the question of, well, how do we deceive, excuse me, how do we defend

against automated adversaries? Here I put up some very basic ideas for how we could do this using, say, the categories in the Cybersecurity Framework. Do we identify what's running on our network and better catalog capabilities? That's always helpful, but it's not necessarily the answer to defending against an automated adversary. Do we protect our networks, hardening things, making sure all of our protections and mitigations are out there? That's always helpful, but you don't always protect all the things.

That's why there's things like detection. But when we think about detecting an automated adversary: if hosts are being compromised at one per minute, it might be hard for our manual processes in the SOC to actually pick up on what an automated adversary is doing, so that might not be the best option. Response is a great idea, but we can't always respond if we don't detect, or if we're too slow there. And recovery is not an option either, because if we're just lagging behind the adversary then they're making tons of progress. So really, what do we do? To answer that, we're

going to walk through a simple example. The lede is buried: we do want to talk about deception, so what we want to say is, let's use deception. But before getting there, here's a simple example, a screenshot of a network topology given out by BloodHound. It's relatively straightforward. An attacker can start over here with Alice's credentials, and they want to get over to the domain controller. They can leverage this structure over here to get down to client two over there using the Alice account. From client two they're able to get access to Bob's account, because he has a session there.

Bob is a member of this Domain Admins group, and that's an admin to this host over here, so now the adversary can get a foothold over there, and from there they can get at the Domain Controllers group. There are in fact automated tools that are able to do this. There's a couple up there, DeathStar, ANGRYPUPPY, and GoFetch, that are able to automatically execute these paths from BloodHound. Now let's look at it again and say, what would happen if we throw deception in here? The idea is we can insert a fake edge that says this support group down here is an admin to this win box over here.

It's just a fake thing in there. We've thrown it into AD and we're just going to see what happens. Now an automated adversary might come in, see that it's a member of this group, see it's an admin on that box, and try to laterally move. But that won't work, of course, because it is a fake rule that we put in. So what happens? Well, if it's a simple adversary, it might just break: it'll just keep trying to laterally move using Alice over and over and over again, and you've won. The adversary is not getting through. And even if the adversary is smart and they recognize that this isn't working, or

they even recognize the deception, you've now made it harder for them to run their operation and easier to detect them. With that said, our objective here, both in this talk and in the broader context Ron and I are working in, is that we want to understand how to best deceive or disrupt the planning process of an autonomous agent, particularly in the cyber domain. Part of this is to formalize deception, more on the academic side, to also help grow the community of researchers considering this topic, and also to start thinking about how to build capabilities that can anticipate future automated threats.
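The fake-edge idea from the BloodHound example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the node names, the edge lists, and the two adversary behaviors (one that blindly retries, one that drops a failed edge and replans) are hypothetical and are not taken from the slide or from any real tool.

```python
from collections import deque

# Hypothetical edges loosely modeled on the example; names are illustrative only.
REAL = {
    ("alice", "client2"), ("client2", "bob"), ("bob", "domain_admins"),
    ("domain_admins", "admin_host"), ("admin_host", "domain_controller"),
    ("alice", "support_group"), ("win_box", "domain_controller"),
}
# The decoy: a planted claim that the support group is admin on win_box.
FAKE = {("support_group", "win_box")}


def adjacency(edges):
    """Turn a set of (src, dst) pairs into an adjacency dict."""
    adj = {}
    for src, dst in edges:
        adj.setdefault(src, []).append(dst)
    return adj


def shortest_path(edges, start, goal):
    """Breadth-first search; returns a list of nodes, or None if unreachable."""
    adj = adjacency(edges)
    queue, seen = deque([[start]]), {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in sorted(adj.get(path[-1], ())):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


def run(adversary_replans, max_attempts=10):
    """Simulate an adversary that trusts the advertised graph (REAL plus FAKE)."""
    believed = set(REAL | FAKE)
    failures = 0  # each failed move is also a detection opportunity
    for _ in range(max_attempts):
        path = shortest_path(believed, "alice", "domain_controller")
        if path is None:
            return ("no_path", failures)
        bad = next((e for e in zip(path, path[1:]) if e in FAKE), None)
        if bad is None:
            return ("reached_goal", failures)
        failures += 1
        if adversary_replans:
            believed.discard(bad)  # smarter adversary stops trusting that edge
    return ("stuck", failures)
```

In this toy graph the decoy path is shorter than the real one, so a shortest-path adversary takes the bait first. The non-replanning adversary never gets past it, while the replanning one still reaches the goal but only after a failed, observable attempt.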

One of the big things we want to look at, too, is how do we add deceptions to our environment to make them particularly challenging for an automated adversary? Is that different than what we'd want to do for a human? So this talk, just being very upfront, is fairly academic. We're presenting primarily preliminary work we've done in simulations. We've done a little bit with CALDERA, but really the bulk of the talk today is on that preliminary work run in the simulation space. Our goal, though, is really to help inspire others to at least start thinking: hey, how should we defend against automated adversaries? We think that this might be a problem in the

future, and if we can help inspire others to think about it, we think that'll be a big win. So our agenda: I'm going to hand it off to Ron in just a second, who's going to talk about some of the background and related research. Then we'll talk about some of these preliminary experiments we ran, what the context was and what the results were, and then dial back for future work and some conclusions and takeaways. So that said, I will hand it over to Ron to talk more about the background for what we're looking into. Thank you, Andy. First, we're going to start off with just thinking about what it means to be acting in a

cyber environment and what types of things we're worried about. Next slide, Andy. Okay. First, a big disclaimer here: we're definitely not the first people to think about deception in cyber environments. There are lots of people working on deploying honeypots on networks, which are for adversaries to move into so that we can observe them there and see how they're acting; decoy file placements, where we can monitor files that are touched, accessed, or modified; placing decoy credentials; or just network obfuscation, so that DDoS attacks don't work as

well. Not only that, there's work on putting all these pieces together so you can automate deception operations against an adversary. This is an example of one toolkit. But these are mostly designed to be deployed against human adversaries that we expect to be coming onto networks. There's even more advanced stuff coming down the line, especially in the realm of content generation for these networks. You've probably seen these toy domains. I'm not quite sure how we

might deploy fake Airbnb listings to deceive adversaries on the network, or this monocle guy in the center, but if we can generate believable fake code to put places in our networks, that could keep our adversaries, at least the human adversaries, entertained for a very long time. Will it keep automated worms entertained? Well, probably not. Maybe they'll suck it up; maybe it's good for that. Okay, so let's think about how an adversary is going to work in an environment. So, next slide, Andy. Well, actually, back up maybe

a step. So how does a human work on our network? No, sorry, not you, Andy; me, back up a step. A rhetorical step. So how do humans behave in the environment? Well, humans are deliberative creatures. We might be a little slow in moving through a network as a red team, but we can be innovative and take steps that someone else hasn't seen before, and piece together two separate components to have a new effect that we want on the network. We're great at pattern recognition. If we see a line of

hosts, we might be able to match up hosts to users. We might be able to guess passwords in certain cases, especially if we can pull up biographical information on users on a network. And critically, especially compared to computers, our ability to detect anomalies, such as hosts that are just really out there for the network we were expecting, is that much better than current AI systems. Also, cyber adversaries can get irrationally mad at you. If they're going around and they get the impression that, as a defender,

you're just kind of making fun of them in the environment, by switching things around or by creating deceptions that are just really blatantly obvious, they could just decide to nuke the domain. So if this is a human, what is a computer? Well, obviously, if I made a list, it's the opposite of that list. Computers are very fast at applying actions in a domain, but what they do is prescribed by what the programmer put in there. Pattern recognition is getting better on computers, but anomaly detection stinks.

And a nice thing, especially if you can isolate some cyber adversary software, or just make something for yourself as a stand-in, is that you can run it over and over on various iterations of a network and it will never get mad at you. So this provides an opportunity for testing that we don't have with human red teams. Now we can look at the type of environment that an automated adversary might be working in. Let's look at a particular scenario. So

we have a host that we've infected. It has a remote access tool running on it that can run code for us, and we've identified sensitive information on another host. We want to exfiltrate that sensitive data from that secondary host, and to do that we have a suite of techniques that we can apply, from exploiting a vulnerability in a certain piece of code, to remote execution under certain circumstances, dumping credentials, copying files, and finally our exfiltration technique. We want to be able to piece together all these separate techniques into a program, an automated operation, to

go for it. Well, the first technique, which we've seen some of in the wild, is finite state machines. A finite state machine is just that; you've seen it before. Our machine starts in a certain state, it looks at the environment, and it has a prescribed output for a given result from its last action. So we can take four of our actions and make a machine out of them. We start off with that: dump credentials on a box.

We can mount a file share after that, we copy a file, remote execution, rinse and repeat over and over until we get to the right box, where we can apply our data exfiltration, and then we're done. That's great. We've made a nice, neat little machine that automatically exfiltrates data from a network, assuming that this is all we need to do on the network. But what if our networks are more complicated than that and we need our other set of actions? Well, now we have this finite state machine where it's not really clear where these other ones will fit in. We

might have to, I mean, there's going to be different paths, there's different ways to weight them, and maybe we still want to optimize, and it just gets really problematic to plug this into a state machine. This is where the field of automated planning comes in. It provides a really tempting tool to apply to building automated cyber adversaries for people to use. So, a quick short take on what automated planning is. One, it's a pretty big subfield of AI.

It's centered around sequencing actions, and the central philosophy of it is that what the user describes is the physics of a domain. So we describe what actions the agent can take; preconditions, which are what must be true in the world for those actions to be applied; what the effect of those actions is on the world, so not necessarily how it changes the world but what the world looks like after that action happens; and a goal state of the world that we want to achieve. The automated planning system is meant to find a sequence of actions that takes us from

the initial state to a state of the world in which our goal is true. It's been applied in a lot of different places. A kind of famous example here is the Hubble telescope. Hubble gets requests from scientists, way more requests than the number of observations it could ever take. They have to consider constraints on its motion, how much power it can use up, thermal constraints, whether the Earth is in the way, whether the transit path to a given observation points at

the sun, which would be unfortunate, the angular distance, and how long it has to sit there. Then we have to take all those constraints and try to optimize a sequence of observations to take over a given period of time. We have all these different options, and we want to get the maximum science out of this telescope over a given period of time. So, next slide. What does this look like in the cyber domain? Well, we have our various different actions, so let's look at the copying of

file action. What do we need to copy a RAT over from host one to host two? Well, we need to have a working remote access tool on host one, we need to have a mounted file share on host one from host two, and we have to have write access to that file share. This is what is called a precondition, or a requirement, for this action to take place, going back to the automated planning terminology. Okay, so once we execute that copy action, we expect that there will be a new file on the target host, and it will contain

the RAT. This is an effect, or a consequence, or a postcondition. There's a lot of different words, but this is what the action makes true in the world afterwards. If we take a whole bunch of these together, we can try to sequence them to act. So let's look at this copy file action. Let's say our goal is to copy a file over; we'll look at the other ones shortly. Copying a file gets the file on the target, but it needs to have a mounted file

share. To get the mounted file share, we do our mount share action, which mounts the share but needs credentials. To get the credentials, we use our credential dumping tool, which gives us credentials but needs a remote access tool with elevated permissions. Together these three actions form a plan. As long as our initial state of the world is what we think it is, with an elevated remote access tool on a machine that has proper credentials, this plan will get us to our goal state of the world of having a file on the target host. So, next slide. But in the cyber environment,

especially as our abilities and techniques get more complicated and we just get more of them, we might have more than one plan. So we have this set of hosts, and dumping credentials, mounting a file share, and copying a file is one way, and maybe another is exploiting a vulnerability and running remote desktop. So we not only have to look at a given plan, we might have to look at multiple plans. So, Andy, next slide. Here we might have three

different plans. They're different lengths, so they might take different amounts of time. We might be worried about different levels of observability from the blue side analytics, or there just might be different probabilities that a plan will actually work. We might have different confidence in each of these plans. An automated planning system is able to run through these different sets of actions and produce sets of plans that it can sort through based on our criteria, pick out, and then provide to us. And

so then we can try the best plan, and if that fails we can try the next plan. These automated planning tools give us a way to think about having lots of plans available to us and how we might prioritize those. So, next slide. Just talking about some computational aspects: planning is very, very hard from a computational standpoint, at least in worst-case complexity. It's PSPACE-complete for propositional planning. If you don't know that term, that's okay; you don't need to really know

those words. What it means is that, in the worst possible case, we might have to iterate through every possible state of the world that we think we can reach in order to find a plan. And if that wasn't bad enough, there are different things that make it much, much worse. Say we don't control the output of our actions: we know that they might fail, or they might return different types of things. Or maybe we don't know everything; maybe there are important things about the domain that we can't see and can only infer by completing multiple actions. Or maybe

there's another actor in the environment that's working against us, or maybe they're working with us. All these different attributes make planning exponentially harder, and some of these can make it just unsolvable. So a successful application of automated planning in a domain means making careful use of the features that you care about. If you can ignore non-deterministic actions, if you say, okay, I know this action can fail, but I'll just handle it at run time, and if it fails I'll just ask the

planner for a new plan, instead of rigorously planning for all outcomes at plan time, that makes it much easier to do. Okay, so next slide. Now, fortunately, in practice, despite having this terrible worst-case complexity, people have found ways to make the problems that we typically look at easy to solve. Every couple of years there's this big International Planning Competition, with lots of different tools tested on lots of different domain features and lots of different domain structures, and for the most part we're able to solve those problems and solve them relatively quickly. In fact, you can pull down lots of the open source code for it.

Plus, cyber planning problems end up having a much simpler structure than what a lot of these planners work on and what this competition focuses on. So there's plenty of reason to think that we can solve these problems quickly. It's not going to be a computational burden, even though some of the theory says it might be. With that, I think I'm going to hand it back to Andy. Sorry, I have one more on this. So, deception. The existing research on cyber deception has really been focused mostly on

people. It's been taking honeypots and asking: how do you design a honeypot to fit in within the network? How do you distribute them within the network to make sure that they have properties that match the hosts around them? And on the inverse side, as an adversary, how do you look at a honeypot or a network obfuscation and say, hey, look, that's fake? How do you detect that? It goes back and forth in the research. The gap here for looking at automated adversaries, which we think is

coming, is that when they do come, they're going to introduce their own attack surface, because they're going to have some decision procedure, and that decision procedure, how they choose their actions, is going to add some complications. So can we use that against them? Can we slow them down by introducing domain features that distract the decision procedure? If they're simple enough, can we get them stuck in loops? Can we make them accomplish our goals by making them trip sensors and analytics as they move through

the environment? Or can we redirect them over to honeypots, where we can safely observe what they're trying to do and their techniques? Now I think I'm handing it back over to Andy. Thanks, Ron. So yeah, I'm going to dive back into it and talk about some of our experimental results. The big question we're trying to look at is: how robust are automated planners to changes in a cyber domain? Of course, the key word there is automated planners. I know Ron has talked a lot about them, but I want to take a second to quickly describe what an automated planner is. Really, the simplest way to

refer to a planner is that it's a domain-independent program that takes as input a machine-readable description of actions, a start state, and a goal state, and outputs the sequence of actions to achieve that goal starting in that initial state. Usually what happens with automated planning is you have this machine-readable format. I'm just going to call it PDDL for now; it's a topic for a separate talk. It allows you to specify your action descriptions: the preconditions, the postconditions, the parameters. We refer to this as the domain. You also have a machine-readable description of the environment. We refer to this as the problem.
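To make the domain and problem split concrete, here is a minimal sketch of a planner in Python rather than PDDL. It is an assumption-laden toy, not the planners used in the experiments: the fact names are invented, actions only add facts (no delete effects), and the search is a plain breadth-first search over sets of true facts. The three actions mirror the dump credentials, mount share, and copy file chain described earlier in the talk.

```python
from collections import deque
from typing import NamedTuple


class Action(NamedTuple):
    name: str
    pre: frozenset   # facts that must hold before the action can run
    add: frozenset   # facts the action makes true afterwards


# "Domain": what the agent can do. Fact names are hypothetical.
DOMAIN = [
    Action("dump_credentials",
           frozenset({"elevated_rat_on_host1"}),
           frozenset({"have_credentials"})),
    Action("mount_share",
           frozenset({"have_credentials"}),
           frozenset({"share_mounted"})),
    Action("copy_file",
           frozenset({"elevated_rat_on_host1", "share_mounted"}),
           frozenset({"rat_file_on_host2"})),
]

# "Problem": the initial state and the goal for one specific environment.
INIT = frozenset({"elevated_rat_on_host1"})
GOAL = frozenset({"rat_file_on_host2"})


def plan(actions, init, goal):
    """Breadth-first forward search; returns action names or None."""
    start = frozenset(init)
    goal = frozenset(goal)
    queue, seen = deque([(start, [])]), {start}
    while queue:
        state, steps = queue.popleft()
        if goal <= state:            # every goal fact is true
            return steps
        for act in actions:
            if act.pre <= state:     # preconditions satisfied
                nxt = state | act.add
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append((nxt, steps + [act.name]))
    return None


if __name__ == "__main__":
    print(plan(DOMAIN, INIT, GOAL))
```

The same `plan` function knows nothing about cyber operations; swapping in a different list of actions and a different initial state and goal is what "domain independent" means here.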

And using those two, the planner is able to come up with a plan, spitting out the action sequence that gets you to the goal that you're looking for. This is a gross oversimplification, but one of the ways that planners work is they effectively take this machine-readable format, construct a graph from it, and then traverse the graph to find the actions that achieve the goal. It's that graph construction that's the real complexity here. Now, of course, that's an oversimplification, and just to make sure no one's walking away with just that as what planning is, a couple of quick notes. This is for

simplification. There's tons of variation in planners, and a lot of it is really in how they ground things and how they search for things. And lastly, domains and problems are small, but the resulting graphs can be huge. One analogy I'd put there is chess: we all know the start state, we know what the end state is, but coming up with the sequence from the start to the end is the big computational question. It's a very large graph. So, with that little bit of extra background, our experimental idea is relatively simple. We want to leverage something called the CALDERA enterprise domain specification.
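The "small problem, huge graph" point can be illustrated with some back-of-the-envelope arithmetic. The sketch below assumes a hypothetical action schema `move(src, dst, cred)` and counts how many concrete (ground) instances it has for a given number of hosts and credentials, plus the naive upper bound on states for a given number of true/false facts. The numbers are made up for illustration and are not from the experiments; real planners prune heavily and never enumerate all of this.

```python
def ground_move_actions(hosts: int, creds: int) -> int:
    """Ground instances of a hypothetical move(src, dst, cred) schema.

    Every ordered pair of distinct hosts, combined with every credential.
    """
    return hosts * (hosts - 1) * creds


def state_upper_bound(ground_facts: int) -> int:
    """Naive upper bound on distinct states: each fact is true or false."""
    return 2 ** ground_facts


if __name__ == "__main__":
    # Hypothetical network: 10 hosts and 5 credentials.
    print(ground_move_actions(10, 5))
    # Same network after planting 10 decoy hosts and 10 decoy credentials.
    print(ground_move_actions(20, 15))
    # Even 20 boolean facts allow over a million states in principle.
    print(state_upper_bound(20))
```

Under these assumptions, doubling the hosts and tripling the credentials takes the schema from 450 ground actions to 5,700, which hints at why modifying the topology could affect a planner's run time.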

This is in PDDL, and I'll provide a little more detail on that in a couple of slides. We select a specific problem, a specific enterprise representation, to focus on, and baseline how long it takes the planner to solve that problem. Then we make modifications to that problem, re-running the planner to measure total computation time. We want to answer a few different things by doing this. Of course, we do want to kick the tires and just see what happens; it's always fun to do that. But we want to identify what types of modifications work best to deceive planners. We also want to try to feel out how much effort we

need to put in to make a modification; I'll give some examples of that as we go through this. We also want to know whether the modifications or deceptions that we add work against multiple planners, or whether we have one deception for one planner and another deception for another planner. Another question is how important it is where we put the modification. This is big. You would think intuitively that if you put the modification in line with where the attack path is, it's most likely to have a bigger impact, but we want to double check and make

sure you know experimentally then lastly is what's the maximum possible slowdown we can have against the planner i guess maximum possible is a bit of a lofty goal uh but certainly at least to try to understand how much of a slowdown you can get against a planner and before getting into it a few disclaimers first we are assuming by running this that an adversary is using a domain independent planner these planners that we're going to talk about are ones that you can use for what we're doing here but you can also use them for say um you know solving the game of snake um you know kind of anything represented in

pddl so it's a relatively big assumption but it is important for us to kind of you know really see what the mechanics of what we're looking at are here we also assume the adversary plans all at once never updating the plan in reality an adversary is going to execute something get an observation then replan um so this isn't necessarily the most realistic but again we still want to feel out these waters and lastly i would just add we aren't necessarily deceiving the adversary but we're modifying the topology it's a bit of a difference um but it's kind of important as we think about how to deceive well how do we actually

modify the topology right because the deceptions are going to modify the topology ultimately so that said a few kind of quick details about the caldera pddl domain basically it represents a very basic caldera adversary profile it scans hosts it dumps creds mounts shares uses remote wmi for lateral movement if you want to go use caldera and use something representative of this the adversary profile is alice 2.0 um should be out there in stockpile the domain represents a few kind of core objects you have windows domains hosts and then users with their credentials and there's about 30 different predicates that describe various features within the environment that we care about you know things like

status you know what's caldera's state of compromise things that just detail like hey this host has a host name and then relationship predicates say this user is an admin on this host and what's cool about the caldera domain is that it's monotonic and delete free which really here means that adding information does not cause contradictions so as long as there's a valid attack path in the problem we start with as long as we're only adding information we're never going to remove that attack path we're never going to cause a problem to be unsolvable there will always be a solution and then i'd also just kind of point out that this was used as part of the 2018

international planning competition so this one is also available freely online and so to give a very rough example um of what the action flow looks like here we have host one um we're gonna discover all hosts as the first command we're gonna see hosts two three and four we're then gonna dump creds on host one we're gonna get rick and rachel because they have active sessions we're gonna probe for admins on host four roy is an admin there can't do much with that info probe for admins on host three we see rachel's an admin on three since we've got rachel's creds we can laterally move over to host three we dump creds on three we get nothing

we then probe admins on two we get rick and chris as the admins there we've got rick's credentials so we can laterally move to two we dump creds we get roy and wilbur and using roy we get over to host four and then there's a couple of other things that because we're planning here and being a little bit more intelligent you know we don't ever say you know scan host one for admins just because that piece of information isn't really relevant for us so that said a little bit more about our test setup we created a set of about 25 different modifications you know things like adding fake users adding fake credentials fake hosts

things like that for each trial we record the total time the plan length and some metadata we're not going to get into for this talk each modification we run multiple times to hedge against some potential randomness because we do make some random decisions here we're just doing 10 trials per modification we did test it on a variety of caldera problems here we're just focused on problem number eight and then we also were able to test on two public you know fairly high performance planners called fast downward and probe and so here are the results there's nothing there um no i'm kidding uh we're gonna walk through each of these kind of quickly but on the left

is the modification and the middle column is fast downward just the time the standard deviation across the trials and the average plan length and then the same for probe when we talk about unmodified we can see fast downward versus probe 67 seconds for fast downward probe only took 41 seconds probe also came up with a plan of length 24 versus 26 so probe here was both faster fairly significantly faster and also more efficient it came up with a better plan the second modification we're going to talk about is adding new non-admin users just as cached on hosts and here what that looks like is we took that kind of starting example just

a bit of it just for demonstration detail and you can see in red we've got user c user a and user b just added as having active sessions on these hosts it doesn't change the topology at all it's just an extra bit of information and we can see fast downward doesn't really do much with the info same plan length three seconds more it's not a lot but probe takes eight seconds longer same plan length but that's like a twenty percent increase which is pretty large the third one um existing user cached on a random host so here we're just going to say like hey here pris is selected randomly and now

has an active session on host one doesn't really change the topology much at all um for some reason fast downward just explodes here we chose our host randomly we're still trying to figure out exactly why it was doing that but you can see the time on average was up a little bit over two minutes with a standard deviation of 200 seconds whereas for probe the time actually went down which does make sense because you're going to cache more credentials number four is we're going to make an existing user an admin on a random host so here we're just going to say roy is now an admin on host 3. it'll give us a lateral chain from 2 to 3.
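As a rough illustration of the "monotonic and delete free" property mentioned earlier, here is a minimal Python sketch over a toy version of the four-host walkthrough network. The fact tuples, the `compromised_hosts` function and the two-rule model (dumping cached creds, moving laterally as an admin) are assumptions made for illustration only; they are not the actual Caldera PDDL predicates or actions.

```python
from typing import Set, Tuple

Fact = Tuple[str, str, str]  # (relation, user, host); hypothetical encoding

# Toy facts taken from the walkthrough: who has an active session (cached
# credentials) on which host, and who is an admin on which host.
BASE: Set[Fact] = {
    ("cached", "rick", "host1"), ("cached", "rachel", "host1"),
    ("admin", "rachel", "host3"),
    ("admin", "rick", "host2"), ("admin", "chris", "host2"),
    ("cached", "roy", "host2"), ("cached", "wilbur", "host2"),
    ("admin", "roy", "host4"),
}


def compromised_hosts(facts: Set[Fact], start: str = "host1") -> Set[str]:
    """Fixed point of two rules: dump creds on owned hosts, then move
    laterally to any host where a known credential is an admin."""
    owned = {start}
    creds: Set[str] = set()
    changed = True
    while changed:
        changed = False
        for relation, user, host in facts:
            if relation == "cached" and host in owned and user not in creds:
                creds.add(user)
                changed = True
            if relation == "admin" and user in creds and host not in owned:
                owned.add(host)
                changed = True
    return owned


# Modification four from the talk: an existing user becomes an admin elsewhere.
modified = BASE | {("admin", "roy", "host3")}

before = compromised_hosts(BASE)
after = compromised_hosts(modified)
print(sorted(before))
print(before <= after)  # adding facts can only grow what is reachable
```

Because neither rule ever removes a fact, a goal that was reachable before a modification stays reachable afterwards; what can change is how much work a planner does to find the path.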

nothing too fancy but when you put fast downward through it you get maybe one second increase you do get some standard deviation a bit of a jump there but not not a huge change and then for probe it gets a little bit slower again you've got some deviation there but you know really i'd say that this trial didn't have much of an impact on either planner now we get to some of the more fun ones um the first is adding a small disconnected network so we're going to take this kind of start diagram and then move host one up and then we've got host x added who has admin user a and user b totally disconnected from the goal

totally disconnected from the rest of the network but interestingly enough it causes fast downward to basically double its total time and come up with a longer plan to solve this which is fairly interesting and probe it also causes a relatively significant increase that's 15 seconds against 41 um you know unmodified that's pretty good now it gets even more fun we're going to add a medium disconnected network and what's going on here is we're going to add three of these hosts kind of in a little ring format where x gets to y gets to z again totally disconnected um you know it shouldn't really impact the actual path here now the time for fast downward it

increases even more 168 seconds now versus the 128 for the small disconnected network here we see interestingly enough the plan length is 26 as opposed to 28 for the small disconnected network still trying to figure that one out too and for probe we got nothing probe actually can't solve this it crashes every single time we try to run the medium disconnected network case and then second to last we've got a medium connected network and here it's kind of the same as before where we're adding these three hosts but now we're connecting it by saying that rick is an admin on host x so now there's a chain one to x to y to z
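One hedged way to see why extra hosts can matter to a planner even when they sit off the attack path is to count how many ground actions a naive grounder could produce. The sketch below uses three made-up action schemas and made-up object counts; it is not the Caldera domain, and it is not a claim about why Fast Downward or Probe behaved as they did in these trials, since real planners prune many of these groundings.

```python
from math import prod
from typing import Dict, Tuple

# Hypothetical action schemas: name -> parameter types.
SCHEMAS: Dict[str, Tuple[str, ...]] = {
    "dump_creds": ("host",),
    "probe_admins": ("host",),
    "lateral_move": ("user", "host", "host"),
}


def ground_action_count(schemas: Dict[str, Tuple[str, ...]],
                        objects: Dict[str, int]) -> int:
    """Upper bound on ground actions: every schema instantiated with
    every combination of objects of the right type."""
    return sum(prod(objects[t] for t in params) for params in schemas.values())


# Illustrative object counts only: four hosts and five users before,
# three extra hosts and two extra users after adding a fake network.
baseline = ground_action_count(SCHEMAS, {"host": 4, "user": 5})
with_fake_network = ground_action_count(SCHEMAS, {"host": 7, "user": 7})

print(baseline, with_fake_network)
```

The count grows polynomially in the number of objects for each schema, so a handful of fake hosts can multiply the raw search space even though the shortest attack path is unchanged.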

and interestingly this again is still a slowdown for fast downward um only three seconds more than disconnected which is somewhat interesting and probe can't even run it and then the last one is something silly just adding a bunch of new objects and we're not gonna connect them to anything or put any info in them it's gonna be here's w x y z v and a bunch of different users it's very bland it's not changing any topology there's no topology whatsoever and fast downward immediately recognizes this basically no change on the total time for it to solve it whereas for probe this is actually probe's slowest trial that it actually completes going from 41 seconds up to 74. so this causes a fairly significant

slowdown for probe which um is fairly surprising given that fast downward looks at it and it's like all right i don't care no big deal so let's talk a little bit more about some of these results and why they happened um you know the planner performance varied it's really interesting you know probe is really fast but it struggles with grounding the problem so if the problem is too big it's going to struggle to kind of you know actually solve it whereas fast downward is grounding it well but it's slower to actually come up with the plan so it's interesting to see those kind of varied approaches giving these varied results we also saw

that many of these one-off modifications that we did had inconsistent or little effect usually at least one of probe or fast downward solved them easily you know with some exceptions around like the medium and the small disconnected networks and a couple of others we didn't talk too much about today and probably most consistently um the most effective modification was indeed to add a fake network um interestingly connected versus disconnected didn't matter but this was just from a consistency perspective the most effective modification we could throw at the planner so a few takeaways just from the experiments themselves you know given the differences in planner methodology if you're thinking about or you're

worried about automated planners attacking your network your best bet from what we're seeing today is probably to bet on a portfolio of modifications probe just fell on its face when we threw noise at it whereas fast downward just you know breezes right through it um you know it's low cost to do that versus something higher cost like creating a whole other network and yeah adding an additional network that's likely the best way to slow down a planner based on these results but this of course takes a lot more effort than just adding stuff in active directory willy-nilly and it's a little bit more time consuming and what's interesting too is that things that might work against a human

you know overwhelming an adversary with objects right in the case of that last trial that fast downward just breezed through well i mean that's easy for some of the planners to solve it isn't necessarily harder a couple of notes on some future work we're hoping to do and some other interesting things that we found so far um you know first we do want to expand our experiments this is an active area of research we're trying to look into we want to run more tests more planners more modifications different assumptions about our attackers you know different models we also want to better understand why some of the planners struggle with some

modifications as i mentioned um we're still trying to figure out why say fast downward when you get the random user cached somewhere just said you know what i give up i'm going to take you know two minutes now you know we still want to better understand why some of these trials cause such interesting results we also want to target online planners planners that execute an action and then get a response and then maybe change their plan so that we can do more live deceptions as opposed to just topology modification and another big thing we're curious about is you know how do we deceive today's automated adversaries deceiving automated planners is great but it is a little bit far away

how would a simple automated adversary respond to deception can we break it can we force it down the wrong path can we build a detection and prevention mechanism you know can we do that easily to support them and also how would a simple adversary respond to some of our more interesting anti-planner deceptions and real quickly we actually have started a little bit down this path we've run caldera in some basic profiles to understand how some little deceptions might throw it off and here's an example where we ran caldera using this basic alice 2.0 credential set profile against 10 hosts set up so that there's only one real lateral movement path and we did it in two trials just one no

deception and one that had fake credentials added to the starting host and here's a little diagram fairly straightforward you can see we've got host one to two to three to four and we start on one we go through the ring it's fairly easy this took caldera unoptimized for speed so not as quick as it could be but it took 23 minutes and it executed 122 actions then over here we took the same network and just ran this cmdkey command on that starting host and that added this fake user and password kind of credential combo running caldera this way it now took 29 minutes executing 131 actions and those nine extra

actions all use the fake credentials and this is a fairly significant slowdown six minutes and nine extra things makes it easier for you to detect and so our hope is that with more testing we can better understand how caldera can potentially be a stand-in for today's automated adversary we also want to see if we can maybe make caldera a little bit smarter so that it could be a stand-in for even smarter adversaries and really allow us to better understand how to defend against both intelligent and unintelligent automated adversaries so with that um a couple of quick takeaways automated planning is cool but adversaries might think so too deception strategies versus humans might

not be the most optimal strategies versus bots deception against automated adversaries is an untapped research area we think there's lots of different subtopics you can get into we're really only scratching the surface here and would encourage others to also start thinking about it as well and then today you know kind of these simple deceptions are good enough versus things like caldera but you know tomorrow that might not always be the case so we do think that this is an important area of further research and with that um we put our contact info here um feel free to send us an email i'm on twitter too and thank you for listening
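To put the timings quoted in the talk side by side, here is a small Python sketch that turns them into slowdown ratios. It uses only figures stated in the talk; values marked "derived" are computed from a stated delta (for example "eight seconds longer" than 41 seconds), and the dictionary layout and function name are assumptions for illustration. `None` marks runs where Probe did not complete.

```python
from typing import Dict, Optional

# Unmodified planning times in seconds, as quoted in the talk.
BASELINE: Dict[str, float] = {"fast downward": 67.0, "probe": 41.0}

# Times per modification; derived values are baseline or prior time plus the stated delta.
REPORTED: Dict[str, Dict[str, Optional[float]]] = {
    "new non-admin users cached": {"fast downward": 70.0, "probe": 49.0},   # derived
    "small disconnected network": {"fast downward": 128.0, "probe": 56.0},  # probe derived
    "medium disconnected network": {"fast downward": 168.0, "probe": None},
    "medium connected network": {"fast downward": 171.0, "probe": None},    # derived
    "unconnected new objects": {"probe": 74.0},
}


def slowdown(modification: str, planner: str) -> Optional[float]:
    """Ratio of modified time to unmodified time, or None if no time exists."""
    seconds = REPORTED[modification].get(planner)
    return None if seconds is None else seconds / BASELINE[planner]


for name in REPORTED:
    for planner in BASELINE:
        ratio = slowdown(name, planner)
        label = "no result" if ratio is None else f"{ratio:.2f}x"
        print(f"{name:30s} {planner:14s} {label}")

# The Caldera fake-credential trial: 23 minutes and 122 actions without
# deception, 29 minutes and 131 actions with it.
caldera_time_ratio = 29 / 23
caldera_extra_actions = 131 - 122
print(f"caldera: {caldera_time_ratio:.2f}x time, {caldera_extra_actions} extra actions")
```

Laid out this way, the fake-network modifications give the largest ratios for Fast Downward, while the unconnected objects are the slowest case Probe completes, which is the "portfolio of modifications" point from the takeaways.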