Martin Holste - Beyond Math: Practical Security Analytics


good friend of mine. I'm always very, very impressed with this guy's knowledge of just about everything, whether it comes to Suricata, building PF_RING-aware applications, log searching, JavaScript; this guy knows it all. He's just an amazing, super smart guy. Those of you who are familiar with Security Onion, you use ELSA, so you can thank this guy for it. So, ladies and gentlemen, please join me in welcoming Mr. Martin Holste.

Thanks, Doug, the check's in the mail. I know a little bit about a few things, so I'm usually better at the lessons-learned kind of talk, and we'll go with that. How many of you guys saw

Patrick's talk just a little bit ago? That was an awesome talk. We didn't coordinate at all, and we should have, because I think this would have been a great collaborative double talk. He had really good evidence, I would say, to back up some of the things that he and I found independently, so it was really cool to see that the stuff I've had a hunch about and started looking into seems to pan out. That's important, because when I started on this talk a while ago, I started writing

it, I wasn't really sure. I felt like I had a good hunch and I felt like I was right, but I was kind of waiting to see if somebody else would come out and say, no, it's not true. And if there's something in this talk that you feel is not true, we can take questions at the end, or catch me after, if your experience is different, because we all only have our own experiences to go on. So it was kind of important to me that, the more I got into it, the more I found that to be right. So

that was kind of the big lesson for me: if you find something that you think is probably right, go for it. So how many of you guys run analytics in your shop? Several, okay, good. How many would say that you feel pretty mature in it? Okay, a little bit. Yep. I've given this talk a couple of times, and each time that seems to be about the response. I've been giving talks for a few years, and I've gotten a better response on this talk than on some of the others I've given because it seems to resonate a little bit more, and each time I find

out a bit more about why that is. It makes me feel good that at least we can talk about some of these things and get them out there, because in our industry there's just so much to know and understand that having a structured conversation about some of these tough areas is really important. If nothing else, I hope that's what you get out of it. You don't need to know too much more about me; Doug already went through that. There's my GitHub and Twitter handle, and I do work for FireEye. So a while back I had the privilege of talking to Anton Chuvakin, and he

sort of went more in depth on this; this is right off of the web. He's said stuff like this for many years, but he's been looking at analytics for Gartner for a while, and this was a quote that really stuck out for me. His gist here is that you already have enough tools to do your job; you're already trying to figure out all the tools you have, and if you throw another tool in the mix, you're probably going to get diminishing returns. Whether that tool is analytics or not is almost beside the point, but it certainly applies to analytics, because not only is it another

tool, it's a complicated tool. So his point is that we can't expect a security incident response team or SOC to have something thrust upon them and have it magically make everything better, and we really have to watch out for that. The interesting thing about snake oil is not whether or not the oil comes from snakes. Snake oil definitely comes from snakes; whether or not it provides any value is the question, and I think that is the fundamental question about analytics. It's not a question of whether the math is right or wrong, or whether the algorithm is executing correctly. The question is whether or not

that's going to help you find a bad guy, and that's what we want to look at. My thesis here, and I'm up for debate on it, is that, from what I have found so far, it's very difficult for analytics to provide value on their own. So why is it that security analytics need help? We have a number of challenges, and I think the easiest way to explain them is that analytics came from other industries that were using them successfully. If you're on, let's say, Amazon or Target or any online retailer, and you're looking at an item, chances are you're going to see at the bottom: you might also want to buy

this. Now, what is the cost for them of being wrong about that analytic? Not a whole lot, right? Either you don't click on it, which is not their optimal scenario but no big deal, or you do click on it and you're like, that's not really for me. So the risk of a false positive or false negative in that scenario is almost nonexistent. No one cares; it's just a shot in the dark, and if it works, it works. You can do all your A/B testing to try to drive that, but it doesn't really matter. The point is that analytics came from a place that was low risk, and we're trying to

apply it to an area that's high risk, and that's very challenging. So we shouldn't expect analytics to be the magic bullet, or say, oh, this will solve all our problems, or even a significant number of them. I think that's an important distinction, and there are a number of reasons for it that come out naturally when you compare security with the retail industry. There's the high cost of false positives and false negatives. But the other problem is that the work starts from a data scientist and the output is handed to an analyst, and that's very different, because in the retail industry it's going to

start from a data scientist, and then the same data scientist will interpret the results. Here we have this handoff that occurs, and we'll get into that a little bit. Then we also have some serious problems with data, and with the challenge of making that data useful for analytics. I wanted to use a specific example so that we're not just talking about the entire body of work all the time. I want to focus in on one example and try to show the pros and cons: credential misuse. Before we can even start doing that, we need to break down the

problem and understand how you would even go about doing it. There are a number of different things you have to do. To start with, you have to collect: you have to find all the credential uses, and that's a non-starter in some smaller environments. Just getting centralized logging and stuff like that can be very difficult. That's something that's near and dear to my heart, and I think there's a good answer for it, but for a lot of organizations it's still tricky. After that, you still have to identify the context. Within the context, you have to identify the actor and the target, and then finally derive intent, and the derivation of

intent is very difficult, sometimes impossible. But even taking that context and finding your actor implies that you know a lot about it. The other thing is that whenever you're dealing with analytics, this is all the stuff that already happened. All of your security did its job, for better or worse, so these are all things that passed: the firewall said it was all good, the login was authenticated, technically even authorized, all those kinds of things. Everything that already had a shot at knocking a potentially bad thing down didn't do its job, and this is what's left. We have to remember that. So we're trying to

figure out the three things: the target, the actor, and the intent. Breaking things down like this is really the fundamental process of making something proactive. Now let's look at the opposite; sometimes it's easier to describe something by saying what it isn't, so we'll start with what might be impractical. And again, if you've seen a situation where this worked: yes, there are situations where any of these things will work. The question is how often you should be trying to recreate that situation. So how about specific situations like DNS tunneling? It has a sparse business use case, so you're going to see

a lot more exploit kit activity than DNS tunneling. The analytic may work, but it's going to fire a lot less often. Whether that's more or less valuable depends on the organization, but from a work perspective, when you're asking what you should be going after, if you're looking at it purely by incident count, there are more incidents of this kind than that kind, so we have a pretty high opportunity cost for pursuing it. This is true not just for DNS tunneling, which is one example, but anytime you say, I'm going to build an analytic that goes after this one thing, you run the risk of spending a lot of

time on one thing that can shift, and things move very quickly in our industry. The other way an analytic might be impractical is that it takes a lot of tuning. It's not that it won't work; you can definitely do it. Data exfil is a good example. If you're looking at just traffic anomalies, the first thing you do is start tossing out the FTP server, your CloudFront, all that kind of stuff, and then you have to add it to the whitelist. Well, now you have a whitelist problem. It's not that it won't work, but it's going to take time, and there's a

lot of places where things can fail because you have more and more moving parts. It might also be impractical if it's cost-prohibitive. There are a ton of analytics that work really well on a million, a couple million, 10 million events, but when you get into the billions it's totally infeasible. One of the things I've seen is similarity clustering analysis. There are a lot of different ways to do it, and the majority require an N-by-N comparison, meaning all events times all events, so if you start with 100 events, you're now at 10,000 comparisons. That could be a real challenge to try to get from a
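To put numbers on the N-by-N point: a naive similarity pass compares every event with every other event, so the work grows with the square of the event count. This is a minimal arithmetic sketch; the function names are made up for illustration, and it counts comparisons rather than implementing any specific clustering algorithm.

```python
def all_pairs_comparisons(n_events: int) -> int:
    """Naive N-by-N similarity pass: every event compared with every event."""
    return n_events * n_events


def unordered_pairs(n_events: int) -> int:
    """Skipping self-comparisons and duplicate pairs only halves the work; it is still quadratic."""
    return n_events * (n_events - 1) // 2


for n in (100, 10_000_000, 1_000_000_000):
    print(f"{n:>13,} events -> {all_pairs_comparisons(n):.1e} comparisons")
```

Ten million events already means 10^14 comparisons and a billion means 10^18, which is why something that runs fine as a POC on a sample can be a very different proposition in production.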

POC into something that is going to work for the company all the time; you may be looking at a very different proposition at that point. And then, probably the most common: it's difficult to acquire the data, and even within that there are data quality issues. As I said at the beginning, I wasn't super sure about my position when I started on this talk, but I had a hunch, and I got way more sure when I came across this paper that I cite here. It's a paper that Google delivered in a lecture at Stanford in 2014, I believe, and they really underscored all the issues that I seem to have been

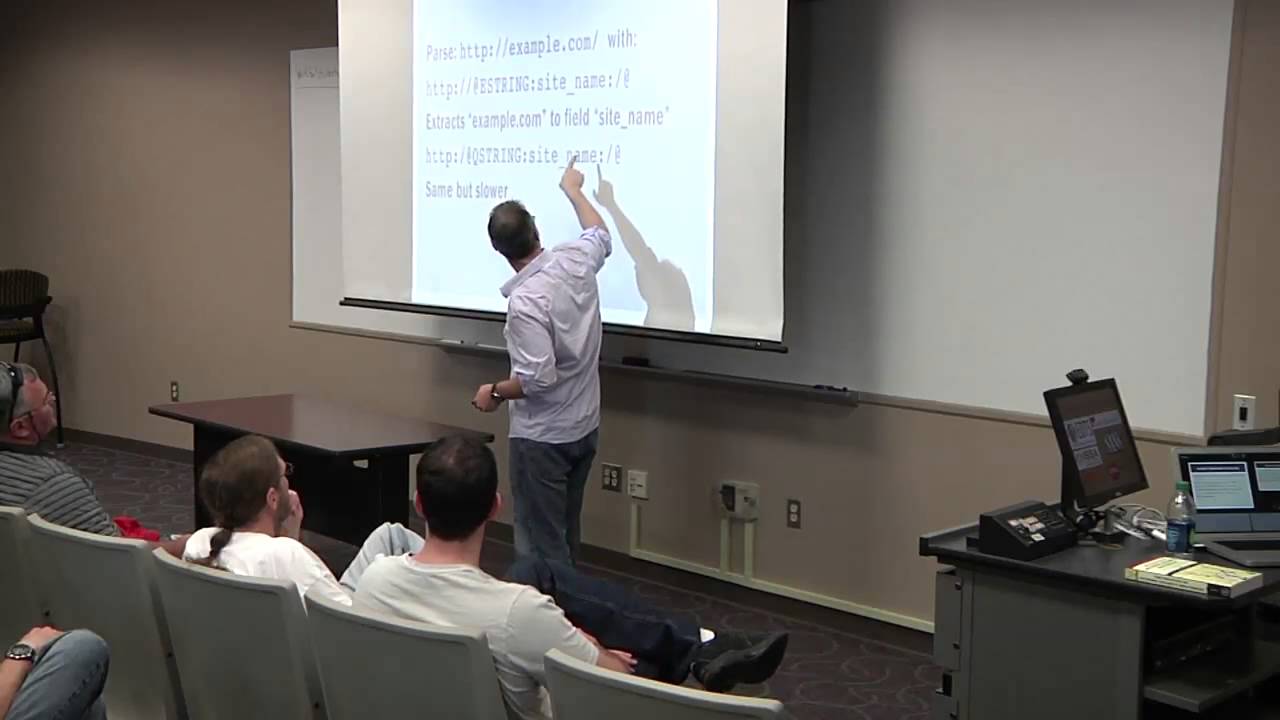

seeing, and it made me feel a lot more confident in my opinions. I'll have a couple of callouts, and they have a good diagram I'll show in a bit, but these are some of the things they saw that I've seen as well. Log normalization is completely underappreciated, for a million different reasons. Parsing is the first step, but even after that you have taxonomy issues, like DOMAIN\user versus just user. If you're trying to match up two sets and say, well, this is where the user was the same, but they're prefixed a different way, or they're coming from different systems where

maybe they have a middle initial, those kinds of things will trip up your analytic before you even start. So it's a real issue with acquiring the data. Timestamps are always a problem, multi-line logs are a normalization issue, and then there's just having the right fields to do the job. The other problem is being able to explain what happened: you're not going to have a very practical analytic for a security team if you can't explain what occurred. Neural networks are a prime example of this. They can do some really cool stuff; I've played with neural networks a little bit, enough to get sort of a POC
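The DOMAIN\user taxonomy problem can be partly handled with a normalization step before any set matching. This is one possible sketch, assuming account names are unique across domains; `normalize_user` is a hypothetical helper, not part of any particular log pipeline.

```python
def normalize_user(raw: str) -> str:
    """Reduce 'DOMAIN\\user', 'user@domain' and ' User ' to a bare, lowercase 'user'.

    Assumption: account names are unique across domains here. If they are not,
    split the domain into its own field instead of throwing it away.
    """
    name = raw.strip()
    if "\\" in name:                 # Windows style: DOMAIN\user
        name = name.rsplit("\\", 1)[1]
    if "@" in name:                  # UPN / e-mail style: user@domain
        name = name.split("@", 1)[0]
    return name.lower()


# Two made-up sources that disagree on format only match after normalization.
auth_log_users = {"CORP\\jsmith", "CORP\\mlee"}
vpn_log_users = {"jsmith@corp.example.com", "tnguyen@corp.example.com"}
overlap = {normalize_user(u) for u in auth_log_users} & {normalize_user(u) for u in vpn_log_users}
print(overlap)  # {'jsmith'}
```

It does nothing for the middle-initial case (jsmith vs. jqsmith); that still needs an identity mapping from somewhere authoritative, such as the directory.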

kind of result, and I'm like, oh, this is really cool. But then if you try to say, well, how do you know this thing is bad, and your analyst asks, well, why was it bad, it's almost impossible to explain, because the definition of why it's bad is the entire training set. It's not even about whether it's labeled or unlabeled; it's that the reason might be spread across two billion records. That's a very difficult position to put your security analyst in, and they probably won't be as inclined to ask for that kind of result in the future, and it just adds to the overall problem. This was called a

black box problem in that paper. The other problem is accuracy versus generality. You start with something and you're like, okay, this is pretty good. This is also called overfitting. I was talking at Security Onion; who all went to the Security Onion conference yesterday? Fair number, okay. So the guy talking about overfitting, this is [inaudible]. Overfitting is taking something that kind of worked to start with, and you're like, well, I'll tweak it this way, and tweak it this way, and tweak it this way, and now it's working, but now it's very

specific. Think about overspecialization leading to extinction; Patrick talked about evolution, and that's very true in this sense. At a certain point it's going to become less useful, right? Robin Sommer, of Bro fame, put it very well. He said you might notice that you now know enough about the activity you're looking for that you don't need any machine learning. What he meant by that was that by the time you've tweaked it so many times, what you end up with is a rule, not an analytic. Google called this... sorry. So when you have a ton of events

to start with, and you do a really good job and get it down to 1%, that's still a ton of alerts. Google found in an internal audit that they could always narrow it down to five to 1% of employees who were doing something they weren't supposed to do, but that's still 500 employees a day. They called this signal fatigue, and the way it manifests itself is just too many alerts for the SOC. Okay, so those are, I wouldn't say the things not to do, but some of the problems. Now let's look at attempting to make this practical, and if you're a

data scientist and you haven't gotten your pitchforks out already, you might start reaching for them now. These are the things where you'd say, okay, we're not going to do the things that we said don't always work; now we're going to try to make it practical. So here we go: let's try to solve a credential misuse problem with a standard machine learning approach. The first problem is getting labeled data sets, because, especially if you're in an IR organization, you don't really know what's in your very first set. You might go on the ground and there's already an incident occurring, so you have nothing to base things off of. Your ground truth

is contaminated. In any organization it's very difficult to say, yes, I am 100% sure that we don't already have beaconing or some bad activity going on right now, so saying that you're going to look for a difference between then and now definitely has its pitfalls. It's also very difficult to extract the right features. Another callback to Patrick's talk: he talked about strings, and how you have to convert those into numbers. That's very tricky to do. It can be done, but it takes a lot of time, and it's very easy to start putting too many features in, and the cost of it all goes up, and it

just keeps growing, and you start to get into this spiral of, oh, now I've got to do this, and now I've got to do this, and now I've got to do this. That goes back to the opportunity cost. So straight-up machine learning on it is actually pretty difficult. Same thing with predictive behavior. This would be taking the past activity for one user and saying, well, because they've done this in the past, we expect such-and-such in the future, and if it deviates by more than a certain amount, then we want to alert

on that. That works a lot of the time; back to Google's point, maybe 99% of the time that's right. But at any given point in time there are a number of people on vacation, and a number of people with something weird going on in their own lives; maybe a loved one's in the hospital and their logins look weird. Weird things happen to 1% of people all the time, which makes it actually normal to have 1% of things be weird, if I can put it that way. It's not malicious to have 1% of things being weird all the time, which means that within that 1% you have
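A minimal sketch of the predictive-behavior idea: compare today's value with the user's own history and alert on deviations beyond a threshold. Daily login counts, the population standard deviation, and k = 3 are illustrative assumptions; `deviates` is a hypothetical name.

```python
from statistics import mean, pstdev


def deviates(history: list[float], today: float, k: float = 3.0) -> bool:
    """Per-user baseline: flag today's value if it is more than k standard
    deviations away from that user's own history (e.g. daily login counts)."""
    if len(history) < 2:
        return False                  # not enough history to form a baseline
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return today != mu            # perfectly regular user: any change stands out
    return abs(today - mu) > k * sigma


logins_per_day = [10, 12, 11, 9, 10]
print(deviates(logins_per_day, 11))   # False: within this user's normal range
print(deviates(logins_per_day, 40))   # True: far outside it
```

Run across every user, some fraction will deviate on any given day for perfectly innocent reasons, which is the everyday 1% of weirdness described above; the output is better treated as a set to combine with something else than as an alert.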

that signal. And again, if you're trying to do that, you have every possible login and you're trying to look at what each login does now. That's not to say that you should never use those kinds of predictive behaviors or supervised learning. You can definitely use them, as long as you use them in a helpful manner. For anomaly detection, as long as you're willing to deal with the alerts from that full 1%, that's okay; that's better than 99%, right? So let's get to 1% and then let's do something with it. You can do the same thing with some clustering stuff. An

example would be net scoring: if you get more than five bad guys in the same /24, okay, we'll mark that /24 bad. Those are some basic things you can do. They're not silver bullets, and you're not going to make an alert on that, but they can definitely help. And that's kind of the foundation of practical analytics: saying we're going to do something that gets us closer, but admitting up front that it's not going to give us a bad guy right away. So what are the hallmarks here? It's easy to compute, it's easy to understand, and it's
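The net-scoring example fits in a few lines. This sketch assumes IPv4 addresses and uses the "more than five in the same /24" rule from the talk; the addresses come from the RFC 5737 documentation ranges, and `bad_slash24s` is a hypothetical helper.

```python
import ipaddress
from collections import Counter


def bad_slash24s(bad_ips: list[str], threshold: int = 5) -> set[str]:
    """Return the IPv4 /24 networks holding more than `threshold` distinct known-bad IPs."""
    counts = Counter(
        str(ipaddress.ip_network(f"{ip}/24", strict=False))  # 203.0.113.7 -> 203.0.113.0/24
        for ip in set(bad_ips)                               # de-duplicate repeat sightings
    )
    return {net for net, n in counts.items() if n > threshold}


sightings = [f"203.0.113.{i}" for i in range(1, 7)] + ["198.51.100.1", "198.51.100.2"]
print(bad_slash24s(sightings))  # {'203.0.113.0/24'}
```

The result is a fact ("this /24 has six known-bad hosts"), not a verdict, so it is more useful as one input set than as an alert on its own.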

easy to convey. If you can do all three of those things, there's a very good chance that what you're doing is going to be practical, that it's not going to take too much time, and that you're definitely going to derive value. I said I'd get back to that Google paper, and I thought their diagram was really good. The rules engine is on the bottom: it starts from the security team writing rules, they put them through the rules engine, and they get alerts out that the security team looks at. That works very well because the people who write the rules are the people who are evaluating them. But if you're starting

with software engineers, it goes through feature engineering, then feature extraction, then all that other stuff, and then you get alerts. As I said before, when you trace all that back, something gets lost in translation, and that's one of the big challenges. So how do we actually synthesize the practical stuff? Where do we start from? You start with your actual experience: I know what bad looks like because I've seen it a bunch, right? So you start with the knowledge. Then you have to add in appropriate amounts of data, and for a lot of places this is very tricky. Just getting large enough

sample sizes of anything is fairly untenable in a lot of smaller organizations. And then you need the technology to scale it, and that doesn't mean just building it once; it means running it day in, day out in a SOC-grade scenario, which is a lot different from getting it running on your laptop once. If you can do all three of those things, you're pretty practical. If you're working on something and you're like, geez, I don't know if this would work all the time, at scale, in our environment, it's not that you shouldn't do it necessarily, but it may be

impractical; you might not get all the value from it. So practical analytics don't flag anything as good or bad. They present facts. They're not opinionated; they just say what happened. It reminds me of the difference between Bro and Suricata in some ways: Bro will just describe what happens on the network, while Suricata will flag stuff as either hitting a rule or not hitting a rule. Practical analytics will not require ground truth, because of all the problems that go with that. And this is the key one: they're rarely actionable by themselves. I feel like they're often overlooked because some stakeholder comes in and says, we need a way to find this, we just saw this, I just

read about this, or the guys on the ground said we've been seeing this, build me something that finds it. That's not the right way to go at it; you need much more of a building-block approach. So what would be the actual answer here? It's a trick question: there isn't one, and "one" is the key word there. If you put some of them together, then maybe we can get there. So let's look at what happens if we take an actual practical analytic and try to use it by itself. Say, all right, it's practical to be able to say this login has never

happened before. It's really easy to figure out and everyone understands it, but it's very noisy because, like I said before, people go on vacation and novel situations arise. So saying that a login has never happened before starts out being practical, but you don't get much value out of it by itself. You could also say it's anomalous that so many things are happening at the same time, like spikes, right? Very easy to calculate; there are millions of tools that will give you spikes. But there are legit reasons for spikes, so you can't just say, well, there's a spike, so this is absolutely an incident. But we're getting closer, because we
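Both of these analytics, practical but noisy on their own, are only a few lines each. In this sketch, keying logins on (user, source) and calling anything over three times the recent median a spike are assumptions chosen for illustration; `FirstSeen` and `is_spike` are hypothetical names. The median is used instead of the mean so that one earlier spike does not inflate the baseline.

```python
from statistics import median


class FirstSeen:
    """'This login has never happened before': remember every (user, source) pair."""

    def __init__(self) -> None:
        self._seen: set[tuple[str, str]] = set()

    def is_new(self, user: str, source: str) -> bool:
        key = (user, source)
        if key in self._seen:
            return False
        self._seen.add(key)
        return True


def is_spike(recent_counts: list[int], current: int, factor: float = 3.0) -> bool:
    """'So many things at once': current count exceeds `factor` x the median of recent counts."""
    if not recent_counts:
        return False
    return current > factor * median(recent_counts)


seen = FirstSeen()
print(seen.is_new("jsmith", "10.1.2.3"))   # True: first time
print(seen.is_new("jsmith", "10.1.2.3"))   # False: already seen
print(is_spike([100, 120, 110, 90], 500))  # True: median is 105, 3 x 105 = 315
```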

fulfilled all those hallmarks, right? It's easy to compute, easy to convey, and easy to understand. So we've got something here. We need to be able to put those things together to get something that's definitely going to provide value day in, day out, and this is the foundation of set intersection analytics. I have to give some props to a local brewery in Madison, Wisconsin, where I'm a proud resident. They have a box of beer with this on the side of it, and I'm like, I must use this picture, this picture is amazing. I have that picture above my desk, and I just kind of look

at it: be the kitty. That kitty can do anything. Look at that thing; it can do anything. So I approach this like a lot of things, with modular building blocks: the Unix philosophy of analytics. Each analytic does one thing and does it well, and then we combine them like you would in a Bash script, with pipe, pipe, pipe, and hopefully we get something that's reproducible and valuable. One way you can think about it is something called strange pairs. Imagine you have two lists: it's really common to be on one, really common

to be on the other, and uncommon to be on both. Think of it this way: it's really common to be a quarterback, it's really common to be a wide receiver, and it's uncommon to be both. If you guys don't want sportsball references, just let me know; I can do Star Trek. The other major part of this is the assumption. It's not unusual to combine these things, but the novel approach here is that if you assume up front that you must combine them, it opens the door to going after things and explaining to people, no, I need time to work on this, even though you're not going to get an immediate result. It's
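Strange pairs reduce to a plain set intersection. This sketch uses the talk's sportsball example and then the same shape with two security lists; all names and accounts are made up, and `strange_pairs` is a hypothetical helper.

```python
def strange_pairs(set_a: set[str], set_b: set[str]) -> set[str]:
    """Members of both lists. Each list can be long and individually boring;
    the bet is that being on both at once is rare enough to look at."""
    return set_a & set_b


quarterbacks = {"alice", "bob", "carol"}
wide_receivers = {"carol", "dave", "erin"}
print(strange_pairs(quarterbacks, wide_receivers))  # {'carol'}

# The same shape with security lists (made-up accounts):
first_time_login_today = {"svc_backup", "jsmith", "mlee"}
out_of_office = {"mlee", "tnguyen"}
print(strange_pairs(first_time_login_today, out_of_office))  # {'mlee'}
```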

going to require patience, which is not usually what stakeholders want to hear, but you can start showing real value that way. So approaching something knowing that it's not going to give you a final answer is a very powerful, even if simple, statement. What does this look like as a flow? Can you guys read that in the back? Probably not. All right, on the left we have a detection objective: what are we trying to do here? In the middle we have a combination of set A and set B, which in this case come from events. Then you do a little bit of analysis; you might do

a little bit of filtering and thresholding; these are just statistical kinds of things. And then the output is an event. Back to not being opinionated but being factual: this is important, because events can be sent back through the whole system, so you get a commodity building block out of the whole thing. That means not only can you put two things together, you can put two things together at different points in time. So let's look at the advantages here. Specifically, are we addressing our signal fatigue? I am arguing yes. Now we have a way to deal with any

kind of lengthy output list, because we have a way to filter it. As an example, if you have a ton of service accounts and you filter to just first-time logins, suddenly you have a lot fewer service account logins to worry about, and you might just be under that signal fatigue limit. I was saying that this is a way to start approaching problems knowing you're not going to have a final answer in one step. This is basically saying that we're going to take stuff that was previously unviable and make it viable. Before, I was saying, oh, we can't use machine learning, it's not going to give you a final answer. It's
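A minimal sketch of the flow just described, assuming events are flat dictionaries: each stage consumes events and emits events, so stages can be chained like a shell pipeline and their output fed back in later. The field names (`user`, `host`, `tag`) and both stage functions are hypothetical.

```python
from collections.abc import Iterable, Iterator

Event = dict[str, str]  # assumption: an event is a flat dict of string fields


def first_time_logins(events: Iterable[Event]) -> Iterator[Event]:
    """Stage 1: pass through only the first login seen for each (user, host) pair."""
    seen: set[tuple[str, str]] = set()
    for ev in events:
        key = (ev["user"], ev["host"])
        if key not in seen:
            seen.add(key)
            yield {**ev, "tag": "first_time_login"}   # output is still an event


def only_users(events: Iterable[Event], users: set[str]) -> Iterator[Event]:
    """Stage 2: keep events whose user is in a given set (here, service accounts)."""
    for ev in events:
        if ev["user"] in users:
            yield ev


logins = [
    {"user": "svc_backup", "host": "db01"},
    {"user": "svc_backup", "host": "db01"},
    {"user": "jsmith", "host": "ws17"},
    {"user": "svc_backup", "host": "hr-share"},
]
service_accounts = {"svc_backup"}

# Pipe, pipe, pipe: each stage consumes and emits events, so stages compose.
for ev in only_users(first_time_logins(logins), service_accounts):
    print(ev)
```

Because the first stage adds a tag rather than a verdict, its output stays factual and can be stored and intersected with other sets at a different point in time.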

okay if it's a building block in the whole system. So it's not that the math is wrong or bad; it's just that it's only one piece of the overall puzzle. If you know that going in, you know up front: oh, we're also going to need to design this, we're going to need to have this kind of data, we're going to need to put all these things together. And this has a lot of repercussions. Suddenly data that was unimportant can become important, and you have an opportunity to take advantage of that. If you're looking at an overall set of events, knowledge that was

previously uninteresting for security purposes suddenly becomes interesting. The list of everyone who downloaded an EXE is not interesting. The list of everyone who downloaded a PDF is not interesting. But put the two together in the right time frame, and that might actually become interesting if you're looking at a PDF-to-EXE dropper scenario. These are just some ideas, but you can start creating your own laundry list of very-easy-to-collect things that suddenly become valuable when you look at them as small building blocks. You can use the output from other analytic modules. A list of users by job role, or just the list of people who have access to a
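The PDF-then-EXE idea is a set intersection on the user followed by a time-window check. In this sketch the (user, timestamp, filename) tuples, extension matching on the file name, and the five-minute window are all assumptions; `pdf_then_exe` is a hypothetical name, and a real proxy log might also warrant checking the content type rather than trusting the file name.

```python
from datetime import datetime, timedelta


def pdf_then_exe(downloads: list[tuple[str, datetime, str]],
                 window: timedelta = timedelta(minutes=5)) -> set[str]:
    """Users who downloaded an .exe within `window` after downloading a .pdf.

    `downloads` holds (user, timestamp, filename) tuples. The five-minute
    window is an illustrative assumption, not a tuned value.
    """
    pdfs: dict[str, list[datetime]] = {}
    exes: dict[str, list[datetime]] = {}
    for user, ts, filename in downloads:
        ext = filename.lower().rsplit(".", 1)[-1]
        if ext == "pdf":
            pdfs.setdefault(user, []).append(ts)
        elif ext == "exe":
            exes.setdefault(user, []).append(ts)

    hits: set[str] = set()
    for user in pdfs.keys() & exes.keys():        # users in both sets first
        if any(timedelta(0) <= e - p <= window    # then check the time ordering
               for p in pdfs[user] for e in exes[user]):
            hits.add(user)
    return hits


t0 = datetime(2015, 3, 2, 9, 0)
downloads = [
    ("alice", t0, "invoice.pdf"),
    ("alice", t0 + timedelta(minutes=2), "update.exe"),
    ("bob", t0, "report.pdf"),
    ("bob", t0 + timedelta(hours=3), "setup.exe"),
    ("carol", t0, "tool.exe"),
]
print(pdf_then_exe(downloads))  # {'alice'}
```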

transfer system, suddenly becomes really important. A list of people who are on vacation, based on Outlook's out-of-office status, could be interesting, even though by itself it's not that interesting, right? The list of previously compromised assets is one of my favorites: did they really remediate that box we said was hacked two weeks ago? Let's check on that thing. Top browsers by time spent browsing: at my last job we did a study for a couple of months, and we basically found that, and these are confirmed incidents, for every 1,000 unique web pages you went to, there would

be one incident. So it's basically Russian roulette with a revolver with a thousand chambers, or whatever you call it; it was just a matter of time. You could pretty much predict how many incidents you were going to have based on how many URLs there were, to the point where I actually had a quota for my guys: you don't go home until you've found me five incidents. And it actually worked out pretty well, but we could use some of these techniques. Then there's top bytes transferred, and some basic lists of IP information, this kind of stuff. If you have that

less as an on-demand lookup and more as a data set, that can become really valuable information. Then you can go a little bit further and combine those kinds of sets with profiling: asking very specific questions that aren't going to give you the answer "was this evil or not," but a building-block-style answer. So, a question you can ask that defines whether or not someone is an admin, and then a question you can ask that says, was this admin-like activity? By finding the set difference there, you can actually get something worth investigating. Okay, so if you
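The admin example is a set difference: people whose activity looks like admin work, minus the people known to be admins. The inputs here are made up and `admin_like_non_admins` is a hypothetical helper; in practice each set would come from its own profiling question, such as group membership for the first and RDP or other remote-admin usage for the second.

```python
def admin_like_non_admins(known_admins: set[str], admin_like_activity: set[str]) -> set[str]:
    """Set difference: who behaves like an admin but is not one on paper."""
    return admin_like_activity - known_admins


# Made-up inputs; each would normally be the output of a separate profiling step.
domain_admins = {"adm_jsmith", "adm_mlee"}
used_remote_admin_tools = {"adm_jsmith", "tnguyen"}
print(admin_like_non_admins(domain_admins, used_remote_admin_tools))  # {'tnguyen'}
```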

decide you want to do some of that profiling, it sounds like something pretty advanced, but not necessarily. You could pick just a set of websites, not Reddit, because that's a little bit more widespread, but if you go to Stack Overflow and GitHub enough, you're probably a developer. Maybe you go once or twice, maybe not, but you say, you know what, if you go 10 times in a week, there's a really, really good chance you're a developer. That's not some machine learning thing, right? That's like a SQL query. But now you have this list of people who are probably

developers, and that's really practical to do, and valuable. Did you do any kind of RDP? Good chance you're an admin. If you're constantly traveling all over, you're higher risk: you're a business traveler. Did you download a whole bunch of files? Well, by definition you're acting like archival software. Whether that's really what you're doing or not doesn't matter; by factual definition, that's what you're doing. So what we have here, with the framework of building blocks, is a bridge between the analytics and the analysts. When I was saying that the data scientists
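As the talk says, the developer profile is closer to a SQL query than to machine learning. This sketch uses Python's built-in `sqlite3` with an in-memory table; the `web_visits` schema, the two-site list, the ten-visit threshold, and the `probable_developers` name are assumptions for illustration.

```python
import sqlite3

DEV_SITES = ("stackoverflow.com", "github.com")   # assumption: the "developer" site list


def probable_developers(conn: sqlite3.Connection, start: str, end: str,
                        min_visits: int = 10) -> list[str]:
    """Users with at least `min_visits` visits to developer sites between two ISO dates."""
    rows = conn.execute(
        """
        SELECT username
        FROM web_visits
        WHERE domain IN (?, ?)
          AND day BETWEEN ? AND ?
        GROUP BY username
        HAVING COUNT(*) >= ?
        ORDER BY username
        """,
        (*DEV_SITES, start, end, min_visits),
    )
    return [username for (username,) in rows]


# Hypothetical proxy-log table, one row per page visit.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE web_visits (username TEXT, domain TEXT, day TEXT)")
visits = [("alice", "stackoverflow.com", f"2015-03-0{d}") for d in range(1, 8)] * 2
visits += [("alice", "github.com", "2015-03-04"), ("bob", "stackoverflow.com", "2015-03-03")]
conn.executemany("INSERT INTO web_visits VALUES (?, ?, ?)", visits)

print(probable_developers(conn, "2015-03-01", "2015-03-07"))  # ['alice']
```

Swapping the site filter for RDP or remote-admin events would give the "probably an admin" profile in the same shape.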

create these analytics and then hope that the output is valuable to the analyst, this is what gets you around that problem. You're still going to need some help running stuff like this at scale, from data scientists, or at least from having the infrastructure to support it. But by using that framework as the bridge between the two worlds, you can have a more meaningful conversation and more meaningful development requirements with them, because you can say, this is exactly what I need, and this is exactly what I need, and you'll know how they got that. That's the critical step. No analytics talk, I think, is complete without the obligatory

visualizations. For those of you who saw my talk yesterday at Security Onion, some of this will hopefully look familiar; that was on purpose. I think visualization really helps to make analytics usable, specifically in the early going, when you're profiling and trying to figure out what this even looks like. Now, I will give a shout-out to Edward Tufte, who has written a number of books; I have to recommend them all. Beautiful Evidence is the one I'm going to cite mostly here. I actually found it at the library, so some of those technical references you can get at the

library. If it's a choice between spending money on the book or not reading it, there is a middle ground. I do like the library a lot, so if you weren't going to read it at all, you might at least check the library. He has a few basic points which, as a PSA, I feel are important to say. Have readable, sensible labels. Add emphasis to important points: you're trying to speed things up for the user, right? You're trying to make it as quick as possible, so if there's something that you know is important, then make it important on the

chart; don't just put it up there. And don't use more screen space than is absolutely necessary. If you heard my talk yesterday, I have some thoughts about dashboards, namely that I hate them. I think dashboards are critical for getting you a budget, and that's what they're about. The reason I think that is, if you are going to a dashboard to see if something is wrong, you're probably looking for a spike or for something that has changed, and that is something that can be scripted, and then you can get back to doing something else. So that's my opinion on that. Sometimes you just don't have

the skills to do that in-house, and okay, I get it. But to me the goal should be that a well-oiled SOC does not have anybody looking at a dashboard; they should be responding to alerts as they come in. If you're management, I get it, so I'm not going to say don't make them; you absolutely need to make some of them. But as an analyst, I would find it unfortunate if you were spending a lot of time in dashboards. However, if you're going to make them, make them right. These are some of the ones that I hate, and the reason I hate them isn't because they look bad or anything

it's that you can't just look at them and have any sense of what's going on. You have to hover over every little thing to see what's in the legend, and that's not going to save anybody any time. That's just, okay, we have some pie charts. So this is why I'm such a fan of the Sankey diagram. A Sankey shows the same data that was in those pie charts, where you have subsections or sub-sectors, but it's going to show not only the amounts and how much they vary; it's going to show the connectivity between them. So it's this whole other dimension that it provides, and you can actually

read it. That's what I love about it. You can take one look at this and know, okay, that big thing in the middle has got a lot of connections. You can hover over it if you really need to see how much it is, but it doesn't really matter; you're not interested in the total amount. You can see from any one of those stacked bars exactly where the stuff is going. You can also save a lot of time with time-based controls: instead of having a user click a whole bunch of times, it's much nicer to save them a whole zoom-in. Right at the bottom you can see the entire time range, and if you

look at the top, you see a very specific section. So really, it's just getting out of the way. Can anyone tell me where this is from? Seen this before? This is the handwriting of Galileo, figuring out for the first time in human history that there are moons around Jupiter, and he did it with a little piece of glass in a tube, a quill, and paper. I think about that a lot because it's very inspiring, and it's inspiring because he was told the entire time that he was wrong. So what he's doing here is going out each day and plotting where

the planet Jupiter is, along with these funny little things that, on day one, he doesn't know what they are. But when he gets to about the 12th, 13th, or 14th day, I think, he's like, you know what, there seems to be sort of a corkscrew motion here; I think there's something actually orbiting this planet. The inspirational part aside, I thought this was a neat way of showing time-based data, especially as it changes. So I thought it'd be really fun to have a visualization that shows logins that way. If you're zeroing in on just a few users, I thought this would

be a quick way to show an anomaly, especially across days. Normally you see this in calendar form, and I think that can be valuable, especially if you line the days up by day of the week, but I think this really gets you a time-of-day comparison. I like this method. All right, so let's put it all together. Analytics are not magic, and we need to own up to that. So how would we do this with our practical analytics here? All right, what I looked at for this was a way to show this in sets with some sort of

visualization on it. There are six different lists there, and what I did for this was rig up backend queries so that whenever a box is checked up there, it would pull in that data and merge it together, increasing the counts. The x-axis along the bottom is the username, so everything in a column is the same user. The y-axis is the host that they logged into, and the size of the dot is the number of logins. So in

the lower left there, what you have is the same user logging in a whole bunch to one host, and then the orange dot above it is them logging in a little bit less, but still a lot, to a different host. Those straight lines across there are the same username logging in once to a whole bunch of hosts. So the idea was, all right, let's put a bunch of these building blocks together. We don't know which ones are going to give us something interesting, but we're going to provide a format where we can play our hunches very quickly.

So we can start by unchecking a few, and we'd say, all right, let's look at the intersection of power users, service accounts, first-time logins, and source code servers, and it gets a little bit more interesting. You've already ruled out the AD controllers and the web servers, so the big hitters are gone; you've accounted for those. But you're still left with a bunch: you have, what, four users to play with there. All right, so what if you go down even further? Now we're just looking at first-time logins to source code servers, and there's only one time that happened. Now you're out of that TV-static area and into something that you can

actually start to investigate. And maybe if this happens a lot, you could make a rule out of that, a repeatable process. So in conclusion, the best analytics are the simplest ones. The easiest way to put this: if your analytics start to look a lot like reporting, you're probably on the right track. That's the big takeaway. Reporting is very underrated for detection purposes, I guess, is the easiest way to say it. Then, assume that your analytics are going to need help, so make sure that the output from those analytics is something like an event that goes into your normal analysis workflow. I think way too often

you see the output from an analytic treated as a deliverable in and of itself, and you have no way to go further into it or to filter it. Next, that result needs to be easily communicated. That goes back to the previous point: if the output is just an event, it can clearly be communicated, because it's just like any other piece of data at that point. And finally, I think you need good visualizations at some level to be able to understand what's going on, and I think they're an effective interface, especially in the early stages, for playing with analytics and figuring out what's valuable. That's it. Glad to take

questions. Go ahead. Yes? "Do you provide feedback to the sensors themselves, or to the data sources, once you start going through the analytics? And the second is, how do you validate some of these sensors that are being patched and modified, to ensure that you're still getting data after the patch or change?" Okay, let me repeat the question to make sure I got it right. So, do you take the output from some of these analytics and then tweak the sensors that are creating the inputs so that you get better analytics? Well, not really, and that goes to the first part: if you have to tweak the input, then it's not as practical anymore. So I think the

best way is to try to sidestep that. That's kind of the point: if you're tweaking a lot of stuff to get your analytics to work, then it becomes impractical. Anyone else? There you go. "It seems like you've got the typical data science problem, where the data scientists don't have the domain knowledge and the analysts with the domain knowledge don't have the data science knowledge, especially with the Google paper you mentioned, where the feature set generation is done by the data scientists; that's the main one. Do you find that we're just not combining the teams right to get the analytics correct up front?" Yes, that's an excellent point and question. I feel that is strongly the case. I feel that a lot of times there's both

some siloing that goes on, I think, in most worlds. But beyond that, that's the point of that bridge picture: you need almost a language or a framework to communicate between the two disciplines, with each group meeting in the middle. As analysts, we're all smart folks; we can do some math, we can figure out some stuff, but we don't have all day to sit there and read research papers. So if you can get it to us as something like a standard deviation or an average, some statistical functions we're going to understand, if you can get it to us that way, we're good.
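To make the "standard deviation or average" idea concrete, here is a minimal sketch of one such statistical building block in Python. It assumes you already have per-user daily login counts; the function name `spike_events`, the 3-standard-deviation threshold, and the event fields are hypothetical choices for illustration, not anything shown in the talk. It emits plain event records, in the spirit of the earlier point that an analytic's output should be an event that drops into the normal analysis workflow rather than a standalone report.

```python
from statistics import mean, stdev


def spike_events(user, daily_counts, threshold=3.0, min_baseline=2):
    """Flag days whose count is more than `threshold` standard deviations
    above the mean of all earlier days for this user (a simple z-score).

    Hypothetical helper: returns a list of plain dicts ("events") that
    could be fed into an ordinary alert queue.
    """
    events = []
    # stdev() needs at least two data points, so never start before day 2.
    for day in range(max(2, min_baseline), len(daily_counts)):
        baseline = daily_counts[:day]  # only the days before the one tested
        mu = mean(baseline)
        sigma = stdev(baseline)        # sample standard deviation
        if sigma == 0:
            # Perfectly flat baseline: z-score is undefined, so this
            # sketch simply skips the day rather than guessing.
            continue
        z = (daily_counts[day] - mu) / sigma
        if z > threshold:
            events.append({
                "user": user,
                "day": day,
                "count": daily_counts[day],
                "zscore": round(z, 2),
            })
    return events


if __name__ == "__main__":
    # Hypothetical data: logins per day for one account over a week.
    logins = [4, 5, 6, 5, 4, 5, 40]
    for event in spike_events("svc_backup", logins):
        print(event)
```

With that sample week, only the last day (40 logins against a baseline of roughly 4 to 6) comes back as an event. A spike like that is exactly what you would otherwise go to a dashboard to eyeball; scripted this way, it becomes one more alert. Skipping flat baselines is a deliberate simplification; a real deployment would need its own rule for that case.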

And on the other side, they certainly know how to do that. So if you have the smaller building blocks that don't require nearly as much machine learning and training sets and those kinds of things, you're going to have much shorter, higher-quality conversations between the two. And once you start getting some successes there, I think the teams just naturally start to communicate more, and the problem starts to solve itself; the interdisciplinary knowledge sharing really starts to blossom. Great, great. One last question? More questions? Heckles are welcome, challenges are welcome. Yes? Go back

to the practical analytics slide? Sure. All right, while I'm doing that, any other questions? All right, I have a couple of giveaways here. I should have made this the question, but there was a previous slide that looked similar and also had three points on it, and it was about how you know if an analytic is practical. Related, but slightly different. There were three points. Okay, go. Yeah, yeah,

nice. Okay, I have one more book to give away, by another dear colleague, Richard Bejtlich. Let's see: what are the two kinds of machine learning? Correct. All right.