Comparing Malicious Files

Show original YouTube description

Show transcript [en]

hello everybody hello hello give everyone a moment to sit down

my name is rob simmons uh i go by utkanos which is uh duckbill platypus in russian if you speak russian there's a whole long story ask me ask me later about uh why why i have that handle so today we're going to be talking about comparing malicious files um and right now i am a independent malware researcher and i'm the former director of research from threatconnect so i want to focus the talk on a few problem statements and the first one is you know commonly known as the av problem so many av companies have their own unique nomenclature so when you submit a file to an av scanner or if the scanner finds a file on your

machine it will if it detects that it will return a result as to what it thinks that that file is and so that result varies across different uh scanner engines it also has various um uh you know unique unique nomenclature within one particular scanner and so this presents uh this presents a problem for using that data so even though it's very low quality data we can all admit that but i'll show you a few techniques for taking that low quality data and kind of squeezing a little bit of value out of it so there's also this is a related problem so the marketing problem marketing departments want to brand malware families they want to brand

vulnerabilities these days um these are for you know the uh to be famous for finding this first or for click bait you know who who knows exactly what the specific motivation is there but um that collection of motivations it's it's mostly uh driven by marketing and that's how you end up with uh very cute names like rockets and kittens and bears and pandas and all that sort of thing and then you end up with an additional layer of this where you have many many many different names for the same threat actor group you have many different names for the same intrusion sets and the same ttps actor groups and so it's hard to kind of pick these apart

and a little while ago i was you know i'm on twitter a lot reading all you know following uh various folks and robert m lee posted this this tweet which kind of hones in on on one of the reasons why number one you shouldn't really use these names you should because the names have the names that you see that are the outward facing names you know from a particular company those are a superset or a subset i should say of a larger set of just bins based on ttp malware behavior other indicators that are collected from that malware or the environment it's found in all these sorts of things and so the criteria that that company uses to make

those bins is probably unknown to you so you don't know how they're differentiating the different things that are coming in so they might not know the identity of a particular file but they'll place it in you know a temporary bin until uh more knowledge is gathered around what's in that particular bin and then maybe separating it into two you know two different bins and then at some point one of those will will get a more formalized name that they have honed in on an intrusion set or a threat actor and it's very specific and then you know that would become one of those more marketing term names so uh as as robert lee uh points out here

you should actually develop your own criteria for differentiating different malware files and how you cluster them how you differentiate them how you decide that they're similar or different and make that make that criteria internal to your own investigations and then you won't have to worry about coming up with names and and using other people's names you would then have a set of criteria you could take a data set that some company calls x uh you know x actor group or x malware family and you can compare that to your own internal criteria and see if it lines up with some of the bins that you're already collecting um and so that's a better way to to kind

of deal with this so there's also two two separate problems they're very closely related for and these are related to the work that we do in uh infosec and in malware analysis and reverse engineering so the first one is the researchers problem so when a researcher finds a new file that they've collected from you know wherever their collection systems are getting stuff and they want to know what it is they're looking at and they want to see is their previous research on this particular file or malware family threat actor group etc to determine is this a new attack is this something that i should spend time reverse engineering and deep diving and then perhaps publish information

publish a blog post publish something about it or share data about that particular new entity among the community there's a related problem so this is the incident responders problem and they're doing many of the same processes as a researcher but they have much less time so they're under the gun to figure out exactly what this particular implant or binary that's been collected off of an intrusion is related to what it is and one of the reasons why they want to find files that are related to it or figure out the malware family is to see is there research that's already been done on this and as opposed to what the researcher wants to know the

incident responder actually wants to see research that's already finished so they don't have to start at square one they can actually build on research that someone else has done about a particular malware family or actor group etc intrusion set etc so those are the two they're very closely related and you know the solutions for them are nearly the same so some of the methods here for sample identification for sample identification you need to just determine the malware family membership of a sample if possible you're not always going to be able to determine the malware family you could be looking at something that's a new variant it could be something that you know you've made a mistake and you might have

mislabeled it as a as an incorrect malware family but the goal is to determine the malware family membership of a sample and then once you know that you would you would hopefully have a larger set of samples there are public data sets there's one called the zoo which is hosted on github you can clone it and virustotal has a large data set various different repos or you have your own data set and what you want to do is take the file that you're looking at that's the unknown sample the one that's under that you're analyzing and then there are methods where you can compare that file to your repository and determine files that are similar or related in some

some of a various set of ways so i'm going to start with the low quality data that we were talking about earlier as far as av scanner results so if you take a particular file and you submit it to something like virustotal or total hash or the various uh online scanner engines where the file will be scanned against as many scanner engines as it as are configured there and so you get a big pile of results and one thing to note with the results is many of the companies that deploy uh that that write av scanners uh many of them actually share an engine and so if your calculus is to figure out how many you know what

is the detection percentage is this a highly detected piece of uh malware is it low detection knowing which ones are shared engines can help you correct for that for that data because if you if you count all of these as you know five or six different scanner engines detecting this you're you're actually going to throw off your results it's really just one that's detected it so shared engines are a problem another one is the development method for for signatures themselves so among av scanner engines they take different tactics as to what their their signature set does and how it works and how it's developed and so you have the generic generic generic signatures and generic

signatures could be easier to develop in certain ways but they cover a large swath of malware but they don't say exactly what it is it will say something like gen generic um and then the the the other end is a very specific specific signature so microsoft is fairly famous for their signature set and they're they get very very granular on naming the thing that you have submitted to uh to windows defender so here's a set of vendors that have usable results in my opinion so microsoft eset kaspersky sophos they all have fairly to different degrees fairly granular detection results that will either give you a place to start and then you can go look at the

the threat encyclopedias that they have where you can search for the result that you've gotten and see if they have a little bit more information about it so this is the technique i was just talking about so boiling down the results from a pile of unusable you know uh av scanner results boiling it down into something that you can use to go uh dig further into the you know the the literature and blog sphere and stuff like that to find research on a particular file so as you can see i've actually removed all of the generic results from this list so this is the this is this file and these are the results by the way av scanner results you have

to know the date of the av scanner result because the scanner result can change over time as av scanner engines update and change their you know removing of false positives changing of detections improvement of signatures so that the signatures will uh change over time so this is the this is the signature set what you want to do is make sure that you get the sneaky generic results removed you'll find zeus z-bot zeus those have kind of become generic results it's very unlikely that something is actually zeus anymore specifically so if you're seeing something like this it may contain some code that's a descendant of this but these are i consider these and a few others just

generic results so you want to remove those from your from your data set and so as you can see here these all have the same uh same result i'm going to count that b down in the end that might just be you know a little bit of a little bit of uniqueness that they've added to their own engine but it is still a shared engine across those uh scanners and then here we have a and avg they're reporting the same thing that appears to be another shared engine so after taking all of the commonalities from those results you end up with two two strings two scanner results isbar and simi and you know like i said

this is some this is very low quality but this gives you at least something which you can go to google or go to a threat intelligence platform or go to a malware repository and search for files that match these and see is this what i'm looking at or is it not and you know it can help you on your way to figuring out what you're looking at so another identification method or framework for identification is miter attack is who's familiar with miter attack awesome very very good so mitre attack is a framework of categorization for adversary tactics and techniques and it's an excellent first step and i say excellent first step it's actually very very good for

most of the things that you all are probably using it for now which is adversary behavior on the network pivoting um attack techniques and such but for malware classification it's not ready for prime time and it needs a lot of help so uh there are uh there you know the the former maec i think there's there's some uh i'm sure you could uh tell me more about this but i think there there's a lot more additions that are being put into attack including uh the idea of sub techniques so a technique is very uh generalized right now and so you'll see in a moment some of the sub techniques that i've actually identified and begun contributing to attack

so this is one specific one so the the the granularity of attack in this particular uh analysis area is is um not there yet but what you're looking at here is a structured exception handler so is everyone familiar with a structured exception handler there's a few okay in uh most programming languages i think most are all programming languages you have the idea and i'm i'm a python programmer so i'll kind of give this to you in the python ease but you have a try and an accept statement so you try something and then if it fails for some reason and generates an exception you would run code under the accept statement so in a windows world way

in uh windows compilers and sch what this basically does is the adversary creates a uh a set of code that they know is going to generate an exception so it will always generate an exception and then their malicious code resides in the structured exception handler so the uh you know the the binary will actually pass execution into the structured exception handler and then all the bad things happen and it's just an anti it's an anti-analysis technique it makes it a little bit more difficult for someone to just straightforward reverse engineer the binary and figure out where that code is actually located but to set up a structured exception handler uh you know the hint there is structured

so there are two components of it you can see at the top there's seh save and then at the bottom there's sch init so any time you set up a structured exception handler you're going to find both a save and an init it might do something slightly different in between the two but it is going to it needs both of those to actually set up the structured exception handler sch save is always the same so that's that's a single single set of hex which you can see starting over there is the 64 ff 35 and a lot of this a lot of zeros so that's going to be the same across all structured exception handlers

but uh when i when i began looking at this i always go out and look for folks uh yara signatures there's a few large yara signature repositories on github and they include techniques and stuff like this and so i looked there to see what uh what signatures were already available for detecting structured exception handlers and i found huh uh this right here is uh at least new to me um and i'm not sure if that this you know this actually is is fairly common across some you know malware families that i've found i am not familiar with why this is different from the the structure exception handler initialization that was already known i think it might just be depending on

what compiler you use as to which register it uses whether it's eax or others i still need to research more on that to figure out why there's a why there's variation in this but i found new variation in sch in it so i took the the the signature from naxones which is the author of the original signature um and added another uh another variation on sch init and also up here at the top i've begun uh putting attack techniques tactics and sub techniques even though sub techniques don't exist yet um i've begun collecting the sub techniques that i'm contributing to the project so i'm going ahead and and tagging them in this way so you'll see sch init is the name of the

rule so if this matches on a file yara will will report sch init and then it will also give you what's on the right side of the colon at the top those are just yara tags so it's free text and so i've begun making yara tags that match up with the techniques and tactics and sub techniques in attack and i recommend that if you've got a yara signature set this actually makes it very easy to track to track the various attack techniques so also i want to make sure that you contribute so attack is a you know it's a crowd-sourced kind of thing where people the people who use it need to contribute more information data signatures signature

patterns and techniques and tactics to it so please do that and here's an example of an area where you know it needs it it needs a little bit more granularity so second factor interception which is t1111 is very very general so a second factor can be intercepted in many different ways and so i've listed here a few potential and these have been submitted to attack as potential sub techniques so sms interception on the wire so this is a basically a man in the middle attack that's catching the unencrypted sms from your bank or from whatever organization and using it before you're able to use it sms interception by number porting this is where the adversary will figure out the phone

number that you have your second factor uh token sent to and they will port your phone number to a handset that they've purchased somewhere and you know they'll be able to intercept you know your sms phone calls and everything so that's an interception method also code interception uh via phishing page so nile fish and charming kitten they uh if you look on um on uh uh i don't remember the name of the research uh site out of the university of toronto i'm drawing a blank citizen lab sorry drawing a blank on citizen lab but you can look at citizen labs research on nile kitten nile fish and charming kitten about this particular technique so this is essentially they'll have a

phishing page that looks like your you know login but it includes the second factor token field and so they are sitting on that phishing page and will use your second factor token immediately so you type in your username password second factor token that moment they are using it to log into your account so and then there's the the lowest hanging fruit of second factor interception key logger and there are probably many more than this and i you know i would encourage you if you can think of any more uh please contribute them to to attack the next one is malpedia is anyone familiar with malpedia awesome so malpedia is again a repository of yara signatures

and there are not only yara signatures but reference samples for various malware families various threat actor groups ttps etc and so there are the the samples that you can compare the file that you have to and then there's a set of yar rules as you can see over here the the status over there there's a little yellow star and then a green something or other and a red tag so not all of the entries in malpedia are fully fleshed out right now so again uh you know please join the effort if you can contribute yara signatures or if you find a sample that you know is a particular family or or actor group and there isn't a sample

available here contribute that sort of thing to malpedia and this is what you get for for using malpedia you can drop a file into mailpedia and then it bounces that file across all of the yara signatures in the encyclopedia and then returns the percent match and the number of strings matched from any of the any of the signatures and then it will return rule names from those particular signatures that matched and then there's always google so i think most most of us who became i.t experts became i.t experts through this methodology which is you know after all the other things fail just google it with a few words and then follow the directions of what

what comes back so you know any any researcher even if you have a file hash google the file hash you might have you'll you'll commonly find that file hash showing up in virus total you'll have some virus total pages you might have hybrid analysis any of the other sites on the internet that allow google to index their sandbox results and other sorts of data you'll be able to get that data you know as long as it's as long as it's not you know right now as long as google has crawled it so google's always a good way good good backup method to always hit so i wanted to cover a few other systems these are a little bit a little bit more

esoteric so there's the common malware enumeration this is in that sort of same family of miter systems as uh cve etc so common malware enumeration is an attempt to to enumerate and differentiate different uh malware uh files and then maec malware attribute enumeration and characterization this is a subset or component of stixx taxi and so this is a way to um to represent things like sandbox results or to represent dynamic analysis results to represent uh reverse engineering results and so it's a way to classify and characterize malware there's also icar in the eu the wild list i put this on here this is a very very old system for providing a currently a constantly updated list of examples of

malware that's found in the wild and then cairo so that's a that you know that's a pretty good overview of the various classification systems that are available today but i think there we could do with something new and i hate the idea of like maybe we should have a new standard because if you have a new standard then you're going to have 10 variations of that standard of course so i don't know that i have this the the the full solution here but i can tell you from my by my background in biology biology already has for organisms a a system of nomenclature the latin naming system which does actually satisfy both the marketing problem

and the nomenclature problem from av scanners so as long as you've got one single nomenclature and it's shared across different vendors then you can actually have something that solves the av problem and then if you do something similar to the way that that latin latin naming works the scientist that discovers that new type of shrew gets to put you know their grandmother's name on that shrew and so you get the same benefit that the marketing types are looking for which is you know the the the the cute name or the thing that you can uh use in your marketing material so there's there there is a concept that other disciplines have at least solved already

so uh for associations and one of the main things that you're going to use for association i know dynamic analysis is uh you know in the the level of difficulty it's a little bit higher up in the level of difficulty but static analysis you have all the tools available to you so you can gather a wealth of data from a file without it even running it um so some hashes that you can gather from a file which allow you to relate it related to other files in your repository ssdeep so this is context context triggered piecewise hashing also known in street slang as fuzzy hash and so fuzzy hash basically instead of taking a file

and running it through a hash algorithm and arriving at one unique number or hash which is different if you change one bit in the file you're going to come up with a different number this is slightly different it takes a algorithm and divides your file into predetermined components and then runs a hashing algorithm on each one and what this does is when you take two of these you would then see how different and where that difference is between these two files and if you think about it from a mathematics perspective you've got a you know you have a three-dimensional space and then in the center is the file that you're looking at so if you run that

same file through the hashing algorithm you're going to get the same hash unless you're unless your your computer is broken but you're going to get the same hash and then you have all the other files in your repository and they are all going to have a different hash and those hashes are going to be a certain uh distance away from the center and so all of them are going to be some distance away and ss deep has a 1 to 100 scale and so the stuff that's completely different out here is going to be at the the far end of it and so what you want to do is choose a threshold so of all of the files in your

particular repository you want to choose a threshold where all the files that are within that edit distance away from the file that you're looking at you would consider related so it's a little bit of an art to figure out what that edit distance should be depending on the type of file you're looking at if you're talking about pe as opposed to word documents and pdfs etc and import hash so this is a this is a different type of hash but very also very useful for finding related files you take the import table of a pe executable and you create a hash of it so you take that import table which has the different functions that are imported for the software to

use and you take that import table which is can have a unique order of the imports make make a hash and then as long as you've done that to all the pe files in your repository you'll be able to find other files that have that same import table and are therefore likely related or it would at least give you a pool a smaller pool of files to sort through and figure out which ones are actually related and which ones are not but both of these hashes do have some gotchas and i want to cover the one main gotcha for each one when you're using them so ss deep ssdeep just looks at the file itself

and many many malware families will use what's called a packer which takes the the payload that actually gets run in memory uh and run on the the target's machine and it will either compress it encrypt it or use like an installer of some kind it'll use a commercial installer reuse that and so when you're running ssd you want to run ssd against the unpacked file the payload because if you run ssd in your repository and nothing is unpacked then you're all you're doing is finding the similarity between the two packers not the payloads so that's a that's the thing that you want to to watch out for with ssd uh import hashes the one of the main problems that i've

found with import hash is that you'll find net binary so if you're if you're working on a net binary and you take the import hash and plug it into your uh repository all you're going to find are thousands of other net binaries that have the same import table so it's completely useless if you're if you're you're investigating a net so another another wealth of data can be gathered using exif tool so xf metadata depending on the file type will basically pull out lots and lots of different data points for static analysis so this is a word doc and you have the the modification dates i understand that dates can be what's called time stomped which means the adversary has adjusted

has tampered with the time stamps before they deploy the malware but you know it's good to gather that data anyway even if it's time stopped because if they time stop in the same way across many files then the fact that they're time stomping in a in a in a regular way you could actually use as a as a data point so it gives you the mime type and down here at the bottom in uh in office land this is called author in pdf land it's called the pdf producer this will actually be in a slide a little bit later on but this is an artifact that's left by the the editor which edited the file and when

you you know when you first configure or install office it asks you what your name is and whatever you type in there gets uh put in the metadata of all the files so you know commonly you can find at least a string here that could be unique and differentiate a particular word doc from other word docs in your in your repository and the same thing last modified by it leaves a little uh the same that same string from another user so if you pass the file to someone else it will leave the last modified by from their name that's configured in their their office instance created date and then code page this is not a very

interesting code page but if you look at the code page and it comes up with a very unique or specific language that's another good data point now i want to talk about code signing certificates um a few weeks ago i i gave this talk in b-sides ljubljana and i actually misspoke and called them malware signing certificates and so uh you'll see why in a moment so what can happen is the the file could be signed with a fake cert or a self-signed cert so this is a certificate that has not been signed by a certificate authority it could also be signed with a real or stolen cert so it could be a real cert that is

bought from the ca uh and you'll see in a moment just how bad this is right now or it could be a stolen cert so they have uh compromised a legitimate software developer stolen the keys to their cert and then begun using their cert to sign malware there's also another kind of uh broker or uh you know a cert service sort of thing in that area in uh in many many areas of the world you can find a company who will actually let you use their cert for whatever you want to sign and so those usually end up being used to sign to sign malware you could also have a signed ish or broken signature

so the signature lives in a directory called the the um security directory image data security directory and so that directory even if it's in my consideration even if it's filled with gobbledygook if it's not a real cert i would consider that an attempt to be signed and so the whatever that data is in there even if it's super malformed or just junk the fact that that junk exists can be used to find other certs that or other files that are junk signed in that same way so i want to talk about abuse certificates for a moment so this is data from a malware family called file tour this is one that i began reversing back

in january and did a deep dive into it it's used by eastern european russian ukrainian and and turkish adversary groups and file tour is more more of a ttp or a behavior or a tactic technique rather than a true malware family because there's so many different actors that use this what file tour is specifically is inno setup which is a commercial well it's actually open source i believe it's an open source uh packer for you know freeware people and commercial software people to distribute their software so they'll make a legitimate application and then they'll pack it with inno setup and then that's the little setup program that you run and then it you know it says where do

you want to install stuff etc etc etc so the combination of downloading malware or installing malware and using inno setup is uh basically fat file tour so that's the that's that malware that sort of pseudo malware family or at least ttp so from file tour this is i have a data set of approximately 25 000 files and across those 25 000 files i found 500 valid valid certificates signed by certificate authorities so they are obviously the very lowest level of validation that's possible with the certificate authorities but they are still signed by the certificate authority and have not been revoked as of when i looked at this and the you know the the big offender

would be komodo and then it's kind of a who's who uh on down so you know 96 percent was signed by komodo and then you have thought and digicert and various other ones down there and like the drill bit digits and single percentages so in uh the the next the next set of of metadata is pe metadata so you have sections imports exports resources these each come from different areas of the pe structure so this is uh this is this i know this is very hard to see but this you can find if you just google pe structure or pe specification and you'll find this in the google image search so this is basically where where in the order of a pe file

where where are the sections where is the header where are imports where's code and so as you can see what you can do is you can take that that section or you can take that resource that data can then be run through a hashing function like md5 or sha-1 sha-256 and then if you do that across all of the files in your repository you can then do that to the file that you're looking at and you can find other files in the repository that share the same resource share the same import share the same exports etc and then that's a way that you can find related files and then kind of dig through the results you may

have this is a fair for some depending on the resource and depending on the section you can get a whole bunch of false positives or you could get a small number of related files so looking at sections this is uh dot reloc and this is for this particular sample and so if you use this md5 you would be able to find other samples that are like this one that have this same the same section again with resources the same thing rtversion resource in this particular sample gives you a shot to v6 of that of that resource which lets lets you then find files that share the same resource so there are a couple of different

algorithms that various folks have out there for finding related files so reversing labs they have a large data set of binaries and so if you have a file you can use the the rha to find other files that are related to that and they nicely wrote a blog about what their methodology is behind how uh the reversing lab's hash algorithm works if you use virustotal they have a they have a similar sort of thing it's the similar to index and so if you take a particular file hash and you run it through the similar to index it will it will return a set of files that uh you know google virus total decides are related via

you know machine learning and some of their black magic algorithms and that sort of thing so document metadata as we saw earlier some of the document metadata is very important these are some of the most important so author and produce pdf producer those are the same thing just the one is in pdf lan and one is in office and then time stamps language all these can be used to to relate to relate files and find new ones that are that are related to ones that you already have now let's talk about dynamic analysis so as opposed to static analysis this is where you actually run the file and you observe its behavior so one of the things has anyone is

anyone familiar with domain generation algorithms so in the same sort of concept the domain and generation algorithm is going to to generate a string each time and that string is known and so in that same way the file names for file for some malware families will drop itself or drop payloads or drop different data files or various things and the file names will be dynamically generated and so if you can figure out what a regex for for that particular pattern is based on their file name generation algorithm um you could you you can potentially differentiate different malware families by the file name patterns that they're leaving on your on on the victim's machine i highlight here url structure for

download this is just to to to kind of point out that this is very interesting it's not actually related to the malware family uh the structure of the download so if a malware binary is reaching out to a a uh infected wordpress or compromised wordpress site or joomla or something like this and downloading a payload from it the structure of the url on that compromised website is not necessarily related to the malware family but it is related specifically to the vulnerability uh usually is related to the vulnerability in the cms and so you can develop a regex for for the various uh variations of that uh url structure so thinking about the url structure for download

the c2 structure for urls that malware reaches out to that is uh directly related to the malware family so many people maintain right uh regex repositories of the uh you know for a particular malware family what are all the different variations in that malware family for its c2 and then you can take a new url that you've got and bounce it against that uh that set of regexes that you've developed or have been shared with you and you can figure out potentially what the mauer family is this is an example of that so this is more of a this this is actually more of a phishing attack but i wanted to show you the idea

of taking a a particular url and developing a regex from it so this is blogspot this is a blogspot malicious url structure and this is from an attack early last year against a set of journalists and as you can see in the regex down at the bottom there's a uid and you don't see the uid in the url here i actually removed it because the the uid is the victim's email address in base64 so i don't want to put any uh victim email addresses in my slide deck so if you want to read more about this particular attack this is a blog post that we wrote about it and um you know the reason why they

chose blog blogspot is it makes it easier to circumvent google's uh protection against phishing and malicious attacks because blogspot is already a google property and so they're not going to the you know the checks are not going to be as strict or specific for things that are already hosted on google's infrastructure you can also use mutual exclusions so mutex as they're known so this prevents a race condition if there's two processes running two multiple process two two processes running on a multi-processor machine they use a mutual exclusion so they don't you know step on each other's toes as they're doing work and so malware will actually just it's a program so often malware will use

a mutual exclusion to do that and so the string that it uses for mutual exclusion is often unique to that particular malware family so whatever string the malware author has chosen could show up across various builds of their malware you know and if it's reused in that way you could relate those together same thing with the registry key so as as malware executes and is run it goes through and does uh you know deletes modifications additions uh to the registry and so that set of registry modifications and keys that the malware has touched can be used to uh look to see is there another is there another uh you know file malware file that looks with the same keys or perhaps

looks at the same keys in the same order and so there's many ways that you can use the registry key set that a particular behavioral analysis comes up with and compare those two and see patterns across different malware families so let's go a little bit more complex and a little bit more you know high level here clustering algorithms so there's many different clustering algorithms that you can use and so a clustering algorithm takes a set of files that you've got in your repository and tries to determine uh clusters that are related based on that algorithm so i highly recommend if you're interested in doing malware clusterization so this is a this is a workshop from subdraven

this is one that i attended last year before last at botcom and it's all up on github it's a really really excellent really excellent review of of you know doing malware clusterization and it's based on the zoo which i mentioned earlier so the data set that you need to do this stuff is all everything is out there in github and you can work through it on your own so the algorithms that are used in there k-means and db scan have you know various various pros and cons um but one of the things that's really interesting about that is once you get through working through those uh the the that workshop you realize you you don't actually need a gpu you don't

actually need a lot of horsepower to do uh clusterization if you do clusterization smartly so if you use um and you know the i'm i'm a biology major so i'm not not too heavily into the math end of this but there's a difference between using a full matrix which has you know empty any of the empty slots actually have a zero and so if you use a sparse matrix as opposed to a full matrix you can use a computer like this rather than a super computer to analyze the matrices and so you'll find out if you go through that whole process there that it's quite easy to to use those algorithms on even a machine that has

the horsepower of this guy right here i want to have a pause here and point out a friend of mine and colleague tony gudwani she has a infosec finer things club and so please please follow this twitter handle and uh she works on malware malware malware research and wine pairing so what what wine goes well with certain certain malware and malware research so one of these fixes everything and the other is a roll of tape and that's the that that's the infosec finder things club twitter handle so please please give it a follow next method that i'm going to talk about is the diamond model for intrusion analysis so i'm not going to go into too much

detail here in a couple slides you'll have a link to go read the paper itself and and you know kind of go into complete detail there but i want to show you a a graphic from my old team that we developed and so you'll notice that we use the diamond model to analyze star wars and so you you notice this is a little bit of an inside joke but the victim is actually the death star and so the adversary is luke skywalker um you know as because of the because it's all you know it's based on intrusion analysis we kind of had to make the death star the victim because that's the the appropriate thing the appropriate

configuration there for uh for star wars so this was actually almost a knock down drag out fight in our team to because we disagreed on whether proton torpedoes were capabilities or whether they were infrastructure um and various different pieces that was a fun discussion but this is the diamond model paper uh please go download it read it implement it in your programs it's a very good way of taking a pile of unrelated data or seemingly unrelated data and creating uh you know creating nodes basically creating nodes and groupings and then relationships or edges between those nodes [Music] so another association method is ice water so this is a website ice water io search you can take a

particular md5 sha-1 sha-256 and you can plug it in there and then you can find based on the clusterization algorithm in ice water you can find other files that are related to that within a particular cluster and then you can see the orange orange clusters are one bit difference in the cluster id and then the green up there is a two bit difference from the cluster id and so you could find clusters that are in some way near the cluster that you're looking at and if the file is within this cluster you could perhaps find other you know other builds of a malware family or files that are related to it you also get this

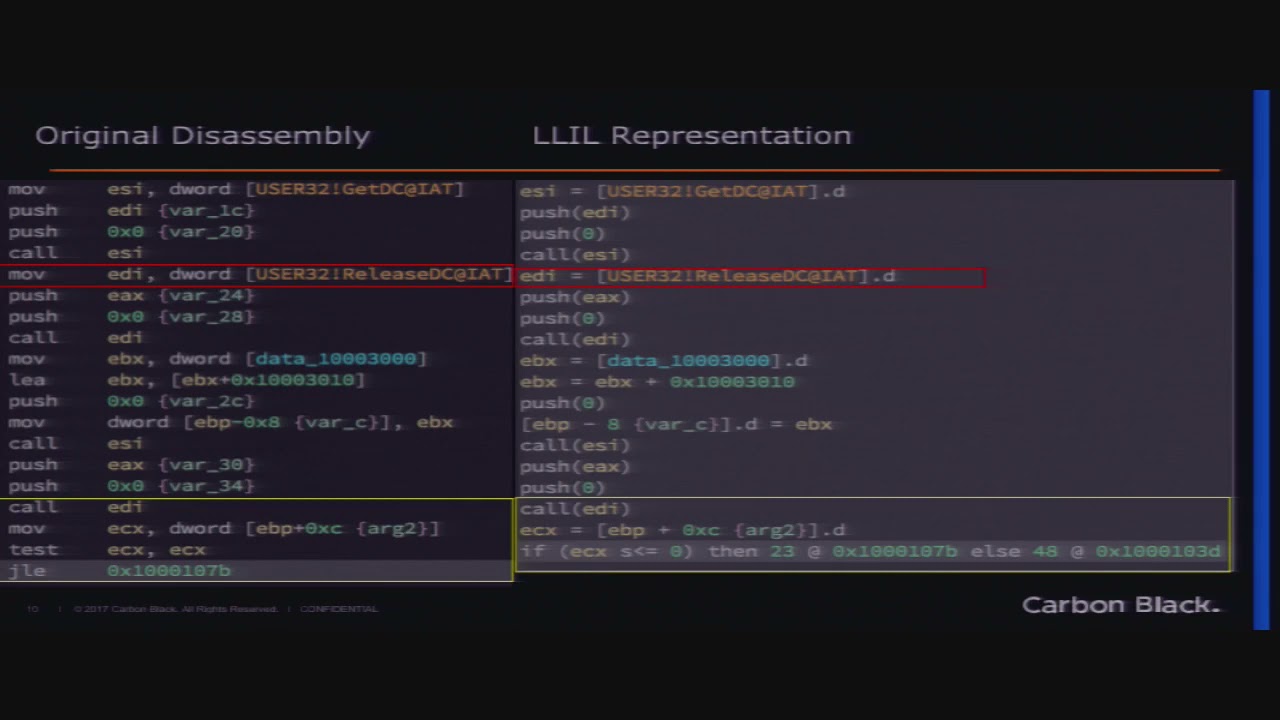

graphic of the file so it presents what the file looks like in a graphical sense and a few few data points that you get down at the bottom you know the the hashes etc so the next thing i want to talk about is control flow graph analysis so is anyone familiar with control flow graphs control flow graphs awesome so a control flow graph is essentially if you take a program you know pe binary or or any you know any program out there you're going to end up with blocks of code and then a block of code is linked together with all the other blocks of code in that particular file and they're linked together by

if then statements or they're linked together by loops and so if you take the whole set of blocks and all the ways that they're interconnected that is a control flow graph and so the control flow graph of the the code that's inside of a microsoft library is not important we don't care what the the control flow graph is in there but if you take the code that was written by the adversary the control flow graph of that adversary code could be the same or reused in other malware that that particular author has written because everyone reuses code you might cut and paste a particular you know a stretch of code paste it into something else and then

you're going to end up with a piece of control flow that matches that particular uh you know little section of code so this is a really good introduction to control flow graph analysis uh this is bur derby con from 2014 and this is the youtube video i highly recommend watching this this will give you a very very good understanding of control flow graph analysis if the if iron geek's camera was slightly to the left you'd see the top of my head in the front row and so this this is a control flow graph from redare and as you can see those are the different blocks of code and then you'll see green and red whether the if statement is

satisfied or not satisfied and then loops that go back to the top of a particular block of code also of course there's go to so you know that is another control flow method so there could just be a something like that that just goes off to to wherever you want it to go but for the most part it's if then and loops uh the last analysis technique i'm going to talk about is graphing your threat data so this is something that i have been doing deep dives into recently and this is something that is very very very powerful for looking at threat data so everyone's familiar with the stixx schema and if you think about it look at the

the ones on the on the left those are sticks domain objects and then the ones on the right are sticks relation relationship objects and so in graph theory you have nodes and edges and so this lines up perfectly with graph theory the nodes are your stick's domain objects and the edges are your sticks relationship objects and you can even see that you know many of the relationships or edges between the nodes even have you know as graph theory uh refers to it directionality so you can have bi-directional relationships edges or you have unidirectional edges and when you're working with when you're working with graphing data you need to have it in a format that is

conducive to doing graph work and so these are two i i recommend rdf because it's the it's the native format that my favorite graph database which you'll see in a moment uses you could also use json for linking data json for linking data if you're not familiar with it it is json that has a little bit of syntactic sugar that's been added to it to understand relationships between the key values in json rdf n quad is not quite as pretty and human readable but is as powerful you can see these so i've actually put spaces in there you don't have to have spaces but it should uh the space the spaces in between those blocks of text

each one each one of those blocks of text is a node and then you'll see the uh the the edges are the ones so in the top there's actually three edges one that one edge that leads to setup one led one edge that leads to m fam and one let one edge that leads to impash so uh and i'll give you an idea of how this actually looks but one of the two there's two main graph databases everyone we can have a discussion on which one is best after the talk but my favorite is d graph neo4j is uh you know an older uh database but d graph basically starts from scratch they're based on

some of the same research and people that built the graph database inside of google and this is their own uh separate company now it's basically a key value store called badger and then a relational uh you know layer on top of that of ways to to relate the different key values and if you want to get into graph theory i recommend introduction to graph theory by richard trudeau and then this is what you end up with you get a network graph so this particular network graph this is very simple the yellow you can see there those are two files and then the blue is they're related to each other via import hash so the import hash becomes a

node and then the files are two nodes and then the edges are the relationship that those two share that import hash you can get even more complex and even more complex and if you graph all of your your entire data set you can then uh you know kind of play around with the with the graph itself and you can get some kind of emergent properties that kind of a person would be able to see but maybe a machine might not be able to see certain relationships and then you can also use mathematics to to find relationships between the files without actually having a person playing with the graph but as you can see here the blue again

is an import hash the green the green there is actually the malware family name and then the the red are the file names any questions no time for questions come outside come outside and ask me questions