January Presentation Security Data Analysis for the masses

Show transcript [en]

All right everybody, we're gonna get started. Thanks for coming out. I see a lot of new faces. So my name is John Ziola. This is Steel City Information Security. Welcome. This is a group that kind of focuses on the application of information security as opposed to theory or specific niches within information security which are other groups that we have in the area. If you're interested in another type of group, let me know and I can help you find the right one if this one doesn't fit you. We've been around since late 2012 doing presentations and things like this. We also do hands-on labs and we do informal get-togethers where we'll just hang out at a bar and talk about info and stuff. So I want to say a

big thank you tonight to Anomaly for being our sponsor for this quarter.

So they sent me this long thing, I'm not going to read the whole thing. But they are essentially an aggregator and a manager of Threat Intel Information. Why are you taking pictures with me? They focus on open source, third party partnerships, threat sharing communities and trusted circles within their platform. One thing that I found really interesting about them when I was going on their website is, I don't know how many people have heard of Soldier Edge in the room, anyone raise a hand? Yeah, yeah, cool. So as you guys know, it's dead, right? Like it pretty much went away, it was a, it came out of FSISAC and then, you know, a bunch of weird things happened and then all

of a sudden one day there's a blog post and it's toast. and someone else is going to be buying the assets and whatever. But who knows what the future's gonna be, right? It's kind of unknown. And what Anomaly did in response to that, as soon as they found out, is they kind of flew, literally flew a lot of their team together and had a meeting and they said, we're gonna fix this problem for the infosight community and we're gonna get it completely out for free. I don't know if it's open source, but it's definitely for free. It's called Anomaly Stacks with two Xs. So if you were thinking about going down the Soldier Edge route,

that might be worth considering. Yeah. A couple more quick things about the group. We have a jobs forum. So if you're looking for a job or if you have a job, that would probably be a good place to go if you're looking for a job to find it. And if you have a job opening, please post it on there. Also, if you have internship openings, I may know a guy over there, Sam, raise your hand. Sam would like to get an internship position in Infosec. So if you have one, reach out to him. You can find that form very easily if you go to jobs.stillcityamlosec.com and that should redirect you to the Meetup webpage and all that fun stuff.

So we also have an event archive. So as you can see, there's a little camera here that Brian is helping out to record these and we've been recording them for a good bit, maybe a year or some, somewhere around there. And I've been posting them online. I've been posting my slides, the slides of other presenters online. If there's GitHub repos, there's links to that, details about where we were, when we were, what we were talking about. Just in talking to people, a lot of people seem to not know that that exists. So I wanted to point it out, and I also made another handy redirection. So if you go to eventarchivesingular.stillcitymsac.com, it'll redirect you appropriately to that. Also, kind of speaking about events, We have a member, Brian, who

is trying to start up a separate group that's vendor specific to Rapid7 for vulnerability scanning. If you are interested in that, talk to that guy. Please talk to that guy. That guy. This guy. Raise your hand again, Brian. That's the guy. He's the guy.

Normally, at this point, is when I would hand the mic to somebody else and say they're really smart and they know what they're talking about, they're going to give you a really interesting presentation. Unfortunately, I tried really, really hard to find somebody else to give this or a similar talk and you're stuck with me. I apologize for that in advance, but this is going to be a talk about security data analytics for the masses. And it focuses on applied infosec data analysis. So actually, how do you do it? A little bit about techniques, more about some tools. Some newer platforms and things that are kind of up and coming. Interesting stuff. So I wanna be very upfront. So I definitely am biased a little bit, right? So

I'm gonna talk about things. I might mention R, I might mention Python. I'm definitely more of a Pythonist, Pythonista maybe. I'm going to talk a little bit about two projects, both Apache incubating projects, one called Spot, one called Metron. I have a very big vested interest in Metron, so I wanna make sure I put that out there. And I'm also biased toward BroData, so I don't know if you guys know what Bro is, but it's a open source tool that can parse network logs. He'll call it an IDS. They don't like being called an IDS, but they're kind of like an IDS and a network forensics tool put together. Anyways, I'm very biased towards that, because that's the information I have at my disposal, so a lot

of the research that I do I focus on being able to use that data as opposed to something like an IP fix or NetFlow, which would be semi-similar. So I just want to make sure that those biases are obvious early on because all of those tools are good tools. I don't want to say anything bad about them, but I may shed them in a different light just because I have more experience with one or the other. I type a lot. I wrote a lot of notes. I'm not going to say any of that. So real quick, I want to tell a brief story. I want to discuss the path that my employer went down and

why I'm even giving this talk in the first place. Not only because it's been an interest of mine for many years, but... So my position is generally to develop blue team tools for my operational team to use in day-to-day ops. So protecting the university that I work for and all the subsidiaries that we own. We decided that we had a lot of data and that we should do something with it. And we didn't know what. We knew that we had a grep through logs sometimes, right? So we were like, all right, well, what's a faster version of grep? And we kind of looked and we said, okay, well, Elasticsearch is faster. I don't know if

you guys know what Elasticsearch is. It's essentially a database that's optimized for search queries. So we went down that route and we gave it a good effort. We invested a significant amount of money, at least for us, into it and a lot of time. And it didn't go so well, specific to I think our implementation mistakes that we made. And so we said, okay, well, Elasticsearch is kind of hard, let's find something else. What else can we do? So we said, well, what's better than just searching? Well, let's just get a SEM, right? Or a SEM or whatever you want to call it. Let's just get the whole kit kaboodle, right? We'll just spend all the money, it'll be a big deal, it'll be great, and it'll

magically fix all of our problems. And then we, so I did this big research project. I tested out all the different SIEM vendors, at least 100 hours, if not way more than that. And then we found out that it's really expensive to buy a SIEM. So we said, no, no, no, we're not going to do that either. So what's an alternative? And I had kind of heard about this thing called an SDAP. It's not very popular, that specific term.

How's it going, man? Secure Data Analytics Platform or Data Analysis Platform. It's a data analytics platform that's focused on InfoSec data. And I said, okay, well, maybe we'll look at that. And there was this project at the time called OpenSock. We'll talk more about that later. But it turned into Apache Metron, and that's kind of the path that we ended up going down. And to talk a little bit really briefly, so to give some context about the research that I'm doing, what my environment looks like, we're at about 30,000 events per second. in our environment, we get about 600 gigabytes per day of structured data, and we get about 84 terabytes a day of unstructured data that we're trying to store, retrieve, parse, things like that. Luckily, we

are not healthcare, which sometimes has to have seven-year retention. We only try to do three as kind of the upper end, really more like one to two years retention of our data. So when we were thinking really hard about how to use the data that we had and we were talking about going down the Medtron route, we thought, okay, well, what's the first step? And so, okay, well, how do you look at data? You try to visualize it, right? So we bought really big screens. We bought these huge monitors and we mounted them on our walls and they're great. So I put this awesome background wallpaper on it and it looks great, but it actually doesn't. Nothing at all. I think we watched Hackers on it the other day.

It really is not what we thought it would be. It was more of like, hey, having some sort of visualization would be a good idea, and then the day after Black Friday, my boss shows up with a couple big partners. It was like, hey, we're gonna do this. Okay. So big data, it's a big buzzword, right? It's got, you see all these different terms being associated with it. It's kind of hard to wrap your arms around. I didn't really fully understand what it was either, so I started searching on the internet. I binged it or Googled it or whatever, and I found this. Hold on a second. Hold on a second. I've got to fix my audio. What's

that? Yeah, good.

I completely forgot to test this. But it should work in theory.

They got big factories and clusters of data. It's like totally just floating around everywhere. I used to do business. If you had like 200 phone numbers, you put them in a Rolodex. But nowadays, I have 500 phone numbers, and I'm not going to get two Rolodexes. I need computers. I hear it all the time. I really, well, I don't really know what it means. Yeah. So obviously, I know a lot of other people will know what it is. I thought that was kind of funny, so I wanted to share that. So before I talk about specifics with big data and data technologies, I want to give you a little bit of background, a little bit about what's been happening lately. This literally came

out today, I just clipped it off the internet. It seems like kind of a big deal, a $27 million investment in research to fund AI, which is a method of using computers to essentially teach themselves artificial intelligence. I'm sure you've all heard of it. It was announced today, literally today. Or no, I'm sorry, the 10th. So, two days ago. And it's aiming to promote research and AI that's going to benefit humanity. There's also, really interestingly, in Pittsburgh right now, a competition that's going on with people in the Computer Science School at Carnegie Mellon happening at Rivers Casino. I think probably literally right now, it's going for 20 days long. They're playing against four of the best poker players in the world. They flew

in professionals and they're playing against a computer and they actually have something to win if the poker players win. Statistically relevant, like not by like 1% essentially, they will win a $200,000 purse. So they're using AI to kind of learn using that 120,000 hands of poker and trying to play against humans. Also, this past weekend, There was an AI hackathon over in Bakery Square that I went to. It was kind of interesting. And we got to hear from a lot of different people talking about how AI is going to impact the future and how it's currently impacting people. And essentially, they were pitching new ideas. So they were attempting to get a purse that IBM is going to be awarding

to a couple teams that can use AI in a way that will benefit humanity.

four-year long contest. So that's pretty cool. It's really interesting, especially these two things, how timely they are literally happening this past weekend and right now in AI in Pittsburgh. So I wanted to make sure that I mentioned those. But generally, I wanted to talk really, really quickly about why now. And so the term AI was coined back in 1956. It's been around for a really long time. And there have been some bumps in the road. What they call AI winters. There's actually been two of them. And in the middle of them, there's been some really important research that's came out of them, back propagation, right afterwards, reinforcement learning, which is really, really important for machine learning and data analysis. But

another thing that's really been pushing right now, within the last few years, and why this is happening right now, are these last two items. The data density that we have, not just the fact that it's easy that more data is becoming available to people, but also it's cheaper to store that data and retain it for a long period of time, and the processing cycles, the cost of processing that data is also coming down in more way than one, right? Not just Moore's Law, although Moore's Law is a big part of it, but also video games, right? So there have been some really interesting revelations over the last couple of decades, like NVIDIA's CUDA, which is essentially a more standard way to interface with GPUs, kind of allowing

them to turn into general processing GPUs, which can do what they call SIMD or SIMD, single instruction, multiple data. It's a long-winded way of saying the fact that people play video games is helping machine learning and AI kind of progress. And that's why we're kind of hitting the sweet spot. So there's also a really popular Venn diagram that talks about data science. And data science is essentially working with big data as a position. You have hacking skills on one side of it, math and stats knowledge on another, and then experience at the bottom. As they overlap, you've got these different areas. Machine learning is hacking skills and math. In the middle is data science. And then this little area over here, hacking skills

and experience, you've got the danger zone. So tonight, I'm not going to be talking about math or stats at all, so I guess welcome to the danger zone.

When you start working with these sort of big data environments, things can get a little bit frustrating. Like I said, I spent a lot of time upfront working with Elasticsearch and then that failing and then looking at scenes and then that kind of falling over and it can be a little bit frustrating. And to kind of illustrate that a little bit, I have another video for you. I'll try to make this one louder.

return zero results. Your search for milk return to 52,256 volts. Your top hip, milk of magnesium. No. Milk floats of yesteryear. No. This milk-teamed family will plan. No, sorry, I'm just looking for, you know, normal milk. Confine what you're looking for. That's what I'm saying. I confined it, so I'm asking

Milk, skimmed semi. Semi skimmed milk? Is this what I first asked you?

So yeah, that can definitely be what your experience is like when you're working with large sets of data. You think you're asking one question and you find out that it's returning a result that you didn't expect it to. But search is only one part of this, right? So the architecture for how this stuff works, especially with regard to InfoSec, has changed over the years. In the past, I'm sure, how many of you have heard of Hadoop before? Yeah, and then you have MapReduce and kind of batch processing and things like that. That has turned more from like an individual system or a set of systems, you know, HDFS and MapReduce into a bit of an ecosystem, right? And within that ecosystem, there are

different architectures, ways to use those tools. The one that's been very predominant recently is called the Lambda architecture. And I see a couple of nods. People are familiar with this. It's a little bit more of a popular term when talking about Hadoop. And you have, if you look at it from left to right, you kind of have information coming in, right? Like you get logs or you get whatever information. And it gets split into two different ways of processing. One is more gonna be bulk loading. So we're gonna store it and we're gonna map reduce jobs against it. And then you're gonna have streaming, right? So like the more memory heavy, like a storm or

spark streaming, something like that. And then you have kind of a fast table, which that could also be something like a Lucene, Fronted, Solar, or Elasticsearch. And then whenever you have a user, you have an app or something like that, it has to query across both. That's great because now we're getting one, for each one data, I'm getting both streaming and batch. We thought that was great whenever it first came out. But then as people used it a lot, they saw that there are some downsides to this. And then they came up with what they call the Kappa architecture. And it's extremely similar. It looks almost exactly the same, but there's a couple key points that make it different. You have your data, it comes in, and then

it goes to the stream processing system and it gets stream processed. And then you store it in your database and then the app receives access to the stored data. It seems a lot simpler, but it also seems like it's missing something. It's missing the batch processing. But what's interesting about this is that it allows the technology tools, like I was talking about before, innovation in the hardware space, has allowed us to process everything as if it was streaming. So instead of having to do batch differently than streaming, you can just take a large set of data that's sitting on disk maybe for the last year and just essentially try to rewind time and reprocess it as if it's streaming. And

so now the benefit that you get from that is that your app interface is less complicated which is kind of nice, but really the big benefit is here. So if I wanted to do something, whatever it is, with Lambda, I have to write it for two different architectures. I have to write it for streaming, and I have to write it for batch. You don't have to do that anymore with Kappa. You just write it once. So whenever you get into the details of things like Storm and running MapReduce jobs or Yarn, whatever, it can get extremely complicated, and you have to implement it in a way that's specific to the platform, so you can't just...

write it in one language and then write it in a different language and you can't just try to abstract that very simply. People have tried to do that, it kind of falls over. And so being able to move to the CAP architecture is a huge win. And some of the tools I'll be talking about later are actually using this CAP architecture, which is great, great stuff. So I promise I'm almost done with the intro stuff. And so there's two basic sides of machine learning too. You can even call it AI, that's a whole battle on its own if AI is machine learning or if machine learning is a subset of AI. I'm not going to talk about that tonight because that's stupid. It's like a

political fight, who cares? So you've got supervised and unsupervised data. And the main things that I want to emphasize about the differences between these two methods, these approaches, is that one requires labeled data and interaction, and the other one is unlabeled data and no interaction. So it's basically, there's some different examples I have down here about an algorithm that would be considered supervised versus considered unsupervised. Essentially, if you want to do some sort of supervised machine learning, you take a set of data that you're going to train that you know a little bit of something about, and you train with it. But you take a subset of that out, And you use that as your validation set. You say, I

already know the answer for this validation set. I'm going to train it with this training data. Then I'm going to give it my validation set and check the output and was it what I expected? If my algorithm is working, it should be what you expect, right? Or you might have to go back and tweak and that's how you kind of do your training. And then there's this, if you're actually going to be doing this stuff, you're probably looking at a stratified capable cross-validation, which is just a method of deriving your training and validation set from one big set of data, because there are ways to do that that could really bite you in the long

term. And so a lot of the information here is very high level, but I also wanted to point you at good resources. So click this link and look at this talk. I think it's called the five something of machine learning, I don't remember, the five tribes of machine learning, and it's a really good talk. And then at the bottom, a quick note to pull this back to the InfoSec, is that there's actually been some attempts to unify both supervised and unsupervised machine learning algorithms that are specific to information security. There was a team at MIT that was doing this. It was maybe about a year ago where it kind of became public. It's called, I don't know if it's pronounced AI2 or AI squared, but there's a

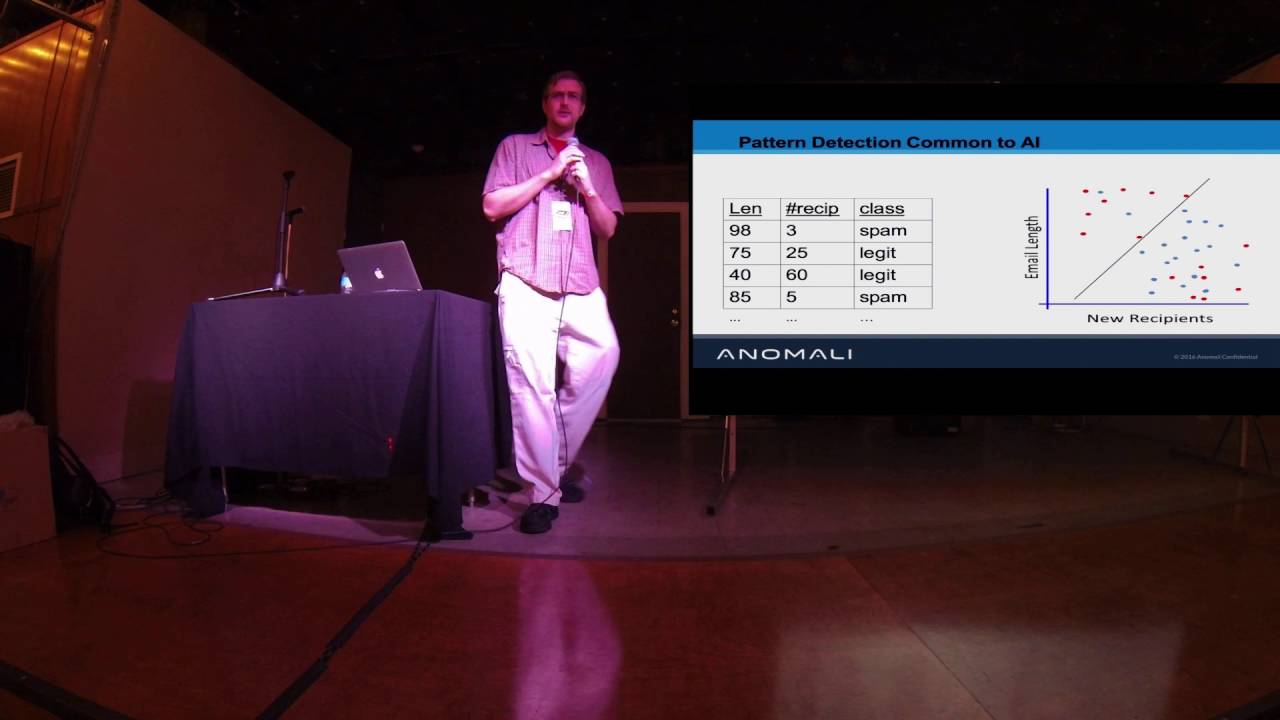

link. And they have a video and pretty things where they talk about how they kind of unify the two. There's also semi-supervised machine learning. I didn't. intend to talk about that tonight, but almost along the lines of what they're working on. So time to get the good stuff out, right? So now I'm going to talk about specific techniques really briefly. We'll talk about tools and then we can do some Q&A. So techniques. So here's a two-slide brief introduction to techniques in machine learning. Essentially, these techniques are meant to address six different types of questions. You have descriptive, exploratory, inferential, predictive, and then two that you'll probably never, never deal with, ever, because these are so insanely complicated

to do that it's really not reasonable to expect unless you have a PhD. So generally, descriptive, you're just looking to describe the data that you have. I see a couple heads nodding, people that deal with that every day. Exploratory, you're trying to find relationships between data that you have inferential, you want to use a set of data, maybe a subset of data, or a sample to drive maybe what the population, or a larger set of data, or future data, or I'm sorry, not future data, a larger set of data is about, and then predictive, which is exactly what I just said, you're trying to figure out what the future is, right? So, there's a lot of algorithms and things like

that. I'm not going to talk about them unless you want to ask questions about them. A couple quick mentions. So there are some algorithms like n-gram analysis and I don't know how to say his name, Levenstein distance, that tells you how different text is from other text. So you can use this to find domain generation algorithms for malware, C2, things like that. And n-gram analysis is really great for that. And Levenstein distance, so this, I think it's Damaral, Levenstein distance is built off of Levenstein distance. It just adds additional functions. But what it does is it tells you how different two words are. So typosquatting. You can see if you run that on, say, information that you have about web traffic for your

user population, and you find that they're going to very similar websites to mybigcompany.com, but it's not actually the same one, you may want to look into that because it's potentially efficient. And then there's a lot of other cool techniques here, like Challenger Champion, which is a method for testing out new algorithms without putting all of your eggs in that basket, right? So using different weights and percentages, allocating them. And you can actually, with that, it's really cool, is you can implement a new machine learning algorithm to your stack, but give it a weight of zero so that your SOC isn't seeing what could be potentially really bad information. but it allows you a way to slowly elevate it to where they are seeing

it and maybe it's the best algorithm that you have for whatever you're trying to do and then it'll become the champion, right? And then you have a bunch, you continue to have more challengers. And if you want to see more about this, most of this content is actually covered in this really, really good talk. If you guys are actually interested in this, I would highly recommend watching this Besides Vancouver 2015 talk. It's an hour on this slide and some other information. but he works at a really cool company and has been doing this stuff for years and gets a lot more detail with it, so he can break down a ton. I don't want to

watch that time. So why are techniques important? So I added this slide in here kind of at the last minute to show that techniques can be similar but not the same and that is a big, that's very different. So what you have here is some data that was generated and the ground truth, the The line that this data was actually generated from is this dotted dash green line right here. And you can see that that's kind of obvious, but you have a lot of outliers down here. And so this black line and this blue line are two different methods for doing linear regression against the data that you're seeing. And you can kind of see that using this more standard, or I'm sorry, using this

kind of simpler and more standard method could work. It will also give you a grossly wrong answer. So if you try to do predictive analysis using this linear regression, you're going to end up over here when you should be over here. It's just not going to work. And these algorithms are trying to do very similar things. But you can see this black line here is just a slightly different way of doing the same thing. It's specifically called the Theosen estimator.

So anyways, I just wanted to kind of show that the techniques actually do matter because if you end up with this blue line and you're trying to do predictive work off of it, you're just going to fail. And so the details do matter, but you also can abstract some of this. You don't need to have a PhD. You kind of need to understand that black line good, blue line bad. This stuff can actually be boiled down pretty simply, although a lot of academics make it seem like that is not possible. And now let's talk about some tools. So I have four tools to talk about really briefly. So first, we talked about Hadoop. This is what Hadoop looks like. Actually, this is dated a little bit. This is

June, February, March 2016. So it's grown. Actually, I was digging through it in a little bit more detail, and I found some newer projects that aren't on here yet. So this is actually a subset of what currently exists, although maybe some of them expired, so maybe it's not a subset. But so the things that we're going to talk about today leverage portions of this, such as this green section down here, like open source, Hadoop world. And then I'll also be talking a little bit about the infrastructure, kind of these NoSQL databases, graph databases, and some analytics, data science platforms and log analytics, see like your basic Splunk and Kibana, things like that. So it's extremely complicated. And I would definitely warn you that if you plan

on getting into the space and you aren't super familiar with it, there's a lot to learn. It has taken me years to get to the point where I'm comfortable just spouting off a bunch of silly sounding names like Scoop, Pig, Hive, you know, Flink, WetSpark, Storm, things like that. So, and I think this Twitter, this post really sums up what Hadoop is currently like. So, the best software is just buggy enough to generate just enough support revenue to fund long-term development. That's kind of the model for Hadoop, right? So a lot of the companies that do Hadoop, that they give these Hadoop distributions like MapR and Cloudera and Hortonworks, especially Hortonworks, They are completely funded from supporting the software and being able

to say you can put a ticket in and I'm willing to help you fix your environment or I'm going to help you upgrade your environment, professional services but also bug fixes and identifying bugs. So this is actually a pretty good representation of what the environment is currently like. And I would recommend that if you're looking to pick up a specific project within Hadoop to take a look at

Usually, if it's a Apache project, which it probably is, to look at their JIRA instance and look at what tickets they have open and kind of categorize and understand what the tickets look like. If you're seeing tickets where some people are having concerns and they're being dismissed and it looks like it's probably a valid concern, maybe be wary of that. And definitely, but don't judge a book by its cover either. Don't say this has 60,000 open JIRAs because they could all be enhancement JIRAs. Take a good look at them. and understand. Because in a lot of cases, when they have a bunch of tickets open, it's just an ambitious project, not necessarily a project that's falling over. Another thing that you can do, I do a lot, is

I look at the GitHub and I see how many commits they've had and how recently they've had them. There are definitely some projects that are out there that used to be extremely popular. So when you read a book, it says, oh, definitely use this one. It's the best one out there. And it has got like three commits in the last two months. That's the sign of a dying project. So the first tool that I'm going to talk about is the one that I said earlier, I have a lot of my eggs in this basket. It's Apache Metron. It's an incubating project. They make very clear that you should always say incubating when it's an incubating

Apache project, not Apache Metron. It's Apache Metron incubating. So just to be clear there. And their history, like I said earlier, I think, it started out in Cisco as OpenSoC, which is an open platform. I heard about it like two and a half years ago at BroCon, if you guys are familiar with that conference. And it eventually turned into Apache Metron. It migrated from out of Cisco into kind of semi Hortonworks' hands, but also Apache. And it's been around since September of 2013, which is relevant because they have a very close competitor that's been around for about a year less. It shows a little bit. They have slightly different architectures and roadmaps. but they're not new. Even though they're incubating, even though they don't

have a 1.0 release officially under Apache, that doesn't mean they haven't been around for many years. And they have a Docker instance, which I haven't played with at all, because it got merged into master like three days ago, I think. But there's a link to it, and you could totally probably get it to work. It probably works because they merged into master, and I know three or four people tested it. And there's also, if you guys are familiar with Bangor and Prana, Small virtual machines, they have big RAM installs too. They've had that forever, but that's pretty solid. And it leverages work works, but the Duke data stack, which if you don't know how that

works, this is really complicated. So they give you a little bundle and they say this will work. So you pay the money for that. Actually, in a lot of cases, it's free, but I would pay money for it because this is insane. So...

That's your spot. We'll talk about that in a minute. So, two quick slides about what their architecture looks like. I'm trying not to be overbearing with any of the tools, but I'm definitely open to questions. In fact, if any of you guys want to interrupt me and ask questions right now for free, for something happening, raise your hand, whatever you want to do. Yeah. So you can think of it very similarly to the same product, right? You take logs, they come in, Thread Intel comes in, you get enrichment sources. So it's a little bit different than some architectures, like maybe a Splunk, where they try not to do correlation, they try to do enrichment instead, and that's an important distinction. Instead of linking together two

different logs, they're taking one log and adding everything, all the context you have about it at the time, to the log itself. So they take in, say syslog, and it's this one big long string, they chop it up, they know what the fields are for syslog, okay, now we've got a message field. They might chop it up a second time, like, okay, we know this is Active Directory logs being sent over syslog. We'll chop it up and you make it and then you turn it into key value pairs like with JSON and you store it like that. But before you store it, you do all this enrichment with customizable enrichment sources, but you can also

do Threat Intel. Again, going back to the Anomaly Stacks or Soldier Edge, they used to work more closely with Soldier Edge and now it's like, well, what are you going to do? Because that's gone. And a little bit of the problem here is that they're trying to solve everything. They're trying to solve a lot of problems. There's definitely been an intentional focus to not boil the ocean, but there's just a lot in scope whenever you're talking about a project of this magnitude. So it's been interesting, it's been a rad. There has just been a UI put out as a pull request. It's not even a master yet, but it's definitely not available in any release. manage the cluster.

They use Kibana to view the data in it, but that's a very poor way of doing it because of the way the data is structured and there will probably be a replacement for that at some point in the future. So if UIs are your thing, this isn't yet. Although they're putting a lot of effort, I believe, into fixing that issue. It's a very UI driven thing. They're working from kind of the bottom up and this will be in contrast to the next project. So they're working on an extremely solid platform where they want things to be rock solid and then they're gonna layer things on top of it and like maybe actually doing work on

the data, get it ingested, do work on the data, then maybe view the data and then maybe configure the cluster through a UI and things like that. So they're kind of going from the bottom up and Patrick Spock from what I know and that is somewhat limited, although I have played with it and I can give you a quick demo. They're taking a slightly different tact where they're focusing on the machine learning algorithms and the actual tools and less on getting it up and running. So with Metron, you can say, like, install a couple dependencies and then type Vagrant up and it'll run for like, no joke, like an hour and a half and it'll

install everything you need and it'll work. And then you can play with it and it's got a full stack. And with Spot, it's like, go to their Wiki and just follow the pages and just manually do things. It hasn't been automated as consistent. But they have a little bit more focus on the machine learning side. And so this is another picture that shows their architecture in a slightly different way. And I wanted to talk really briefly about what you can send into this cluster. What sort of logs does it accept? And this is not comprehensive, but close to it is maybe 50%, I don't know exactly, of the amount of logs that they can natively ingest. And so the way that it works is they have to

write a parser for every type of log that gets sent into it, right? Which could be tough in certain implementation scenarios where you just wanna have an open listener and you can send anything to it and it'll just figure it out. This will not do that, right? This is a little bit different than that. But if you know what you're gonna be sending to it, you do have methods to customize the ingest. So you can write your own parser, right? If you know how to write Java, you could write your own parser in an efficient way, but you can also write your own parser inefficient way, they have CSV parsers and grot patterns. So you

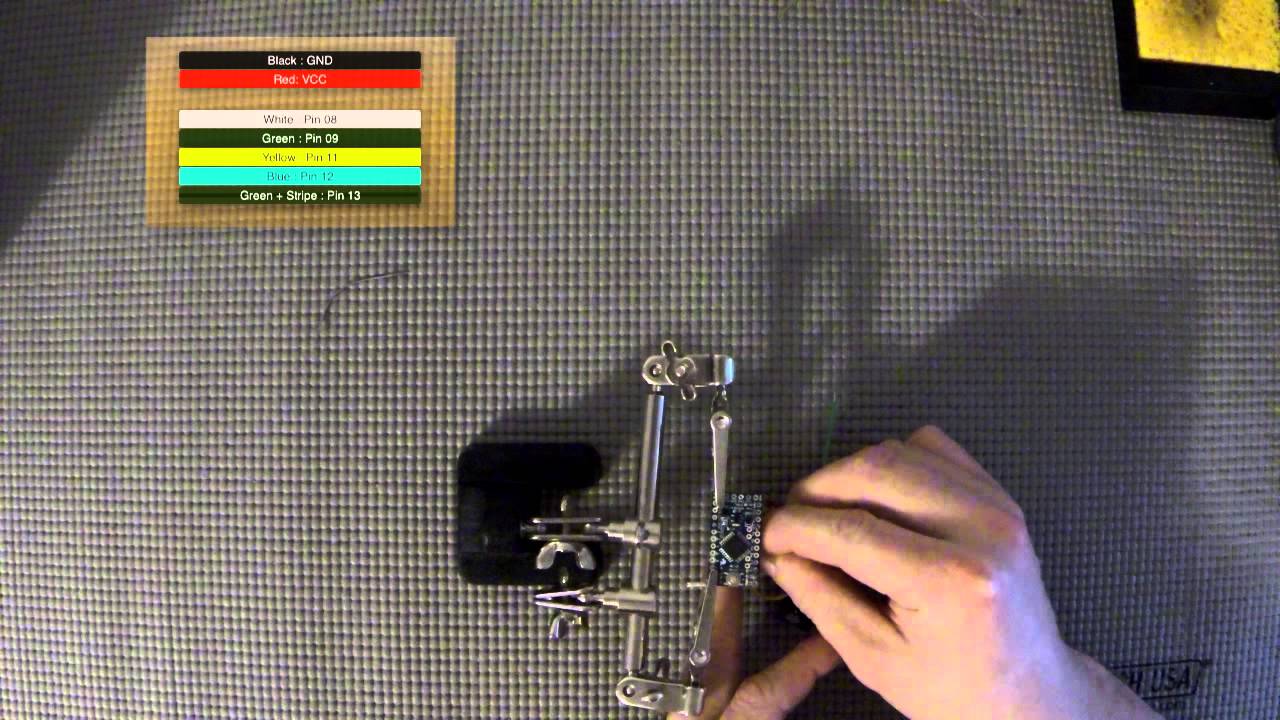

could essentially write what's a regex-e thing and parse, tokenize the data that comes in and then make use of it later on. And there's a whole bunch of steps here. I'm not gonna talk about all this stuff, but it comes in, it gets processed, which is like cleaning the data, which is a big deal and we'll talk about later. I'm still cleaning some data that I wanted to have for a demo tonight. I've been cleaning it for days, it just takes a while. So it cleans the data, which is huge. And then it does enrichments, it does some labeling, it may add like threat intel information for an IP that's in the log. And then it'll persist it. So it'll save it off

in different ways. And you can do analysis on the saved data. So some of it, maybe it'll just go to Elasticsearch or Solr and it'll just search and retrieve. And some of it will go to HDFS, which you can still retrieve with something like Hive. I know this is a lot of weird. I'm throwing out, but you can retrieve it with Hive. They use HQL, which is very similar to SQL, to retrieve the data. But also putting it in that environment, you can do your analysis. And it makes it much simpler. And they also have this tool called Stellar, S-T-E-L-L-A-R. And it allows you to do

semi-machine learning data analysis for sure in streaming in a very configurable way, where you don't have to reboot anything. Say, now do this, and it now does that for every log that comes through the system. You can use the profiler that it has and set certain thresholds. If you want to say, you know, this is an important number. It's the number of bytes being transferred per connection, and I'm getting that information in. And I want to know what the median, you know, I want to run a MAD analysis on a median absolute deviation, and if it's, you know, three standard deviations away, that's a problem. Very simple things like that you can do with not even a line, just a very small amount of information. And then you can

build on that and you can get more and more detailed analysis of the data. And then you can do more hardcore machine learning and analysis when it's on disk as well. But that, what I was just talking about, is mostly for streaming. So we have Apache Spot, which is a project that's being pushed a lot by Intel, was actually initially started by Intel, and then Cloudera is a strong partner of Intel, so they've been working together on it. Started in November 2014, September 2013, November 2014, so they're about a year off. And it used to be called Open Network Insight, because everything gets renamed now. It used to be called Open Network Insight, now it's called Apache Spot, incubating, I should say incubating next to it, sorry. And

they also have a Docker instance, but their Docker instance has been around for a lot longer, and I've got an actually working demo of that if you are interested. It leverages Cloudera's Hadoop stack, which is either CDH or EH, depending on if it's free or paid, essentially. And it's focused on an open data model, so a lot of the higher-ups in Cloudera came from ArcSight, so if you guys are familiar with ArcSight and the Ceph format, they're trying to do something similar in the Hadoop world, and they're calling it an open data model. There's an open pull request for the documentation around that right now. So, again, this is very, like, new stuff. And the

roadmap for this project is coming soon to a mailing list near you, allegedly. So, we'll see if that happens, I'm sure it will. But if you're interested in more about what the future's going to look like for this project, you can sign up for the dev mailing list, that's actually a link to sign up for it. And this is what their architecture looks like. You can tell it's very similar to before, right? So there's this, logs come in, they do things and they get stored. Logs come in, they do things and they get stored, right? It's very similar, in fact, it's very similar tools. Like you see Kafka here, this is Kafka.

This is Spark Streaming, a competitor Spark Streaming, is Storm. Very similar technologies in use here. They're a little bit more specific on what data they're looking for. for now, and I believe they have plans to expand this, but they're looking at flow information, DNS information, and proxy information. And it essentially gets stored in Hive, HGFS, HGFS with Hive. And so I wanna put a quick warning out there before everyone goes home, sure not all of you, but maybe some of you will go home and install Hadoop, and oh, this is great, that was a total pain in the neck, but it scales really well, I guess. Maybe we should do this at work. Quick warning, there

is a great talk. I didn't put it on the slide. I have a big QR code at the end that'll point you to some good references. But there's a really good talk called Hadoop Safari, I think, where they were hunting for vulnerabilities in Hadoop stacks. And they show you a lot of the issues. A lot of the issues with Hadoop as they sit right now is that they have insecure defaults but they have secure available settings. And if you set up a default cluster, if you have network access to it, you have remote code execution, period. So just wanna let that sink in. If you have network access to a default installed Hadoop cluster, you have remote code execution on

it. So don't give people network access and don't use the defaults. So big, big, big warning there. There's also a lot of projects trying to address this problem. I have them at the bottom because there's so many of them, it's kind of ridiculous. But it's also kind of a good thing, right? It definitely has some emphasis. People are looking at this. All of these, however, are bolt-ons, except for Project Rhino, which is a project coming out of Intel where they're trying to implement security into core Hadoop. So it's just a project that they're internally taking on to push things upstream into the Hadoop products. themselves that currently exist to make them more secure. That's really

good and I commend them for that work. It's really, really great stuff. Some of these projects are more mature than others and your mileage may vary, but it's generally a good thing. And like I said, it's mostly a trusted network, data in motion, mostly unencrypted, although you can encrypt it. Remote code execution, definitely possible, but you can secure it essentially the way that their model works for Hadoop for security is that they'll use file system ACLs and in a lot of environments they make it in a way where it's very convenient for an administrator to set 777 on a folder because it's breaking their stuff and all of a sudden it works when you do that. But now you're giving everybody access to everything and also by default if

you, you can set permissions for a certain user but in the web HDFS interfaces You can just say what user you are, and you don't actually have to authenticate. You just say, oh, that file is owned by user ABC. I am now ABC. Can I have it? Yes, thank you. So that's a little bit concerning. Definitely keep an eye out for that. And then there is some hope. So Clutter has some good proprietary bullet-ons. They are a little bit mixed where they support open source, but they also do proprietary things. Some of their proprietary bullet-ons are actually pretty decent from what I've heard and what I've looked at and what they've told me. And then there are projects like Apache Accumulo, which like a lot of other good

stuff is unfortunately from the NSA, but it does a lot of really good cell level, row level, column level security within the database stored on HDFS. So you can dole out permissions in different ways. So two more quick tools, one's called Rita. So if any of you guys know John Strand, Paul Asadorian, I think it's Sec504 that he wrote in Taught, he's also wrote a couple other ones. They, his company, it's a mixture of Black Hills information security and offensive countermeasures. I don't know exactly where the line is there, but they kind of wrote this thing called Rita. And it's also called AI Hunter. I think AI Hunter is the officially supported, purchasable, more commercial version of Rita. Rita is open source. And yeah, it's probably used

internally as well as a backend for commercial. MongoDB instead of HDFS, so it's a lot easier to keep going, a lot less overhead in getting things started up. It can have its own implementation issues. I'm sure everybody in the room can raise a hand. How many people have heard of the problems with MongoDB recently? Ransomware, or not ransomware, holding MongoDB databases for ransom. There was a report two years ago where Shodan said, hey, there's a lot of MongoDB databases that are listening on all interfaces, which means in some cases they're accessible from the internet. So, an interesting note, while I was going through the install of this, I found that the way that the RITA team was suggesting that MongoDB be started

forces it to bind to all interfaces and has that same issue, even though that problem has been solved for years, if you just start it like a service, like most sane people would do, or just start it normally with MongoDB, the way that they that they did it is that they say do MongoD dash dash fork dash dash logter, put your logs here, and then never specify dash F configuration file, which in the configuration file is where it says what interface to bind to. So it just says, oh, well, you didn't tell me, so I'm going to bind to all of them. So I thought that was scary, so I opened a pull request and I asked them to fix it. It was only about

a day ago. So if you run home, it might not be merged yet, so don't fall. Beware, is what I'm trying to say. of that. However, that is a somewhat minor and easy fix. It's just a concerning one. And all feedback is via the terminal. There's no GUI for this, although I think you can buy one through offensive countermeasures. And I gave this a shot. I gave it a shot and I haven't got it working yet. It's mostly because I don't have enough resources on the server side. So I have an 8 CPU 64 gig RAM VM that I'm running this in and it's been processing 300 gigs of data that I gave it because I knew that 300 gigs of data, had some attacker traffic in it, and

may also have interesting things that I wanted to see if it would pull out. And it hasn't done anything yet, it just kept yelling at me that like, I can't parse this, I can't parse that, you need to change this, you need to remove that field, you need to, so I have worked through all of those things, but it's probably still like running, I don't know, I don't even know.

Yeah, it's still parsing. So, these are the error messages that I was getting, unmatched field and log and things like that. We can talk more about. Oh, you can't see that. I'm talking to myself over here. I'm like, oh, you can't see that?

Yeah, so this is it. I'm essentially running an import of all that data. It's yelling at me, this unmatched field and log. If you are interested in this and you play with it, that just means that you may have a custom added field that it doesn't know how to just ignore. It actually fails to insert the entire log because that exists. So you have to clean it up, which on 300 gigs of data, on the box that I had, took like 24 hours to actually do the cleanup. That being said, the jury's still out. I don't know if this is a good tour or not, but I wanted to recommend it to people, because it's somewhat easy to get going. And then the

last one is an honorable mention. This is Bloodhound. How many people have heard of Bloodhound? This is getting a little bit more popular. One-fifth, maybe? So this is a graph. Graph Theory and Power View, Power Path, which is PowerShell scripts that allow some enumeration of environments and use this thing called driven to local admin where they can say, if any of you have ever had a pen test, you'll know that when an attacker gets in, they get one box, probably an endpoint, and then they're going to try to pivot from that to another one and pivot to another one and pivot to another one and escalate their privileges by stealing the credentials from one box to the next

to the next. until they get a domain admin or an enterprise admin, something like that. It's a very typical way that a pentest goes. And this helps that immensely. So it depends heavily on InvokeMimikatz, which I watched an interesting presentation also by John Strand recently. If you think that InvokeMimikatz won't work in your environment, you should click that link, and it's not anything bad, I swear. It's a PowerPoint presentation with embedded records. No, it's a PowerPoint presentation that goes through some very simple things that his team did, essentially said commands, renaming different parts of it and adding specific comments to make it a fade antivirus. And it was a very, very simplistic, just more of an example than anything, way of showing how you could get

invokeMimiKatz standard gets picked up by a large percentage of AV on virus total to zero out of 64. And it actually, so one thing I want to mention is actually it does do active scanning. It does something. There are two options, you can be quiet and efficient. I thought this was actually really cool, Invoke Stealth User Hunter, I have the details written down, but for memory, it grabs, so whenever you're on an AD join machine, it'll grab all of the servers that it natively knows about. Things like, here's where my profile is located, it's on this server, and here are my domain controllers that it can pull down easily, and very simple things like that, and it just runs against those. Let me see.

script paths, profile paths, home directories, things like that. And it just scans those instead of being noisy and doing a full enumeration of the environment, but obviously more comprehensive. And that is what it looks like. So whenever you're done, it puts it into a graph database. So this is your Active Directory environment. And it essentially puts these relationships together that if you were to go down this path, maybe here is domain admin. And so, okay, now I know I have to own this box, and then this user, I'll get this user, then I'll own this box, and I'll get this user, and I'll domain admin. Essentially, it gives you that path. Instead of having this giant web of like, I know there's a thousand systems, and I'm just gonna

own a few of them, and hopefully they don't find me, because I'm being really noisy right now. This is a way to do that a little bit more with a scalpel than a sledgehammer, and just find exactly here's the path that I need to go. Obviously, like I said, there's some active scanning involved, but you can make it a little bit stealthy. So in summary,

Fluid data analysis programs within InfoSec are very important in my opinion. Open source is a valuable way to get you started for free.

However, hardware does actually cost money. I want to stipulate that. But open source is free and it will get you part of the way. And really part of this is showing what open source will get you. And then what you're actually paying for when you buy an enterprise tool that you don't get with open source. So you're paying for the difference. You're not paying for nothing with the enterprise tool, because you could do some of this with man hours and open source tools. So I want to kind of make that a little bit more obvious. And be aware of insecure defaults in Hadoop. I want to make sure I emphasize that. And watch that talk. Seriously, that's Alex Laffler again. That was an awesome talk. I loved it.

So here's a couple references, and then I'll take some questions. So these are just links to all kinds of things. And that QR code goes to a GitHub repo that I put together, a readme file. And it's a huge list if you want to learn more about anything that I talked about and way, way, way more. It's in the format of like an awesome list. If you've ever heard of awesome awesomeness, they have these giant GitHub readmes of interesting information on a topic. Like if you want to learn programming, there's one for C, there's one for C++. Scala, Java, every single one, and you just scroll and scroll and scroll and it's a curated list of high quality information that's been turned into essentially like a

table of contents and categorized information. So it's very cool. And so I made one kind of following that theme, but it's focused on information security data analysis, which one doesn't really exist for that. on really becoming one that was just relevant for this talk. I wanted to give you guys information if you were interested. Any questions and then contact information at the bottom if you want to get a hold of me. So any questions? Anyone awake? All right, a couple people are awake. What's up? So you mentioned that this is a contacts enhancement rather than a correlation type goal. Where do you see this project going? Is it going to replace SIEMS? Is it more like a carbon black for SIEMS

type of thing? Yeah, so the question is, I mentioned earlier that this is doing enrichment instead of correlation. Where do I see this going? Is it going to replace SIEMS? I'm not going to say that it'll replace SIEMS because one of the things that it does not explicitly do well, that SIEMS do well, is parsing data that's sent to it. If you send to something like an ArcSight or Splunk, it knows how to parse tons of data and you can kind of send the data in a more uniform way. And for here, it's a little bit more specific. If I want to send my Bro logs, I have to send it to my Bro Kafka topic. If I want to send Suricata logs, I send it

to my Suricata Kafka topic. I can't just configure one way, like there's not just one big funnel for all the information. However, I think architecturally, this has some definite benefits over some same products. and that they could definitely be used, depending on the environment, at the same time. I can tell you in my environment, we are going to be using instead of a seam because seams were cost prohibitive for me, but I definitely would not recommend that in every scenario. It doesn't straight up replace it, but it complements it very well. Yep. So you're not doing any correlation with it? I mean, you are doing some correlation. Yeah, so the question is calling me out and trying to say that I am

doing correlation with Metron. And you kind of are, right? So you're enriching it with the information that you currently have available. So a differentiation, I would say, is that correlating can be seen as a pointer or a link between two sets of data. And enriching puts all of that context in one set of data. So if you think about it over a long period of time, if I look at data from two years ago, It's a little bit harder to do correlation from two years ago than it is to do enrichment because if I just look at the one log that I'm looking for, I have all the context there anymore. I don't need a

pointer to it. So you're going to have some data duplication there for sure, but I think there's a definite difference. Also, you're doing a little more intelligent correlation because you're looking for trends and you're doing a little bit of machine learning. Yeah, so I would not call that correlation. I would call that data analysis.

that's something that you can go back as you add in the new data source, enrich it. Right. So that's a really good question. So the question is, is enrichment only at ingest? Or can you add it post? So there's a couple discussions that are ongoing about that in the Apache Metron community. One is, can I enrich in enrichment? So I have data coming in, and it streams in, and it enriches to be a certain country that I want to keep an eye on. The way the topology is currently set up, you have two chances. So you enrich it with regular enrichment, regular I guess, and then you can enrich it with threat intel information and then it's gone and there's no more enrichment really possible unless you were to

do it bulk at rest. There's been conversations about different ways to approach this. Do we make that topology bigger? So we can say you can enrich here, then you can add in things that you want. I want to enrich here which assumes on that, and I want to enrich here which assumes on that. and then I do Threat and Tell, or maybe I do Threat and Tell here, and making that configurable, that could get complicated though, and be hard to support. Another thought is, okay, I can go through this enrichment topology and I'm done. Can't I just rerun a set of the information that I just enriched through it one more time, and it'll get caught by different things now, and I can essentially just set a TTL,

like you would with network traffic, so you don't get an infinite loop, but you can just keep sending subsets through, so it's less and less computationally expensive, but you're getting everything that you want. That's another thought, and there are a couple other thoughts with that. That isn't a solved problem right now. Like I said, you have two chances. You're gonna enrich it with the regular enrichment, you're gonna enrich it with Thread in Toe, and it's gone. Then there's the thought of, okay, well now you have it on disk. Can I enrich it there? And the thought is yes, but in actuality, it hasn't been proven out yet, and it isn't an available feature. However, that is definitely something that I'm pushing, because

if I find out some interesting bit of information, or I just, as things progress, I write more helpful enrichers, don't I want to have that available for all of my historical data? Because I want as much context as possible for them. And so you could definitely do that with a MapReduce job or something like that. It's possible, like I said. It's not necessarily a current feature in the environment for Apache Metron or Spot, as far as I'm aware. But that's a really important and good question. Anything else?

So, I have a couple of requests of you. One is to please vote. This is a different QR code than before. This will send you to the meetup page, and I would like you to vote for a couple of things in particular. It really only gives you two choices, but feel free to leave comments and chastise me for talking too much or being a bad presenter or whatever. But I would like you to vote on this location. This is the first time we've ever been in this room. If it's hot, if the audio is bad, didn't like a projector or anything, it's helpful for me so I know where to pick if the location's bad.

The reason why I moved to this location is because I did a poll and I asked what location is most convenient for people in the group and North Shore was the number one so now we're on North Shore. So that's how this group runs. The reason why we are on the second Thursday of the month is because I did a poll that said which week of the month, first, second, third, fourth is best. You guys picked second. Which day of the week, Monday, Tuesday, Wednesday, Thursday, Friday? He said Thursday, so here we are. The second Thursday of the month, North Shore. So this is a group that I try to let you guys have input

into. So please vote and let me know what you thought of that. Also, please let me know what you thought of this topic, this presentation. There are... I'm obviously going to be doing a follow-on to this with a hands-on lab next month, but it's also a potential that I may do some training on this topic that's also hands-on, but maybe more of like a half day or a whole day. So if you think this is valuable or helpful to you, that's helpful to me. If not, feel free to tell me that this is a dumb idea and I should not do it because I don't want to waste my time. Yeah. Hey, Hach, I've been

real curious to see what the correlations look like, what the output of the data

Yeah, we can definitely, we're gonna exactly see that next month. So I'm sorry, the question is, or maybe more of a comment, I'd like to see what it looks like. Give me something, right? And so that's really, with this group, that's why we do the lab, so we can actually see what this looks like. There are examples on the website, I can show you what it looks like. Really, you're just gonna see a giant JSON blob with information on it, but I can help parse through it and tell you what's important and why it's helpful and how you can use it. that's gonna be the focus of next month. And I'm actually gonna try and

get some of the core developers from the Metron project to actually show up next month. They're just over in Ohio, a lot of them. Some are otherwhere, but other places, but yeah. Yeah, good stuff. Any things that I wanna say? Oh, and a call for collaboration. So if you're doing stuff like this or wanna do stuff like this, let's work together, right? I love learning from other people. I hope I can help other people too. This is definitely an interest of mine. I've been working on this for years. If you want to crash course, if you just, you know, don't feel bad if you ask me a question and you think it's a dumb question, you know, it's not a dumb question. It took me multiple years

to be able to go through the alphabet soup that is the Hadoop ecosystem to know how they interconnect and to find out more details. This talk is a very brief summarization of what I've done over the last couple years, and I tried to put some of it out in the readme to help, but also, Like I said, I'd love a bi-directional collaboration on this, especially in the Pittsburgh area for security data analysis. All right, that's it. Thank you very much.

And I have to comment. I'm kind of sad that none of you guys made me use any of my other slides or quotes, especially this one. Oh, crap, I lost it. There's one coming out.

that there would be no math. I wanted someone to ask a math question. Like seriously, I was really hoping that that would happen. You know, stupid, really corny jokes about, you know, using data. This is the actual set commands that you can use to change your meme cats. This is what graph theory, that's what Hadoop looks like. You know, I'm sad. Good question, yeah, what's up? All right, so you mentioned you have a new .file

Yeah, so the comment slash question is, you mentioned earlier that if you have bad data, it'll skip the entire log for Rita or Aon Hunter. Can't you just pull it out? And yes, you can. Whenever you're pulling out a column for tab-delimited data across 300 gigabytes, it just takes time. So it just took me about two days to process, finding out which ones It was because it wasn't always obvious. But yeah, I shouldn't say that. It wasn't that bad, actually. But once I pulled them out and reprocessed it, it seemed to go pretty well. I did run into a couple of disk IO failures, but it was very limited. I think I got like seven of them. I don't even know what the barrier condition for that is.

I don't know if I lost the file or if I lost the log line or what exactly happened there. But yes, you definitely can just clean your data. And that was part of a little bit of my emphasis earlier. My clicker doesn't work anymore. With this slide right here. With this process and that kind of cleaning tier. That's actually really, really important. It saves you a ton of time in the long term. Right now I'm spending two days doing what this does in real time. But yes, very valid point. Someone else had a question? Are there any add-ons that might you basically push those bad records, like a holding type, or processing a few of your

back-to-date on, or at least allow the entire screen to do it for? I'm sorry, can you say that again? A way add-ons to...

Yeah, yeah, yeah. So with Rita, I don't believe so because each file, it just errors on the file because there's a set number of columns and if it doesn't like the column, it doesn't like the whole file. So it actually makes sense to error out early for that. With things like Apache Metron, if you fail on what they call a tuber or a tuple, which is just a log line, a JSON log line, if you fail on that, it actually drops it into an error queue. So you get an error message with the contents of the tuper that failed and the fact that it failed. And it makes it very obvious in the Storm UIs that something failed and that should happen very rarely. But

yeah, it definitely gets dropped down. And then if you wanted to customize it, you could do whatever you want, right? Once it fails, it goes in the error queue. You could try to reprocess it, but maybe you have a loop problem. Maybe you just have it send an email and say, like, hey, this was bad. Figure out why this failed personally. But yeah, that definitely works that way.

I have a question. How far pi do you know? 32 digits. So the question was how far pi do you know? Because I saw him write pi on a piece of paper at the ISSA meeting. I know pi to 32 digits because sometime I was memorizing it because I'm weird. And I found out that if you learn it to like either 20 or 32 digits, I forget which, you can calculate the circumference of a circle the size of the universe within one inch. And I'm like, that's pretty accurate.

Hey John, that stuff you're processing on the other screen, where's that actually processing at? On your laptop or somewhere else? Oh, it's on, I have a ESXi box that runs under my desk at work because I'm that guy. Actually, that's that guy for my whole university. Pretty much everybody does that unfortunately. But it's kind of like my lab environment, so I have a free ESXi server license that runs on it which allows me to allocate up to eight vCPUs. The system actually has 24, but I can only give it eight because of the licensing. and then it has 128 gigs of RAM, I just gave it 64 because I could. And so that's where I'm running it. So it's not

a laptop. It's a Tower T430, I think, Dell Powerade. Any other questions? And feel free to come up and talk to me afterwards or send me an email at the bottom there, johnziola.gmail.com. No H, J-O-N. All right, thanks everyone.